A New Framework for Network Disruption

Traditional network disruption approaches focus on disconnecting or lengthening paths in the network. We present a new framework for network disruption that attempts to reroute flow through critical vertices via vertex deletion, under the assumption that this will render those vertices vulnerable to future attacks. We define the load on a critical vertex to be the number of paths in the network that must flow through the vertex. We present graph-theoretic and computational techniques to maximize this load, first by removing a single vertex from the network, and second by removing a subset of vertices.

💡 Research Summary

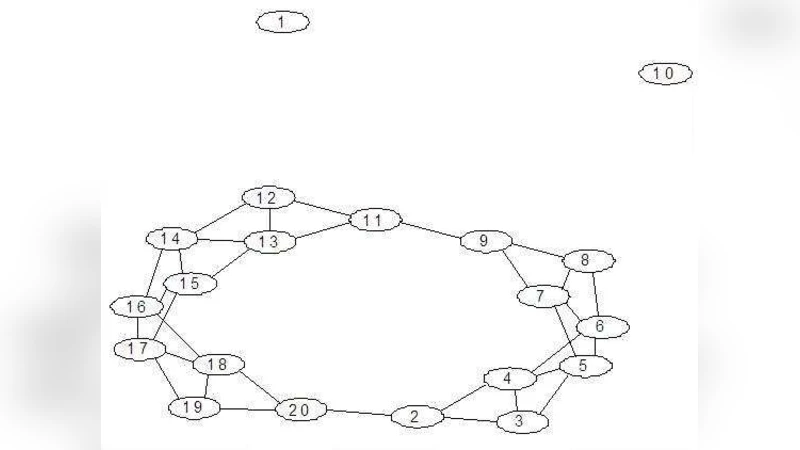

The paper introduces a new perspective on network disruption. Rather than disconnecting nodes or lengthening shortest paths, as traditional strategies do, it deliberately increases the traffic through a designated “critical” vertex in order to make that vertex more vulnerable to subsequent attacks. The authors formalize this idea by defining a new centrality measure called “load.” For a given graph G and a critical vertex k, the load Lk(G) is the difference between the total flow capacity of G with respect to k (the maximum number of edge-disjoint s-t paths that do not start or end at k) and the flow capacity of the graph after k is removed, G\{k}. In effect, Lk(G) counts how many s-t paths are forced to pass through k; a larger value indicates that k is more essential for network flow.
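One way to write the load definition formally is sketched below. The symbols C_k (flow capacity with respect to k) and λ_G(s,t) (the maximum number of edge-disjoint s-t paths in G) are notation introduced here for illustration, and summing over unordered pairs of vertices other than k is an assumed reading of "total flow capacity", not a detail confirmed by the summary.

```latex
C_k(G) \;=\; \sum_{\{s,t\} \subseteq V(G) \setminus \{k\}} \lambda_G(s,t),
\qquad
L_k(G) \;=\; C_k(G) \;-\; C_k\bigl(G \setminus \{k\}\bigr)
```

Under this reading, a three-vertex path s, k, t gives C_k(G) = 1 and C_k(G\{k}) = 0, so L_k(G) = 1: the single s-t path is forced through k.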

To quantify the impact of removing vertices, the authors define the “load effect” Ek(G,S) = Lk(G\S) – Lk(G), where S is a set of vertices deleted from G. A positive load effect means that the removal of S reroutes additional flow through the critical vertex k, thereby increasing its load and, by the authors’ hypothesis, its susceptibility to future disruption. Two optimization problems are formulated: (1) Single‑LOMAX, which seeks a single vertex i whose removal maximizes Ek(G,{i}); and (2) LOMAX, which seeks a subset S⊆V that maximizes Lk(G\S). The load function is inherently non‑linear, non‑monotonic, and non‑convex, precluding standard integer linear programming formulations. Consequently, the paper explores combinatorial and heuristic approaches.
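The load effect and the exhaustive Single-LOMAX search can be sketched in plain Python as follows. This is an illustrative sketch, not the authors' implementation: it assumes an undirected, unweighted graph stored as a dictionary of neighbor sets, and it assumes that "flow capacity" means the sum, over unordered pairs of vertices other than k, of the maximum number of edge-disjoint paths between them. All function names are hypothetical.

```python
from collections import deque
from itertools import combinations


def max_edge_disjoint_paths(adj, s, t):
    """Maximum number of edge-disjoint s-t paths, via unit-capacity max flow."""
    # Each undirected edge becomes two arcs of capacity 1.
    cap = {(u, v): 1 for u in adj for v in adj[u]}
    flow = 0
    while True:
        # Breadth-first search for an augmenting path in the residual graph.
        parent = {s: None}
        queue = deque([s])
        while queue and t not in parent:
            u = queue.popleft()
            for v in adj[u]:
                if v not in parent and cap[(u, v)] > 0:
                    parent[v] = u
                    queue.append(v)
        if t not in parent:
            return flow
        v = t
        while parent[v] is not None:  # push one unit of flow along the path
            u = parent[v]
            cap[(u, v)] -= 1
            cap[(v, u)] += 1
            v = u
        flow += 1


def delete(adj, removed):
    """Copy of the graph with the vertices in `removed` (and their edges) deleted."""
    removed = set(removed)
    return {u: nbrs - removed for u, nbrs in adj.items() if u not in removed}


def flow_capacity(adj, k):
    """ASSUMPTION: sum of max edge-disjoint s-t paths over unordered pairs s, t != k."""
    others = [v for v in adj if v != k]
    return sum(max_edge_disjoint_paths(adj, s, t) for s, t in combinations(others, 2))


def load(adj, k):
    """L_k(G): flow capacity of G minus flow capacity of G with k removed."""
    return flow_capacity(adj, k) - flow_capacity(delete(adj, [k]), k)


def load_effect(adj, k, removed):
    """E_k(G, S) = L_k(G \\ S) - L_k(G)."""
    return load(delete(adj, removed), k) - load(adj, k)


def single_lomax(adj, k):
    """Exhaustive search over single deletions; returns (best vertex, load effect)."""
    return max(((i, load_effect(adj, k, [i])) for i in adj if i != k),
               key=lambda pair: pair[1])


# Toy graph: two leaf clusters joined by parallel routes through k and through i.
edges = [("s1", "a"), ("s2", "a"), ("a", "k"), ("a", "i"),
         ("k", "b"), ("i", "b"), ("b", "t1"), ("b", "t2")]
adj = {}
for u, v in edges:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)

print(load(adj, "k"))          # 3
print(single_lomax(adj, "k"))  # ('i', 6)
```

In this toy graph, deleting i removes the only alternative route between the two clusters, so every cross-cluster path must pass through k and the load rises from 3 to 9. Deleting a or b instead strands the leaves and gives a negative load effect.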

The theoretical contribution includes several structural results. The authors prove that if k is a cut-vertex or a leaf of a tree, no vertex removal can produce a positive load effect. Conversely, when k has high betweenness, closeness, or degree centrality, there often exist vertices whose removal forces additional flow through k. They also establish conditions under which the removal of a vertex is guaranteed not to yield a positive load effect, providing insight into the problem’s complexity.

Empirical evaluation is conducted on four canonical synthetic network models: Erdős‑Rényi random graphs, Watts‑Strogatz small‑world graphs, Barabási‑Albert scale‑free graphs, and Holme‑Kim graphs that incorporate clustering. For each model, 276 instances of 100‑node graphs are generated. The critical vertex k in each instance is chosen as the node with the highest average rank across betweenness, closeness, and degree centralities. Single‑LOMAX is solved by exhaustive search. Results show that virtually every graph contains at least one vertex whose removal yields a positive load effect, especially when k is highly central. However, the average load effect across all vertices is negative, reflecting that random deletions typically disperse flow rather than concentrate it.
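The average-rank rule for choosing the critical vertex can be illustrated with a short sketch. It assumes the three centrality scores have already been computed by some other means, that rank 1 denotes the most central vertex, and that ties are broken by input order; the summary does not state the tie rule. The function name and the example scores are hypothetical.

```python
def pick_critical_vertex(scores_by_measure):
    """Return the vertex with the best (lowest) average rank across all measures.

    scores_by_measure maps a measure name to {vertex: score}, where a higher
    score means more central. ASSUMPTION: rank 1 is the most central vertex and
    ties are broken by input order.
    """
    rank_totals = {}
    for scores in scores_by_measure.values():
        ordered = sorted(scores, key=scores.get, reverse=True)
        for rank, vertex in enumerate(ordered, start=1):
            rank_totals[vertex] = rank_totals.get(vertex, 0) + rank
    n_measures = len(scores_by_measure)
    return min(rank_totals, key=lambda v: rank_totals[v] / n_measures)


# Hypothetical scores for a three-vertex example (not data from the paper).
scores = {
    "betweenness": {"a": 0.5, "b": 0.3, "c": 0.0},
    "closeness":   {"a": 0.6, "b": 0.7, "c": 0.4},
    "degree":      {"a": 3,   "b": 2,   "c": 1},
}
critical = pick_critical_vertex(scores)
print(critical)  # a
```

Here vertex a ranks first on betweenness and degree and second on closeness, so its average rank (4/3) beats that of b (5/3) and c (3).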

Because the number of candidate deletion sets grows exponentially with the number of vertices, exhaustive search is impractical for LOMAX, so the authors design a genetic algorithm (GA) to approximate optimal solutions for larger deletions. In the GA, chromosomes encode candidate deletion sets as binary strings, and fitness is computed directly as Lk(G\S). Crossover and mutation operators explore the search space. Experiments varying the population size, number of generations, and mutation rate show that, when 5-10% of the vertices are deleted, the GA can achieve load increases up to 1.8 times those obtained by the best single-vertex deletion. Convergence analysis indicates that a moderate population size (≈50) and 100 generations provide a good trade-off between solution quality and runtime.
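A minimal sketch of a genetic algorithm over binary-encoded deletion sets is shown below. The summary does not specify the operators, so tournament selection, one-point crossover, bit-flip mutation, elitism, the 0.02 mutation rate, and the sparse initialization are all assumptions made for illustration. The fitness function is passed in as a parameter; in the paper's setting it would be Lk(G\S), while the toy fitness here is only a cheap stand-in to keep the example self-contained.

```python
import random


def genetic_search(candidates, fitness, pop_size=50, generations=100,
                   mutation_rate=0.02, seed=0):
    """Search for a deletion set that maximizes `fitness`.

    Chromosomes are lists of 0/1 bits, one bit per candidate vertex.
    Requires at least 2 candidates and pop_size >= 3.
    """
    rng = random.Random(seed)
    n = len(candidates)

    def decode(bits):
        return frozenset(v for v, bit in zip(candidates, bits) if bit)

    def score(bits):
        return fitness(decode(bits))

    # Sparse random start: each vertex is marked for deletion with probability 0.1.
    population = [[int(rng.random() < 0.1) for _ in range(n)]
                  for _ in range(pop_size)]
    best = max(population, key=score)
    for _ in range(generations):
        next_gen = [best[:]]  # elitism: carry the best chromosome forward
        while len(next_gen) < pop_size:
            # Tournament selection: best of three random chromosomes, twice.
            p1 = max(rng.sample(population, 3), key=score)
            p2 = max(rng.sample(population, 3), key=score)
            cut = rng.randrange(1, n)  # one-point crossover
            child = p1[:cut] + p2[cut:]
            # Bit-flip mutation: each bit flips with probability mutation_rate.
            child = [bit ^ (rng.random() < mutation_rate) for bit in child]
            next_gen.append(child)
        population = next_gen
        best = max(population, key=score)
    return decode(best), score(best)


# Toy stand-in fitness (NOT the load function): reward a hidden target set.
target = {2, 5, 7}


def toy_fitness(deleted):
    return len(deleted & target) - len(deleted - target)


best_set, best_score = genetic_search(list(range(10)), toy_fitness)
print(sorted(best_set), best_score)
```

Because evaluating the load requires many max-flow computations, a practical version would likely cache fitness values per chromosome rather than recompute them in every selection step as this sketch does.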

The discussion section connects the theoretical framework to practical scenarios such as disrupting covert terrorist cells, illegal weapons trafficking networks, and human-trafficking rings. In such “covert” networks, traditional interdiction that lengthens paths may be less effective because participants deliberately use longer, less observable routes. By contrast, increasing the load on a key leader or hub could cause overload, detection, or cascade failures, offering a stealthier and potentially more decisive disruption. The authors also suggest that infrastructure designers could use load analysis to avoid creating single points of failure in power grids or communication systems.

In conclusion, the paper contributes a new “load‑maximization” paradigm for network disruption, provides rigorous definitions, theoretical bounds, and empirical evidence across multiple network topologies, and offers a practical heuristic (genetic algorithm) for tackling the NP‑hard LOMAX problem. Future work is outlined to extend the model to dynamic flow settings, multiple critical vertices, and real‑world data sets, thereby bridging the gap between abstract graph theory and actionable security or resilience strategies.
