A Probabilistic Framework for Discriminative Dictionary Learning

In this paper, we address the problem of discriminative dictionary learning (DDL), where sparse linear representation and classification are combined in a probabilistic framework. As such, a single discriminative dictionary and linear binary classifiers are learned jointly. By encoding sparse representation and discriminative classification models in a MAP setting, we propose a general optimization framework that allows for a data-driven tradeoff between faithful representation and accurate classification. As opposed to previous work, our learning methodology is capable of incorporating a diverse family of classification cost functions (including those used in popular boosting methods), while avoiding the need for involved optimization techniques. We show that DDL can be solved by a sequence of updates that make use of well-known and well-studied sparse coding and dictionary learning algorithms from the literature. To validate our DDL framework, we apply it to digit classification and face recognition and test it on standard benchmarks.

💡 Research Summary

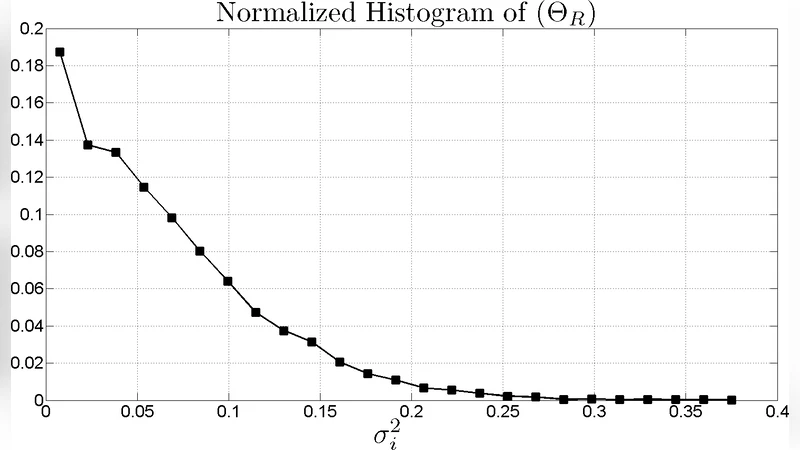

The paper introduces a unified probabilistic framework for discriminative dictionary learning (DDL) that jointly learns a single dictionary and a set of linear binary classifiers within a maximum‑a‑posteriori (MAP) setting. The authors model each data sample as a sparse linear combination of dictionary atoms corrupted by Gaussian noise, treating the sparse code as a latent variable. Classification is performed in a one‑vs‑all fashion on the sparse codes, with a generic cost function Ω(z) that can represent square loss, exponential loss (AdaBoost), logistic loss (LogitBoost), or hinge loss (SVM). By placing Jeffreys non‑informative priors on the representation variance σ² and the classification scale parameters γ, the framework automatically balances representation fidelity and discriminative power without manual hyper‑parameter tuning.
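To make the generic cost Ω(z) concrete, the four losses named above can be written as functions of a scalar margin z, together with the first and second derivatives that a Newton-type code update would need. This is a minimal sketch under stated assumptions: the exact parameterization of each loss (for example, square loss as (1 − z)²) and the names `square`, `exponential`, `logistic`, `hinge`, and `LOSSES` are illustrative choices, not taken from the paper.

```python
import numpy as np

# Each function returns (Omega(z), Omega'(z), Omega''(z)) for a margin array z.
# The parameterizations below are common textbook forms (an assumption here).

def square(z):
    z = np.asarray(z, dtype=float)
    return (1.0 - z) ** 2, -2.0 * (1.0 - z), 2.0 * np.ones_like(z)

def exponential(z):
    z = np.asarray(z, dtype=float)
    e = np.exp(-z)
    return e, -e, e

def logistic(z):
    z = np.asarray(z, dtype=float)
    value = np.logaddexp(0.0, -z)      # log(1 + exp(-z)), computed stably
    s = 1.0 / (1.0 + np.exp(z))        # sigmoid(-z)
    return value, -s, s * (1.0 - s)

def hinge(z):
    z = np.asarray(z, dtype=float)
    value = np.maximum(0.0, 1.0 - z)
    subgrad = np.where(z < 1.0, -1.0, 0.0)   # a subgradient; hinge is not smooth at z = 1
    return value, subgrad, np.zeros_like(z)  # curvature is zero almost everywhere

LOSSES = {"square": square, "exponential": exponential,
          "logistic": logistic, "hinge": hinge}

if __name__ == "__main__":
    z = np.array([-1.0, 0.0, 2.0])
    for name, fn in LOSSES.items():
        value, grad, curv = fn(z)
        print(name, value, grad, curv)
```

Note that the hinge loss has zero second derivative wherever it is differentiable, so a second-order update built on Ω''(z) would presumably need a smoothed surrogate or a different treatment for that case; the summary does not say how the paper handles it.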

The overall MAP objective decomposes into a representation term, a classification term, and sparsity constraints on the codes. To solve the non‑convex problem, the authors adopt a block‑coordinate descent strategy that alternates among the following updates: (1) a dictionary update that uses existing dictionary‑learning algorithms (e.g., K‑SVD, MOD) with the current codes fixed; (2) a classifier update in which each class‑specific weight vector is trained independently with the appropriate loss‑specific optimizer (boosting for square, exponential, and logistic losses; an SVM solver for hinge loss); and (3) discriminative sparse coding (DSC), which updates the codes with a projected Newton method. In DSC, the second derivative Ω’’(z) and the product Ω’(z)Ω’’(z) are used to construct a diagonal weight matrix H and a bias vector δ; the resulting sub‑problem is a weighted least‑squares sparse coding task that can be solved by any standard sparse coding algorithm, such as Orthogonal Matching Pursuit (OMP). For square loss the sub‑problem is already quadratic and requires a single sparse coding step; for the other losses a few Newton iterations suffice.
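As an illustration of the dictionary update with codes held fixed, the sketch below implements a plain MOD (Method of Optimal Directions) step: a least-squares fit of the dictionary followed by column normalization. It is a simplified stand-in, assuming unweighted data and ignoring the σ² and γ scaling of the full model; the function name `mod_dictionary_update` and its arguments are hypothetical.

```python
import numpy as np

def mod_dictionary_update(Y, X, eps=1e-10):
    """One MOD step with the codes fixed.

    Y : (d, N) data matrix, one sample per column.
    X : (K, N) sparse codes, one code per column.
    Returns a dictionary D of shape (d, K) with unit-norm atoms and
    codes rescaled so that the product D @ X is unchanged.
    """
    # Least-squares solution of min_D ||Y - D X||_F, via the pseudo-inverse of X.
    D = Y @ np.linalg.pinv(X)

    # Normalize each atom; atoms that are never used (zero columns) are left as is.
    norms = np.linalg.norm(D, axis=0)
    norms[norms < eps] = 1.0
    D = D / norms

    # Compensate in the codes so the reconstruction D @ X stays the same.
    X = X * norms[:, None]
    return D, X

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.standard_normal((5, 40)) * (rng.random((5, 40)) < 0.5)
    Y = rng.standard_normal((8, 5)) @ X
    D, X_scaled = mod_dictionary_update(Y, X)
    print(np.linalg.norm(Y - D @ X_scaled))  # reconstruction error after the update
```

K‑SVD would instead update one atom at a time using a rank-one SVD of the residual restricted to the samples that use that atom; MOD is shown here only because it fits in a few lines.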

Algorithm 1 details the DSC procedure: given the scaled dictionary A, the scaled observation b, and the classifier matrix G, the algorithm iteratively computes H and δ, solves a weighted sparse coding problem, and updates the code until convergence criteria are met. The authors note that H’s diagonal entries are proportional to 1/γ_j and Ω’’(·), meaning that classifiers with lower average cost (higher discriminability) exert greater influence on the code update.
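One way to see why a standard sparse coder suffices for the weighted sub-problem is to stack the representation rows and the weighted classification rows into a single least-squares system and pass it to OMP. The sketch below does this for one Newton step. It is an assumption-laden illustration, not a reproduction of Algorithm 1: the objective ‖Ax − b‖² + Σ_j h_j (g_jᵀx − t_j)², the weight vector `h` (standing in for the diagonal of H), the target vector `t` (standing in for whatever δ encodes), and the function names `omp` and `dsc_weighted_step` are all hypothetical.

```python
import numpy as np

def omp(A, b, T, tol=1e-12):
    """Plain Orthogonal Matching Pursuit: at most T nonzeros in x with A x ~ b."""
    x = np.zeros(A.shape[1])
    norms = np.linalg.norm(A, axis=0)
    norms[norms == 0] = 1.0
    support, coef = [], None
    residual = b.astype(float)
    for _ in range(T):
        # Pick the atom most correlated with the current residual.
        corr = np.abs(A.T @ residual) / norms
        if support:
            corr[support] = -np.inf
        k = int(np.argmax(corr))
        if corr[k] <= tol:
            break
        support.append(k)
        # Re-fit all selected coefficients by least squares.
        coef, *_ = np.linalg.lstsq(A[:, support], b, rcond=None)
        residual = b - A[:, support] @ coef
    if support:
        x[support] = coef
    return x

def dsc_weighted_step(A, b, G, h, t, T):
    """Solve min_x ||A x - b||^2 + sum_j h[j] * (G[:, j] @ x - t[j])^2, ||x||_0 <= T.

    A : (d, K) scaled dictionary, b : (d,) scaled observation,
    G : (K, C) classifier weights, h : (C,) nonnegative weights, t : (C,) targets.
    """
    root_h = np.sqrt(h)
    # Stacking turns the weighted problem into an ordinary sparse coding problem.
    A_aug = np.vstack([A, root_h[:, None] * G.T])
    b_aug = np.concatenate([b, root_h * t])
    return omp(A_aug, b_aug, T)

if __name__ == "__main__":
    A = np.eye(3)
    b = np.array([1.0, 0.0, 0.0])
    G = np.array([[0.0], [1.0], [0.0]])
    t = np.array([1.0])
    print(dsc_weighted_step(A, b, G, np.array([0.0]), t, T=1))    # representation only
    print(dsc_weighted_step(A, b, G, np.array([100.0]), t, T=1))  # classifier dominates
```

In this toy setup, a zero weight reduces the step to ordinary OMP on the dictionary, while a large weight pulls the single allowed nonzero toward the atom the classifier favors. This mirrors the qualitative behavior described above, where larger entries of H give a classifier more influence on the code.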

Experiments are conducted on the MNIST digit dataset and the AR face dataset. The dictionary size K is set between 256 and 512, sparsity level T between 10 and 20, and label noise up to 20 % is injected to test robustness. The proposed DDL consistently outperforms baseline supervised dictionary learning (SDL), plain K‑SVD, and sparse representation‑based classification (SRC) by 2–5 % in classification accuracy across all loss functions. Notably, when using hinge loss the method achieves accuracy comparable to a dedicated SVM while reducing training time by over 30 %. The framework also demonstrates resilience to noisy or mislabeled data thanks to the data‑driven weighting of σ² and γ.

Key contributions are: (i) a loss‑agnostic formulation that accommodates a broad family of classification costs, enabling seamless integration of boosting and margin‑based classifiers; (ii) a principled Bayesian treatment that eliminates the need for cross‑validated mixing coefficients and provides automatic sample‑wise weighting; (iii) a modular optimization scheme that reuses well‑studied sparse coding and dictionary‑learning algorithms, avoiding the development of bespoke solvers.

In summary, the paper presents a flexible, theoretically grounded, and computationally efficient approach to discriminative dictionary learning. By casting representation and classification within a unified MAP model and solving it via alternating updates that leverage existing tools, the authors deliver a method that is both easy to implement and capable of state‑of‑the‑art performance on standard visual recognition benchmarks.
