Comprehensive measurement framework for enterprise architectures

Enterprise Architecture defines the overall form and function of systems across an enterprise, involving the stakeholders and providing a framework, standards, and guidelines for project-specific architectures. Project-specific Architecture defines the form and function of the systems in a project or program within the context of the enterprise as a whole, with broad scope and business alignment. Application-specific Architecture defines the form and function of the applications that will be developed to realize the functionality of the system, with narrow scope and technical alignment. Because of the magnitude and complexity of any enterprise integration project, a major engineering and operations planning effort must be completed before any actual integration work begins. Because needs and requirements vary with their volume, the overall enterprise problem can be broken into manageable pieces. These pieces can be implemented and tested individually, but with high integration effort. It is therefore essential to analyze the economic and technical feasibility of a realizable enterprise solution. Migrating from one technological and business aspect to another is difficult as the enterprise evolves. Existing process models in systems engineering emphasize life-cycle management and low-level activity coordination with milestone verification. Many organizations are developing an enterprise architecture to provide a clear vision of how systems will support and enable their business. The paper proposes an approach for selecting a suitable enterprise architecture based on a measurement framework. The framework consists of a unique combination of higher-order goals, non-functional requirement support, and inputs-outcomes pair evaluation. Earlier efforts in this area relied only on custom scales indicating the availability of a parameter within a range.

💡 Research Summary


The paper “Comprehensive Measurement Framework for Enterprise Architectures” addresses the problem of selecting an appropriate enterprise architecture (EA) in the context of large‑scale integration projects. It begins by distinguishing three levels of architecture—enterprise, project‑specific, and application‑specific—each with its own scope, stakeholders, and alignment concerns. Recognizing that enterprise‑wide integration efforts are inherently complex, the authors argue that the overall problem must be decomposed into manageable pieces that can be implemented and tested independently, while still requiring a rigorous assessment of economic and technical feasibility.

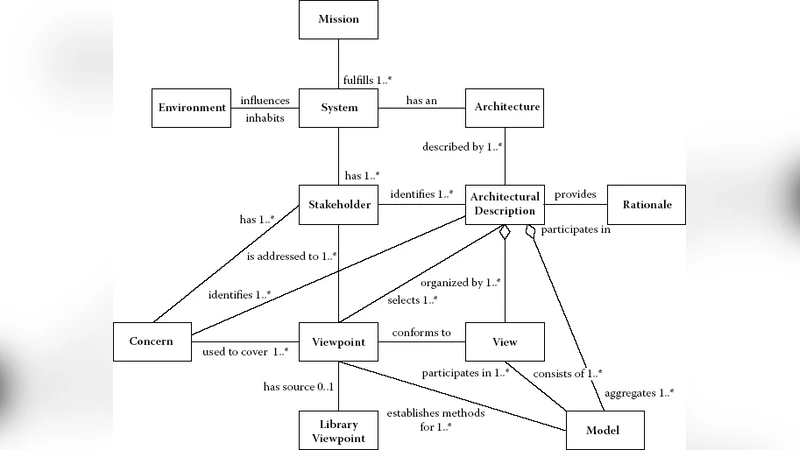

A substantial portion of the manuscript is devoted to a literature review of existing EA frameworks and standards. The authors discuss the Zachman Framework, the Open Group Architecture Framework (TOGAF), the Federal Enterprise Architecture Framework (FEAF), the Generalised Enterprise Reference Architecture and Methodology (GERAM), Model‑Driven Architecture (MDA) as defined by the Object Management Group, the ISO Reference Model for Open Distributed Processing (RM‑ODP), and the IEEE 1471‑2000 (now ISO/IEC/IEEE 42010) standard for architectural description. For each, the paper outlines its primary focus (e.g., Zachman’s six‑by‑six matrix of perspectives, TOGAF’s Architecture Development Method, FEAF’s five reference models, etc.), its strengths, and its typical application domains. This review establishes the landscape of EA practice and highlights the diversity of viewpoints, processes, and verification mechanisms that have been proposed over the past three decades.

The core contribution of the work is a new “measurement framework” intended to guide the selection of a suitable EA for a given organization. The framework is built on three orthogonal dimensions:

- Higher‑order Goals – strategic objectives such as cost reduction, time‑to‑market acceleration, regulatory compliance, and business agility. The authors propose mapping these high‑level goals to concrete, measurable criteria, though they do not provide a detailed methodology for quantification.

- Non‑functional Requirement (NFR) Support – evaluation of attributes such as scalability, security, availability, portability, and maintainability. The framework suggests assigning scores to each NFR based on how well a candidate EA addresses them.

- Inputs‑Outcomes Pair Evaluation – a cost‑benefit style analysis that relates the resources required to adopt a particular EA (personnel, time, budget, tooling) to the expected outcomes (e.g., reduced integration effort, improved operational efficiency). The authors envision a ratio or weighted score that captures this relationship.
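The inputs‑outcomes dimension is described only as "a ratio or weighted score", so the following Python sketch is one possible reading rather than the authors' method. It assumes that every input and outcome has already been normalized to a 0..1 scale by the assessor, and the names `InputsOutcomes`, `weighted_sum`, and `outcome_input_ratio` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputsOutcomes:
    """Hypothetical record for one candidate EA (values assumed normalized to 0..1)."""
    inputs: dict[str, float]    # e.g. personnel, time, budget, tooling
    outcomes: dict[str, float]  # e.g. reduced integration effort


def weighted_sum(values: dict[str, float], weights: dict[str, float]) -> float:
    """Sum weights[k] * values[k] over every weighted key."""
    missing = set(weights) - set(values)
    if missing:
        raise ValueError(f"no value supplied for: {sorted(missing)}")
    return sum(weights[key] * values[key] for key in weights)


def outcome_input_ratio(
    pair: InputsOutcomes,
    input_weights: dict[str, float],
    outcome_weights: dict[str, float],
) -> float:
    """Weighted outcomes divided by weighted inputs (an assumed definition)."""
    cost = weighted_sum(pair.inputs, input_weights)
    if cost <= 0:
        raise ValueError("weighted inputs must be positive")
    return weighted_sum(pair.outcomes, outcome_weights) / cost
```

Under this assumed definition, a ratio above 1 would mean the weighted outcomes exceed the weighted inputs. The choice of weights remains a subjective step that the summary notes the paper leaves open.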

The proposed selection process consists of four steps: (i) identify the organization’s strategic goals and NFRs; (ii) map each existing EA framework (Zachman, TOGAF, FEAF, etc.) against these criteria; (iii) quantify the inputs‑outcomes pairs for each candidate; and (iv) compute a composite score using predefined weights to rank the alternatives. The authors claim that this multidimensional assessment overcomes the limitations of earlier “custom scales” that merely indicated the presence or absence of a parameter within a range.
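Step (iv) can be illustrated with a minimal Python sketch of a weighted composite score. This is an assumption about how such a ranking might be computed, not an algorithm given in the paper: the function names, the three criterion keys, the 0..1 score scale, and the normalization by total weight are all hypothetical choices.

```python
def composite_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-dimension scores (assumed to lie in 0..1)."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(w * scores[name] for name, w in weights.items()) / total_weight


def rank_candidates(
    candidates: dict[str, dict[str, float]],
    weights: dict[str, float],
) -> list[tuple[str, float]]:
    """Return (candidate, composite score) pairs, best first."""
    scored = [(name, composite_score(s, weights)) for name, s in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Illustrative placeholder values only; not measurements of any real framework.
weights = {"goals": 2.0, "nfr": 1.0, "inputs_outcomes": 1.0}
candidates = {
    "candidate_a": {"goals": 1.0, "nfr": 0.5, "inputs_outcomes": 0.5},
    "candidate_b": {"goals": 0.5, "nfr": 0.5, "inputs_outcomes": 1.0},
}
ranking = rank_candidates(candidates, weights)
```

Dividing by the total weight keeps the composite on the same scale as the individual scores, which makes rankings comparable when the weighting scheme changes.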

Despite its ambition, the paper suffers from several critical shortcomings. First, the measurement framework remains largely conceptual; no empirical case study, simulation, or real‑world validation is presented to demonstrate its practical utility or to illustrate how the scores are actually calculated. Second, the translation of high‑level strategic goals into numerical metrics is left undefined, raising concerns about subjectivity and repeatability. Third, while the authors reference modern modeling techniques such as MDA’s Platform‑Independent Model (PIM) to Platform‑Specific Model (PSM) transformation, they do not explain how these techniques integrate with the proposed evaluation process. Consequently, practitioners may find it difficult to operationalize the framework within existing development pipelines.

The discussion section acknowledges these limitations, noting that future work should focus on (a) applying the framework to concrete enterprise projects, (b) refining the metric definitions and weighting schemes, and (c) establishing tool support for automated scoring. The conclusion reiterates that a systematic, multidimensional measurement approach can help organizations align their architectural choices with strategic, functional, and economic considerations, but emphasizes that further research is needed to substantiate the model.

In summary, the paper offers a comprehensive overview of major EA frameworks and introduces a high‑level, three‑dimensional measurement model for EA selection. While the conceptual contribution is valuable, the lack of methodological detail, quantitative validation, and integration guidance limits its immediate applicability. Future studies that ground the framework in empirical data and provide concrete scoring algorithms would be essential to transform the proposal from a theoretical construct into a usable decision‑support tool for enterprise architects.
