Optimization and Evaluation of a Multimedia Streaming Service on Hybrid Telco Cloud

With recent developments in cloud computing, a paradigm shift from rather static deployment of resources to more dynamic, on-demand practices means more flexibility and better utilization of resources. This shift demands new ways to configure networks efficiently. In this paper, we characterize a class of competitive cloud services that telecom operators could provide, based on the characteristics of telecom infrastructure, through an applicable streaming service architecture. We then model this architecture as a cost-based mathematical model. This model provides a tool to evaluate and compare the cost of software services for different telecom network topologies and deployment strategies. In addition, for each topology it serves as a means to characterize the deployment solution that yields the lowest resource usage over the entire network. These applications are illustrated through numerical analysis. Finally, a proof-of-concept prototype is deployed to show the dynamic properties of the service within the architecture and model described above.

💡 Research Summary

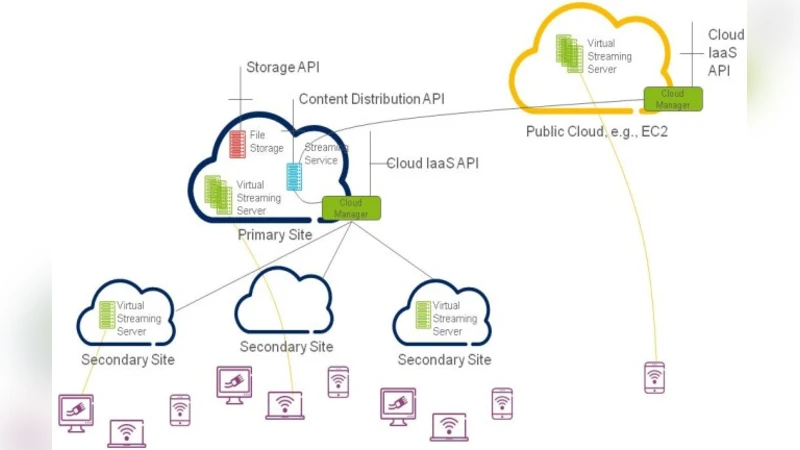

The paper addresses the emerging need for dynamic, on‑demand resource provisioning in telecommunications networks by proposing a hybrid “Telco Cloud” architecture that combines operator‑owned primary and secondary sites with public cloud resources (e.g., Amazon EC2). The authors first describe a realistic mobile network topology: a small number of primary sites (large data‑center‑like nodes) and several secondary sites (edge nodes) that are dual‑homed for redundancy and may also have direct Internet connections. This topology serves as the foundation for a multimedia streaming service, chosen because of its demanding bandwidth, latency, and quality‑of‑experience (QoE) requirements.
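A small data model makes the dual-homing property explicit and checkable. The Python sketch below is illustrative only: the `Topology` class, the site names `P1`, `P2` and `S1` to `S3`, and the choice of exactly three secondary sites are assumptions, not details taken from the paper.

```python
from dataclasses import dataclass, field


@dataclass
class Topology:
    """Hypothetical model of the operator topology: primary sites plus dual-homed secondary sites."""
    primaries: set[str]
    # secondary site -> primary sites it has uplinks to
    homing: dict[str, set[str]] = field(default_factory=dict)
    # secondary sites that also have a direct Internet connection
    internet: set[str] = field(default_factory=set)

    def add_secondary(self, name, uplinks, direct_internet=False):
        unknown = set(uplinks) - self.primaries
        if unknown:
            raise ValueError(f"unknown primary sites: {sorted(unknown)}")
        self.homing[name] = set(uplinks)
        if direct_internet:
            self.internet.add(name)

    def is_dual_homed(self, name):
        return len(self.homing.get(name, ())) >= 2

    def reachable_after_failure(self, name, failed_primary):
        """Primary sites a secondary site can still reach if one primary (or its uplink) fails."""
        return self.homing[name] - {failed_primary}


# Illustrative instance: two primaries, three secondaries homed to both.
topo = Topology(primaries={"P1", "P2"})
topo.add_secondary("S1", ["P1", "P2"], direct_internet=True)
topo.add_secondary("S2", ["P1", "P2"])
topo.add_secondary("S3", ["P1", "P2"])
```

Here `topo.reachable_after_failure("S2", "P1")` returns `{"P2"}`: losing one primary still leaves every secondary site connected, which is the point of dual-homing.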

The core contribution is a cost‑based mathematical model. The model captures two main cost components: (1) server operating cost, expressed as a fixed cost per virtual machine (VM) deployed at each site, and (2) link transmission cost, proportional to the amount of traffic traversing backbone and external links. Constraints enforce that all client requests are served, that each site’s capacity limits are respected, and that redundancy (dual‑homed links) is maintained. The objective minimizes the total cost (operational + transmission), and the problem is formulated as an integer linear program (ILP) whose solution gives the optimal number of streaming servers, their placement (primary, secondary, or public cloud), and the request‑routing scheme.
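For concreteness, one plausible way to write such a placement ILP is sketched below. The notation is our own assumption, not the paper's: S is the set of candidate sites (primary, secondary, public cloud), x_s the number of VMs at site s, y_s the number of streams served from s, f_s the fixed cost per VM at s, t_s the transmission cost per stream on the links from s to clients, C the streams one VM can serve, D the total demand, and K_s the VM capacity of s. The redundancy (dual‑homing) constraint is omitted for brevity.

```latex
\begin{aligned}
\min_{x,\,y}\quad & \sum_{s \in S} f_s\, x_s \;+\; \sum_{s \in S} t_s\, y_s \\
\text{s.t.}\quad & \sum_{s \in S} y_s = D, \\
& y_s \le C\, x_s, \qquad x_s \le K_s, && \forall s \in S, \\
& x_s \in \mathbb{Z}_{\ge 0},\qquad y_s \ge 0, && \forall s \in S.
\end{aligned}
```

The first sum is the server operating cost and the second the link transmission cost; the equality constraint ensures every request is served, and y_s ≤ C x_s ties served traffic to deployed VMs.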

To evaluate the model, three deployment strategies are examined:

- Centralized – all streaming servers reside in primary sites only.

- Hybrid Edge – edge servers are placed at secondary sites, with additional capacity provisioned in the public cloud during traffic peaks.

- Public‑Centric – primary sites are omitted; only secondary sites and the public cloud are used.
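The three strategies can be read as restrictions on which site types the optimizer may use. The Python sketch below compares them on a toy instance by exhaustive search, standing in for an ILP solver. All parameters are made up, and assigning primary sites to Hybrid Edge is our assumption, since the list above only says edge and public‑cloud servers are added.

```python
from itertools import product

# Hypothetical per-site-type parameters: (cost per VM, link cost per stream, max VMs).
SITES = {
    "primary": (10.0, 0.75, 4),
    "secondary": (12.0, 0.5, 2),
    "public_cloud": (15.0, 1.0, 10),
}

# Site types each strategy may use. Keeping primaries in Hybrid Edge is an assumption.
STRATEGIES = {
    "Centralized": {"primary"},
    "Hybrid Edge": {"primary", "secondary", "public_cloud"},
    "Public-Centric": {"secondary", "public_cloud"},
}


def min_cost(allowed, demand, streams_per_vm, sites=SITES):
    """Exhaustively search VM counts over the allowed site types.

    Returns the lowest total cost (VM cost + link cost), or None if the
    allowed sites cannot serve the whole demand.
    """
    names = sorted(allowed)
    best = None
    for counts in product(*(range(sites[n][2] + 1) for n in names)):
        remaining, cost = demand, 0.0
        # Route streams over the cheapest links first; for fixed VM counts this
        # greedy split is optimal because link costs are linear per stream.
        for name, vms in sorted(zip(names, counts), key=lambda p: sites[p[0]][1]):
            served = min(remaining, vms * streams_per_vm)
            cost += sites[name][0] * vms + sites[name][1] * served
            remaining -= served
        if remaining == 0 and (best is None or cost < best):
            best = cost
    return best


results = {s: min_cost(a, demand=500, streams_per_vm=100) for s, a in STRATEGIES.items()}
```

With these invented numbers, `results` is `None` for Centralized (four primary VMs cover only 400 streams), `379.0` for Hybrid Edge, and `469.0` for Public‑Centric. The ranking follows entirely from the assumed parameters and is not a reproduction of the paper's experiments.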

Numerical experiments vary traffic load, cost parameters, and capacity limits. The results show that the Hybrid Edge strategy yields the lowest total cost across the tested settings, with the largest advantage when peak demand exceeds the baseline capacity by 30 % or more. This approach also reduces end‑to‑end latency because edge servers serve nearby users, thereby improving QoE. The Public‑Centric scenario is cost‑effective only when operating primary sites is prohibitively expensive, and it suffers from higher latency and reduced resilience.

A proof‑of‑concept prototype validates the theoretical findings. Satellite video streams are encoded and fed to streaming servers that are instantiated on demand in an OpenStack private cloud and on AWS EC2. Automated orchestration provisions a new VM within five minutes and scales the fleet up or down within 30 seconds in response to measured client demand. The experiment demonstrates that the head‑end load remains stable while the number of edge or cloud servers adapts dynamically, confirming that the model’s optimal solutions are achievable in practice.
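The demand‑driven scaling in the prototype can be approximated by a simple threshold policy. The Python sketch below is hypothetical: the function names, the 20 % headroom, and the 1 to 20 server bounds are illustrative assumptions, not the prototype's actual orchestration logic, which is not described here.

```python
def desired_servers(active_streams, streams_per_server, headroom_pct=20,
                    min_servers=1, max_servers=20):
    """Target number of streaming servers for the measured load.

    Keeps headroom_pct percent spare capacity so new clients can still be
    admitted while additional VMs are booting.
    """
    load = active_streams * (100 + headroom_pct)
    capacity_per_server = 100 * streams_per_server
    needed = -(-load // capacity_per_server)  # integer ceiling division
    return max(min_servers, min(max_servers, needed))


def scaling_action(current, active_streams, streams_per_server, **policy):
    """Positive: launch that many VMs; negative: terminate that many; zero: hold."""
    return desired_servers(active_streams, streams_per_server, **policy) - current
```

For example, with 100 streams per server and 900 active streams the policy targets 11 servers, so a fleet of 3 would launch 8 more. Headroom is the main design choice: because provisioning a new VM can take minutes, scaling only once capacity is exhausted would leave new clients unserved in the meantime.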

The paper concludes by acknowledging limitations: the current model does not incorporate energy consumption, multi‑objective criteria (e.g., latency vs. cost vs. energy), or service‑level agreement (SLA) constraints, and it does not explore integration with emerging 5G/MEC architectures. Future work is suggested to extend the model with energy‑aware terms, SLA‑driven multi‑objective optimization, and predictive scaling based on traffic forecasting. Overall, the study provides a rigorous, cost‑focused framework for designing and evaluating hybrid cloud‑enabled streaming services, offering both academic insight and practical guidance for telecom operators seeking to monetize their network assets in the cloud era.
