Localization on low-order eigenvectors of data matrices

Eigenvector localization refers to the situation when most of the components of an eigenvector are zero or near-zero. This phenomenon has been observed on eigenvectors associated with extremal eigenvalues, and in many of those cases it can be meaningfully interpreted in terms of “structural heterogeneities” in the data. For example, the largest eigenvectors of adjacency matrices of large complex networks often have most of their mass localized on high-degree nodes; and the smallest eigenvectors of the Laplacians of such networks are often localized on small but meaningful community-like sets of nodes. Here, we describe localization associated with low-order eigenvectors, i.e., eigenvectors corresponding to eigenvalues that are not extremal but that are “buried” further down in the spectrum. Although we have observed it in several unrelated applications, this phenomenon of low-order eigenvector localization defies common intuitions and simple explanations, and it creates serious difficulties for the applicability of popular eigenvector-based machine learning and data analysis tools. After describing two examples where low-order eigenvector localization arises, we present a very simple model that qualitatively reproduces several of the empirically-observed results. This model suggests certain coarse structural similarities among the seemingly-unrelated applications where we have observed low-order eigenvector localization, and it may be used as a diagnostic tool to help extract insight from data graphs when such low-order eigenvector localization is present.

💡 Research Summary

The paper investigates a phenomenon that has received little attention in the spectral analysis of graphs: the localization of “low‑order” eigenvectors, i.e., eigenvectors whose eigenvalues are not extremal but lie further down in the spectrum. Eigenvector localization at extremal eigenvalues is well studied and is often linked to high‑degree nodes, small dense communities, or other structural heterogeneities. The authors demonstrate that similar localization can also occur on eigenvectors that are far from the spectral edges.

Two real‑world data sets are used as case studies. The first, called the “Congress” data set, encodes roll‑call voting similarity among U.S. Senators from the 70th to the 110th Congress (1927‑2008). An adjacency matrix of size 735 × 735 is built from pairwise voting agreement, with additional weak edges linking the same senator across consecutive Congresses. The graph Laplacian L is then formed and the generalized eigenvalue problem L x = λ D x is solved, where D is the diagonal degree matrix. The second data set, termed “Migration,” is derived from the 2000 U.S. Census and records inter‑county migration flows among 3,107 counties. A similarity matrix W is constructed as W_{ij}=M_{ij}²/(P_i P_j), where M_{ij} is the bidirectional migration count between counties i and j and P_i is the population of county i. The random‑walk matrix P = D⁻¹W is then analyzed (this transition matrix P is distinct from the populations P_i).
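As a rough illustration of the two constructions described above, the following sketch builds the Migration-style weights W_{ij}=M_{ij}²/(P_i P_j) and solves L x = λ D x with plain NumPy. The toy flow matrix, the populations, and the function names `migration_similarity` and `generalized_laplacian_eig` are assumptions made for this example; they are not the authors' data or code. The generalized problem is solved through the symmetric normalized Laplacian, which is one common route and not necessarily the one used in the paper.

```python
import numpy as np

def migration_similarity(M, pop):
    """Return W with W_ij = M_ij^2 / (P_i * P_j) for symmetric flows M."""
    M = np.asarray(M, dtype=float)
    pop = np.asarray(pop, dtype=float)
    return M**2 / np.outer(pop, pop)

def generalized_laplacian_eig(W):
    """Solve L x = lambda D x, with L = D - W, for a symmetric W.

    Uses the equivalent symmetric problem D^{-1/2} L D^{-1/2} y = lambda y
    and maps back with x = D^{-1/2} y. Assumes every node has positive degree.
    """
    W = np.asarray(W, dtype=float)
    d = W.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(d)
    L = np.diag(d) - W
    L_sym = d_inv_sqrt[:, None] * L * d_inv_sqrt[None, :]
    lam, Y = np.linalg.eigh(L_sym)      # eigenvalues in ascending order
    X = d_inv_sqrt[:, None] * Y         # generalized eigenvectors as columns
    return lam, X

# Hypothetical toy data: three "counties" with symmetric migration counts.
M = np.array([[0.0, 30.0, 5.0],
              [30.0, 0.0, 10.0],
              [5.0, 10.0, 0.0]])
pop = np.array([1000.0, 2000.0, 500.0])

W = migration_similarity(M, pop)
lam, X = generalized_laplacian_eig(W)
```

Because D⁻¹W x = x − D⁻¹L x, each generalized eigenvalue λ of the Laplacian problem corresponds to the eigenvalue 1 − λ of the random‑walk matrix D⁻¹W with the same eigenvector, so the two formulations mentioned in the summary share their eigenvectors.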

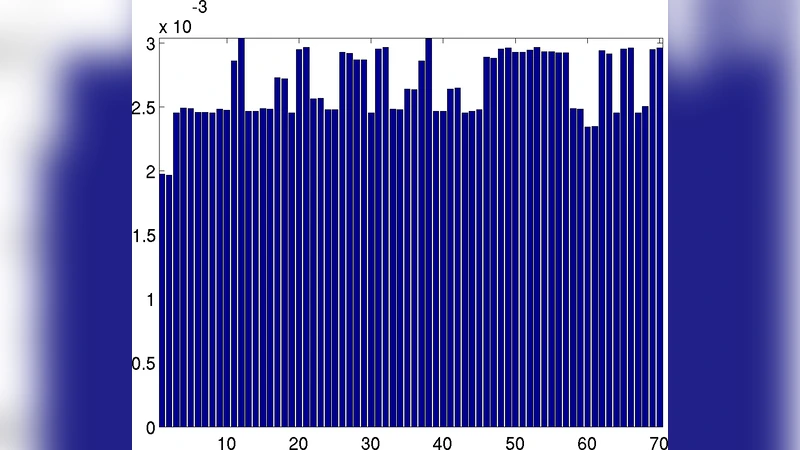

For each data set the authors compute two localization metrics: the inverse participation ratio (IPR) and a component‑wise statistical leverage (CSL). IPR measures how concentrated the mass of a single eigenvector is, while CSL evaluates how much each node contributes to a given eigenvector. In both data sets, eigenvectors with ranks well beyond the leading few (e.g., the 30th–50th eigenvectors in the Congress case) exhibit markedly higher IPR values than the leading eigenvectors, indicating that most of their weight is confined to a small subset of nodes. Visual inspection shows that these subsets correspond to meaningful structures: specific historical periods or political blocs in the Senate, and geographically coherent regions in the migration network.
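The two metrics can be sketched in a few lines. The definitions below are common conventions and are assumptions here, since the summary does not spell out the exact formulas: IPR is taken as Σ v_i⁴ / (Σ v_i²)², which equals 1/n for a perfectly flat vector and 1 for a vector supported on a single node, and the component‑wise leverage of node i in eigenvector j is taken as V_{ij}² for a matrix V with orthonormal columns. Some authors use the reciprocal of this IPR instead.

```python
import numpy as np

def inverse_participation_ratio(v):
    """IPR = sum(v_i^4) / (sum(v_i^2))^2; larger means more localized."""
    v = np.asarray(v, dtype=float)
    return np.sum(v**4) / np.sum(v**2) ** 2

def leverage_scores(V):
    """Component-wise leverage V_ij^2 and its per-node sum over columns.

    V is assumed to be n x k with orthonormal columns (e.g. k eigenvectors).
    """
    V = np.asarray(V, dtype=float)
    component = V**2
    return component, component.sum(axis=1)

n = 100
flat = np.ones(n) / np.sqrt(n)   # fully delocalized unit vector
spike = np.zeros(n)
spike[7] = 1.0                   # fully localized unit vector

ipr_flat = inverse_participation_ratio(flat)
ipr_spike = inverse_participation_ratio(spike)

# Leverage for the first k eigenvectors of a random symmetric matrix.
rng = np.random.default_rng(0)
A = rng.standard_normal((n, n))
_, vecs = np.linalg.eigh(A + A.T)
k = 5
component, per_node = leverage_scores(vecs[:, :k])
```

With orthonormal columns the per-node leverages sum to k, which gives a quick sanity check on the input.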

To explain why such “buried” eigenvectors become localized, the authors propose a simple two‑level tensor product model. The first level consists of structured blocks (e.g., individual Congresses or geographic regions) with strong intra‑block connections; the second level adds a random or sparse component that connects blocks weakly. Spectral analysis of this model reveals that eigenvectors associated with the block structure occupy the top of the spectrum, while eigenvectors that capture inter‑block transitions appear at lower‑order positions. When inter‑block coupling is weak, the corresponding eigenvectors are forced by orthogonality constraints to concentrate their mass on a single block, producing the observed localization. Thus, the core mechanism is a hierarchical block structure combined with weak inter‑block links.
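The mechanism named above, heterogeneous blocks joined by weak links, can be probed with a small synthetic graph. The sketch below is a simplified stand‑in and not the paper's model: it assumes T cliques of m nodes whose edge weights differ slightly from block to block, and it links each node to its copy in the neighbouring blocks with weight `eps`, loosely mirroring the same‑senator edges of the Congress graph. All names and parameter values are hypothetical. For every eigenvector of the adjacency matrix it reports the largest fraction of squared mass that falls inside a single block.

```python
import numpy as np

def two_level_adjacency(T, m, eps):
    """T cliques of m nodes; node i of block t is linked to node i of block t+1."""
    strengths = 1.0 + 0.1 * np.arange(T)           # block-dependent edge weight
    clique = np.ones((m, m)) - np.eye(m)           # dense intra-block structure
    path = np.diag(np.ones(T - 1), 1) + np.diag(np.ones(T - 1), -1)
    intra = np.kron(np.diag(strengths), clique)    # first level: the blocks
    inter = eps * np.kron(path, np.eye(m))         # second level: weak coupling
    return intra + inter

def max_block_mass(W, T, m):
    """For each eigenvector, the largest share of squared mass in one block."""
    _, V = np.linalg.eigh(W)                       # unit-norm eigenvectors as columns
    mass = (V.reshape(T, m, -1) ** 2).sum(axis=1)  # shape (T, number of eigenvectors)
    return mass.max(axis=0)

T, m = 5, 20
weak = max_block_mass(two_level_adjacency(T, m, eps=1e-3), T, m)
strong = max_block_mass(two_level_adjacency(T, m, eps=20.0), T, m)

print("weak coupling, smallest single-block mass:", weak.min())
print("strong coupling, largest single-block mass:", strong.max())
```

In this toy, weak coupling pins every eigenvector to a single block, while strong coupling spreads every eigenvector over several blocks. It isolates only the dependence on coupling strength; it does not reproduce the contrast between delocalized leading eigenvectors and localized low‑order ones that the paper reports for real data.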

The paper also discusses practical implications. Many machine‑learning techniques (principal component analysis, spectral clustering, Laplacian eigenmaps, diffusion maps) rely on the assumption that eigenvectors capture smooth, global variation and are sufficiently delocalized. When low‑order eigenvectors are already highly localized, orthogonality forces them to contain “noise‑like” fluctuations, leading to unintuitive patterns such as ringing in eigenfaces or unstable clustering results. This problem is especially acute for high‑dimensional, sparse, or temporally evolving graphs, where low‑order eigenvectors may dominate the residual variance after the leading components have been extracted.

Finally, the authors suggest using IPR and CSL as diagnostic tools. By identifying which eigenvectors are localized, analysts can either interpret the underlying structural signal (e.g., a particular political shift or migration corridor) or deliberately exclude those vectors from downstream tasks to improve robustness. Moreover, the simple block‑plus‑noise model can guide the design of synthetic data or regularization schemes that either suppress unwanted low‑order localization or exploit it for feature extraction.
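One way to turn this diagnostic into a filtering step is sketched below. The rule used, flagging an eigenvector when its IPR exceeds `factor / n`, is an assumption chosen for illustration (1/n is the IPR of a perfectly flat vector); the summary does not state a threshold, and `split_by_localization` is a hypothetical helper name.

```python
import numpy as np

def split_by_localization(V, factor=3.0):
    """Split eigenvector columns of V into localized and delocalized indices.

    A column is flagged as localized when its IPR exceeds factor / n,
    where n is the number of nodes (rows of V).
    """
    V = np.asarray(V, dtype=float)
    n = V.shape[0]
    ipr = (V**4).sum(axis=0) / (V**2).sum(axis=0) ** 2
    cutoff = factor / n
    localized = np.flatnonzero(ipr > cutoff)
    delocalized = np.flatnonzero(ipr <= cutoff)
    return localized, delocalized, ipr

# Three orthonormal vectors on 8 nodes: two global patterns and one
# vector supported on only two nodes.
n = 8
flat = np.ones(n) / np.sqrt(n)
pair = np.zeros(n)
pair[0], pair[1] = 1 / np.sqrt(2), -1 / np.sqrt(2)
halves = np.concatenate([np.ones(4), -np.ones(4)]) / np.sqrt(n)
V = np.column_stack([flat, pair, halves])

localized, delocalized, ipr = split_by_localization(V)
V_kept = V[:, delocalized]   # columns passed on to a downstream method
```

The flagged columns can be inspected for structural meaning or dropped before clustering or embedding, depending on which of the two uses described above is intended.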

In summary, the study reveals that eigenvector localization is not confined to extremal eigenvalues; it can naturally arise in the middle of the spectrum whenever the data graph exhibits hierarchical, sparsely connected blocks. This insight challenges common intuitions in spectral methods, highlights a hidden source of instability in many graph‑based learning algorithms, and opens the door to new diagnostic and modeling strategies that explicitly account for low‑order eigenvector localization.
