Polyethism in a colony of artificial ants

We explore self-organizing strategies for role assignment in a foraging task carried out by a colony of artificial agents. Our strategies are inspired by various mechanisms of division of labor (polyethism) observed in eusocial insects like ants, termites, or bees. Specifically, we instantiate models of caste polyethism and age or temporal polyethism to evaluate the benefits to foraging in a dynamic environment. Our experiment is directly related to the exploration/exploitation trade-off in machine learning.

💡 Research Summary

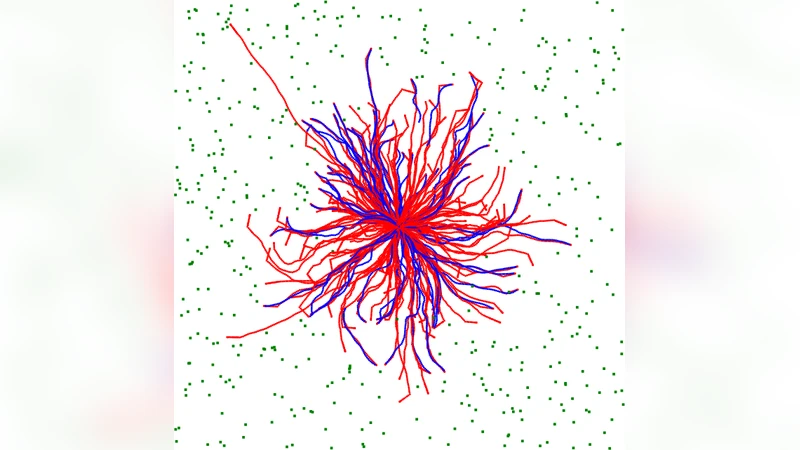

The paper investigates how self-organizing role-assignment mechanisms observed in eusocial insects, specifically caste polyethism and age (temporal) polyethism, can be translated into artificial ant colonies tasked with foraging. Two elementary foraging strategies are defined for the agents: (1) an “exploratory” strategy in which ants leave a “seeker” pheromone trail, move in a largely straight line away from the nest, avoid other ants' seeker trails, and return to the nest by following their own trail; and (2) an “exploitative” strategy in which ants follow “carrier” trails that mark already discovered food sources. Explorers ignore carrier trails, while exploiters ignore seeker trails. This dichotomy mirrors observations that small natural colonies rely on individual exploration, whereas larger colonies increasingly employ cooperative exploitation.
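The two strategies differ mainly in which pheromone an ant reacts to, which can be illustrated with a minimal sketch. Everything here is hypothetical: the `Cell` record, the eight-direction neighbourhood, and the tie-breaking rules are assumptions made for illustration, not the paper's implementation. The summary also does not say what an exploiter does when no carrier trail is in reach; keeping the current heading is an assumed fallback.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cell:
    """One neighbouring cell as seen by an ant (hypothetical structure)."""
    direction: int   # index 0..7 of the neighbour around the ant
    seeker: float    # seeker pheromone left by other explorers
    carrier: float   # carrier pheromone marking a trail to food


def turn_cost(heading, direction):
    """Number of 45-degree steps needed to turn from heading to direction."""
    d = abs(heading - direction) % 8
    return min(d, 8 - d)


def explorer_step(heading, cells):
    """Explorer: avoid other seeker trails, otherwise keep as straight as possible.

    Carrier pheromone is never consulted, matching 'explorers ignore carrier trails'.
    """
    best = min(cells, key=lambda c: (c.seeker > 0, turn_cost(heading, c.direction)))
    return best.direction


def exploiter_step(heading, cells):
    """Exploiter: follow the strongest carrier trail; seeker pheromone is ignored.

    Falling back to the current heading when no trail is present is an assumption.
    """
    best = max(cells, key=lambda c: c.carrier)
    return best.direction if best.carrier > 0 else heading
```

With a neighbourhood of `[Cell(0, 1.0, 0.0), Cell(1, 0.0, 0.0), Cell(7, 0.0, 2.0)]` and heading `0`, the explorer sidesteps the marked cell ahead while the exploiter turns toward the carrier trail in direction `7`.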

Four colony configurations are compared: (i) a control colony that produces only explorers, (ii) a control colony that produces only exploiters, (iii) an adaptive caste-polyethism colony in which the queen adjusts the proportion of newly created explorers versus exploiters based on the food return rates of each type over the last 500 simulation rounds, and (iv) an adaptive age-polyethism colony in which all workers share the same behavioral repertoire but start as explorers; after a fixed age (or after one or two foraging trips) they may switch to exploitation with a probability proportional to the colony-wide success rates. The adaptive colonies keep at least one individual of each type so that success rates can be measured for both strategies. They thus implement a response-threshold-style feedback loop that continuously re-balances exploration and exploitation.
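The feedback loop in the adaptive caste colony can be sketched as follows. This is a minimal illustration under stated assumptions: the summary only says that the queen uses the food return rates of the last 500 rounds, so the per-worker normalization, the proportional rule, and the even split when no food has been returned yet are guesses, and `SuccessTracker`, `explorer_probability`, and `next_role` are hypothetical names.

```python
import random
from collections import deque

WINDOW = 500  # rounds of history, as stated in the summary
ROLES = ("explorer", "exploiter")


class SuccessTracker:
    """Sliding-window record of food returns per role (hypothetical helper)."""

    def __init__(self, window=WINDOW):
        self.window = window
        self.events = deque()  # (round, role), one entry per food unit returned

    def record(self, rnd, role):
        self.events.append((rnd, role))

    def rates(self, now, counts):
        """Food units returned per worker of each role within the window."""
        while self.events and self.events[0][0] <= now - self.window:
            self.events.popleft()
        returned = {role: 0 for role in ROLES}
        for _, role in self.events:
            returned[role] += 1
        # Per-worker normalization is an assumption, not taken from the paper.
        return {role: returned[role] / max(counts[role], 1) for role in ROLES}


def explorer_probability(rates):
    """Chance that the next worker is an explorer (assumed proportional rule)."""
    total = rates["explorer"] + rates["exploiter"]
    if total == 0:
        return 0.5  # no returns observed yet: assumed even split
    return rates["explorer"] / total


def next_role(rates, counts, rng=random):
    """Role of the next worker, keeping at least one individual of each type."""
    for role in ROLES:
        if counts[role] == 0:
            return role
    return "explorer" if rng.random() < explorer_probability(rates) else "exploiter"
```

The same `explorer_probability` value could plausibly drive the age-polyethism colony, with `1 - explorer_probability(rates)` used as the chance that an eligible explorer switches to exploitation; that reuse is likewise an assumption.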

The colonies are tested in five distinct environments, each delivering food at the same average rate (one unit every five rounds) and keeping each food item for exactly 1 000 rounds unless collected: (a) a uniform distribution of food across the toroidal arena, (b) a “patch” environment where food appears only in one or more fixed clusters, (c) a “roaming‑patch” environment where a single patch relocates every 1 000 rounds, (d) a “seasonal” environment that alternates between uniform and patch distributions every 1 000 rounds, so that uncollected food from one season is still present during the next and the two patterns overlap, and (e) a “mixed” environment that simultaneously contains uniform drops and a static patch. These settings allow the authors to probe both static and dynamic resource landscapes.
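A food-drop schedule for the five environments might look like the sketch below. The drop interval and the 1,000-round period come from the summary; the arena size, the patch radius, the square patch shape, the season that comes first, and the 50/50 split in the mixed environment are all assumptions, and `food_drop` is a hypothetical helper rather than the authors' code.

```python
import random

SIZE = 100          # assumed side length of the toroidal arena
DROP_INTERVAL = 5   # one food unit every five rounds (from the summary)
PERIOD = 1000       # patch relocation and season length (from the summary)
PATCH_RADIUS = 5    # assumed half-width of a square patch


def uniform_drop(rng):
    """Position drawn uniformly over the whole arena."""
    return rng.randrange(SIZE), rng.randrange(SIZE)


def patch_drop(rng, center):
    """Position near the patch center, wrapped around the torus."""
    cx, cy = center
    dx = rng.randint(-PATCH_RADIUS, PATCH_RADIUS)
    dy = rng.randint(-PATCH_RADIUS, PATCH_RADIUS)
    return (cx + dx) % SIZE, (cy + dy) % SIZE


def food_drop(env, rnd, rng, state):
    """Return the position of this round's food drop, or None if there is none."""
    if rnd % DROP_INTERVAL != 0:
        return None
    if env == "uniform":
        return uniform_drop(rng)
    if env == "patch":
        return patch_drop(rng, state["center"])
    if env == "roaming":
        if rnd > 0 and rnd % PERIOD == 0:
            state["center"] = uniform_drop(rng)  # relocate the single patch
        return patch_drop(rng, state["center"])
    if env == "seasonal":
        uniform_season = (rnd // PERIOD) % 2 == 0  # assumed: uniform comes first
        if uniform_season:
            return uniform_drop(rng)
        return patch_drop(rng, state["center"])
    if env == "mixed":
        # Assumed even split between uniform drops and the static patch.
        if rng.random() < 0.5:
            return uniform_drop(rng)
        return patch_drop(rng, state["center"])
    raise ValueError(f"unknown environment: {env}")
```

Because every branch is gated by the same `DROP_INTERVAL`, all five environments deliver food at an identical average rate, which is the property the comparison relies on.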

Simulation details include a queen that spawns larvae only when the stored food exceeds the current total of workers plus larvae, workers that consume one food unit every 450 rounds, a maximum lifespan drawn uniformly from 2 750–3 250 rounds, and a death rule that triggers when energy is exhausted. The colony starts with 32 food units, creates 16 workers during the first 116 rounds, and then must sustain itself through foraging. The maximum sustainable population hovers around 80 agents, though transient overshoots occur due to non‑linear dynamics.
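The figures above allow a quick sanity check on the population ceiling. The sketch below is a back-of-the-envelope calculation, not the authors' model: it assumes every dropped item is eventually collected and ignores the food spent on larvae, and the helper names are hypothetical. Treating the lifespan bounds as inclusive integers is also an assumption.

```python
import random

FOOD_DROP_INTERVAL = 5         # one food unit appears every five rounds
MEAL_INTERVAL = 450            # each worker eats one unit every 450 rounds
LIFESPAN_RANGE = (2750, 3250)  # maximum lifespan bounds in rounds


def supply_limited_population():
    """Workers the food supply could feed if every dropped item were collected."""
    return MEAL_INTERVAL / FOOD_DROP_INTERVAL


def queen_may_spawn(stored_food, workers, larvae):
    """Spawn rule from the summary: stored food must exceed workers plus larvae."""
    return stored_food > workers + larvae


def draw_lifespan(rng=random):
    """Maximum lifespan in rounds, drawn uniformly (inclusive bounds assumed)."""
    low, high = LIFESPAN_RANGE
    return rng.randint(low, high)


def meals_per_lifetime(lifespan):
    """Whole food units a worker eats if it survives to its maximum age."""
    return lifespan // MEAL_INTERVAL
```

Under these assumptions the supply supports at most 450 / 5 = 90 workers, which is consistent with the reported ceiling of about 80, presumably because some food expires uncollected and some is spent on larvae. A worker that reaches its maximum age eats six or seven units over its lifetime.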

Results show that in the uniform environment the explorer-only colony achieves near-optimal foraging, confirming earlier biological observations that small colonies favor individual exploration. However, as colony size grows, the exploiter-only colony approaches comparable performance, indicating that cooperative trails can also be efficient when food is evenly spread. In the patch environment, exploiters dramatically outperform explorers because, once a patch is discovered, nestmates can be recruited to it rapidly, whereas explorers waste effort wandering in the wrong direction. The roaming-patch, seasonal, and mixed environments, each of which adds temporal or spatial variability, reveal the superiority of the adaptive colonies. Both the caste-polyethism and age-polyethism colonies automatically shift the explorer/exploiter ratio in response to changing success rates, maintaining high food intake and stable population growth. The static colonies, by contrast, either over-explore (wasting energy) or over-exploit (missing newly appearing patches), leading to lower overall efficiency and higher mortality.

The authors interpret these findings through the lens of the exploration-exploitation trade-off central to reinforcement learning and multi-armed bandit problems. Caste polyethism functions as a meta-controller that sets the proportion of newly created agents assigned to each strategy, while age polyethism implements a decentralized, experience-driven threshold mechanism where individual agents switch roles based on colony-wide feedback. Both mechanisms provide robust, scalable solutions for dynamic environments without requiring centralized planning or explicit environmental sensing beyond local pheromone cues.

In conclusion, the paper demonstrates that incorporating biologically inspired polyethism into multi‑agent systems yields significant performance gains in heterogeneous and time‑varying tasks. The work bridges ethology, swarm robotics, and machine‑learning theory, suggesting that future robotic swarms could benefit from adaptive role allocation schemes similar to those observed in ants, termites, and bees. Potential extensions include real‑world robot experiments, richer task sets (e.g., object transport, map building), and exploration of additional polyethism variants such as elitism or task‑specific specialization.
