A QoS ontology-based component selection

In component-based software development, the selection step is very important. It consists of searching for and selecting appropriate software components from a set of candidate components in order to satisfy the developer-specific requirements. In the selection process, both functional and non-functional requirements are generally considered. In this paper, we focus only on QoS, a subset of non-functional characteristics, in order to determine the best components for selection. Component selection based on QoS is a hard task because of the heterogeneity of QoS descriptions. Thus, we propose a QoS ontology that provides a formal, common, and explicit description of the QoS of software components. We use this ontology to semantically select relevant components based on the QoS specified by the developer. Our selection process is performed in two steps: (1) a QoS matching process that uses the relations between QoS concepts to pre-select candidate components, in which each candidate component is matched against the developer’s request; and (2) a component ranking process that uses the QoS values to determine, among the pre-selected components, the best ones for selection. The QoS matching and component ranking algorithms are then presented and evaluated experimentally in the domain of multimedia components.

Research Summary

The paper addresses the challenge of selecting software components in Component‑Based Software Engineering (CBSE) when non‑functional requirements, specifically Quality of Service (QoS) attributes, are heterogeneous across component descriptions. Existing CBSE approaches largely ignore QoS or treat it with ad‑hoc, non‑semantic models that are difficult to extend and often tied to particular platforms. To overcome these limitations, the authors propose a formal, shared QoS ontology and a two‑stage component selection process that leverages this ontology for both semantic matching and quantitative ranking.

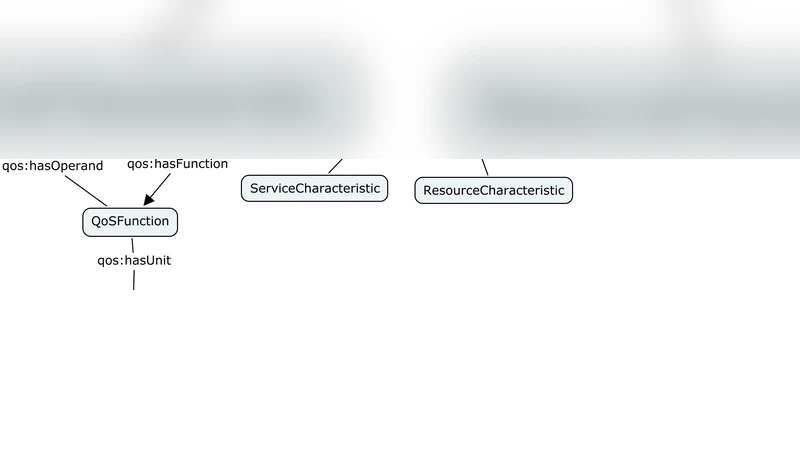

The ontology is built in OWL and models a component as a set of provided and required interfaces. Each interface is linked to a QoSProfile, which aggregates one or more QoSCharacteristics. Characteristics are divided into service‑level (e.g., frame rate, video resolution) and resource‑level (e.g., CPU usage, network latency). Every characteristic is measured by one or more QoSMetric instances. A metric carries a minValue, maxValue, a measurement process (linking to a QoSFunction), a direction flag (increasing or decreasing), and a unit. The ontology also defines a ConversionRate class to handle unit transformations (e.g., seconds ↔ milliseconds). This structure is domain‑independent but can be extended with domain‑specific subclasses, as illustrated with a multimedia camera component example.
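To make the ontology structure concrete, here is a minimal Python sketch of the classes described above, assuming a plain in-memory object model rather than OWL. The class names QoSProfile, QoSCharacteristic, QoSMetric and ConversionRate follow the ontology; the Direction enum, the convert helper, the snake_case field names, and the camera values are hypothetical illustrations, not part of the published ontology.

```python
from dataclasses import dataclass, field
from enum import Enum


class Direction(Enum):
    INCREASING = "increasing"  # higher values are better (e.g. frame rate)
    DECREASING = "decreasing"  # lower values are better (e.g. latency)


@dataclass(frozen=True)
class ConversionRate:
    from_unit: str
    to_unit: str
    factor: float  # value in to_unit = value in from_unit * factor


@dataclass
class QoSMetric:
    name: str
    min_value: float
    max_value: float
    direction: Direction
    unit: str
    measurement_process: str = ""  # stands in for the link to a QoSFunction


@dataclass
class QoSCharacteristic:
    name: str
    level: str  # "service" (e.g. frame rate) or "resource" (e.g. CPU usage)
    metrics: list[QoSMetric] = field(default_factory=list)


@dataclass
class QoSProfile:
    characteristics: list[QoSCharacteristic] = field(default_factory=list)


@dataclass
class Interface:
    name: str
    kind: str  # "provided" or "required"
    profile: QoSProfile


@dataclass
class Component:
    name: str
    interfaces: list[Interface] = field(default_factory=list)


def convert(value: float, from_unit: str, to_unit: str,
            rates: list[ConversionRate]) -> float:
    """Convert value using a matching ConversionRate or its inverse."""
    if from_unit == to_unit:
        return value
    for r in rates:
        if (r.from_unit, r.to_unit) == (from_unit, to_unit):
            return value * r.factor
        if (r.from_unit, r.to_unit) == (to_unit, from_unit):
            return value / r.factor
    raise ValueError(f"no conversion from {from_unit} to {to_unit}")


# Hypothetical camera component with one provided interface.
frame_rate = QoSMetric("FrameRate", 25, 30, Direction.INCREASING, "fps")
camera = Component(
    "Camera",
    [Interface("VideoOut", "provided",
               QoSProfile([QoSCharacteristic("FrameRate", "service", [frame_rate])]))],
)
RATES = [ConversionRate("s", "ms", 1000.0)]
```

Keeping ConversionRate as data rather than hard-coding factors mirrors the ontology's approach of making unit transformations explicit and extensible.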

The selection process consists of two steps.

QoS Matching – After functional matching has produced a candidate set, each candidate’s QoSProfile is compared with the developer’s request using subsumption relationships defined over the ontology. Three relationship types are defined:

- Plugin – For provided interfaces, the candidate’s metric must be a subclass (more restrictive) of the request’s metric (C_i.I_j.Q ⊑ R.I_j.Q). This is the strongest match for provided QoS.

- Subsume – For required interfaces, the request’s metric must be a subclass of the candidate’s metric (R.I_j.Q ⊑ C_i.I_j.Q). This is the strongest match for required QoS.

- Exact – Both directions hold (the candidate’s and the request’s metrics are equivalent).

If none of these relations holds between a requested metric and any metric of the candidate, the match fails and the candidate is discarded.
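The matching rules can be sketched in Python over a toy class hierarchy, assuming each metric concept has a single named superclass. A real implementation would ask an OWL reasoner for subsumption; the PARENT table, the class names in it, and the helpers is_subclass, match_degree and preselect are hypothetical.

```python
# Hypothetical toy taxonomy: each metric class maps to its direct superclass.
PARENT = {
    "StartupLatency": "Latency",
    "Latency": "TimeMetric",
    "TimeMetric": "QoSMetric",
    "FrameRate": "QoSMetric",
}


def is_subclass(sub: str, sup: str) -> bool:
    """True if sub ⊑ sup in the toy taxonomy (every class subsumes itself)."""
    node = sub
    while node is not None:
        if node == sup:
            return True
        node = PARENT.get(node)
    return False


def match_degree(candidate: str, request: str, kind: str) -> str:
    """Degree of match between a candidate metric class and a requested one."""
    if is_subclass(candidate, request) and is_subclass(request, candidate):
        return "Exact"
    if kind == "provided" and is_subclass(candidate, request):
        return "Plugin"   # C_i.I_j.Q ⊑ R.I_j.Q
    if kind == "required" and is_subclass(request, candidate):
        return "Subsume"  # R.I_j.Q ⊑ C_i.I_j.Q
    return "Fail"


def preselect(candidates: dict[str, list[str]], request: list[str],
              kind: str) -> list[str]:
    """Keep candidates in which every requested metric matches some metric."""
    kept = []
    for name, metrics in candidates.items():
        if all(any(match_degree(m, r, kind) != "Fail" for m in metrics)
               for r in request):
            kept.append(name)
    return kept
```

Because `preselect` only asks whether some relation other than Fail holds, the degree returned by `match_degree` remains available if one also wants to prefer Exact matches over Plugin or Subsume ones.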

Component Ranking – For candidates that survive matching, a dissimilarity measure is computed across all QoS metrics. Each metric is normalized according to its direction (higher values are better for increasing metrics, lower values are better for decreasing metrics). The overall distance between the request profile and a candidate profile is then aggregated (e.g., as a weighted Euclidean distance). Candidates are ordered by ascending distance, so the smallest distance indicates the best QoS fit.
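A minimal sketch of the ranking step follows, assuming min-max normalization over the values observed for each metric and the weighted Euclidean distance mentioned above. The summary does not say how over-provisioning is treated; this sketch assumes that exceeding the request costs nothing, so only shortfalls in the direction-adjusted values contribute. The function names and dictionary layout are hypothetical.

```python
import math


def goodness(value: float, lo: float, hi: float, increasing: bool) -> float:
    """Min-max scale value into [0, 1] so that 1 is always the best end."""
    if hi == lo:
        return 1.0
    x = (value - lo) / (hi - lo)
    return x if increasing else 1.0 - x


def rank(request: dict[str, float],
         candidates: dict[str, dict[str, float]],
         increasing: dict[str, bool],
         weights: dict[str, float]) -> list[tuple[str, float]]:
    """Return (component, distance) pairs sorted by ascending distance."""
    # Per-metric scaling range over the request and all pre-selected candidates.
    ranges = {}
    for metric, target in request.items():
        observed = [values[metric] for values in candidates.values()] + [target]
        ranges[metric] = (min(observed), max(observed))

    scores = []
    for name, values in candidates.items():
        total = 0.0
        for metric, target in request.items():
            lo, hi = ranges[metric]
            inc = increasing[metric]
            gap = goodness(target, lo, hi, inc) - goodness(values[metric], lo, hi, inc)
            # Assumption: only shortfalls count; beating the request adds nothing.
            total += weights[metric] * max(0.0, gap) ** 2
        scores.append((name, math.sqrt(total)))
    return sorted(scores, key=lambda pair: pair[1])
```

Dropping the `max(0.0, ...)` clamp yields a symmetric distance in which overshooting the request is penalized as much as falling short; with that symmetric form the direction flag no longer affects the result, which is why this sketch keeps the clamp.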

The authors implemented the ontology and algorithms and evaluated them on a set of multimedia components (video streaming, camera control, DV format). The experimental scenario required at least 25 frames per second, a start‑up latency no greater than 10 ms, and a reliability of at least 99 %. The ontology‑driven approach successfully identified components meeting these constraints and ranked them according to how closely they matched the quantitative QoS targets. Compared with a baseline string‑matching approach, the ontology‑based method achieved higher matching precision and reduced processing time, largely because unit conversion and composite metric calculation were handled automatically by the ontology’s reasoning engine.
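The experimental request can be written down as a constraint check. This sketch assumes that offers may state latency in seconds and reliability as a ratio, and that each value is converted to a canonical unit before comparison, in the spirit of the ontology's ConversionRate class; the unit table, metric keys and the satisfies function are hypothetical.

```python
# Hypothetical factors to a canonical unit per dimension:
# time -> ms, frame rate -> fps, reliability -> %.
TO_CANONICAL = {"ms": 1.0, "s": 1000.0, "fps": 1.0, "%": 1.0, "ratio": 100.0}

# The experimental request: (metric, comparator, threshold, unit).
REQUEST = [
    ("frame_rate", ">=", 25.0, "fps"),
    ("startup_latency", "<=", 10.0, "ms"),
    ("reliability", ">=", 99.0, "%"),
]


def to_canonical(value: float, unit: str) -> float:
    """Express value in the canonical unit of its dimension."""
    return value * TO_CANONICAL[unit]


def satisfies(offer: dict[str, tuple[float, str]]) -> bool:
    """offer maps metric -> (value, unit); True if every constraint holds."""
    for metric, op, threshold, unit in REQUEST:
        if metric not in offer:
            return False
        value, offer_unit = offer[metric]
        v = to_canonical(value, offer_unit)
        t = to_canonical(threshold, unit)
        if op == ">=" and v < t:
            return False
        if op == "<=" and v > t:
            return False
    return True
```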

Key contributions of the paper include:

- A comprehensive, extensible QoS ontology that captures both qualitative (direction, min/max) and quantitative (numeric values, units) aspects of QoS.

- Formal subsumption rules that enable semantic matching of QoS profiles for both provided and required interfaces.

- A ranking algorithm that aggregates heterogeneous QoS metrics into a single distance score (smaller is better), facilitating automated component selection.

- Empirical validation in a realistic multimedia domain, demonstrating practical benefits over traditional ad‑hoc methods.

The authors conclude that integrating a QoS ontology into the component selection pipeline mitigates semantic heterogeneity, supports automated reasoning, and provides a scalable foundation for future extensions such as dynamic ontology updates, more complex non‑linear QoS models, and application to cloud‑based micro‑service ecosystems.
