Evaluation of Huffman and Arithmetic Algorithms for Multimedia Compression Standards

Compression is a technique to reduce the quantity of data without excessively reducing the quality of the multimedia data. The transmission and storage of compressed multimedia data is much faster and more efficient than original uncompressed multimedi…

Authors: Asadollah Shahbahrami, Ramin Bahrampour, Mobin Sabbaghi Rostami

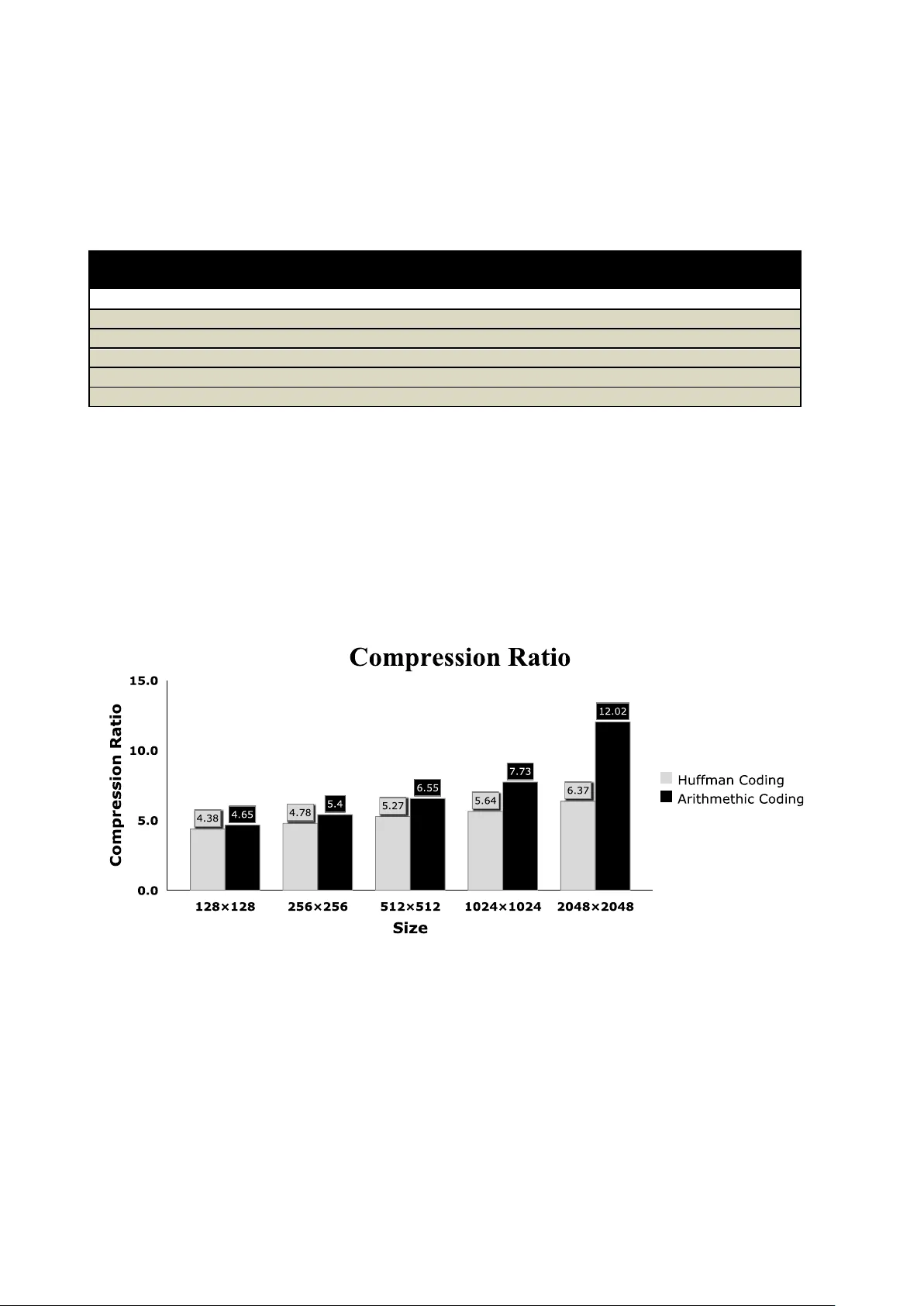

Asadollah Shahbahrami, Ramin Bahrampour, Mobin Sabbaghi Rostami, Mostafa Ayoubi Mobarhan
Department of Computer Engineering, Faculty of Engineering, University of Guilan, Rasht, Iran
E-mail: shahbahrami@guilan.ac.ir, ramin.fknr@gmail.com, mobin.sabbaghi@gmail.com, mostafa_finiks7@yahoo.com

Abstract

Compression is a technique to reduce the quantity of data without excessively reducing the quality of the multimedia data. The transmission and storage of compressed multimedia data is much faster and more efficient than that of the original uncompressed multimedia data. There are various techniques and standards for multimedia data compression, especially for image compression, such as the JPEG and JPEG 2000 standards. These standards consist of different functions such as color space conversion and entropy coding. Arithmetic and Huffman coding are normally used in the entropy coding phase. In this paper we try to answer the following question: which entropy coding, arithmetic or Huffman, is more suitable from the compression ratio, performance, and implementation points of view? We have implemented and tested the Huffman and arithmetic algorithms. Our results show that the compression ratio of arithmetic coding is better than that of Huffman coding, while the performance of Huffman coding is higher than that of arithmetic coding. In addition, the implementation of Huffman coding is much easier than that of arithmetic coding.

Keywords: Multimedia Compression, JPEG standard, Arithmetic coding, Huffman coding.

1 Introduction

Multimedia data, especially images, have been increasing every day. Because of their large size, storing and transmitting them is not easy, and they need large storage devices and high-bandwidth network systems.
In order to alleviate these requirements, compression techniques and standards such as JPEG, JPEG 2000, MPEG-2, and MPEG-4 have been proposed and used. To compress something means that you have a piece of data and you decrease its size [1, 2, 3, 4, 5]. JPEG is a well-known standardized image compression technique that loses information, so the decompressed picture is not the same as the original one. The degree of loss can be adjusted by setting the compression parameters. The JPEG standard is constructed from several functions such as DCT, quantization, and entropy coding. Huffman and arithmetic coding are the two most important entropy coding methods in image compression standards. In this paper, we aim to answer the following question: which entropy coding, arithmetic or Huffman, is more suitable from the compression ratio, performance, and implementation points of view? We have studied, implemented, and tested these algorithms using different image contents and sizes. Our experimental results show that the compression ratio of arithmetic coding is higher than that of Huffman coding, while the performance of Huffman coding is higher than that of arithmetic coding. In addition, the implementation complexity of Huffman coding is lower than that of arithmetic coding.

The rest of the paper is organized as follows. Section 2 describes the JPEG compression standard, and Sections 3 and 4 explain the Huffman and arithmetic algorithms, respectively. Section 5 discusses the implementation of the algorithms and the standard test images. Experimental results are explained in Section 6, followed by related work in Section 7. Finally, conclusions are drawn in Section 8.
2 The JPEG Compression Standard

JPEG is an image compression standard developed by the Joint Photographic Experts Group. It was formally accepted as an international standard in 1992. JPEG consists of a number of steps, each of which contributes to compression [3].

Figure 1. Block diagram of the JPEG encoder [3].

Figure 1 shows a block diagram for a JPEG encoder. If we reverse the arrows in the figure, we basically obtain a JPEG decoder. The JPEG encoder consists of the following main steps.

The first step is color space conversion. Many color images are represented using the RGB color space. RGB representations, however, are highly correlated, which implies that the RGB color space is not well suited for independent coding [29]. In addition, the human visual system is less sensitive to the position and motion of color than of luminance [6, 7]. Therefore, color space conversions such as RGB to YCbCr are used [29, 8].

The next step of the JPEG standard is the Discrete Cosine Transform (DCT). A DCT expresses a sequence of finitely many data points in terms of a sum of cosine functions oscillating at different frequencies. DCTs are an important part of numerous applications in science and engineering for the lossy compression of multimedia data [1, 3]. The DCT separates the image into parts of different frequencies. Higher frequencies represent quick changes between image pixels, and low frequencies represent gradual changes between image pixels. In order to perform the DCT on an image, the image should be divided into 8 × 8 or 16 × 16 blocks [9]. In order to keep only the important DCT coefficients, quantization is applied to the transformed block [10, 11]. After this step, zigzag scanning is used.
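The DCT and quantization steps described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names, the two test blocks, and the single uniform quantization step of 16 are assumptions made for illustration (JPEG itself uses an 8 × 8 table of step sizes).

```python
import math

N = 8  # block size used in the text


def dct_2d(block):
    """Orthonormal 2-D DCT-II of an N x N block given as a list of lists."""
    def scale(k):
        return math.sqrt(1.0 / N) if k == 0 else math.sqrt(2.0 / N)

    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            total = 0.0
            for x in range(N):
                for y in range(N):
                    total += (block[x][y]
                              * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                              * math.cos((2 * y + 1) * v * math.pi / (2 * N)))
            out[u][v] = scale(u) * scale(v) * total
    return out


def quantize(coeffs, step=16):
    """Uniform quantization; `step` is a hypothetical single step size."""
    return [[int(round(value / step)) for value in row] for row in coeffs]


# A block with no variation: only the DC coefficient survives.
flat_block = [[100] * N for _ in range(N)]
# A block that changes only along the columns: only the first row of
# coefficients (zero vertical frequency) can be nonzero.
ramp_block = [[16 * y for y in range(N)] for _ in range(N)]

flat_q = quantize(dct_2d(flat_block))
ramp_q = quantize(dct_2d(ramp_block))
```

For the flat block every quantized coefficient except the DC term is zero, which is the behavior that makes the subsequent zigzag scan and run-length coding effective.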
There are many runs of zeros in a quantized block, so the 8 × 8 blocks are reordered as single 64-element columns [4, 9]. We get a vector sorted by spatial frequency, which gives long runs of zeros. The DC coefficient is treated separately from the 63 AC coefficients. The DC coefficient is a measure of the average value of the 64 image samples [12].

Finally, in the last phases, coding algorithms such as Run Length Coding (RLC), Differential Pulse Code Modulation (DPCM), and entropy coding are applied. RLC is a simple and popular data compression algorithm [13]. It is based on the idea of replacing a long sequence of the same symbol by a shorter sequence. The DC coefficients are coded separately from the AC ones. A DC coefficient is coded by DPCM, which is a lossless data compression technique, while the AC coefficients are coded using the RLC algorithm. The DPCM algorithm records the difference between the DC coefficients of the current block and the previous block [14]. Since there is usually a strong correlation between the DC coefficients of adjacent 8 × 8 blocks, this results in a set of similar numbers with high occurrence [15]. DPCM conducted on pixels with correlation between successive samples leads to good compression ratios [16].

Entropy coding achieves additional compression by encoding the quantized DCT coefficients more compactly based on their statistical characteristics. Entropy coding is a critical step of the JPEG standard, as the results of all previous steps depend on it, and it is important which algorithm is used [17]. The JPEG proposal specifies two entropy coding algorithms, Huffman [18] and arithmetic coding [19].
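The zigzag reordering, the DPCM coding of DC coefficients, and the run-length coding of AC coefficients described above can be sketched as follows. This is a simplified illustration in Python, not the JPEG bitstream format: the function names and sample values are hypothetical, and the run-length coder emits plain (zero_run, value) pairs with an end-of-block marker, without the run-length limits of the real standard.

```python
def zigzag_order(n=8):
    """Return the (row, col) positions of an n x n block in zigzag order."""
    def key(position):
        row, col = position
        diagonal = row + col
        # Odd anti-diagonals are walked with increasing row,
        # even anti-diagonals with decreasing row.
        return (diagonal, row if diagonal % 2 else -row)
    return sorted(((r, c) for r in range(n) for c in range(n)), key=key)


def dpcm_encode(dc_values):
    """Replace each DC value by its difference from the previous block's DC."""
    previous = 0  # predictor used for the first block
    diffs = []
    for dc in dc_values:
        diffs.append(dc - previous)
        previous = dc
    return diffs


def dpcm_decode(diffs):
    """Invert dpcm_encode by accumulating the differences."""
    total, values = 0, []
    for diff in diffs:
        total += diff
        values.append(total)
    return values


def run_length_encode(ac_values):
    """Encode AC values as (zero_run, value) pairs followed by 'EOB'."""
    pairs, run = [], 0
    for value in ac_values:
        if value == 0:
            run += 1
        else:
            pairs.append((run, value))
            run = 0
    pairs.append("EOB")  # trailing zeros are implied by the end-of-block marker
    return pairs


# A hypothetical quantized block with only four nonzero coefficients.
block = [[0] * 8 for _ in range(8)]
block[0][0], block[0][1], block[1][0], block[1][1] = 50, -3, 2, 1

scan = [block[r][c] for r, c in zigzag_order()]
ac_pairs = run_length_encode(scan[1:])       # the 63 AC coefficients
dc_diffs = dpcm_encode([50, 52, 51, 51])     # DC values of four adjacent blocks
```

The 63 AC values collapse to three pairs plus the end-of-block marker, and the correlated DC values become small differences, which is what the entropy coder then exploits.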
In order to determine which entropy coding is suitable from the performance, compression ratio, and implementation points of view, we focus on the mentioned algorithms in this paper.

3 Huffman Coding

In computer science and information theory, Huffman coding is an entropy encoding algorithm used for lossless data compression [9]. The term refers to the use of a variable-length code table for encoding a source symbol (such as a character in a file), where the variable-length code table has been derived in a particular way based on the estimated probability of occurrence of each possible value of the source symbol. Huffman coding is based on the frequency of occurrence of a data item. The principle is to use a lower number of bits to encode the data that occurs more frequently [1]. The average length of a Huffman code depends on the statistical frequency with which the source produces each symbol from its alphabet. A Huffman code dictionary [3], which associates each data symbol with a codeword, has the property that no codeword in the dictionary is a prefix of any other codeword in the dictionary [20]. The basis for this coding is a code tree according to Huffman, which assigns short codewords to frequently used symbols and long codewords to rarely used symbols. For both DC and AC coefficients, each symbol is encoded with a variable-length code from the Huffman table set assigned to the 8 × 8 block's image component. Huffman codes must be specified externally as an input to JPEG encoders. Note that the form in which Huffman tables are represented in the data stream is an indirect specification, from which the decoder must construct the tables themselves prior to decompression [4]. The algorithm for building the encoding starts with each symbol as a single-node tree, that is, a leaf that is also a root; these trees are then merged step by step. The flowchart of the Huffman algorithm is depicted in Figure 2.

Figure 2. The flowchart of the Huffman algorithm.
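The merge procedure of Figure 2 can be sketched in Python with a priority queue. This is an illustrative sketch, not the authors' Matlab code: `huffman_codes` is a hypothetical name, the frequencies are those of the worked example in Table 1, and the labeling of branches (0 for the first-popped subtree, 1 for the second) is one convention among several that yield equally short codes.

```python
import heapq
from itertools import count


def huffman_codes(frequencies):
    """Build a prefix code from a {symbol: frequency} mapping.

    Symbols must not themselves be tuples, because tuples are used
    internally to represent merged subtrees.
    """
    tie = count()  # tie-breaker so the heap never has to compare subtrees
    heap = [(freq, next(tie), symbol) for symbol, freq in frequencies.items()]
    heapq.heapify(heap)
    if len(heap) == 1:  # degenerate alphabet: give the only symbol one bit
        return {heap[0][2]: "0"}
    while len(heap) > 1:
        # Merge the two least frequent subtrees into one subgroup.
        freq_a, _, left = heapq.heappop(heap)
        freq_b, _, right = heapq.heappop(heap)
        heapq.heappush(heap, (freq_a + freq_b, next(tie), (left, right)))

    codes = {}

    def walk(node, prefix):
        if isinstance(node, tuple):
            walk(node[0], prefix + "0")
            walk(node[1], prefix + "1")
        else:
            codes[node] = prefix

    walk(heap[0][2], "")
    return codes


# Symbol frequencies of the paper's example (Table 1).
table_1 = {0: 100, 2: 10, 14: 9, 136: 7, 222: 5}
codes = huffman_codes(table_1)
total_bits = sum(table_1[s] * len(codes[s]) for s in table_1)
```

With these frequencies the most frequent symbol receives a one-bit codeword and the four rare symbols receive three bits each, so the 131 symbols need 193 bits instead of the 393 bits of a fixed three-bit code.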
Steps of the flowchart in Figure 2:
1. Start: arrange the source symbols in descending order of probability.
2. Merge the two symbols with the lowest probabilities into one subgroup.
3. Assign zero and one to the top and bottom branches, respectively.
4. Is there more than one unmerged node? If yes (Y), repeat the merge.
5. If no (N), stop: read the transition bits on the branches from top to bottom to generate the codewords. End.

In order to clarify this algorithm, we give an example. We suppose that a list consists of the symbols 0, 2, 14, 136, and 222. Their occurrences are depicted in Table 1. As this table shows, symbol 0 occurs 100 times in the mentioned list. The Huffman tree and the final codes are shown in Figure 3 and Table 2 [21, 3]. As can be seen in Table 2, the minimum number of bits, which is assigned to the symbol with the largest number of occurrences, is one bit: bit 1 is assigned to symbol 0. This means that we cannot assign fewer than one bit to that symbol. This is the main limitation of Huffman coding. To overcome this problem, arithmetic coding is used, which is discussed in the following section.

4 Arithmetic Coding

Arithmetic coding assigns a sequence of bits to a message, a string of symbols. Arithmetic coding can treat all the symbols in a list or in a message as one unit [22]. Unlike Huffman coding, arithmetic coding does not use a discrete number of bits for each symbol. The number of bits used to encode each symbol varies according to the probability assigned to that symbol. Low-probability symbols use many bits, and high-probability symbols use fewer bits [23]. The main idea behind arithmetic coding is to assign each symbol an interval. Starting with the interval [0, 1), each interval is divided into several subintervals, whose sizes are proportional to the current probabilities of the corresponding symbols [24]. The subinterval of the coded symbol is then taken as the interval for the next symbol.
The output is the interval of the last symbol [1, 3]. The arithmetic coding algorithm is shown in the following.

    BEGIN
      low = 0.0; high = 1.0; range = 1.0;
      while (symbol != terminator) {
        get(symbol);
        high = low + range * Range_high(symbol);  // computed from the previous low
        low  = low + range * Range_low(symbol);
        range = high - low;
      }
      output a code so that low <= code < high;
    END. [3]

Table 1. Input symbols with their frequency.

| Symbols | Frequency |
|---|---|
| 0 | 100 |
| 2 | 10 |
| 14 | 9 |
| 136 | 7 |
| 222 | 5 |

Table 2. Sequence of symbols and codes that are sent to the decoders.

| Symbols | Code | Frequency |
|---|---|---|
| 0 | 1 | 100 |
| 2 | 011 | 10 |
| 14 | 010 | 9 |
| 136 | 001 | 7 |
| 222 | 000 | 5 |

Figure 3. Process of building the Huffman tree (leaves 222 (5), 136 (7), 14 (9), 2 (10), and 0 (100); merged nodes 12, 19, 31, and 131).

Figure 4 depicts the flowchart of the arithmetic coding.

Figure 4. The flowchart of the arithmetic algorithm. Boxes of the flowchart: Start; Lower endpoint = 0, Upper endpoint = 1; Interval size = upper endpoint - lower endpoint; Start with the first symbol of the alphabet (x = 0); Upper endpoint = Lower endpoint + interval size * p(x); Does the codeword fall into the current interval? (Y/N); Lower endpoint = upper endpoint, continue with the next symbol from the alphabet (x++); Write symbol (x); Load significant digits to codeword, if required; Is another symbol available? (Y/N); End.

In order to clarify the arithmetic coding, we explain the previous example using this algorithm. Table 3 depicts the probability of each symbol and its range between 0 and 1. We suppose that the input message consists of the following symbols: 2 0 0 136 0, processed from left to right. Figure 5 depicts a graphical explanation of the arithmetic algorithm for this message. As can be seen, the first probability range is 0.63 to 0.74 (Table 3) because the first symbol is 2. The encoded interval for the mentioned example is [0.6607, 0.66303).
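The interval-narrowing loop can be tried out with a short Python sketch. It is an illustration under stated assumptions rather than the authors' code: `encode_interval` and `decode` are hypothetical names, the symbol ranges are copied from Table 3, exact rational arithmetic (`fractions.Fraction`) stands in for the finite-precision arithmetic a practical coder would use, and the decoder is told the message length instead of relying on a terminator symbol.

```python
from fractions import Fraction

# Cumulative ranges [low, high) of each symbol, as listed in Table 3.
RANGES = {
    0: (Fraction("0"), Fraction("0.63")),
    2: (Fraction("0.63"), Fraction("0.74")),
    14: (Fraction("0.74"), Fraction("0.84")),
    136: (Fraction("0.84"), Fraction("0.94")),
    222: (Fraction("0.94"), Fraction("1")),
}


def encode_interval(message, ranges=RANGES):
    """Narrow [0, 1) once per symbol and return the final (low, high)."""
    low, high = Fraction(0), Fraction(1)
    for symbol in message:
        width = high - low
        sym_low, sym_high = ranges[symbol]
        high = low + width * sym_high  # uses the low of the previous step
        low = low + width * sym_low
    return low, high


def decode(code, length, ranges=RANGES):
    """Recover `length` symbols from any number inside the final interval."""
    symbols = []
    for _ in range(length):
        for symbol, (sym_low, sym_high) in ranges.items():
            if sym_low <= code < sym_high:
                symbols.append(symbol)
                # Rescale the code back to [0, 1) for the next symbol.
                code = (code - sym_low) / (sym_high - sym_low)
                break
    return symbols


message = [2, 0, 0, 136, 0]
low, high = encode_interval(message)
recovered = decode((low + high) / 2, len(message))
```

Evaluated exactly, the Table 3 ranges give an interval of roughly [0.66667, 0.66942) for the message 2 0 0 136 0. This does not coincide with the printed interval [0.6607, 0.66303); one possible explanation is that the endpoints shown in Figure 5 were rounded at intermediate steps, although the paper does not say so. Any number inside the computed interval decodes back to the original message.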
A sequence of bits is then assigned to a number located in the encoded interval. Referring to Figures 2 and 4 and considering the examples discussed in Figures 3 and 5, we can say that the implementation complexity of arithmetic coding is higher than that of Huffman coding. We observed this behavior in the programming too.

Table 3. Probability and range distribution of symbols.

| Symbols | Probability | Range |
|---|---|---|
| 0 | 0.63 | [0, 0.63) |
| 2 | 0.11 | [0.63, 0.74) |
| 14 | 0.1 | [0.74, 0.84) |
| 136 | 0.1 | [0.84, 0.94) |
| 222 | 0.06 | [0.94, 1.0) |

Input symbols: 2 0 0 136 0
Output: [0.6607, 0.66303)

Figure 5. Graphical display of shrinking ranges.

5 Implementation of the Algorithms

We have implemented the Huffman and arithmetic algorithms using Matlab programming tools. We executed the implemented programs on a platform whose specification is given in Table 4.

Table 4. Specification of the platform system that has been used for execution of the programs.

| Item | Value |
|---|---|
| Laptop | DELL XPS 1558 |
| RAM | DDR3 - 4GB |
| Processor Type | i7-820QM |
| Number of Cores of Processor | 4 |
| Clock Speed of Processor | 1.73 GHz |
| Cache of Processor | 8 MB |
| Operating System | Windows 7 - 64bit |

A part of the implemented code is depicted in Figure 6. We executed and tested both codes on many standard and well-known images such as the "Lena" image. These standard test images have been used by different researchers [25, 26, 27, 28] in image compression and image applications. We use different image sizes: 128×128, 256×256, 512×512, 1024×1024, and 2048×2048. The same inputs are used for both algorithms.

Figure 6. The code segments of the entropy coding.
    %*********** Start Huffman Coding
    for time = 1:100
        tic
        % Collect the distinct symbols of the input into VECTOR_HUFF
        k = 0;
        VECTOR_HUFF(1) = V(1);
        for l = 1:m
            a = 0;
            for q = 1:k
                if (VECTOR(l) == VECTOR_HUFF(q))
                    a = a + 1;
                end
            end
            if (a == 0)
                k = k + 1;
                VECTOR_HUFF(k) = V(l);
            end
        end
        % Count the occurrences of each distinct symbol
        for u = 1:k
            a = 0;
            for l = 1:m
                if (V(l) == VECTOR_HUFF(u))
                    a = a + 1;
                end
                VECTOR_HUFF_NUM(u) = a;
            end
        end
        % Symbol probabilities (m1 is defined outside this fragment)
        for i = 1:k
            P(i) = VECTOR_HUFF_NUM(i) / (m1);
        end
        dict = huffmandict(VECTOR_HUFF, P);
        hcode = huffmanenco(VECTOR, dict);
        [f1, f2] = size(hcode);
        Compression_ratio = b0 / f2
        toc
    end

    %********* Start Arithmetic Coding
    for time = 1:100
        tic
        % Collect the distinct symbols of the input into VECTOR_ARITH
        k = 0;
        VECTOR_ARITH(1) = V(1);
        for l = 1:m
            a = 0;
            for q = 1:k
                if (V(l) == VECTOR_ARITH(q))
                    a = a + 1;
                end
            end
            if (a == 0)
                k = k + 1;
                VECTOR_ARITH(k) = V(l);
            end
        end
        % Count occurrences and map each sample to its symbol index
        for u = 1:k
            a = 0;
            for l = 1:m
                if (V(l) == VECTOR_ARITH(u))
                    a = a + 1;
                    Varith(l) = u;
                end
                VECTOR_ARITH_NUM(u) = a;
            end
        end
        code = arithenco(Varith, VECTOR_ARITH_NUM);
        [f1, f2] = size(code);
        Compression_ratio = b0 / f2
        toc
    end

6 Experimental Results

The experimental results of the implemented algorithms, Huffman and arithmetic coding, for compression ratio and execution time are depicted in Table 5. As this table shows, on the one hand, the compression ratio of arithmetic coding is higher than that of Huffman coding for all image sizes. On the other hand, arithmetic coding needs more execution time than Huffman coding. This means that the high compression ratio of the arithmetic algorithm is not free: it needs more resources than the Huffman algorithm.
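The two comparison columns of Table 5 can be cross-checked from the reported ratios and times. The Python sketch below assumes that each comparison entry is the relative gap (arithmetic - Huffman) / arithmetic, truncated to a whole percentage; this formula is an inferred reading and is not stated in the paper, and the names `TABLE_5` and `relative_gap` are hypothetical.

```python
# Rows of Table 5:
# (image size, Huffman ratio, arithmetic ratio, Huffman time [s], arithmetic time [s])
TABLE_5 = [
    ("2048 x 2048", 6.37, 12.02, 32.67, 63.22),
    ("1024 x 1024", 5.64, 7.73, 8.42, 20.37),
    ("512 x 512", 5.27, 6.55, 2.13, 5.67),
    ("256 x 256", 4.78, 5.40, 0.55, 1.63),
    ("128 x 128", 4.38, 4.65, 0.14, 0.45),
]


def relative_gap(huffman, arithmetic):
    """Assumed formula: gap relative to the arithmetic value, truncated to a percent."""
    return int(100 * (arithmetic - huffman) / arithmetic)


compression_gap = [relative_gap(row[1], row[2]) for row in TABLE_5]
time_gap = [relative_gap(row[3], row[4]) for row in TABLE_5]
```

Under this assumption the recomputed values agree with the printed comparison columns in nine of the ten entries; the execution-time entry for 512 × 512 comes out as 62 rather than the printed 59.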
Table 5. Average of compression results on test image set.

| Test Image Size | Compression Ratio (bits/sample), Huffman | Compression Ratio (bits/sample), Arithmetic | Execution Time (seconds), Huffman | Execution Time (seconds), Arithmetic | Comparison Arithmetic to Huffman (%), Compression | Comparison Arithmetic to Huffman (%), Time |
|---|---|---|---|---|---|---|
| 2048 × 2048 | 6.37 | 12.02 | 32.67 | 63.22 | 47 | 48 |
| 1024 × 1024 | 5.64 | 7.73 | 8.42 | 20.37 | 27 | 58 |
| 512 × 512 | 5.27 | 6.55 | 2.13 | 5.67 | 19 | 59 |
| 256 × 256 | 4.78 | 5.40 | 0.55 | 1.63 | 11 | 66 |
| 128 × 128 | 4.38 | 4.65 | 0.14 | 0.45 | 5 | 68 |

Another behavior that can be seen in Table 5 is that, as the image size increases from 128×128 to 2048×2048, the compression ratio of arithmetic coding improves more than that of Huffman coding. For instance, the compression ratio of the Huffman algorithm for image sizes of 1024×1024 and 2048×2048 is 5.64 and 6.37, respectively, while for arithmetic coding it is 7.73 and 12.02, respectively. Figures 7 and 8 depict a comparison of the compression ratio and the execution time, respectively, for the arithmetic and Huffman algorithms. In other words, these figures are another representation of the results presented in Table 5.

Figure 7. Comparison of compression ratio for Huffman and arithmetic algorithms using different image sizes.

7 Related Work

Huffman [18] in 1952 proposed an elegant sequential algorithm which generates optimal prefix codes in O(n log n) time. The algorithm actually needs only linear time provided that the frequencies of appearance are sorted in advance. There has been extensive research on the analysis, implementation issues, and improvements of Huffman coding theory in a variety of applications [31, 32]. In [33], a two-phase parallel algorithm for time-efficient construction of Huffman codes has been proposed. A new multimedia functional unit for general-purpose processors has been proposed in [34] in order to increase the performance of Huffman coding.

Texts are always compressed with lossless compression algorithms.
This is because a loss in a text will change its original meaning. Repeated data is important in text compression. If a text has many repeated data, it can be compressed to a high ratio. This is due to the fact that compression algorithms generally eliminate repeated data. In order to evaluate the compression algorithms on text data, a comparison between the arithmetic and Huffman coding algorithms for different text files with different sizes has been performed in [30]. Experimental results showed that the compression ratio of arithmetic coding for text files is better than that of Huffman coding, while the performance of Huffman coding is better than that of arithmetic coding.

8 Conclusions

Compression is an important technique in the multimedia computing field. This is because we can reduce the size of data, and transmitting and storing the reduced data over the Internet and on storage devices is faster and cheaper than for uncompressed data. Many image and video compression standards such as JPEG, JPEG 2000, MPEG-2, and MPEG-4 have been proposed and implemented. In almost all of them, entropy coding with the arithmetic and Huffman algorithms is used. In other words, these algorithms are important parts of the multimedia data compression standards. In this paper we have focused on these algorithms in order to clarify their differences from different points of view such as implementation, compression ratio, and performance. We have explained these algorithms in detail, and implemented and tested them using different image sizes and contents. From the implementation point of view, Huffman coding is easier than arithmetic coding. The arithmetic algorithm yields a much higher compression ratio than the Huffman algorithm, while Huffman coding needs less execution time than arithmetic coding.
This means that in applications where time is not so important we can use the arithmetic algorithm to achieve a high compression ratio, while for applications where time is important, such as real-time applications, the Huffman algorithm can be used. In order to achieve much higher performance compared to a software implementation, both algorithms can be implemented on a hardware platform such as FPGAs using parallel processing techniques. This is our future work.

Figure 8. Comparison of performance for Huffman and arithmetic algorithms using different image sizes.

References

[1] Sharma, M.: 'Compression Using Huffman Coding'. International Journal of Computer Science and Network Security, Vol. 10, No. 5, May 2010.
[2] Wiseman, Y.: 'Take a Picture of Your Tire!'. Computer Science Department, Holon Institute of Technology, Israel.
[3] Li, Z., and Drew, M. S.: 'Fundamental of Multimedia', School of Computing Science, Fraser University, 2004.
[4] Gregory, K.: 'The JPEG Still Picture Compression Standard'. Wallace Multimedia Engineering, Digital Equipment Corporation, Maynard, Massachusetts, December 1991.
[5] M. Mansi, K. and M. Shalini B.: 'Comparison of Different Fingerprint Compression Techniques'. Signal and Image Processing: An International Journal (SIPIJ), Vol. 1, No. 1, September 2010.
[6] Shilpa, S. D. and DR. Sanjayl. N.: 'Image Compression Based on IWT, IWPT and DPCM-IWPT'. International Journal of Engineering Science and Technology, Vol. 2 (12), 2010, 7413-7422.
[7] Wang, J., Min, K., Jeung, Y. C. and Chong, J. W.: 'Improved BTC Using Luminance Bitmap for Color Image Compression'. IEEE Image and Signal Processing, 2009.
[8] Mateos, J., Ilia, C., Jimenez, B., Molina, R. and Katsaggelos, A. K.: 'Reduction of Blocking Artifacts in Block Transformed Compressed Color Images'.
[9] O'Hanen, B., and Wisan M.: 'JPEG Compression'. December 16, 2005.
[10] Li, J., Koivusaari, J., Takalal, J., Gabbouj, M., and Chen, H.: 'Human Visual System Based Adaptive Inter Quantization'. Department of Information Technology, Tampere University of Technology, Tampere, FI-33720, Finland; School of Communication Engineering, Jilin University, Changchun, China.
[11] Garg, M.: 'Performance Analysis of Chrominance Red and Chrominance Blue in JPEG'. World Academy of Science, Engineering and Technology 43, 2008.
[12] Ganvir, N. N., Jadhav, A. D., and Scoe, P.: 'Explore the Performance of the ARM Processor Using JPEG'. International Journal on Computer Science and Engineering, Vol. 2(1), 2010, 12-17.
[13] Baik, H., Sam Ha, D., Yook, H. G., Shin, S. C. and Park, M. S.: 'Selective Application of Burrows-Wheeler Transformation for Enhancement of JPEG Entropy Coding'. International Conference on Information, Communications & Signal Processing, December 1999, Singapore.
[14] Blelloch, G.: 'Introduction to Data Compression'. Carnegie Mellon University, September 2010.
[15] Dubey, R. B., and Gupta, R.: 'High Quality Image Compression'. Asst. Prof, E & IE, APJ College of Eng., Sohna, Gurgaon, 121003, India, Mar 2011.
[16] Klein, S. T., and Wiseman, Y.: 'Parallel Huffman Decoding with Applications to JPEG Files'. The Computer Journal, 46 (5), British Computer Society, 2003.
[17] Rosenthal, J.: 'JPEG Image Compression Using an FPGA'. University of California, December 2006.
[18] Huffman, D. A.: 'A Method for the Construction of Minimum Redundancy Codes', Proc. IRE, Vol. 40, No. 9, pp. 1098-1101, September 1952.
[19] Pennebaker, W. B. and Mitchell, J. L.: 'Arithmetic Coding Articles', IBM J. Res. Dev., Vol. 32, 1988, pp. 717-774.
[20] Mitzenmacher, M.: 'On the Hardness of Finding Optimal Multiple Preset Dictionaries'. IEEE Transaction on Information Theory, Vol. 50, No. 7, July 2004.
[21] Wong, J., Tatikonda M., and Marczewski, J.: 'JPEG Compression Algorithm Using CUDA Course'. Department of Computer Engineering, University of Toronto.
[22] Rissanen, J. J. and Langdon, G. G.: 'Arithmetic Coding'. IBM Journal of Research and Development, 23(2):146-162, March 1979.
[23] Redmill, D. W. and Bull, D. R.: 'Error Resilient Arithmetic Coding of Still Images'. Image Communications Group, Centre for Communications Research, University of Bristol, Bristol.
[24] Kavitha, V. and Easwarakumar, K. S.: 'Enhancing Privacy in Arithmetic Coding'. ICGST-AIML Journal, Volume 8, Issue I, June 2008.
[25] Kao, Ch., H, and Hwang, R. J.: 'Information Hiding in Lossy Compression Gray Scale Image', Tamkang Journal of Science and Engineering, Vol. 8, No 2, 2005, pp. 99-108.
[26] Ueno, H., and Morikawa, Y.: 'A New Distribution Modeling for Lossless Image Coding Using MMAE Predictors'. The 6th International Conference on Information Technology and Applications, 2009.
[27] Grgic, S., Mrak, M., and Grgic, M.: 'Comparison of JPEG Image Coders'. University of Zagreb, Faculty of Electrical Engineering and Computing, Unska 3 / XII, HR-10000 Zagreb, Croatia.
[28] http://sipi.usc.edu, accessed Mar 2011.
[29] Shahbahrami, A., Juurlink, B.H.H., Vassiliadis, S.: 'Accelerating Color Space Conversion Using Extended Subwords and the Matrix Register File', Eighth IEEE International Symposium on Multimedia, pp. 37-46, San Diego, USA, December 2006.
[30] Jafari, A. and Rezvan, M. and Shahbahrami, A.: 'A Comparison Between Arithmetic and Huffman Coding Algorithms', The 6th Iranian Machine Vision and Image Processing Conference, pp. 248-254, October 2010.
[31] Buro, M.: 'On the maximum length of Huffman codes', Information Processing Letters, Vol. 45, No. 5, pp. 219-223, April 1993.
[32] Chen, H. C. and Wang, Y. L. and Lan, Y. F.: 'A Memory Efficient and Fast Huffman Decoding Algorithm', Information Processing Letters, Vol. 69, No. 3, pp. 119-122, February 1999.
[33] Ostadzadeh, S. A. and Elahi, B. M. and Zeialpour, Z. T. and Moulavi, M. M. and Bertels, K. L. M.: 'A Two Phase Practical Parallel Algorithm for Construction of Huffman Codes', Proceedings of International Conference on Parallel and Distributed Processing Techniques and Applications, pp. 284-291, Las Vegas, USA, June 2007.
[34] Wong, S. and Cotofana, D. and Vassiliadis, S.: 'General-Purpose Processor Huffman Encoding Extension', Proceedings of the International Conference on Information Technology: Coding and Computing (ITCC 2000), pp. 158-163, Las Vegas, Nevada, March 2000.
