Show Me Your Cookie And I Will Tell You Who You Are

With the success of Web applications, most of our data is now stored on various third-party servers where it is processed to deliver personalized services. Naturally we must be authenticated to access this personal information, but the use of personalized services only restricted by identification could indirectly and silently leak sensitive data. We analyzed Google Web Search access mechanisms and found that the current policy applied to session cookies could be used to retrieve users’ personal data. We describe an attack scheme leveraging the search personalization (based on the same SID cookie) to retrieve a part of the victim’s click history and even some of her contacts. We implemented a proof of concept of this attack on Firefox and Chrome Web browsers and conducted an experiment with ten volunteers. Thanks to this prototype we were able to recover up to 80% of the user’s search click history.

💡 Research Summary

The paper “Show Me Your Cookie And I Will Tell You Who You Are” investigates a privacy-breaking attack that exploits Google’s session cookie named “sid”. Google uses a two-level cookie model: the “ssid” cookie is secure, transmitted only over HTTPS, and is required for accessing user data such as Gmail, Calendar, and Contacts. In contrast, the “sid” cookie is a non-secure session identifier that is sent to any *.google.com service over both HTTP and HTTPS, so it travels in clear text whenever the request is plain HTTP. An attacker who can observe traffic on an insecure network (public Wi-Fi, a rogue access point, or a compromised router) can therefore capture the sid cookie.
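The difference between the two cookies comes down to the standard `Secure` cookie attribute. The following is a minimal sketch of that rule, not Google's actual configuration: the `Cookie` class, the `cookies_sent` helper, and the cookie domain are illustrative assumptions, and the domain matching is simplified.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass(frozen=True)
class Cookie:
    name: str
    domain: str   # assumed cookie domain; matches the domain and its subdomains
    secure: bool  # True -> only attached to HTTPS requests


def cookies_sent(url: str, jar: list[Cookie]) -> list[str]:
    """Return the names of the cookies a browser would attach to `url`."""
    parts = urlsplit(url)
    host = parts.hostname or ""
    sent = []
    for c in jar:
        domain_ok = host == c.domain or host.endswith("." + c.domain)
        scheme_ok = parts.scheme == "https" or not c.secure
        if domain_ok and scheme_ok:
            sent.append(c.name)
    return sent


# Hypothetical jar mirroring the two-level model described above.
jar = [
    Cookie("SID", "google.com", secure=False),
    Cookie("SSID", "google.com", secure=True),
]

print(cookies_sent("http://www.google.com/search?q=test", jar))  # ['SID']
print(cookies_sent("https://mail.google.com/", jar))             # ['SID', 'SSID']
```

Only the non-secure cookie accompanies the plain HTTP request, which is why a passive observer on the same network can read it.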

Once the sid cookie is obtained, the attacker can impersonate the victim for any Google service that relies solely on identification, without needing full authentication. The authors focus on Google Web Search, which personalizes results based on the user’s search history, visited links, and social connections (Google+, Gmail contacts, etc.). Google’s search interface provides two useful filters: “Visited” (shows only results the user has previously clicked) and “Social” (highlights pages shared by the user’s contacts). By enabling these filters, the attacker can retrieve a list of URLs that the victim has actually visited, effectively leaking the victim’s click history and, through the Social filter, a subset of the victim’s contacts.

The attack proceeds as follows:

-

Cookie Capture – Using a network sniffer or a tool like Firesheep, the attacker captures the sid cookie when the victim accesses any unencrypted Google service (e.g., Google Search, Google Alerts). The paper also discusses how HTTPS‑Everywhere can be bypassed because not all Google services are available over HTTPS.

-

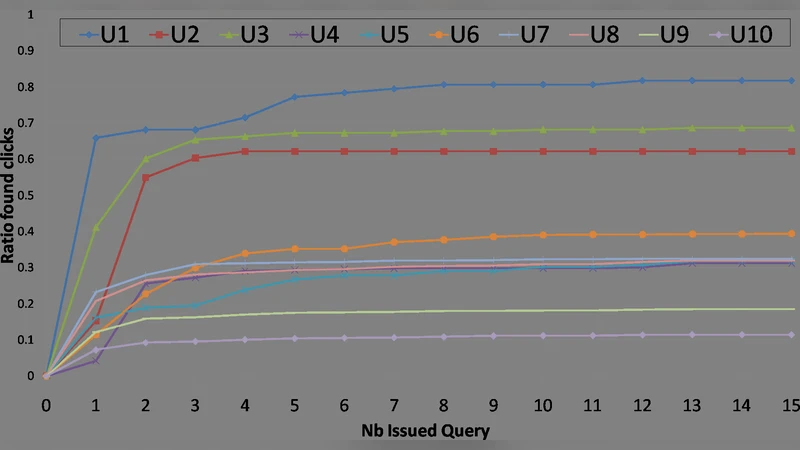

Automated Querying – With the stolen sid, the attacker opens a browser window on the victim’s account and issues a series of search queries while the “Visited” filter is active. The authors crafted a list of 15 generic terms consisting of common top‑level domain suffixes (.com, .net, .org, .us, .edu, .fr, .co), typical URL components (jsp, asp, php, html, index, www), and popular site names (google, facebook). These terms are highly likely to appear in the URLs of visited pages.

-

Result Parsing – The attacker’s script parses the first results page, clicks “Next” to retrieve subsequent pages, and continues until no more results are returned. By setting the “pref” cookie to display 100 results per page (a non‑secure preference cookie), the number of required queries is minimized.

-

Data Extraction – All URLs that appear in the filtered results are collected. Because the “Visited” filter removes unclicked links, the collected set directly corresponds to the victim’s click history. The “Social” filter can additionally reveal contacts and shared content.
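The querying and paging logic in the steps above can be illustrated with an offline simulation. This is a sketch under stated assumptions, not the authors' extension: `visited_filter` is a hypothetical stand-in that matches terms as plain substrings of an in-memory URL list (a real search engine matches differently), and the sample history is invented for illustration.

```python
# The 15 generic terms listed in the summary.
TERMS = [".com", ".net", ".org", ".us", ".edu", ".fr", ".co",
         "jsp", "asp", "php", "html", "index", "www",
         "google", "facebook"]


def visited_filter(history, term, page, per_page=100):
    """Mock 'Visited'-filtered search: one page of clicked URLs containing `term`."""
    matches = [u for u in history if term in u]
    start = page * per_page
    return matches[start:start + per_page]


def recover_history(history, terms=TERMS, per_page=100):
    """Query each term and page through the results until a page comes back empty."""
    recovered = set()
    for term in terms:
        page = 0
        while True:
            results = visited_filter(history, term, page, per_page)
            if not results:
                break
            recovered.update(results)
            page += 1
    return recovered


# Hypothetical click history, purely illustrative.
history = [
    "http://www.example.com/index.html",
    "http://news.example.org/story.php?id=7",
    "http://example.de/artikel/42",
    "http://intranet.example.io/report",
]

found = recover_history(history, per_page=2)
print(f"recovered {len(found)} of {len(history)} URLs "
      f"({100 * len(found) / len(history):.0f}%)")
# prints: recovered 2 of 4 URLs (50%)
```

URLs that contain none of the generic terms are never returned, which is one reason a fixed term list recovers only part of a history.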

The authors implemented a proof-of-concept as a Firefox extension built on top of Firesheep and a complementary Chrome extension that injects the sid cookie. They also released a measurement extension that extracts the victim’s actual Web Search History (from Google’s “My Activity” page) for comparison. Ten volunteers participated in the evaluation. Each participant had between 88 and 3,059 recorded search results between January and July 2011. The attack recovered between 72 and 467 links, representing 11% to 82% of each user’s total history. For the three users with the largest histories (1,340–3,059 clicks), the tool recovered roughly 628–644 links, i.e., about half of the total.

The experiment shows that, on average, 40% of a victim’s click history can be recovered with a modest number of queries; in the best cases, up to 80% is exposed. The attack is “destructive” in the sense that the automated queries appear in the victim’s search history and could alert the user. However, by limiting the number of queries (the authors needed only four queries that yielded no visited results), the attacker can stay largely unnoticed.

The paper discusses several mitigation strategies:

- Enforce HTTPS for all Google services, eliminating clear‑text transmission of the sid cookie.

- Require full authentication (ssid) for any request that returns personalized data, not just identification.

- Disable or restrict the “Visited” and “Social” filters for unauthenticated sessions.

- Educate users about the risks of enabling Web Search History and the existence of “opt‑out” mechanisms.
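The first two mitigations can be sketched on the server side with Python's standard `http.cookies` module. This is an illustrative sketch, not Google's implementation: `build_session_cookie` and `may_personalize` are hypothetical helpers, and the token values are placeholders.

```python
from http.cookies import SimpleCookie


def build_session_cookie(name: str, value: str) -> str:
    """Build a Set-Cookie header value with the Secure and HttpOnly attributes set."""
    cookie = SimpleCookie()
    cookie[name] = value
    cookie[name]["secure"] = True    # browser will not send it over plain HTTP
    cookie[name]["httponly"] = True  # not readable from page scripts
    cookie[name]["path"] = "/"
    return cookie[name].OutputString()


def may_personalize(scheme: str, cookies: dict[str, str]) -> bool:
    """Allow personalized results only for HTTPS requests carrying the secure cookie."""
    return scheme == "https" and "SSID" in cookies


print(build_session_cookie("SSID", "opaque-token"))
print(may_personalize("http", {"SID": "abc"}))                  # False
print(may_personalize("https", {"SID": "abc", "SSID": "xyz"}))  # True
```

With a check of this kind, a captured non-secure identifier alone would no longer be enough to obtain personalized results.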

In conclusion, the work demonstrates a concrete privacy breach that stems from the separation of authentication and identification in web services. A non‑secure session identifier, when combined with personalized search features, can be leveraged to reconstruct a substantial portion of a user’s browsing behavior and social graph without ever needing the user’s password. The authors argue that service designers must treat any identifier that grants access to personalized content as sensitive and protect it with the same rigor as authentication credentials.
