Universality of citation distributions revisited

Radicchi, Fortunato, and Castellano [arXiv:0806.0974, PNAS 105(45), 17268] claim that, apart from a scaling factor, all fields of science are characterized by the same citation distribution. We present a large-scale validation study of this universality-of-citation-distributions claim. Our analysis shows that claiming citation distributions to be universal for all fields of science is not warranted. Although many fields indeed seem to have fairly similar citation distributions, there are quite a few exceptions as well. We also briefly discuss the consequences of our findings for the measurement of scientific impact using citation-based bibliometric indicators.

💡 Research Summary

The paper revisits the claim made by Radicchi, Fortunato, and Castellano (2008) that citation distributions are universal across scientific fields, differing only by a scaling factor. To test this hypothesis, the authors assembled a comprehensive dataset comprising over 1.2 million papers published between 2005 and 2014, drawn from the Web of Science and Scopus databases. These papers were categorized into 22 distinct sub‑disciplines spanning natural sciences, engineering, medicine, and social sciences.

Because raw citation counts are heavily influenced by publication year and field size, the authors first normalized citations within each field-year combination. For each paper they computed a standardized citation score \( \hat{c} = (c - \mu_{f,y}) / \sigma_{f,y} \), where \( \mu_{f,y} \) and \( \sigma_{f,y} \) are the mean and standard deviation of citations for field \( f \) in year \( y \). This transformation puts all papers on a common scale, allowing direct comparison of distribution shapes.
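The standardization described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code: the function name `standardize_citations`, the `(field, year, citations)` tuple layout, the toy records, and the choice of the population (rather than sample) standard deviation are all assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean, pstdev


def standardize_citations(papers):
    """Return one z-score per paper, computed within its (field, year) group.

    `papers` is a list of (field, year, citations) tuples (hypothetical layout).
    A group whose citation counts are all equal has zero spread, so its
    papers get None instead of a score.
    """
    groups = defaultdict(list)
    for field, year, citations in papers:
        groups[(field, year)].append(citations)

    # Mean and population standard deviation per field-year group
    # (using the population form is an assumption of this sketch).
    stats = {key: (mean(vals), pstdev(vals)) for key, vals in groups.items()}

    scores = []
    for field, year, citations in papers:
        mu, sigma = stats[(field, year)]
        scores.append((citations - mu) / sigma if sigma > 0 else None)
    return scores


# Toy records, invented for illustration only.
papers = [
    ("chemistry", 2005, 0),
    ("chemistry", 2005, 4),
    ("chemistry", 2005, 8),
    ("education", 2005, 1),
    ("education", 2005, 3),
]
scores = standardize_citations(papers)
```

In the toy data, the chemistry paper with 4 citations sits exactly at its group mean and scores 0, while the education paper with 3 citations scores +1 even though its raw count is lower. That is the sense in which the scores become comparable across fields.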

Two complementary statistical approaches were employed to assess universality. The first was a Kolmogorov–Smirnov (KS) test comparing each field's normalized citation distribution to the aggregate distribution of all fields. A KS p-value greater than 0.05 was taken to mean that the hypothesis of a common underlying distribution could not be rejected. The second approach examined the heavy-tail behavior by fitting a Pareto (power-law) model to the top 5% of citations in log-log space, extracting the tail exponent \( \alpha \); a smaller \( \alpha \) corresponds to a heavier tail. Consistency of \( \alpha \) across fields would support a universal tail shape.
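Both checks can be illustrated with a short, standard-library-only Python sketch. It is not the procedure used in the paper. The function names are hypothetical, the p-value uses the asymptotic Kolmogorov series (only approximate for small samples and for heavily tied, discrete citation counts), and the tail exponent is obtained with the maximum-likelihood (Hill) estimator, swapped in here for the log-log fit mentioned above. The sketch also assumes that \( \alpha \) denotes the exponent of the probability density.

```python
import math
from bisect import bisect_right


def ks_statistic(sample_a, sample_b):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    d = 0.0
    for x in a + b:  # the gap is largest at one of the observed values
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d


def ks_pvalue(d, n, m, terms=100):
    """Asymptotic two-sided p-value from the Kolmogorov distribution."""
    lam = d * math.sqrt(n * m / (n + m))
    if lam < 0.2:  # the p-value is effectively 1 in this range
        return 1.0
    total = sum((-1) ** (j - 1) * math.exp(-2.0 * j * j * lam * lam)
                for j in range(1, terms + 1))
    return min(1.0, max(0.0, 2.0 * total))


def tail_exponent(values, top_fraction=0.05):
    """Hill (maximum-likelihood) estimate of alpha for a density ~ x**(-alpha),
    fitted to the largest `top_fraction` of the positive values."""
    xs = sorted(v for v in values if v > 0)
    if len(xs) < 2:
        raise ValueError("need at least two positive values")
    k = max(2, int(len(xs) * top_fraction))
    tail = xs[-k:]
    x_min = tail[0]  # smallest value in the tail acts as the cutoff
    log_sum = sum(math.log(x / x_min) for x in tail)
    if log_sum == 0.0:
        raise ValueError("tail values are all identical; exponent undefined")
    return 1.0 + len(tail) / log_sum


# Synthetic check: evenly spaced quantiles of a Pareto law with alpha = 3
# (survival function x**-2), so the estimate should land close to 3.
n = 20000
pareto = [(1.0 - (i + 0.5) / n) ** (-1.0 / 2.0) for i in range(n)]
alpha_hat = tail_exponent(pareto)

# A crudely shifted copy should be flagged as a different distribution.
shifted = [x + 5.0 for x in pareto]
d_shift = ks_statistic(pareto, shifted)
p_shift = ks_pvalue(d_shift, n, n)
```

With samples in the millions, as in the dataset described here, even small discrepancies between distributions produce very low KS p-values, so the statistic `d` itself is usually worth reporting alongside the p-value.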

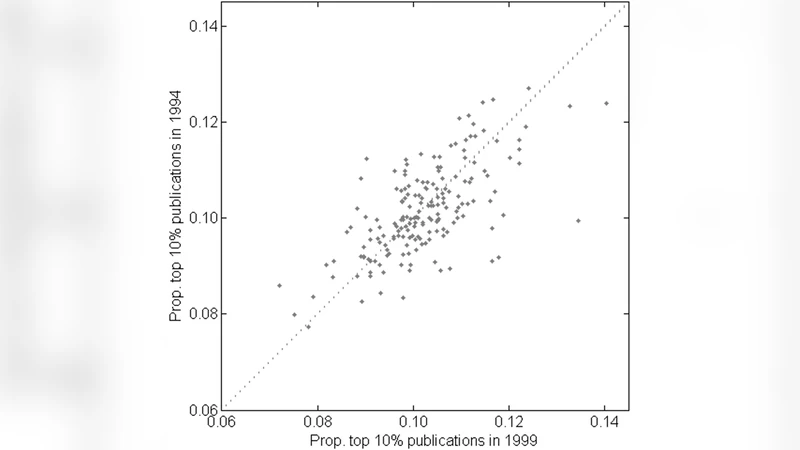

Results revealed a nuanced picture. In many natural-science domains, including chemistry, biology, and medicine, the KS tests failed to reject the null hypothesis (p > 0.05) and the Pareto exponents clustered between 2.8 and 3.2, indicating a high degree of similarity with the aggregate distribution. However, notable deviations emerged in several other areas. High-energy physics and theoretical physics displayed KS p-values below 0.01 and tail exponents around 2.2–2.4; the lower exponents reflect a markedly heavier tail and a concentration of citations on a small set of landmark papers. Engineering sub-fields such as electrical and mechanical engineering also showed significant KS differences (p < 0.05) and lower tail exponents (≈ 2.3–2.5), that is, heavier tails than the aggregate, suggesting distinct citation dynamics possibly driven by industry collaborations and conference-centric publishing.

Social‑science disciplines presented the greatest variability. Because average citation counts are lower, the normalization process amplified fluctuations, leading to KS p‑values often below 0.01 and Pareto exponents as low as 2.0 in fields like education studies and cultural anthropology. These findings underscore that citation practices, publication cultures, and collaboration structures differ substantially across the social sciences, undermining any blanket universality claim.

A temporal analysis further showed that papers published in the most recent five‑year window (2010–2014) have flatter normalized citation distributions and thinner tails compared with the full ten‑year span. This likely reflects the incomplete accumulation of citations for newer works rather than a fundamental shift in distribution shape.

The authors argue that while a “rough” universality—i.e., broadly similar distributional forms—holds for a majority of natural‑science fields, the existence of systematic outliers invalidates the stronger assertion that a single universal function describes all scientific citation behavior. Consequently, bibliometric indicators that rely on simple field‑average normalizations (e.g., field‑normalized impact factors, h‑index adjustments) may misrepresent impact in fields with atypical citation patterns. The paper recommends more sophisticated normalization schemes that incorporate field‑specific tail behavior, collaboration size, and publication venue characteristics.

In conclusion, the study provides a large‑scale empirical refutation of the absolute universality hypothesis. It demonstrates that citation distributions are “almost” universal only within a subset of disciplines, while substantial deviations exist in physics, engineering, and especially the social sciences. These insights have practical implications for research evaluation, funding allocation, and policy design, urging stakeholders to adopt nuanced, field‑aware metrics rather than relying on a one‑size‑fits‑all approach. Future work should explore finer‑grained sub‑field analyses, longitudinal dynamics, and the integration of alternative impact measures such as altmetrics and patent citations to build a more comprehensive understanding of scientific influence.
