E-Learning: An effective pedagogical tool for learning

In the info-tech age, E-Methods of learning are becoming the most important vehicle for disseminating knowledge in higher education institutions. This sector is growing and changing rapidly due to developments in technology. But teaching is an art. Can learning be fun with raw and dry technology? How can we make the best use of E-Methods? Can we make the required information and data available to students flexibly and easily at all times? What are the advantages of traditional methods of teaching and learning? Is E-learning a progressive stage incorporating all the benefits of manual learning, or is it only window dressing on the face of advancement? Can we convert boring, tedious subjects into interactive, monotony-breaking, joyous learning? In this paper the researchers focus on the modernization of E-Pedagogy vis-a-vis the traditional method of learning. They highlight the effectiveness of E-learning elements and various E-Methods. This work uses decision tree algorithms, particularly Classifiers.trees.J48. The results show that the online examination attribute plays a major role in increasing the average grade of the class in higher education. The novelty of this work is that the researchers focus on the teaching methodology used by faculty members and the tools available in universities. We believe that this work will play a constructive role in building the higher education system. The generated rules can be used by decision makers to improve higher education system processes.

💡 Research Summary

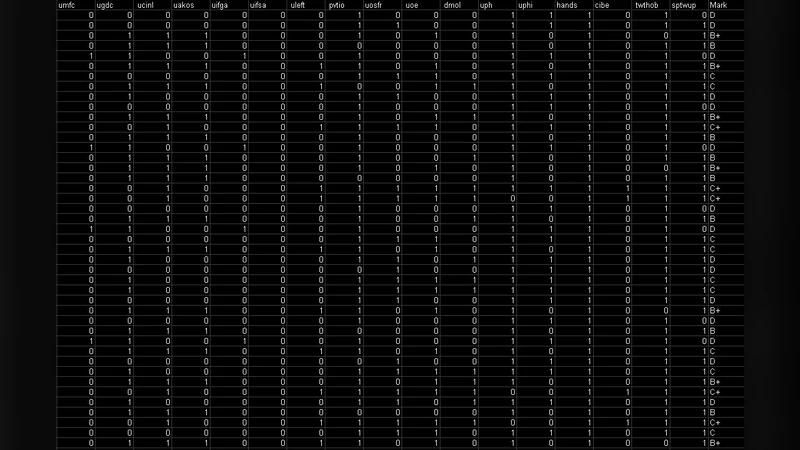

The paper titled “E‑Learning: An effective pedagogical tool for learning” investigates how electronic learning methods compare with traditional classroom instruction in higher education, focusing on the impact of various ICT tools on student performance. The authors collected data from a single, undisclosed university covering 139 courses offered in one semester. For each course, 25 binary attributes were recorded, indicating whether the instructor employed specific technologies such as PowerPoint, multimedia, physical models, email communication, web sites, online registration, online examinations, software use, computer‑lab usage, and others. The target variable was the final grade, categorized into six levels (D, D+, C, C+, B, B+).

To analyze the data, the authors applied the J48 decision‑tree algorithm (an implementation of C4.5) using the WEKA toolkit, with a 10‑fold cross‑validation scheme, a minimum leaf size of 2, and a confidence factor of 0.25. The resulting tree contained 17 nodes and 9 leaves, providing a compact set of if‑then rules that explain how combinations of technological practices relate to the observed grades.
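As a rough illustration of how a C4.5-style learner such as J48 ranks candidate attributes, the sketch below computes the gain ratio of a binary attribute with respect to the grade. It is a simplified sketch, not the WEKA implementation: pruning, the confidence factor, and the minimum leaf size are omitted, and the function names and the four toy rows are hypothetical, not taken from the paper's data.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def gain_ratio(rows, attribute, target="grade"):
    """Gain ratio of splitting `rows` on `attribute` (simplified C4.5 criterion)."""
    labels = [row[target] for row in rows]
    # Group the target labels by the value of the attribute.
    groups = {}
    for row in rows:
        groups.setdefault(row[attribute], []).append(row[target])
    # Weighted entropy remaining after the split.
    remainder = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    info_gain = entropy(labels) - remainder
    # Split information penalizes attributes that fragment the data.
    split_info = entropy([row[attribute] for row in rows])
    return info_gain / split_info if split_info > 0 else 0.0


# Hypothetical toy rows for illustration only (NOT the paper's dataset).
courses = [
    {"UOE": 1, "UCINL": 1, "grade": "B"},
    {"UOE": 1, "UCINL": 0, "grade": "B"},
    {"UOE": 0, "UCINL": 1, "grade": "D"},
    {"UOE": 0, "UCINL": 0, "grade": "D"},
]

uoe_score = gain_ratio(courses, "UOE")
ucinl_score = gain_ratio(courses, "UCINL")
```

In this toy example the attribute that separates the grades perfectly receives the higher score, which is the sense in which an attribute placed at the root of the tree is the most informative single split.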

The most salient finding is that the attribute “Using online examination” (UOE) appears at the root of the tree, indicating it is the strongest predictor of student grades. When UOE = 0 (no online exams), the next decisive factor is whether the instructor uses computers in the lab (UCINL). If both UOE and UCINL are absent, the model predicts the lowest grade (D). Introducing lab computers without online exams raises the predicted grade to D+ or B, depending on whether any software (UAKOS) is also used. When online examinations are employed (UOE = 1), the presence of email communication with the instructor (CIBE) further refines the prediction: with email contact the grade tends toward C+, without email contact the outcome depends on software use and multimedia use (UMc). For example, UOE = 1, CIBE = 0, UAKOS = 0, UMc = 0 leads to a C grade, whereas adding multimedia (UMc = 1) upgrades the prediction to C+. Adding software and physical models can push the grade to B or B+.
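The rules described above can be written as a small function, which may make the branching easier to follow. This is a sketch based only on the summary's wording: the function name and signature are hypothetical, the mapping of "D+ or B" to the absence or presence of software is an assumption, and the branches involving software and physical models under online examinations are not specified precisely enough to encode, so the function returns `None` there.

```python
from typing import Optional


def predict_grade(uoe: int, ucinl: int = 0, uakos: int = 0,
                  cibe: int = 0, umc: int = 0) -> Optional[str]:
    """Return the grade suggested by the summarized rules, or None if unspecified.

    uoe   -- online examinations used (UOE)
    ucinl -- computers used in the lab (UCINL)
    uakos -- any kind of software used (UAKOS)
    cibe  -- email communication with the instructor (CIBE)
    umc   -- multimedia used (UMc)
    """
    if not uoe:
        if not ucinl:
            return "D"  # neither online exams nor lab computers
        # ASSUMPTION: the summary says "D+ or B, depending on software";
        # which value maps to which grade is inferred, not stated.
        return "B" if uakos else "D+"
    if cibe:
        return "C+"  # online exams plus email contact
    if not uakos:
        return "C+" if umc else "C"  # stated explicitly in the summary
    # Software (and physical models) "can push the grade to B or B+";
    # the exact branches are not given, so no prediction is made here.
    return None


no_ict = predict_grade(uoe=0, ucinl=0)
exam_only = predict_grade(uoe=1, cibe=0, uakos=0, umc=0)
exam_multimedia = predict_grade(uoe=1, cibe=0, uakos=0, umc=1)
```

Returning `None` for the unspecified branch keeps the sketch from inventing rules that the summary does not state.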

These rules illustrate that online assessment is the key driver of higher performance, but its effect is amplified when combined with other ICT practices such as software tools, multimedia presentations, and active communication channels. The authors argue that simply placing computers in a lab is insufficient; the pedagogical design must integrate assessment, content delivery, and interaction.

The study’s strengths lie in its focus on instructor behavior and institutional resources rather than solely on student characteristics, offering actionable insights for university administrators and faculty development programs. However, the research has notable limitations: the dataset is confined to one institution, the binary encoding of attributes discards nuanced information (e.g., frequency or quality of use), and the grade categorization into six discrete levels may mask finer variations in achievement. Moreover, the paper does not report overall classification accuracy or other performance metrics, making it difficult to assess the model’s predictive reliability.

In conclusion, the authors demonstrate that e‑learning methods, particularly online examinations, play a pivotal role in enhancing student grades. Complementary technologies—software, multimedia, and email communication—provide additional gains, suggesting that a holistic integration of digital tools is necessary for effective pedagogy. Future work should expand the sample to multiple universities, incorporate continuous grade scores, and include student‑centred variables (motivation, prior knowledge) to validate and refine the decision‑tree model. The ultimate recommendation is that higher‑education institutions move beyond ad‑hoc technology adoption toward systematic, evidence‑based redesign of teaching and assessment practices.
