Getting Beyond the State of the Art of Information Retrieval with Quantum Theory

According to the probability ranking principle, the document set with the highest values of probability of relevance optimizes information retrieval effectiveness, provided the probabilities are estimated as accurately as possible. The key point of this principle is the separation of the document set into two subsets with a given level of fallout and with the highest recall. If subsets and set measures are replaced by subspaces and space measures, we obtain an alternative theory stemming from Quantum Theory. That theory is called vector probability because vectors represent events as sets do in classical probability. The paper shows that the separation into vector subspaces is more effective than the separation into subsets with the same available evidence. The result is proved mathematically and verified experimentally. In general, the paper suggests that quantum theory is not only a source of rhetorical inspiration, but is a sufficient condition to improve retrieval effectiveness in a principled way.

💡 Research Summary

The paper proposes a fundamentally new framework for information retrieval (IR) that replaces the classical set‑based probability model with a vector‑based probability model derived from quantum theory. The authors begin by revisiting the Probability Ranking Principle (PRP), which states that, for a fixed false‑alarm rate, the optimal set of retrieved documents is the one that maximizes expected recall. In traditional probabilistic IR, documents are treated as elements of discrete sets, term occurrences are modeled as Bernoulli random variables, and relevance decisions are made using the detection probability $P_d$ and false‑alarm probability $P_0$.

The core contribution is to reinterpret events as subspaces of a Hilbert space. A document’s relevance state is represented by a state vector $|m_1\rangle$ (relevant) or $|m_0\rangle$ (non‑relevant). Term presence/absence is encoded by orthonormal vectors $|1\rangle$ and $|0\rangle$. Probabilities are then computed via Born’s rule: the probability of observing a term given a relevance state $|m\rangle$ is $|\langle 1|m\rangle|^2$. More generally, a density operator $\rho$ and a projector $P$ yield the probability $\mathrm{tr}(\rho P)$.
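Born's rule and the trace rule can be checked numerically on a single binary term. The following is a minimal sketch, assuming NumPy and a made-up probability of 0.7 that the term occurs in relevant documents; the helper `state_from_probability` is a hypothetical name introduced only for this illustration.

```python
import numpy as np

# Orthonormal basis: |0> = term absent, |1> = term present.
ket0 = np.array([1.0, 0.0])
ket1 = np.array([0.0, 1.0])

def state_from_probability(p_present):
    """Unit vector whose squared amplitudes are (1 - p_present, p_present)."""
    return np.sqrt(1.0 - p_present) * ket0 + np.sqrt(p_present) * ket1

# Hypothetical value: probability that the term occurs in a relevant document.
p_term_given_relevant = 0.7
m1 = state_from_probability(p_term_given_relevant)

# Born's rule: |<1|m1>|^2
born = abs(np.vdot(ket1, m1)) ** 2

# Trace rule: tr(rho P), with rho = |m1><m1| and P = |1><1|
rho = np.outer(m1, m1.conj())
P = np.outer(ket1, ket1.conj())
trace_rule = float(np.trace(rho @ P).real)

print(born, trace_rule)
```

Both expressions recover the probability used to build the state vector, which is the sense in which the vector model reproduces classical probabilities when the measurement basis is the term basis itself.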

To obtain the optimal decision rule in this vector setting, the authors invoke Helstrom’s lemma from quantum detection theory. For a chosen false‑alarm threshold $\lambda$, the operator $|m_1\rangle\langle m_1| - \lambda |m_0\rangle\langle m_0|$ is constructed; its eigenvectors are the optimal measurement vectors $|\mu_0\rangle$ and $|\mu_1\rangle$, with $|\mu_1\rangle$, the eigenvector of the positive eigenvalue, spanning the subspace in which a document is accepted as relevant. Geometrically, these mutually orthogonal vectors lie symmetrically around the relevance vectors $|m_0\rangle$ and $|m_1\rangle$, which are separated by the angle $\gamma$. This configuration yields a detection probability $Q_d$ and an error probability $Q_e$ that are functions of the overlap $|X|^2$ between the two relevance distributions.
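The eigen-decomposition step can be sketched in a few lines. This is an illustration under stated assumptions rather than the authors' implementation: it uses NumPy, real-valued state vectors built from made-up term probabilities (0.2 for non-relevant, 0.7 for relevant documents), and the threshold set to 1. The names `ket` and `helstrom_measurement` are hypothetical.

```python
import numpy as np

def ket(p_present):
    """Real unit vector with squared amplitudes (1 - p_present, p_present)."""
    return np.array([np.sqrt(1.0 - p_present), np.sqrt(p_present)])

def helstrom_measurement(m0, m1, lam=1.0):
    """Eigen-decompose |m1><m1| - lam * |m0><m0|.

    Returns eigenvalues in ascending order and eigenvectors as columns.
    """
    op = np.outer(m1, m1) - lam * np.outer(m0, m0)
    return np.linalg.eigh(op)

# Hypothetical term probabilities for non-relevant and relevant documents.
m0 = ket(0.2)
m1 = ket(0.7)

eigvals, eigvecs = helstrom_measurement(m0, m1, lam=1.0)
mu0 = eigvecs[:, 0]  # eigenvector of the negative eigenvalue
mu1 = eigvecs[:, 1]  # eigenvector of the positive eigenvalue

# Projector onto the acceptance subspace spanned by mu1.
accept = np.outer(mu1, mu1)
Q_d = float(m1 @ accept @ m1)  # detection probability
Q_0 = float(m0 @ accept @ m0)  # false-alarm probability

print(eigvals, Q_d, Q_0)
```

For two distinct pure states in two dimensions the operator has exactly one positive and one negative eigenvalue, so `mu1` alone defines the acceptance subspace; with the threshold at 1, the gap `Q_d - Q_0` equals that positive eigenvalue.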

The authors prove Theorem 1: for any pair of relevance‑conditioned term‑occurrence distributions, the vector‑based error probability $Q_e$ is never larger than the classical error probability $P_e$. Consequently, under identical evidence (the same term frequencies), the vector model always achieves at least the same, and typically better, retrieval effectiveness.
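The inequality can be explored numerically for a single binary term. The sketch below assumes that the classical error is the minimum Bayes error over decisions based on term presence or absence, and that the vector error is the standard Helstrom minimum error for two pure states; the function names and the prior parameter are assumptions made for this illustration and may not match the paper's exact definitions.

```python
import math

def classical_error(p0, p1, prior=0.5):
    """Minimum Bayes error when deciding relevance from one binary term.

    p0, p1: P(term present | non-relevant) and P(term present | relevant).
    prior:  assumed prior probability of relevance.
    """
    present = min((1 - prior) * p0, prior * p1)
    absent = min((1 - prior) * (1 - p0), prior * (1 - p1))
    return present + absent

def vector_error(p0, p1, prior=0.5):
    """Helstrom minimum error for the pure states built from p0 and p1."""
    # Overlap X = <m0|m1> for square-root amplitude vectors.
    overlap = math.sqrt(p0 * p1) + math.sqrt((1 - p0) * (1 - p1))
    inner = max(0.0, 1 - 4 * prior * (1 - prior) * overlap ** 2)
    return 0.5 * (1 - math.sqrt(inner))

# Hypothetical term probabilities.
P_e = classical_error(0.2, 0.7)
Q_e = vector_error(0.2, 0.7)
print(P_e, Q_e)

# Check the inequality on a coarse grid of probabilities and priors.
grid = [i / 10 for i in range(1, 10)]
holds = all(
    vector_error(a, b, xi) <= classical_error(a, b, xi) + 1e-12
    for a in grid for b in grid for xi in grid
)
print(holds)
```

The gap is small for this particular pair of probabilities, which is consistent with the wording of the theorem: the vector error is never larger, but the size of the improvement depends on the two distributions.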

To demonstrate practical relevance, the paper maps the widely used BM25 weighting scheme onto the vector framework. By expressing BM25’s saturation factor $t_j$ and normalization constant $B_{i,j}$ as a probability distribution $b(t_j; m_i)$, the authors show that the same term‑weighting information can be fed into the quantum‑inspired model. Experiments on standard test collections reveal consistent improvements in MAP, NDCG, and other IR metrics compared with a baseline BM25 implementation.
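One way to see why BM25 weights can feed a probabilistic model is that the usual BM25 saturation term lies in the interval [0, 1). The sketch below is only an illustration of that observation and is not the paper's mapping: it assumes the common form tf / (tf + B) with B = k1 * ((1 - b) + b * doc_len / avg_doc_len), default parameters k1 = 1.2 and b = 0.75, and made-up document statistics. Reading the saturation value as a Bernoulli parameter and the helper `state_vector` are assumptions introduced here.

```python
import math

def bm25_saturation(tf, doc_len, avg_doc_len, k1=1.2, b=0.75):
    """Common BM25 saturation term tf / (tf + B); always in [0, 1)."""
    B = k1 * ((1.0 - b) + b * doc_len / avg_doc_len)
    return tf / (tf + B)

def state_vector(p_present):
    """Assumed encoding: amplitudes are square roots of (1 - p, p)."""
    return (math.sqrt(1.0 - p_present), math.sqrt(p_present))

# Hypothetical document statistics.
s = bm25_saturation(tf=3, doc_len=120, avg_doc_len=100)
vec = state_vector(s)
print(s, vec)
```

Under this assumed encoding, a higher term frequency rotates the vector toward the term-present axis, and the length normalization in B dampens that rotation for long documents.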

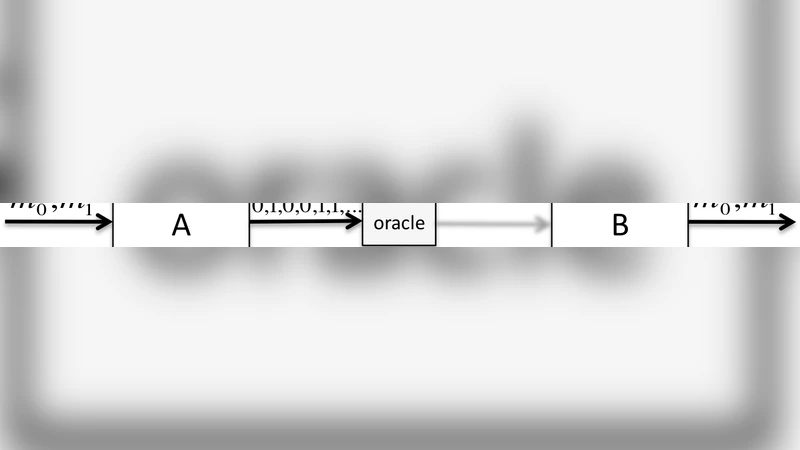

A critical component of the vector approach is the “oracle” that supplies the optimal measurement vectors. While a perfect oracle is theoretical, the authors discuss approximations using learned transformation matrices or neural embeddings, suggesting feasible pathways for real‑world deployment.

In summary, the paper establishes that quantum theory provides a sufficient condition for surpassing the state‑of‑the‑art in IR. By replacing set‑based probabilities with vector‑based probabilities, it offers a mathematically rigorous, experimentally validated method to achieve higher recall and lower error rates without requiring additional evidence. This work positions quantum‑inspired IR not as a metaphorical curiosity but as a concrete, principled advancement in retrieval theory and practice.
