Scalable Continual Top-k Keyword Search in Relational Databases

Keyword search in relational databases has been widely studied in recent years because it does not require users neither to master a certain structured query language nor to know the complex underlying database schemas. Most of existing methods focus on answering snapshot keyword queries in static databases. In practice, however, databases are updated frequently, and users may have long-term interests on specific topics. To deal with such a situation, it is necessary to build effective and efficient facility in a database system to support continual keyword queries. In this paper, we propose an efficient method for answering continual top-$k$ keyword queries over relational databases. The proposed method is built on an existing scheme of keyword search on relational data streams, but incorporates the ranking mechanisms into the query processing methods and makes two improvements to support efficient top-$k$ keyword search in relational databases. Compared to the existing methods, our method is more efficient both in computing the top-$k$ results in a static database and in maintaining the top-$k$ results when the database continually being updated. Experimental results validate the effectiveness and efficiency of the proposed method.

💡 Research Summary

The paper addresses the problem of continuously monitoring top‑k keyword query results in relational databases that are subject to frequent updates. Traditional keyword‑search techniques for relational data assume a static database and either generate all possible joint‑tuple‑trees (JTTs) or rely on exhaustive evaluation of candidate networks (CNs). While the Global‑Pipelined (GP) algorithm introduced a clever early‑termination strategy based on upper‑bound scores, it still treats ranking as a separate step and incurs high recomputation costs when insertions or deletions occur.

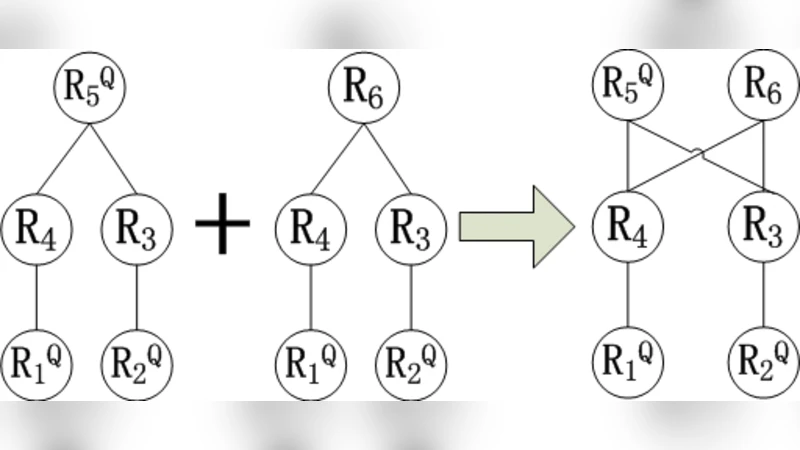

To overcome these limitations, the authors propose an integrated framework that (1) embeds a TF‑IDF‑based tuple scoring function directly into the query processing pipeline, (2) exploits the monotonicity property of this scoring to compute tight upper bounds for any yet‑unprocessed tuple, and (3) maintains a dynamic threshold θ that separates promising from non‑promising partial results. The system first builds the schema graph G_S(V,E) of the database, identifies query‑relevant tuple sets R_Qi and non‑relevant sets R_Fi, and generates a sound, complete, duplicate‑free set of CNs using a state‑of‑the‑art algorithm with a configurable size limit CN_max.

During initial query evaluation, each R_Qi is sorted in descending order of its tuple scores. The algorithm repeatedly selects the unprocessed tuple with the highest upper‑bound score across all CNs, joins it with already processed tuples from the other relations of the same CN, and materializes any valid JTTs that satisfy the join constraints. The actual JTT score is the average of its constituent tuple scores (size‑normalized). Whenever the k‑th highest confirmed score exceeds the maximum upper‑bound among all remaining tuples, the pipeline stops, guaranteeing that no unseen JTT can outrank the current top‑k.

When the underlying database changes, the framework performs three incremental actions: (i) it updates the tuple scores of all JTTs that contain modified tuples, reflecting new term frequencies, document lengths, and collection statistics; (ii) it evaluates newly inserted tuples only against those CNs whose upper‑bound exceeds the current θ, thereby avoiding a full re‑enumeration of CNs; (iii) it discards any JTT that loses a required tuple due to deletion. The threshold θ is recomputed after each batch of updates; if the number of confirmed results falls below k, the pipeline resumes, otherwise it may be tightened to prune further exploration.

The authors validate their approach on two realistic workloads: a scholarly publication database and a micro‑blogging platform with continuous post, comment, and follow‑relationship updates. Experiments compare the proposed method against the baseline GP algorithm in three dimensions: (a) static top‑k retrieval time, (b) average maintenance time per update batch, and (c) memory footprint. Results show a 30‑45 % reduction in static query latency and more than a 50 % cut in incremental maintenance cost, especially under high‑frequency insert/delete scenarios. The dynamic θ mechanism also leads to fewer CNs being examined, translating into lower memory consumption.

In summary, the paper contributes a cohesive solution for continual top‑k keyword search in relational databases by (1) integrating ranking directly into the pipeline via a monotonic TF‑IDF score, (2) leveraging tight upper‑bound estimates and a dynamically adjusted threshold to achieve early termination, and (3) providing efficient incremental maintenance that avoids full recomputation of candidate networks. This work paves the way for real‑time, keyword‑driven applications such as social‑media monitoring, news aggregation, and e‑commerce product search, where data freshness and rapid top‑k updates are essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment