The inconsistency of the h-index

The h-index is a popular bibliometric indicator for assessing individual scientists. We criticize the h-index from a theoretical point of view. We argue that for the purpose of measuring the overall scientific impact of a scientist (or some other unit of analysis) the h-index behaves in a counterintuitive way. In certain cases, the mechanism used by the h-index to aggregate publication and citation statistics into a single number leads to inconsistencies in the way in which scientists are ranked. Our conclusion is that the h-index cannot be considered an appropriate indicator of a scientist’s overall scientific impact. Based on recent theoretical insights, we discuss what kind of indicators can be used as an alternative to the h-index. We pay special attention to the highly cited publications indicator. This indicator has a lot in common with the h-index, but unlike the h-index it does not produce inconsistent rankings.

💡 Research Summary

The paper provides a rigorous theoretical critique of the h‑index, a widely used bibliometric indicator for assessing individual scientists, and argues that it is fundamentally unsuitable for measuring overall scientific impact. After a brief historical overview of the h‑index and its many variants, the authors focus on two intuitive consistency properties that any reasonable impact measure should satisfy. The first property requires that if two scientists achieve the same relative performance improvement (i.e., their output and citations grow by the same proportion), their relative ranking should remain unchanged. The second property demands the same stability under identical absolute performance improvements.

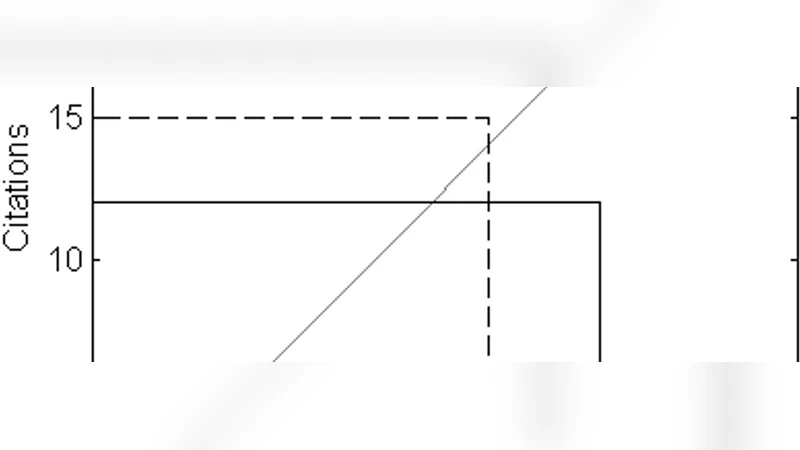

To demonstrate violations of these properties, the authors construct two concrete examples. In Example 1, scientist X initially has a higher h‑index (9) than scientist Y (7) despite both having comparable citation profiles. After each scientist doubles both the number of publications and the citations per paper, X’s h‑index rises to 12 while Y’s jumps to 14, reversing their ranking. This reversal occurs even though the relative improvement is identical for both, contradicting the first consistency property. In Example 2, the authors show a similar reversal when the same absolute increase in citations is added to both scientists’ records, thereby violating the second property. These examples illustrate that the h‑index can produce counter‑intuitive and inconsistent rankings when applied as a size‑dependent measure of overall impact.
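The kind of reversal described for the absolute-improvement case can be reproduced with a small sketch. The citation records below are hypothetical and chosen only for illustration; they are not the figures from the paper's examples. The helper name `h_index` is likewise an assumption of this sketch.

```python
def h_index(citations):
    """Return the largest h such that h publications have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical records (illustrative only, not taken from the paper).
x_before = [4, 4, 4, 4]   # scientist X: four papers, four citations each
y_before = [6, 6, 6]      # scientist Y: three papers, six citations each

# Identical absolute improvement: each scientist gains two papers with six citations.
improvement = [6, 6]
x_after = x_before + improvement
y_after = y_before + improvement

print("before:", h_index(x_before), h_index(y_before))
print("after: ", h_index(x_after), h_index(y_after))
```

In this toy case X starts ahead of Y on the h‑index and ends behind, even though both records received exactly the same additional publications.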

The paper then turns to a deeper critique of the h‑index’s definition. When publications are ranked by descending citation count and plotted as a citation curve, the h‑index is the point where this curve crosses a 45‑degree line, that is, the largest rank at which a publication’s citation count is still at least as large as its rank. The authors argue that this choice is arbitrary: a line at any other angle (e.g., 30° or 60°) would define a different index and potentially a different ranking. Moreover, the h‑index mixes two quantities with different units (publications and citations), so choosing the 45‑degree line embeds an implicit, unexplained weighting of one against the other. This arbitrariness has been noted in earlier theoretical work, but the present paper emphasizes its practical consequences for ranking.
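To see how the choice of line affects rankings, the following sketch generalizes the h‑index to an arbitrary slope. The function `generalized_h` and the citation records are assumptions made for illustration and do not come from the paper; a slope of 1 corresponds to the usual 45‑degree line, and a slope of 2 to a line at roughly 63°.

```python
def generalized_h(citations, slope):
    """Largest n such that n publications have at least slope * n citations each.

    slope = 1 reproduces the standard h-index (the 45-degree line).
    """
    ranked = sorted(citations, reverse=True)
    n = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= slope * rank:
            n = rank
        else:
            break
    return n


# Hypothetical records (illustrative only).
a = [4, 4, 4, 4]   # more papers, fewer citations each
b = [6, 6, 6]      # fewer papers, more citations each

for slope in (1, 2):
    print(f"slope={slope}: A={generalized_h(a, slope)}, B={generalized_h(b, slope)}")
```

With slope 1, A ranks above B; with slope 2, the order flips. Nothing in the data singles out one slope as the correct one, which is the sense in which the 45‑degree choice acts as a hidden weighting.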

As an alternative, the authors advocate the “highly‑cited publications indicator,” which simply counts the number of papers whose citation count exceeds a predefined threshold (e.g., 10 or 20 citations). Although this indicator requires the choice of a threshold, the parameter is explicit, transparent, and can be adjusted to the evaluation context. Crucially, the threshold is fixed in advance and does not depend on a scientist’s own record, so each publication is judged independently of the rest of the record. According to the authors, this is why the indicator yields consistent rankings under both relative and absolute performance improvements. The indicator also aligns with size‑dependent impact assessment, as it reflects the total volume of high‑impact work rather than an average per‑paper measure.
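A minimal sketch of such a threshold-based count is shown below. The function name `highly_cited_count`, the threshold of 5, and the citation records are all hypothetical choices for illustration, not values from the paper.

```python
def highly_cited_count(citations, threshold):
    """Count publications whose citation count exceeds a fixed threshold."""
    return sum(1 for cites in citations if cites > threshold)


# Hypothetical records (illustrative only).
x_before = [4, 4, 4, 4]
y_before = [6, 6, 6]

# Identical absolute improvement: two extra papers with six citations each.
improvement = [6, 6]
x_after = x_before + improvement
y_after = y_before + improvement

THRESHOLD = 5  # assumed cut-off for "highly cited" in this toy example

print("before:", highly_cited_count(x_before, THRESHOLD),
      highly_cited_count(y_before, THRESHOLD))
print("after: ", highly_cited_count(x_after, THRESHOLD),
      highly_cited_count(y_after, THRESHOLD))
```

Because the added publications contribute the same amount to both counts, the gap between the two scientists is unchanged and their order cannot flip under an identical absolute improvement.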

In the concluding discussion, the authors reiterate that the h‑index’s structural flaws, namely its arbitrary definition and its susceptibility to inconsistent rank changes, render it an unreliable proxy for overall scientific impact. They recommend that research evaluation policies move away from heavy reliance on the h‑index and instead adopt theoretically sound alternatives, such as the highly‑cited publications indicator or other metrics that satisfy the consistency properties. By doing so, institutions can achieve fairer, more transparent, and more robust assessments of scientific contributions.
