Searching a bitstream in linear time for the longest substring of any given density

Given an arbitrary bitstream, we consider the problem of finding the longest substring whose ratio of ones to zeroes equals a given value. The central result of this paper is an algorithm that solves this problem in linear time. The method involves (…

Authors: Benjamin A. Burton

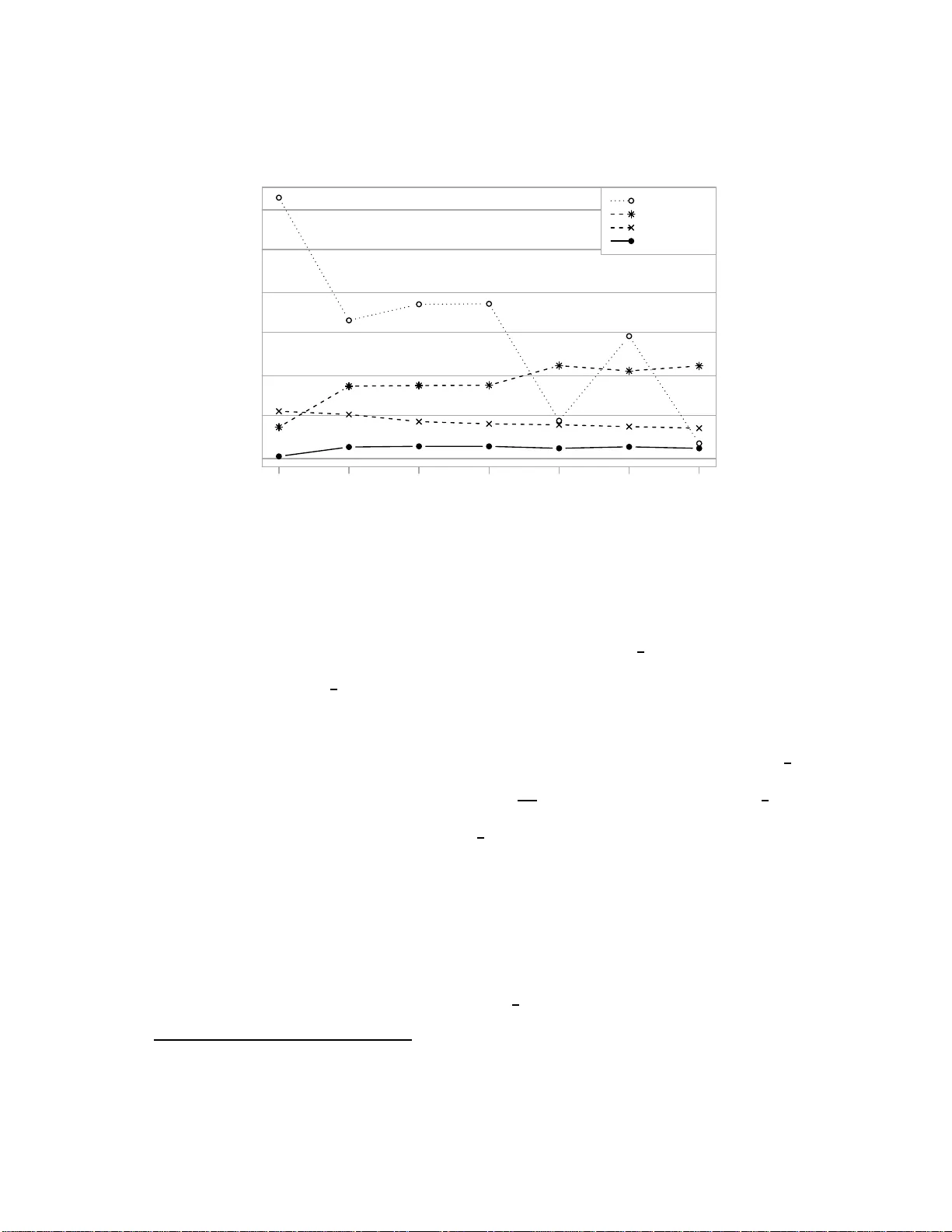

June 3, 2010

Abstract

Given an arbitrary bitstream, we consider the problem of finding the longest substring whose ratio of ones to zeroes equals a given value. The central result of this paper is an algorithm that solves this problem in linear time. The method involves (i) reformulating the problem as a constrained walk through a sparse matrix, and then (ii) developing a data structure for this sparse matrix that allows us to perform each step of the walk in amortised constant time. We also give a linear time algorithm to find the longest substring whose ratio of ones to zeroes is bounded below by a given value. Both problems have practical relevance to cryptography and bioinformatics.

1 Introduction

Consider a bitstream of length $n$, that is, a sequence of bits $x_1, x_2, \ldots, x_n$ where each $x_i$ is 0 or 1. We define the density of this bitstream to be the proportion of bits that are equal to one (equivalently, $\sum x_i / n$). The density always lies in the range $[0, 1]$: a stream of zeroes has density 0, a stream of ones has density 1, and a stream of random bits should have density close to $\frac{1}{2}$.

In this paper we are interested in the densities of substrings within a bitstream. By a substring, we mean a continuous sequence of bits $x_a, x_{a+1}, \ldots, x_{b-1}, x_b$, beginning at some arbitrary position $a$ and ending at some arbitrary position $b$. The length of a substring is the number of bits that it contains (that is, $b - a + 1$), and the density of the substring is likewise the proportion of ones that it contains (that is, $\sum_{i=a}^{b} x_i / (b - a + 1)$). In particular, we are interested in the following two problems:

Problem 1.1 (Fixed density problem). Suppose we are given a bitstream $S$ of length $n$ and a fixed ratio $\theta \in [0, 1]$. What is the longest substring of $S$ whose density is equal to $\theta$?

Problem 1.2 (Bounded density problem). Suppose we are given a bitstream $S$ of length $n$ and a fixed ratio $\theta \in [0, 1]$. What is the longest substring of $S$ whose density is at least $\theta$?

For example, suppose we are given the bitstream $S = 010110101100$ of length $n = 12$. Then the longest substring with density equal to $\theta = 0.6$ has length ten (0[1011010110]0, with the substring shown in brackets), and the longest substring with density at least $\theta = 0.7$ has length seven (010[1101011]00). Note that each problem might have many solutions or no solution at all.

Both of these problems have important applications for cryptography. Many cryptographic systems are dependent on pseudo-random number generators (PRNGs), and any unwanted predictability or structure in a PRNG becomes a potential attack point for the underlying cryptosystem. For this reason PRNGs are typically subjected to a stringent series of randomness tests, such as those described in [13] or [15].

Boztaş et al. have recently designed a new series of randomness tests based on the densities of substrings [3]. To construct these tests, they use the Erdős-Rényi law of large numbers [1, 7] to compute the limiting distributions for solutions to the fixed density problem, the bounded density problem and related problems. They then compare observed values against these limiting distributions, and they have identified a possible weakness in the Dragon stream cipher [4] as a result.

Locating substrings with various density properties also has important applications in bioinformatics. A sequence of DNA consists of a long string of nucleotides marked G, C, T or A, and subsequences with high proportions of G and C are called GC-rich regions.
GC-richness is correlated with factors such as gene density [18], gene length [6], recombination rates [8], codon usage [17], and the increasing complexity of organisms [2, 11]. To identify GC-rich regions we convert a DNA sequence into a bitstream, where each G or C becomes a one bit, and each T or A becomes a zero bit. We then search for high-density substrings in this bitstream, using techniques such as those discussed here.

Further applications of density problems in the field of bioinformatics are discussed by Goldwasser et al. [9] and Lin et al. [14]. In addition, Greenberg [10] signals potential applications in the field of image processing.

The focus of this paper is on finding fast algorithms to solve Problems 1.1 and 1.2. Both problems allow simple brute-force algorithms that run in $O(n^2)$ time. For the fixed density problem, Boztaş et al. improve on this with their SkipMisMatch algorithm [3], which remains $O(n^2)$ in the worst case but has an improved average-case time complexity of $O(n \log n)$. We outline their contribution in Section 2.

Our first contribution in this paper is a series of simple algorithms that solve both the fixed and bounded density problems in $O(n \log n)$ time, even in the worst case. These algorithms are easy to implement and effective in practice, and are based upon a central geometric observation. We cover these log-linear algorithms in Section 3.

In Section 4 we follow with our main result, which is an algorithm that solves the fixed density problem in $O(n)$ time, again in the worst case. Based on one of the previous log-linear algorithms, this algorithm introduces a specialised data structure that allows us to process each bit of the bitstream in amortised constant time.
Broadly speaking, we:

• express our bitstream as a sequence of steps through a sparse matrix, where each step requires a localised search and possible insertion into this matrix;

• design a specialised data structure that “compresses” this sparse matrix, so that each localised search and insertion can be performed in amortised constant time.

The amortised analysis is based on aggregation: in essence we count the “interesting” steps of the algorithm by associating them with distinct elements of the bitstream, thereby showing the number of such steps to be $O(n)$. Details of the proof are given in Section 4.3.

Our final contribution is in Section 5, where we give an $O(n)$ time algorithm for the bounded density problem. In contrast to the fixed density problem, this final algorithm is quite simple, involving just a handful of linear scans.

To conclude, we measure the practical performance of our algorithms in Section 6. It is reassuring to find that our linear algorithms are worth the extra difficulty, consistently outperforming the other algorithms for large bitstream lengths $n$.

In related work, several authors have considered problems of finding maximal density substrings in a bitstream subject to a variety of constraints. See in particular work by Lin et al. [14], who place a lower bound on the length of the substring; Goldwasser et al. [9], who improve the prior solution and also place both lower and upper bounds; and Greenberg [10], who studies a variant relating to compressed bitstreams.

Hsieh et al. [12] study a series of more general problems, where the bitstream is replaced by a sequence of real numbers, and the density of a substring becomes the average of the corresponding subsequence. In addition to developing algorithms, they show that several such problems (including the fixed density problem) have a lower bound of $\Omega(n \log n)$ time.
Our linear algorithm effectively breaks through this lower bound in the case where the input sequence consists entirely of zeroes and ones.

Throughout this paper we measure time complexity in “number of operations”, where we treat basic arithmetical operations such as $+$ and $\times$ as constant-time.

2 Quadratic Algorithms: Boztaş et al.

In this section we outline the prior work of Boztaş et al., including a simple $O(n^2)$ brute force algorithm as well as their SkipMisMatch algorithm, which remains $O(n^2)$ in the worst case but becomes $O(n \log n)$ in the average case.

Assumption 2.1. Throughout this paper we assume that the ratio $\theta$ is given as a rational $\theta = \alpha/\beta$, where $\alpha$ and $\beta$ are integers in the range $0 \le \alpha \le \beta \le n$, and where $\gcd(\alpha, \beta) = 1$.

This assumption is not restrictive in any way. If $\theta$ cannot be expressed as above then the fixed density problem has no solution, and for the bounded density problem we can harmlessly replace $\theta$ with a nearby rational that satisfies our requirements.

A naïve brute force solution runs in $O(n^3)$ time: for each possible start point and end point, walk through the substring and count the ones. However, there are several different tricks that can easily convert this into $O(n^2)$ by replacing “walk through the substring” with a constant time operation. One such trick is to use a rank table.

Definition 2.2 (Rank Table). A rank table is an array $r_0, r_1, \ldots, r_n$, where each entry $r_k$ counts the number of ones in the substring $x_1, \ldots, x_k$. In other words, $r_k = \sum_{i=1}^{k} x_i$.

It is clear that the complete rank table can be precomputed in $O(n)$ time, and that it supports constant time queries of the form “how many ones appear in the substring $x_a, \ldots, x_b$?” by simply computing $r_b - r_{a-1}$.
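As an illustration of Definition 2.2, the following is a minimal Python sketch of a rank table and its constant time query. The function names `build_rank_table` and `count_ones` are hypothetical names chosen for this sketch, and the bitstream is assumed to be given as a list of integers 0 and 1.

```python
def build_rank_table(bits):
    """Return the list r[0..n], where r[k] counts the ones in x_1, ..., x_k."""
    rank = [0]                      # r_0 = 0: the empty prefix contains no ones
    for bit in bits:
        rank.append(rank[-1] + bit)
    return rank


def count_ones(rank, a, b):
    """Number of ones in x_a, ..., x_b (1-based, inclusive), in constant time."""
    return rank[b] - rank[a - 1]


# The example bitstream 010110101100 from the introduction.
bits = [0, 1, 0, 1, 1, 0, 1, 0, 1, 1, 0, 0]
rank = build_rank_table(bits)
ones = count_ones(rank, 2, 11)      # ones in x_2, ..., x_11
density = ones / (11 - 2 + 1)       # 6 ones over 10 bits
```

Building the table is a single pass over the bitstream, and each query is one subtraction, which is what reduces the brute force search from cubic to quadratic time.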
For the fixed density problem, the SkipMisMatch algorithm further optimises this $O(n^2)$ brute force method by making the following observations:

(i) We are searching for the longest substring of density $\theta$. We can therefore reorganise our search to work from the longest substring down to the shortest, allowing us to terminate as soon as we find any substring of density $\theta$.

(ii) If we find such a substring, its length must be a multiple of $\beta$ (where $\theta = \alpha/\beta$ as above). We can therefore restrict our search to substrings of such lengths.

(iii) When searching for substrings of length $k\beta$, we need to find precisely $k\alpha$ ones to give a density of $\theta$. If at some point we find $k\alpha \pm \epsilon$ ones, we must step forward at least $\epsilon$ positions in our bitstream before we can “undo the error” and potentially find the $k\alpha$ ones that we seek.

Bundling these observations together, we obtain the SkipMisMatch algorithm as illustrated in Figure 1. The worst-case complexity is clearly still $O(n^2)$, but for a random bitstream the expected performance can be significantly better. In particular, Boztaş et al. prove the following result as a part of [3, Lemma 4]:

Lemma 2.3. Suppose we have a random bitstream, where each bit is one with probability $\rho$ or zero with probability $1 - \rho$. Then SkipMisMatch has expected time bounded by $O\left(|\theta - \rho|^{-1} \times \frac{n}{\beta} \log \frac{n}{\beta}\right)$.

    procedure SkipMisMatch(x_1, …, x_n, θ = α/β)
        Build a rank table r_0, r_1, …, r_n
        for k ← ⌊n/β⌋ down to 1 do              ⊲ Search for substrings of length kβ
            (a, b) ← (1, kβ)                    ⊲ Initial start and end for our substring
            while b ≤ n do
                ε ← |kα − (r_b − r_{a−1})|      ⊲ Compute the “error” for this substring
                if ε = 0 then
                    Output (a, b) and terminate
                else
                    (a, b) ← (a + ε, b + ε)     ⊲ We can safely skip forward ε positions
        Output “no such substring” and terminate

Figure 1: The SkipMisMatch algorithm for the fixed density problem

For fixed $\theta$ and $\rho$, this reduces to an expected time of $O(n \log n)$, as long as $\theta \ne \rho$. However, if we retain the dependency on $\theta$ (and hence its denominator $\beta$), we find that SkipMisMatch is rewarded by large denominators $\beta$ (which enhance the power of optimisation (ii)), and is penalised by values of $\theta$ close to $\rho$ (which limit the use of optimisation (iii)).

To summarise, the SkipMisMatch algorithm is easy to code and runs significantly faster than brute force, but its performance depends heavily on the given value of $\theta$. In addition, some broader issues might arise: the expected $O(n \log n)$ time is appropriate for random bitstreams (as found in cryptographic applications, for instance), but might not hold for applications such as bioinformatics and image processing where bitstreams become more structured. Moreover, the algorithm does not translate well to the bounded density problem. All of these reasons highlight the need for faster and more robust algorithms, which form the subject of the remainder of this paper.

3 Log-Linear Algorithms: Maps and Sorting

In this section we introduce our first truly sub-quadratic algorithms for solving the fixed and bounded density problems. We describe DistMap, a simple algorithm involving a map structure, and DistSort, a variation that replaces this map with a sort and a linear scan. Both of these algorithms run in $O(n \log n)$ time, even in the worst case.
Although we present even faster algorithms in Sections 4 and 5, both DistMap and DistSort are simple to describe and easy to implement. Moreover, both algorithms play important roles: DistMap is the foundation upon which the linear algorithm of Section 4 is built, and DistSort is a more flexible variant that can solve both the fixed and bounded density problems.

3.1 Graphical Representations

Our first step in developing these sub-quadratic algorithms is to find a graphical representation for our bitstreams.

Definition 3.1 (Grid Representation). We can plot any bitstream as a walk through an infinite two-dimensional grid as follows.¹ We begin at the origin $(0, 0)$, and then step one unit in the $x$-direction each time we encounter a zero, or one unit in the $y$-direction each time we encounter a one, as illustrated in Figure 2. We refer to this as the grid representation of the bitstream.

¹ This is related to, but not the same as, the walk through the sparse matrix that we use for the linear algorithm in Section 4.

[Figure 2: The grid representation for the bitstream 010110101100. The walk runs from Start (origin) to End; each 0 moves one unit in the $x$-direction and each 1 moves one unit in the $y$-direction.]

All of the new algorithms developed in this paper are based upon the following simple geometric observation:

Lemma 3.2. A substring of a bitstream has density $\theta$ if and only if the line joining its start and end points in the grid representation has gradient $\frac{\theta}{1 - \theta}$.

To illustrate, Figure 3 builds on the previous example by searching for substrings of density $\theta = 0.6$. Several pairs of points separated by gradient $\frac{\theta}{1 - \theta} = 1.5$ are marked (though there are several more such pairs that are not marked). The first two pairs correspond to substrings of length five, and the third pair corresponds to a substring of length ten.

[Figure 3: Pairs of points that represent substrings of density $\theta = 0.6$; the marked pairs are joined by lines of gradient $\frac{\theta}{1 - \theta} = 1.5$.]

We can find such pairs of points by drawing a line $L_\theta$ through the origin with slope $\frac{\theta}{1 - \theta}$, and then measuring the distance of each point from this line (where distances are signed, so that points above or below the line have positive or negative distance respectively). This is illustrated in Figure 4. It is clear that two points are joined by a line of gradient $\frac{\theta}{1 - \theta}$ if and only if their distances from $L_\theta$ are the same.

[Figure 4: Measuring the distance of each point from the line $L_\theta$, which passes through the start (origin).]

Although such distances can be messy to compute, with appropriate rescaling we can convert them into integers as follows.

Definition 3.3 (Distance Sequence). Recall from Assumption 2.1 that $\theta = \alpha/\beta$, where $\gcd(\alpha, \beta) = 1$. For a given bitstream $x_1, \ldots, x_n$, we define the distance sequence $d_0, d_1, \ldots, d_n$ by the formula

$$d_i = (\beta - \alpha) \cdot (\text{number of ones in } x_1, \ldots, x_i) - \alpha \cdot (\text{number of zeroes in } x_1, \ldots, x_i).$$

In other words, $d_i = (\beta - \alpha) r_i - \alpha (i - r_i) = \beta r_i - \alpha i$, where $r_i$ is the corresponding entry in the rank table.

With a little thought it can be seen that $d_i$ is proportional to the distance from $L_\theta$ of the point at the end of the $i$th step of the walk. This empowers the distance sequence with the following critical property:

Lemma 3.4. The substring $x_a, \ldots, x_b$ has density equal to $\theta$ if and only if $d_{a-1} = d_b$. Similarly, the substring $x_a, \ldots, x_b$ has density at least $\theta$ if and only if $d_{a-1} \le d_b$.

Proof. Although this follows immediately from the geometric argument above, we can also prove it directly. Using the formula $d_i = \beta r_i - \alpha i$, we find that $d_{a-1} = d_b$ if and only if $\beta (r_b - r_{a-1}) = \alpha (b - a + 1)$, or equivalently

$$\text{density of } x_a, \ldots, x_b = \frac{r_b - r_{a-1}}{b - a + 1} = \frac{\alpha}{\beta} = \theta.$$
The argument for density $\ge \theta$ is similar.

3.2 The DistMap Algorithm

With Lemma 3.4 we now have a simple solution to the fixed density problem. We compute the distance sequence $d_0, \ldots, d_n$ as we pass through our bitstream, keeping track of which distances we have seen before and when we first saw them. Whenever we find that a distance has been seen before, we have a substring of density $\theta$ and therefore a potential solution.

We keep track of previously-seen distances using a key ↦ value map structure with worst-case $O(\log n)$ search and insertion, such as a red-black tree [5]. Here the key is a distance $D$ that we have seen before, and the value is the position at which we first saw it (i.e., the smallest $i$ for which $d_i = D$).

    procedure DistMap(x_1, …, x_n, θ = α/β)
        (a, b) ← (0, 0)                         ⊲ Best start/end found so far
        δ ← 0                                   ⊲ Current distance d_i
        Initialise the empty map m
        Insert m[0] ← 0                         ⊲ Record the starting point d_0 = 0
        for i ← 1 to n do
            if x_i = 1 then                     ⊲ Compute the new distance d_i
                δ ← δ + (β − α)
            else
                δ ← δ − α
            if m has no key δ then              ⊲ Have we seen this distance before?
                Insert m[δ] ← i                 ⊲ No, this is the first time
            else if i − m[δ] > b − a + 1 then   ⊲ Yes, back at position m[δ]
                (a, b) ← (m[δ] + 1, i)          ⊲ Longest substring found so far
        Output (a, b)

Figure 5: The DistMap algorithm for the fixed density problem

The result is the algorithm DistMap, described in Figure 5. Given our choice of map structure, the following result is clear:

Lemma 3.5. The algorithm DistMap solves the fixed density problem in $O(n \log n)$ time in the worst case.

We could of course use a hash table instead of a map structure. With a judicious choice of hash function this could yield $O(n)$ expected time, though the worst case could potentially be much slower. Because we offer a worst-case $O(n)$ algorithm in Section 4, we do not pursue hashing any further here.
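The following Python sketch illustrates the idea behind DistMap under some loudly stated assumptions. It uses Python's built-in `dict`, which is a hash table, so it corresponds to the hashing variant mentioned above (expected rather than worst-case bounds) and not to the red-black tree of Figure 5. The function name `dist_map`, the list-of-integers input, and the `None` return value for "no solution" are choices made for this sketch only. The sketch also tracks the best length explicitly, so that substrings of length one are reported too.

```python
def dist_map(bits, alpha, beta):
    """Longest substring of density exactly alpha/beta.

    Returns 1-based inclusive positions (a, b), or None if no substring
    has the requested density. Assumes gcd(alpha, beta) == 1.
    """
    first_seen = {0: 0}      # distance D -> smallest i with d_i == D
    best = None              # best (a, b) found so far
    best_len = 0             # length of that substring
    delta = 0                # current distance d_i
    for i, bit in enumerate(bits, start=1):
        # A one moves the distance up by beta - alpha, a zero down by alpha.
        delta += (beta - alpha) if bit == 1 else -alpha
        # Look up the first position with this distance, recording i if new.
        start = first_seen.setdefault(delta, i)
        if i - start > best_len:
            best_len = i - start
            best = (start + 1, i)
    return best


bits = [0, 1, 0, 1, 1, 0, 1, 0, 1, 1, 0, 0]   # the bitstream 010110101100
result = dist_map(bits, 3, 5)                  # theta = 0.6 = 3/5
```

For the example bitstream this returns `(1, 10)`, one of the substrings of length ten with density 0.6. Ties are resolved in favour of the earliest substring, because a later candidate replaces the current best only when it is strictly longer.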
3.3 The DistSort Algorithm

We move now to a variant of DistMap that removes any need for a map structure at all. Instead, we replace this map with a simple array that we sort in-place after all $n$ bits of the bitstream have been processed. The new algorithm is named DistSort, and has the following advantages:

• Whilst the map structure plays a key role in giving us $O(n \log n)$ running time, it also comes with a non-trivial memory overhead. If $n$ is large and memory becomes a problem, the in-place sort used by DistSort may be a more economical choice.

• DistMap relies on searching for precise matches $d_{a-1} = d_b$ within the map structure. This makes it unsuitable for the bounded density problem, which requires only $d_{a-1} \le d_b$ (Lemma 3.4). If we replace our map with an array sorted by distance $d_i$, then both problems become easy to solve. Indeed, we find with DistSort that the solutions for the fixed and bounded density problems differ by just one line.

The key ideas behind DistSort are as follows:

• We walk through the bitstream and compute each distance $d_i$ as we go, just as we did for DistMap. However, instead of storing distances in a map, we store each pair $(d_i, i)$ in a simple array $z[0..n]$, so that each array entry $z[i]$ is the pair $(d_i, i)$.

• Once we have finished our walk through the bitstream, we sort the array $z[0..n]$ by distance. This gives us a sequence of (distance, position) pairs $(D_0, P_0), (D_1, P_1), \ldots, (D_n, P_n)$, where $D_0 \le D_1 \le \ldots \le D_n$ and where each $D_i$ is the distance after the $P_i$th step.

• Finding positions with matching distances is now a simple matter of walking through the array from left to right: all of the positions with the same distance will be clumped together. In each clump we track the smallest and largest positions $p_{\min}$ and $p_{\max}$, and these become a candidate substring $x_{(p_{\min}+1)}, \ldots, x_{p_{\max}}$ with density $\theta$. The longest such substring is then our solution to the fixed density problem.

• Solving the bounded density problem is just as easy. The only difference is that we now need our substring $x_{(p_{\min}+1)}, \ldots, x_{p_{\max}}$ to satisfy $d_{p_{\min}} \le d_{p_{\max}}$, not $d_{p_{\min}} = d_{p_{\max}}$. To achieve this, we simply change $p_{\min}$ from the smallest position in this clump to the smallest position in all clumps seen so far.

The full algorithm is given in Figure 6. The fixed and bounded density algorithms differ by only one line (marked with a comment in bold), where in the bounded case we do not reset $p_{\min}$ upon entering a new clump of pairs with equal distances.

Regarding time complexity, we can choose a worst-case $O(n \log n)$ sorting algorithm, such as the introsort algorithm of Musser [16]. The subsequent scan through the array runs in linear time, yielding the following overall result:

Lemma 3.6. The algorithm DistSort solves both the fixed and bounded density problems in $O(n \log n)$ time in the worst case.

    procedure DistSort(x_1, …, x_n, θ = α/β)
        Initialise an array z[0..n] of (dist, pos) pairs
        δ ← 0                                   ⊲ Current distance d_i
        z[0] ← (0, 0)                           ⊲ Record the starting point d_0 = 0
        for i ← 1 to n do
            if x_i = 1 then                     ⊲ Compute the new distance d_i
                δ ← δ + (β − α)
            else
                δ ← δ − α
            z[i] ← (δ, i)                       ⊲ Store the pair (d_i, i) in our array
        Sort z[0..n] by distance, giving a sorted sequence of pairs
            (D_0, P_0), (D_1, P_1), …, (D_n, P_n)
        (a, b) ← (0, 0)                         ⊲ Best start/end positions found so far
        (p_min, p_max) ← (P_0, P_0)             ⊲ Potential start/end positions
        i ← 0
        while i ≤ n do
            p_min ← P_i                         ⊲ Do this for the fixed density problem ONLY
            p_max ← P_i
            i ← i + 1
            ⊲ Run through a clump of pairs with the same distance
            while i ≤ n and D_i = D_{i−1} do
                if P_i < p_min then
                    p_min ← P_i                 ⊲ A smaller position with this distance
                if P_i > p_max then
                    p_max ← P_i                 ⊲ A larger position with this distance
                i ← i + 1
            if p_max − p_min > b − a + 1 then
                (a, b) ← (p_min + 1, p_max)     ⊲ Longest substring found so far
        Output (a, b)

Figure 6: The DistSort algorithm for the fixed and bounded density problems

4 Solving the Fixed Density Problem

We proceed now to an algorithm for the fixed density problem that runs in $O(n)$ time, even in the worst case. This algorithm uses DistMap as a starting point, but replaces the generic map structure with a specialised data structure for the task at hand.

The central observation is the following. As we run the DistMap algorithm, each successive key in our map is always obtained by adding $+(\beta - \alpha)$ or $-\alpha$ to the previous key. We exploit this constraint to design a data structure that allows us to “jump” from one key to the next without requiring a full search, thereby eliminating the $\log n$ factor from our running time.

The data structure is fairly detailed, making it difficult to give a simple overview. The following outline summarises the broad ideas involved, but for a clearer picture the reader is referred to the full description in Sections 4.1 and 4.2. The running time of $O(n)$ is established in Section 4.3 using amortised analysis.

• We begin by arranging the integers into an infinite two-dimensional lattice (Figure 7), so that $+(\beta - \alpha)$ represents a single step to the right and $-\alpha$ represents a single step down.
  This makes moving from one key to the next a local movement within the lattice. This lattice has infinitely many columns but only $\beta - \alpha$ rows, so a step down from the bottom row wraps back around to the top (but with a shift).

[Figure 7: the lattice of integers. The entries recoverable from the figure are

    …   −6   −3    0    3    6    9   12   …
    …  −11   −8   −5   −2    1    4    7   …
    …  −16  −13  −10   −7   −4   −1    2   …

which are consistent with $\alpha = 5$ and $\beta = 8$: each step right adds 3 and each step down subtracts 5.]
. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 
. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . Figure 7: The tw o -dimensional lattice of integers for β − α = 3 and α = 5. • W e now use this integer lattice a s the “domain” of our map, so that keys (the distances d i ) beco me p oints in the lattice, a nd v alues (the corresp onding po sitions i ) ar e s tored at these p oints. In this wa y our data structu re b e c omes a matrix , which is s parse b ecause only n p oints in the lattice corr esp ond to “r eal” keys with non-e mpt y v alues. • The next stag e in o ur de s ign is to “compr e s s” this sparse matrix by storing not individua l key 7→ value pairs but r ather horizontal runs of c onse cutive p airs , as illustr ated in Figure 8. Storing just the sta rt and end of each run allows us to co mpletely reconstr uct the missing keys and v alues in b etw een. • − 8 7→ 7 • − 5 7→ 8 • − 2 7→ 9 • 1 7→ 10 • 4 7→ 11 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 
. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . ⇐ ⇒ • − 8 7→ 7 • 4 7→ 11 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . Figure 8: Compre ssing a horizontal run of consecutive pa irs • W e finish by developing a linked structure for storing our matrix . The co mpressed r uns in each r ow are stored as a “horizo n ta l” linked list, with additio na l “vertical” links betw een rows for downw ard steps. W e a lso chain vertical links to gether, yielding a p erfect bal- ance that offers eno ugh informatio n to supp or t fast mo vement b et ween keys, but enough flexibility to supp or t fast insertion of new key 7→ value pairs. Before presenting the deta ils, it becomes use ful to strengthen our ba se assumptions as follows. Assumption 4.1. Recall from Assumption 2 .1 that θ = α/β , where 0 ≤ α ≤ β ≤ n . F r om here onw ards we strengthen this by a ssuming the stricter b ounds 0 < α < β ≤ n . In other words, we explicitly disallow the s pec ial cases θ = 0 and θ = 1. 
Like our earlier assumptions, this is not restrictive in any way. If θ = 0 or θ = 1 then we simply require the longest continuous substring of zeroes or ones, which is trivial to find in linear time.

4.1 The Mapping Matrix

We begin the details with a formal definition of the integer lattice depicted in Figure 7. Recall from Assumptions 2.1 and 4.1 that both β − α and α are strictly positive, and that gcd(β − α, α) = 1.

Definition 4.2 (Lattice Coordinates). Let z be any integer. The lattice coordinates of z are the unique solution (r, c) to the equation

    (β − α)c − αr = z,    (1)

for which r and c are integers and 0 ≤ r < β − α. We call r and c the row and column of z respectively.

For example, consider Figure 7 in which β − α = 3 and α = 5. The following table lists the lattice coordinates of several integers z:

    Integer z                    −3        0        3        6        1        −4
    Lattice coordinates of z     (0, −1)   (0, 0)   (0, 1)   (0, 2)   (1, 2)   (2, 2)

These are precisely the locations at which each integer can be found in Figure 7, where we number the rows and columns so that the integer zero appears at coordinates (0, 0).

With a little modular arithmetic it can be shown that every integer appears once and only once in our lattice, as expressed formally by the following result. The proof is elementary, and we do not repeat it here.

Lemma 4.3. Lattice coordinates are always well-defined, that is, equation (1) has a unique solution for every integer z. Moreover, every pair of integers (r, c) with 0 ≤ r < β − α forms the lattice coordinates of one and only one integer.

It is worth reiterating a key feature of this construction, which is that each bit of the bitstream gives rise to a local movement within the lattice:

Lemma 4.4. Consider some position i within the bitstream, where 0 ≤ i < n. Suppose that the lattice coordinates of the distance d_i are (r, c).
Then:

• If the (i+1)th bit is a one, the lattice coordinates of the subsequent distance d_{i+1} are (r, c + 1). That is, we take one step to the right.

• If the (i+1)th bit is a zero and r < β − α − 1, then the lattice coordinates of d_{i+1} are (r + 1, c). That is, we take one step down.

• If the (i+1)th bit is a zero and r = β − α − 1 (i.e., we are on the bottom row of the lattice), then the lattice coordinates of d_{i+1} are (0, c − α). That is, we wrap back around to the top with a shift of α columns to the left.

This is a straightforward consequence of Definitions 3.3 and 4.2, and again we omit the proof. The various movements described in this result are indicated by the solid lines in Figure 7.

Recall that our overall strategy is to build a replacement data structure for the generic key ↦ value map, whose keys are distances d_i and whose values are the corresponding positions i in the bitstream. Using Lemma 4.3 we can replace each distance d_i with its lattice coordinates (r, c), thereby replacing the old mapping d_i ↦ i with the new mapping (r, c) ↦ i. This effectively gives us a matrix with β − α rows and infinitely many columns, which we formalise as follows.

Definition 4.5 (Mapping Matrix). We define the mapping matrix to be an infinite matrix with precisely β − α rows (numbered 0, …, β − α − 1) and infinitely many columns in both directions (numbered …, −1, 0, 1, …). Each cell of this matrix may contain an integer, or may contain the symbol ∅ representing an empty cell. The entry in row r and column c of the mapping matrix M is denoted M[r, c].

Our algorithm now runs as follows. As we process each bit of the bitstream, we walk through the cells of the mapping matrix as described by Lemma 4.4. If we step into an empty cell, we store the current position in the bitstream.
If we step into a previously-occupied cell then we have found a substring of density θ. The full pseudocode is given in Figure 9, under the algorithm name DistMatrix. The algorithm is of course remarkably similar to DistMap (Figure 5), since the key difference is in the underlying data structure. Our focus in Section 4.2 is now to fully describe this data structure, and thereby describe the critical tasks of evaluating and setting the matrix entry M[r, c].

    procedure DistMatrix(x_1, …, x_n, θ = α/β)
        (a, b) ← (0, 0)                          ⊲ Best start/end found so far
        (r, c) ← (0, 0)                          ⊲ Current location in the matrix
        Initialise the empty mapping matrix M
        Insert M[0, 0] ← 0                       ⊲ Record the starting point d_0 = 0
        for i ← 1 to n do
            if x_i = 1 then
                c ← c + 1                        ⊲ Step right
            else if r < β − α − 1 then
                r ← r + 1                        ⊲ Step down
            else
                (r, c) ← (0, c − α)              ⊲ Step down and wrap around
            if M[r, c] = ∅ then                  ⊲ Have we been here before?
                Insert M[r, c] ← i               ⊲ No, this is the first time
            else if i − M[r, c] > b − a + 1 then ⊲ Yes, back at position M[r, c]
                (a, b) ← (M[r, c] + 1, i)        ⊲ Longest substring found so far
        Output (a, b)

Figure 9: The DistMatrix algorithm for the fixed density problem

4.2 The Data Structure

We cannot afford to store the mapping matrix as a two-dimensional array, because, even ignoring the infinitely many columns, there are O(n²) potential cells that a bitstream of length n might reach.² However, only n + 1 cells are visited (and hence non-empty) for any particular input bitstream. That is, the mapping matrix is sparse. We therefore aim for a linked structure, where only the cells we visit are stored in memory, and where these cells include pointers to nearby cells to assist with navigation around the matrix.
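Before turning to the linked structure, the walk of Figure 9 can be exercised with an ordinary hash map standing in for the mapping matrix. The sketch below is only an illustration under stated assumptions: it swaps in a Python `dict` keyed on (r, c), which gives expected rather than worst-case constant time per step and is therefore not the data structure developed in this section. The names `lattice_coordinates` and `dist_matrix` are hypothetical, the brute-force search over r is chosen for clarity rather than speed, and the function returns `None` instead of the (0, 0) placeholder when no substring of density θ exists.

```python
from math import gcd


def lattice_coordinates(z, alpha, beta):
    """Solve (beta - alpha) * c - alpha * r = z with 0 <= r < beta - alpha.

    Brute force over the beta - alpha possible rows (illustrative only).
    """
    rows = beta - alpha
    for r in range(rows):
        if (z + alpha * r) % rows == 0:
            return r, (z + alpha * r) // rows
    raise ValueError("no solution: requires gcd(beta - alpha, alpha) == 1")


def dist_matrix(bits, alpha, beta):
    """Longest substring of density exactly alpha/beta as a 1-based (a, b), or None.

    Assumption: a dict keyed on (r, c) replaces the linked mapping matrix.
    """
    assert 0 < alpha < beta and gcd(alpha, beta) == 1
    r, c = 0, 0
    first_seen = {(0, 0): 0}            # M[0, 0] <- 0 records d_0 = 0
    best = None
    for i, bit in enumerate(bits, start=1):
        if bit == 1:
            c += 1                      # step right
        elif r < beta - alpha - 1:
            r += 1                      # step down
        else:
            r, c = 0, c - alpha         # step down and wrap around
        start = first_seen.setdefault((r, c), i)
        if start < i and (best is None or i - start > best[1] - best[0] + 1):
            best = (start + 1, i)       # longest substring found so far
    return best
```

For instance, `dist_matrix([1, 0, 0, 1, 1, 0, 1, 0, 0, 1, 0, 1, 1], 3, 5)` walks a lattice with two rows, and `lattice_coordinates(1, 5, 8)` reproduces the entry for z = 1 in the table of Section 4.1.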
² This of course depends upon the value of θ. If θ = 1/2 for instance, then there are only 2n + 1 potential cells and a more direct linear algorithm becomes possible. Here we treat the general case 0 < α < β ≤ n.

However, before describing this linked structure we introduce a form of compression, where we only need to store the cells involved in downward steps. As we will see in Section 4.3, this compression is critical for stepping through the matrix in amortised constant time.

Our compression relies on the observation that a run of k consecutive steps to the right produces a sequence of k + 1 consecutive values in the matrix:

    i    i + 1    ···    i + k

We can describe such a sequence by storing only the start and end points, without having to store each individual cell in between. This pattern becomes more complicated when new paths through the matrix cross over old paths, but the core idea remains the same: we look for horizontal runs of consecutive values in the matrix, and record only where they start and end.

Figure 10 gives an example, where four different paths from four different sections of the bitstream cross through the same row of the matrix.

(a) Several paths that cross through a single matrix row (row 8). Entering the row: A (pos 10), B (pos 30), C (pos 50), D (pos 70). Leaving the row: A (pos 12), B (pos 33), C (pos 50), D (pos 84).

(b) The corresponding values in the mapping matrix

    Column:   −5   −4   −3   −2   −1    0    1    2    3    4    5    6    7    8    9
    Row 8:    70   71   72   30   31   32   10   11   12   79   80   81   50   83   84

(c) Storing these values in memory

    Cell       Value in this cell    Value to start this run
    (8, −5)    70                    70
    (8, −2)    30                    30
    (8, 1)     10                    10
    (8, 3)     12                    78
    (8, 7)     50                    82
    (8, 9)     84                    ∅

Figure 10: Compressing a row of the mapping matrix

• Figure 10(a) shows the four paths, which are labelled A, B, C and D in chronological order as they appear in the bitstream. For instance, path A enters the row at cell (8, 1) and position 10 in the bitstream, takes two steps to the right, and exits the row from cell (8, 3) at position 12 in the bitstream. Note that path B subsequently exits from the same cell that A entered, and that path C includes no rightward steps at all.

• Figure 10(b) shows the state of the mapping matrix after all four paths have been followed. Note that values from older paths take precedence over values from newer paths, since we always record the first position at which we enter each cell. Vertical arrows are included as reminders of the cells at which paths enter and exit the row.

• Figure 10(c) shows how this state can be “compressed” in memory. We only store cells at which paths enter and exit the row, and for each such cell (r, c) we record the following information:

  – The value stored directly in that cell, i.e., M[r, c];

  – The value that “begins” the horizontal run to the right, i.e., M[r, c + 1] − 1.

  If the cell (r, c) is itself part of the run (such as (8, −5), (8, −2) and (8, 1) in our example) then both values will be equal. If the cell (r, c) is the exit for an older path (such as (8, 3) or (8, 7) in our example) then these values will be different. If there is no run to the right (as with (8, 9) in our example) then we store the symbol ∅.

We collate this information into a full linked data structure as described below in Data Structure 4.6.
A detailed example of this linked structure is illustrated in Figure 11.

Figure 11: An illustration of the full linked data structure. (The figure shows rows r, r + 1 and r + 2 with columns running from −∞ to +∞, and marks the entry/exit cells, horizontal links, vertical links and secondary links.)

Data Structure 4.6 (Mapping Matrix). Suppose we have processed the first k bits of our bitstream. To store the current state of the mapping matrix, we keep records in memory for the following cells:

• The entry and exit cells in each row, i.e., cells that correspond to positions immediately before or after a zero bit;

• The two cells corresponding to the beginning of the bitstream and our current position;

• “Sentinel” cells (r, −∞) and (r, +∞) in each row.

The record for each such cell (r, c) contains the following information:

• The column c;

• The values M[r, c] and M[r, c + 1] − 1 as described above, where for the sentinels (r, ±∞) these values are ∅;

• Links to the previous and next cells in the same row (called horizontal links).

In addition, if we have previously stepped down from this cell then we also store:

• A link to the endpoint of this step in the following row (called a vertical link), where this endpoint is (r + 1, c) or (0, c − α) according to whether or not r < β − α − 1;

• A link that jumps to the next vertical link in this row, that is, a link to the nearest cell to the right that also stores a vertical link (we call this new link a secondary link).

We also insert vertical links between the sentinels at (r, ±∞), running from each row to the next, and join these into the chains of secondary links for each row.
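To make the two stored values concrete, the following sketch encodes the records of Figure 10(c) and reads individual matrix entries back out of them. It is a minimal illustration rather than the structure itself: `Record`, `ROW_8` and `lookup` are hypothetical names, a sorted Python list stands in for the horizontal linked list, and the vertical and secondary links are left out. It also assumes that every cell lying strictly between two stored records belongs to the run that begins at the left-hand record (or is empty when that record stores ∅).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    col: int                    # column c of the stored cell
    value: int                  # M[r, c]
    run_start: Optional[int]    # M[r, c + 1] - 1, or None for the empty symbol


# Row 8 of Figure 10(c), in column order.
ROW_8 = [
    Record(-5, 70, 70),
    Record(-2, 30, 30),
    Record(1, 10, 10),
    Record(3, 12, 78),
    Record(7, 50, 82),
    Record(9, 84, None),
]


def lookup(records, c):
    """Reconstruct M[r, c] from one row's records; None means an empty cell."""
    prev = None
    for rec in records:
        if rec.col == c:
            return rec.value            # the cell is stored explicitly
        if rec.col > c:
            break
        prev = rec                      # nearest stored cell to the left so far
    if prev is None or prev.run_start is None:
        return None                     # no run covers column c
    return prev.run_start + (c - prev.col)


expanded = [lookup(ROW_8, c) for c in range(-5, 10)]
```

Here `expanded` holds the reconstructed entries for columns −5 through 9, which can be compared against row 8 of Figure 10(b).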
To summarise: (i) the “interesting” cells in each row are stored in a horizontal doubly-linked list, (ii) we add vertical links corresponding to previous steps down, and (iii) we chain together the vertical links from each row into a secondary linked list.

We return now to fill in the missing parts of the DistMatrix algorithm (Figure 9), namely the evaluation and setting of the matrix entry M[r, c]. This can be done as follows.

(i) At all times we keep a pointer to the current cell in the matrix (which, according to Data Structure 4.6, always has a record explicitly stored).

(ii) Each time we step right or down, we adjust the data structure to reflect the new bit that has been processed, and we move our pointer to reflect the new current cell.

(iii) Evaluating and setting M[r, c] then becomes a simple matter of dereferencing our pointer.

The only step that might not run in constant time is (ii), where we adjust the data structure and move our pointer. The precise work involved varies according to which type of step we take.

• Step right (processing a one bit): This is a local operation involving no vertical or secondary links. We might need to extend the endpoint of the current horizontal run or start a new run from the current cell, but these are all simple constant time adjustments involving only the immediate left and right horizontal neighbours.

• Step down (processing a zero bit): This is a more complex operation that uses all three link types. Suppose that we begin the step in cell (r, c); for convenience we assume that we step down to (r + 1, c), but the wraparound case r = β − α − 1 is much the same. If there is already a vertical link (r, c) → (r + 1, c) then we simply follow it.
Otherwise we do the following:

  (1) Find where the destination cell (r + 1, c) should be inserted in the horizontal list for row r + 1 (or find the cell itself if it is already explicitly stored). We do this by:

      – walking back along row r until we find the nearest vertical link to the left, which we denote L⁻;

      – following the secondary link from L⁻ to the nearest vertical link to the right, which we denote L⁺;

      – following the link L⁺ down to row r + 1;

      – walking back along row r + 1 until we find our insertion point.

      This series of movements is illustrated in Figure 12(b). Note that our sentinels at (r, ±∞) ensure that the vertical links L⁻ and L⁺ will always exist.

  (2) If required, insert the cell (r + 1, c) into the horizontal list for row r + 1 and update its immediate horizontal neighbours.

  (3) Insert the new vertical link (r, c) → (r + 1, c), which we denote L⁰.

  (4) Replace the secondary link L⁻ → L⁺ with two secondary links L⁻ → L⁰ → L⁺, as illustrated in Figure 12(c).

Figure 12: Stepping down from (r, c) to (r + 1, c). Panels: (a) The neighbourhood of the source cell (r, c); (b) The path from (r, c) to (r + 1, c); (c) The new vertical and secondary links.

Operations (2), (3) and (4) are all constant time operations, but operation (1) may involve a lengthy walk through the data structure. The reason for the convoluted path (and indeed the secondary links) is that by walking backwards along each row we can ensure that operation (1) runs in amortised constant time, as shown in the following section.
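The link manipulation in operations (1) to (4) can be sketched as follows. This is a simplified illustration under several assumptions, not the full structure: the `Cell` record keeps only its coordinates and links (the stored values and the run compression of Data Structure 4.6 are omitted), the names `Cell`, `make_rows`, `insert_after` and `step_down` are hypothetical, and the sentinels of the bottom row are linked back to row 0 so that the wraparound case follows the same code path with the destination column shifted by α.

```python
from __future__ import annotations

import math
from dataclasses import dataclass
from typing import Optional


@dataclass(eq=False, repr=False)
class Cell:
    row: int
    col: float                          # float so sentinels can sit at -inf / +inf
    left: Optional[Cell] = None         # horizontal links
    right: Optional[Cell] = None
    down: Optional[Cell] = None         # vertical link (set after stepping down from here)
    next_down: Optional[Cell] = None    # secondary link: next cell to the right with a vertical link


def make_rows(num_rows):
    """Create the sentinels (r, -inf) and (r, +inf) for every row and link them up."""
    lo = [Cell(r, -math.inf) for r in range(num_rows)]
    hi = [Cell(r, math.inf) for r in range(num_rows)]
    for r in range(num_rows):
        lo[r].right, hi[r].left = hi[r], lo[r]
        lo[r].next_down = hi[r]
        below = (r + 1) % num_rows      # the bottom row wraps around to row 0
        lo[r].down, hi[r].down = lo[below], hi[below]
    return lo, hi


def insert_after(cell, new):
    """Splice `new` into a horizontal list immediately to the right of `cell`."""
    new.left, new.right = cell, cell.right
    cell.right.left = new
    cell.right = new


def step_down(cur, num_rows, alpha):
    """Return the cell reached by stepping down from `cur`, adding links as needed."""
    if cur.down is not None:
        return cur.down                 # an existing vertical link: just follow it
    wrap = cur.row == num_rows - 1
    dest_row = 0 if wrap else cur.row + 1
    dest_col = cur.col - alpha if wrap else cur.col
    # (1) Walk left to L-, jump to L+, drop one row, then walk left again.
    l_minus = cur.left
    while l_minus.down is None:
        l_minus = l_minus.left
    l_plus = l_minus.next_down
    spot = l_plus.down
    while spot.col > dest_col:
        spot = spot.left
    # (2) Insert the destination cell unless it is already stored.
    if spot.col == dest_col:
        dest = spot
    else:
        dest = Cell(dest_row, dest_col)
        insert_after(spot, dest)
    # (3) Add the new vertical link L0.
    cur.down = dest
    # (4) Replace L- -> L+ with L- -> L0 -> L+ in the secondary chain.
    l_minus.next_down = cur
    cur.next_down = l_plus
    return dest
```

A caller would create the sentinels with `make_rows(beta - alpha)`, splice the starting cell `Cell(0, 0)` into row 0 with `insert_after`, and then call `step_down(cell, beta - alpha, alpha)` for each zero bit; handling of one bits and of the stored values is deliberately left out of this sketch.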
4.3 Analysis of Running Time

Through the discussions of the previous section, we find that, with the single exception of the walk from (r, c) to (r + 1, c) when we step down in the mapping matrix, each bit of the bitstream can be processed in constant time. The following lemma shows that these exceptional walks can be processed in amortised constant time, giving DistMatrix an overall running time of O(n).

As in the previous section, we assume that we step down from (r, c) to (r + 1, c); the arguments for the wraparound case r = β − α − 1 are essentially the same. It is also important to remember that the phrases step down and step right refer to the full movement when processing some bit of the bitstream, and not the many different links that we might follow through the data structure in performing such a step.

Lemma 4.7. Consider the walk from cell (r, c) to (r + 1, c) in the “step down” phase of the DistMatrix algorithm, as illustrated in Figure 12(b), and define the length of this walk to be the total number of links that we follow. After processing the entire bitstream, the sum of the lengths of all “step down” walks is O(n). In other words, each such walk can be followed in amortised constant time.

Proof. We prove this result using aggregate analysis, by “counting” the number of links in each walk using a rough upper bound. The following links are excluded from this count:

• all vertical and secondary links;

• the leftmost horizontal link on each row of each walk;

• any horizontal links that end at the starting point (0, 0);

• any horizontal links that end at the current cell (r, c).

Figure 13 shows a sample walk where the excluded links are marked with dotted arrows, and the remaining links (all horizontal) are marked with bold solid arrows.
It is clear that we exclude O(n) links in total,³ and so if we can show that only O(n) horizontal links remain then the proof is complete.

³ A horizontal link ending at (0, 0) can occur at most twice per walk (and at most once if β − α > 1). A horizontal link ending at (r, c) can occur at most once per walk, and only in the special case β − α = 1.

Figure 13: Excluded links in a “step down” walk

Within each walk from (r, c) to (r + 1, c), the horizontal links that remain have the following critical properties:

• The endpoint of each link in row r is also the endpoint of some earlier step down. Moreover, this earlier step down was followed immediately by a succession of steps right that reached at least as far along the row as (r, c).

• The endpoint of each link in row r + 1 is also the beginning of some earlier step down. Moreover, this earlier step down was preceded immediately by a succession of steps right that originated at least as far back along the row as (r + 1, c).

Figure 14: Earlier successions of steps associated with the remaining links

These properties are a consequence of our compression (recall that each non-sentinel cell that we store is either (0, 0), the current cell, a row entry or a row exit), as well as the fact that there are no vertical links between L⁻ and L⁺ that join row r with row r + 1. Figure 14 illustrates the successions of rightward steps that are described above.

We can now associate each remaining link ℓ with a position π(ℓ) in the bitstream:

• If the link ℓ is on the “upper” row r, consider the oldest sequence of steps that stepped down to the endpoint of ℓ and then right all the way across to (r, c), as illustrated in Figure 15(a).
  We define π(ℓ) to be the position in the bitstream that was reached by this sequence when it passed through the cell (r, c). Note that 0 < π(ℓ) ≤ n.

• If the link ℓ is on the “lower” row r + 1, consider the oldest sequence of steps that stepped right from (r + 1, c) all the way across to the endpoint of ℓ and then down, as illustrated in Figure 15(b). We define π(ℓ) to be the position in the bitstream that was reached by this sequence when it passed through the cell (r + 1, c), negated so that −n ≤ π(ℓ) < 0.

Figure 15: The earlier sequence of steps that defines π(ℓ). Panels: (a) If ℓ is on the upper row; (b) If ℓ is on the lower row.

The key to achieving an O(n) total of walk lengths is to observe that the function π is one-to-one:

• A link ℓ₁ on the upper row of some walk can never have the same value of π as a link ℓ₂ on the lower row of some (possibly different) walk, since π(ℓ₂) < 0 < π(ℓ₁).

• Within a single walk:

  – The values π(ℓ) for links ℓ on the upper row r are distinct, because each corresponds to a different historical path through (r, c), with a different initial entry point into row r.

  – Likewise, the values π(ℓ) for links ℓ on the lower row r + 1 are distinct, because each corresponds to a different historical path along row r + 1 with a different final exit point from row r + 1.

• Between different walks:

  – Because we insert a new vertical link after every walk, each walk must have a distinct starting point (r, c). The values π(ℓ) from the upper rows of different walks are therefore distinct because they correspond to positions in the bitstream for distinct cells (r, c).
  – Likewise, the values π(ℓ) from the lower rows of different walks are distinct because they correspond to positions in the bitstream for distinct cells (r + 1, c).

Therefore π is a one-to-one function. Because π(ℓ) ∈ {−n, −n + 1, …, n − 1, n}, it follows that the number of links ℓ in the domain of the function can be at most 2n + 1. Hence there are O(n) horizontal links remaining that we have not excluded from our count, and the proof is complete.

Through Lemma 4.7 we now find that each bit of the bitstream can be completely processed in amortised constant time, yielding the following final result:

Corollary 4.8. The algorithm DistMatrix solves the fixed density problem in O(n) time in the worst case.

5 Solving the Bounded Density Problem

We finish our suite of algorithms with a linear time solution to the bounded density problem, improving upon the log-linear DistSort algorithm of Section 3. Unlike our linear time solution to the fixed density problem, this algorithm is simple to express, uses no sophisticated data structures, and essentially involves just a handful of linear scans.

Once again we base our new algorithm on the distance sequence d_0, …, d_n. Recall from Lemma 3.4 that we seek the longest substring x_a, …, x_b in the bitstream for which d_{a−1} ≤ d_b. We begin with the following simple observation:

Lemma 5.1. Suppose that x_a, …, x_b is the longest substring of density ≥ θ in our bitstream. Then there is no i < a − 1 for which d_i ≤ d_{a−1}, and there is no i > b for which d_i ≥ d_b.

The proof is simple: if there were such an i, then we could extend our substring to position i and obtain a longer substring with density ≥ θ. This result motivates the following definition:

Definition 5.2 (Minimal and Maximal Position). Let k be a position in the bitstream, i.e., some integer in the range 0 ≤ k ≤ n.
We call k a minimal position if there is no i < k for which d_i ≤ d_k, and we call k a maximal position if there is no i > k for which d_i ≥ d_k.

Figure 16 plots the distance sequence for the bitstream 1001101001011 with target density θ = α/β = 3/5, and marks the minimal and maximal positions on this plot. Minimal and maximal positions have the following important properties:

• They are the only positions that we need to consider. That is, the solution to the bounded density problem must be a substring x_a, …, x_b for which a − 1 is a minimal position and b is a maximal position (Lemma 5.1).

• They are simple to compute in O(n) time. To find all minimal positions, we simply walk through the distance sequence d_0, …, d_n and collect positions i for which d_i is smaller than any distance seen before. To find all maximal positions, we walk through the distance sequence in reverse (d_n, …, d_0) and collect positions i for which d_i is larger than any distance seen before.

• They are ordered by distance. That is, if the minimal positions are a_1, a_2, …, a_p from left to right (a_1 < a_2 < … < a_p) then we have d_{a_1} > d_{a_2} > … > d_{a_p}. Likewise, if the maximal positions are b_1, b_2, …, b_q from left to right (b_1 < b_2 < … < b_q) then we have d_{b_1} > d_{b_2} > … > d_{b_q}. This is an immediate consequence of Definition 5.2.

Figure 16: Minimal and maximal positions for the bitstream 1001101001011. (The figure plots the distance d_i against the position i and marks the minimal and maximal positions.)

Our algorithm then runs as follows:

1. We compute the distance sequence in O(n) time, by incrementally adding +(β − α) or −α as seen in DistMap and DistSort.

2. We compute the minimal positions a_1, a_2, …, a_p and the maximal positions b_1, b_2, …, b_q in O(n) time as described above.

3. For each minimal position a_i, we find the largest maximal position b_j for which d_{a_i} ≤ d_{b_j}. This gives the substring x_{a_i+1}, …, x_{b_j} of density ≥ θ and length b_j − a_i, and we compare this with the longest such substring found so far.

The key observation is that, because minimal and maximal positions are ordered by distance, step 3 can also be performed in O(n) time. Specifically, if the minimal position a_i is matched with the maximal position b_j, then the next minimal position a_{i+1} will be matched with an equal or later maximal position, i.e., one of b_j, b_{j+1}, …, b_q. We can therefore keep a pointer into the sequence of maximal positions and slowly move it forward as we process each of a_1, …, a_p, giving step 3 an O(n) running time in total.

We name this algorithm PositionSweep; see Figure 17 for the pseudocode. Through the discussion above we obtain the following final result:

Lemma 5.3. The algorithm PositionSweep solves the bounded density problem in O(n) time in the worst case.

    procedure PositionSweep(x_1, …, x_n, θ = α/β)
        d_0 ← 0                                      ⊲ Compute the distance sequence
        for i ← 1 to n do
            if x_i = 1 then
                d_i ← d_{i−1} + (β − α)
            else
                d_i ← d_{i−1} − α
        p ← 1 ; a_1 ← 0                              ⊲ Compute minimal positions
        for i ← 1 to n do
            if d_i < d_{a_p} then
                p ← p + 1 ; a_p ← i
        q ← 1 ; b_1 ← n                              ⊲ Compute maximal positions
        for i ← n − 1 down to 0 do
            if d_i > d_{b_q} then
                q ← q + 1 ; b_q ← i
        Reverse b_1, …, b_q                          ⊲ Reorder so that b_1 < … < b_q
        (a, b) ← (0, 0)                              ⊲ Best start/end found so far
        j ← 1
        for i ← 1 to p do                            ⊲ Run through minimal positions
            while j < q and d_{a_i} ≤ d_{b_{j+1}} do ⊲ Find best maximal position
                j ← j + 1
            if b_j − a_i > b − a + 1 then
                (a, b) ← (a_i + 1, b_j)              ⊲ Longest substring found so far
        Output (a, b)

Figure 17: The PositionSweep algorithm for the bounded density problem

6 Measuring Performance

We finish this paper with a practical field test of the different algorithms for the fixed density problem.⁴ In particular, because the linear DistMatrix algorithm involves a complex data structure with potentially significant overhead, it is useful to compare its practical performance against the log-linear but much simpler algorithms DistMap and DistSort.

⁴ We omit the bounded density problem from this field test because the linear algorithm PositionSweep is simple and slick, with neither the complexity nor the potential overhead of DistMatrix.

The tests are designed as follows:

• We use bitstreams of length n = 10⁸ for all tests. This value of n was chosen to be large but manageable. We keep n fixed merely to simplify the data presentation; additional data has been collected for several smaller values of n, and the results show similar characteristics to those described here.

• All bitstreams are pseudo-random.⁵ This is of particular benefit to the SkipMisMatch algorithm, whose expected running time of O(n log n) in a random scenario is significantly better than its worst case time of O(n²).

• We run tests with several different values of the target density θ. This includes values close to and far away from 1/2, as well as values with small and large denominators; our aim is to identify to what degree the performance of different algorithms depends upon θ. The values of θ that we use are 1/2, 1/3, 2/5, 1/5, 50/101, 31/101 and 1/101.

• Each test involves the same 200 pre-generated bitstreams of length n = 10⁸.
  For each algorithm and each value of $\theta$ we measure the mean running time over all 200 bitstreams.

All running times are measured as user + system time, running on a single 3 GHz Intel Core 2 CPU with 4 GB of RAM. All algorithms are coded in C++ under GNU/Linux.

⁵ Bitstreams were generated using the rand() function from the Linux C Library.

The results are plotted in Figure 18; note that the time axis uses a log scale, with each horizontal line representing a factor of approximately $\times 3$. Error bars are not included because most standard errors are within $\pm 1\%$; the only exceptions are for $\theta = \frac{1}{2}$, where DistMap has a standard error of $\pm 1.6\%$ and SkipMisMatch has a standard error of $\pm 10\%$. The values of $\theta$ are ordered by distance from $\frac{1}{2}$.

Figure 18: Running times of different algorithms for the fixed density problem (comparison of running times for $n = 100{,}000{,}000$; horizontal axis: $\theta$; vertical axis: mean running time in seconds, log scale; series: SkipMisMatch, DistMap, DistSort, DistMatrix)

Happily, the results are what we hope for. The log-linear algorithms DistMap and DistSort perform significantly better than SkipMisMatch in most cases, and the linear algorithm DistMatrix consistently outperforms all of the others.

The dependency of SkipMisMatch upon $\theta$ is evident: performance is best when both $|\theta - \frac{1}{2}|$ and the denominator $\beta$ are large (as expected from Lemma 2.3), bringing it close to the 4 second running time of DistMatrix for the extreme case $\theta = \frac{1}{101}$. At the other extreme, for $\theta = \frac{1}{2}$ the SkipMisMatch algorithm runs orders of magnitude slower, with a mean running time of over an hour and some individual cases taking up to 10½ hours.

Amongst the log-linear algorithms⁶, we find that DistSort performs noticeably better than DistMap.
Part of the reason is the memory overhead due to the map structure: it was found that DistMap often exceeded the available memory on the machine, burdening it with a reliance on virtual memory (which of course is much slower). The linear DistMatrix algorithm also suffers from memory problems to a lesser extent, but Figure 18 shows that the effectiveness of the algorithm more than compensates for this. Figure 19 plots the peak memory usage for each algorithm, again averaged over all 200 bitstreams.

⁶ For DistMap and DistSort, the map and sort are implemented using std::map and std::sort from the C++ Standard Library, as implemented by the GNU C++ compiler version 4.3.2.

An interesting feature of the running times is that DistMap depends upon $\theta$ in an opposite manner to SkipMisMatch. This is because when $\theta \simeq \frac{1}{2}$ or the denominator $\beta$ is small, there are fewer distinct distances amongst $d_0, \ldots, d_n$, and hence fewer elements stored in the map.

Figure 19: Peak memory usage of different algorithms for the fixed density problem (peak memory usage for $n = 100{,}000{,}000$; horizontal axis: $\theta$; vertical axis: mean peak memory usage in GB; series: SkipMisMatch, DistMap, DistSort, DistMatrix)

In conclusion, it is pleasing to note how consistently DistMatrix performs across all of the tested values of $\theta$, with mean running times ranging from 3.2 seconds to 4.2 seconds and standard errors of just 0.1%. The experiments therefore suggest that the added complexity and overhead of DistMatrix are well justified by the efficiency of the algorithm and its underlying data structure.

Acknowledgements

The author is supported by the Australian Research Council's Discovery Projects funding scheme (project DP1094516). He is grateful to Serdar Boztaş, Mathias Hiron and Casey Pfluger for fruitful discussions relating to this work.

Benjamin A. Burton
School of Mathematics and Physics, The University of Queensland
Brisbane QLD 4072, Australia
(bab@debian.org)
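As a supplementary illustration of the PositionSweep algorithm (Section 5, Figure 17), the following C++ sketch walks through its three steps: building the distance sequence, collecting minimal and maximal positions, and sweeping a forward-only pointer over the maximal positions. It is a minimal sketch under stated assumptions, not the paper's own implementation: the function name `positionSweep`, the `std::vector<int>` input, the 1-based `(start, end)` return value and the `(1, 0)` convention for "no substring found" are all choices made here for illustration. The sketch also stores the maximal positions from left to right before sweeping, so that they match the ordering $b_1 < b_2 < \ldots < b_q$ used in the text.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Hypothetical helper: name, signature and return convention are assumptions.
// Input: bits x[0..n-1] (each 0 or 1) and the target density alpha/beta.
// Output: 1-based inclusive (start, end) of a longest substring whose density
// is >= alpha/beta, or (1, 0) if no non-empty substring qualifies.
std::pair<std::size_t, std::size_t> positionSweep(
        const std::vector<int>& x, std::int64_t alpha, std::int64_t beta) {
    assert(beta > 0 && alpha >= 0 && alpha <= beta);
    const std::size_t n = x.size();

    // Distance sequence: d[0] = 0, then +(beta - alpha) per one, -alpha per zero.
    std::vector<std::int64_t> d(n + 1, 0);
    for (std::size_t i = 1; i <= n; ++i)
        d[i] = d[i - 1] + (x[i - 1] == 1 ? beta - alpha : -alpha);

    // Minimal positions, left to right: strictly smaller than all earlier distances.
    std::vector<std::size_t> minPos{0};
    for (std::size_t i = 1; i <= n; ++i)
        if (d[i] < d[minPos.back()]) minPos.push_back(i);

    // Maximal positions: scan right to left, then reverse into left-to-right order.
    std::vector<std::size_t> maxPos{n};
    for (std::size_t i = n; i-- > 0;)
        if (d[i] > d[maxPos.back()]) maxPos.push_back(i);
    std::reverse(maxPos.begin(), maxPos.end());

    std::size_t bestStart = 1, bestEnd = 0;  // empty substring so far
    std::size_t j = 0;                       // pointer into maxPos; never moves back
    for (std::size_t a : minPos) {
        // Advance to the last maximal position whose distance is still >= d[a].
        while (j + 1 < maxPos.size() && d[a] <= d[maxPos[j + 1]]) ++j;
        const std::size_t b = maxPos[j];
        // Candidate substring is x_{a+1}, ..., x_b, of length b - a.
        if (b > a && b - a > bestEnd + 1 - bestStart) {
            bestStart = a + 1;
            bestEnd = b;
        }
    }
    return {bestStart, bestEnd};
}
```

For the bitstream 1001101001011 of Figure 16 with $\theta = 3/5$ (so `alpha = 3`, `beta = 5`), this sketch returns `(4, 13)`, the substring 1101001011 with six ones in ten bits. Using 64-bit distances is a deliberate choice here: with $n = 10^8$ and denominators such as 101, the distances can exceed the range of a 32-bit integer.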
