Policy Iteration is well suited to optimize PageRank

Whether the Policy Iteration algorithm (PI) for solving Markov Decision Processes (MDPs) has exponential or (strongly) polynomial complexity is a question that has attracted much attention over the last 50 years. Recently, Fearnley proposed an example on which PI needs an exponential number of iterations to converge. However, it has been observed that Fearnley’s example leaves open the possibility that PI behaves well in many particular cases, such as problems that involve a fixed discount factor or that are restricted to deterministic actions. In this paper, we analyze a large class of MDPs and argue that PI is efficient on this class. The problems in this class arise when optimizing the PageRank of a particular node in a Markov chain, and they are motivated by several practical applications. We show that adding natural constraints to this PageRank Optimization problem (PRO) makes it equivalent to the problem of optimizing the length of a stochastic path, which is a widely studied family of MDPs. Finally, we conjecture that PI runs in a polynomial number of iterations when applied to PRO. We give numerical evidence for the conjecture, as well as a proof in a number of particular cases of practical importance.

💡 Research Summary

The paper investigates the computational behavior of the Policy Iteration (PI) algorithm when applied to PageRank Optimization (PRO) problems, a class of Markov Decision Processes (MDPs) that arise when one wishes to maximize (or minimize) the PageRank of a designated node by controlling a subset of edges in a web graph. The authors begin by recalling the definition of PageRank as the stationary visitation frequency of a random surfer performing a uniform random walk on a directed graph, and they describe practical scenarios—such as webmaster link selection, spam detection, and financial network analysis—where optimizing this metric is of interest.
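As an illustration of this definition, the sketch below approximates the stationary visitation frequencies of a uniform random walk by repeated averaging. It is a minimal example and not code from the paper: the function name `stationary_distribution`, the dictionary-based graph encoding, and the three-node graph are all assumptions made for the illustration, and the graph is assumed to be strongly connected with at least one out-link per node.

```python
def stationary_distribution(out_links, iterations=1000):
    """Approximate the stationary distribution of a uniform random walk.

    `out_links` maps each node to the list of nodes it links to.
    Assumptions (not from the paper): every node has at least one
    out-link and the graph is strongly connected.
    """
    nodes = sorted(out_links)
    pi = {u: 1.0 / len(nodes) for u in nodes}
    for _ in range(iterations):
        nxt = {u: 0.0 for u in nodes}
        for u in nodes:
            # The surfer at u moves to each out-neighbour with equal probability.
            share = pi[u] / len(out_links[u])
            for w in out_links[u]:
                nxt[w] += share
        # "Lazy" step: stay put with probability 1/2. This keeps the same
        # stationary distribution but avoids oscillation on periodic graphs.
        pi = {u: 0.5 * pi[u] + 0.5 * nxt[u] for u in nodes}
    return pi


# Hypothetical toy graph: a links to b and c, both of which link back to a.
toy_graph = {"a": ["b", "c"], "b": ["a"], "c": ["a"]}
pagerank = stationary_distribution(toy_graph)
print(pagerank)
```

On this toy graph the walk visits `a` every second step, so its visitation frequency is one half and the other two nodes share the remainder equally.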

A central observation is that maximizing the PageRank of a target node v is equivalent to minimizing the expected time between successive visits to v. By splitting v into a source copy v_s (retaining all outgoing links) and a sink copy v_t (retaining all incoming links and a zero‑cost self‑loop), the problem becomes one of minimizing the expected distance from v_s to the absorbing state v_t. In this formulation each free edge can be either activated or deactivated, and a policy corresponds to a particular subset of activated edges. Consequently, PRO can be cast as a special case of the Stochastic Shortest Path (SSP) problem, where the state space consists of the original graph vertices plus v_s and v_t, the action set consists of the 2^f possible activation patterns (or, more efficiently, one action per free edge), transition probabilities are uniform over outgoing edges, and costs are unit except for the absorbing target.
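The equivalence between PageRank and return time can be checked on a small example. The sketch below computes the expected number of steps between successive visits to a target node for one fixed set of activated edges, treating the target as absorbing on arrival (the role of v_t) and as the starting point on departure (the role of v_s). The function name `expected_return_time`, the graph encoding, and the toy graphs are assumptions for illustration, and the fixed-point sweep is a simple stand-in for solving the underlying linear system exactly.

```python
def expected_return_time(out_links, target, sweeps=10_000, tol=1e-12):
    """Expected number of steps between successive visits to `target`.

    `out_links` maps each node to its out-neighbours under one fixed choice
    of activated edges. Assumption: `target` is reachable from every node.
    """
    # h[u] = expected number of steps to reach the target from u.
    # h[target] stays at 0, playing the role of the sink copy v_t.
    h = {u: 0.0 for u in out_links}
    for _ in range(sweeps):
        delta = 0.0
        for u in out_links:
            if u == target:
                continue
            # One unit-cost step, then the average over uniform out-links.
            new = 1.0 + sum(h[w] for w in out_links[u]) / len(out_links[u])
            delta = max(delta, abs(new - h[u]))
            h[u] = new
        if delta < tol:
            break
    # Leaving the target corresponds to starting from the source copy v_s.
    succ = out_links[target]
    return 1.0 + sum(h[w] for w in succ) / len(succ)


# Hypothetical toy graph with one free edge b -> c, first off and then on.
edge_off = {"a": ["b", "c"], "b": ["a"], "c": ["a"]}
edge_on = {"a": ["b", "c"], "b": ["a", "c"], "c": ["a"]}
print(expected_return_time(edge_off, "a"))
print(expected_return_time(edge_on, "a"))
```

With the free edge off, the walk returns to `a` in exactly two steps, so the PageRank of `a` is 1/2. Activating b -> c diverts some of the flow away from `a` and lengthens the expected return time, which lowers the PageRank of `a`.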

The authors formalize this connection by defining a Generalized PageRank Optimization (GPRO) model that allows arbitrary non‑negative weights on edges, arbitrary non‑negative costs, and “exclusivity constraints” that force exactly one of two free edges leaving a node to be active. They prove two reduction theorems: (1) any SSP instance with n states and m actions can be transformed in polynomial time into a GPRO instance with O(m) vertices and O(m) free edges preserving optimal solutions; (2) conversely, any GPRO instance with n vertices and f free edges can be turned into an SSP with O(n) single‑action states and O(f^2) two‑action states, again preserving optimality. The reductions rely on representing each SSP action as a weighted edge in GPRO and on encoding each free‑edge activation choice as a distinct SSP state with two exclusive actions.

Having established the equivalence, the paper turns to the complexity of PI on PRO. For general MDPs, the best known upper bound on the number of PI iterations is exponential (O(2^m/m)), and Fearnley’s recent construction shows that PI can indeed require an exponential number of steps. However, that construction cannot be embedded into PRO because PRO lacks the “exclusive action” structure that makes the worst‑case examples hard. In deterministic MDPs (DMDPs) the known lower bound is only quadratic, and strongly polynomial algorithms exist when a fixed discount factor is present. The authors conjecture that PRO belongs to this easier subclass: PI should converge in a number of iterations polynomial in the size of the graph and the number of free edges.
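To make the object of the conjecture concrete, here is a minimal sketch of a PI-style loop on a toy PRO instance that maximizes the PageRank of a target by minimizing its expected return time. It is not the authors' implementation. The switching rule used here (activate a free edge u -> w exactly when the current expected hitting time satisfies h(w) + 1 < h(u)) is an assumption derived from the uniform-averaging structure, and all names (`evaluate`, `policy_iteration`, the toy graph) are hypothetical. The sketch also assumes that every node has at least one fixed out-link and that the target is reachable from every node through fixed edges.

```python
def evaluate(fixed, active, target, sweeps=100_000, tol=1e-12):
    """Policy evaluation: expected steps to reach `target` from each node."""
    links = {u: list(fixed[u]) + [w for (s, w) in active if s == u]
             for u in fixed}
    h = {u: 0.0 for u in fixed}  # h[target] stays 0 (sink copy of the target)
    for _ in range(sweeps):
        delta = 0.0
        for u in links:
            if u == target:
                continue
            new = 1.0 + sum(h[w] for w in links[u]) / len(links[u])
            delta = max(delta, abs(new - h[u]))
            h[u] = new
        if delta < tol:
            break
    # Expected return time, i.e. the value seen from the source copy.
    ret = 1.0 + sum(h[w] for w in links[target]) / len(links[target])
    return h, ret


def policy_iteration(fixed, free_edges, target, max_iters=1000, eps=1e-9):
    """Greedy switching over free edges; returns (active set, return time, iterations)."""
    active = set()
    for it in range(1, max_iters + 1):
        h, ret = evaluate(fixed, active, target)
        start = dict(h)
        start[target] = ret  # edges leaving the target start from the source copy
        # Assumed improvement rule: keep exactly the edges that shorten the path.
        improved = {(u, w) for (u, w) in free_edges
                    if h[w] + 1.0 < start[u] - eps}
        if improved == active:
            return active, ret, it
        active = improved
    raise RuntimeError("no convergence within max_iters")


# Hypothetical instance: a fixed 4-cycle a -> b -> c -> d -> a, three free edges.
fixed = {"a": ["b"], "b": ["c"], "c": ["d"], "d": ["a"]}
free_edges = [("b", "a"), ("c", "a"), ("d", "b")]
active, ret, iters = policy_iteration(fixed, free_edges, "a")
print(sorted(active), ret, iters)
```

On this instance the loop activates the two shortcuts into `a`, leaves the detour d -> b off, and stops at its second evaluation because the policy no longer changes. The iteration count returned by such a loop is the quantity that the conjecture bounds polynomially.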

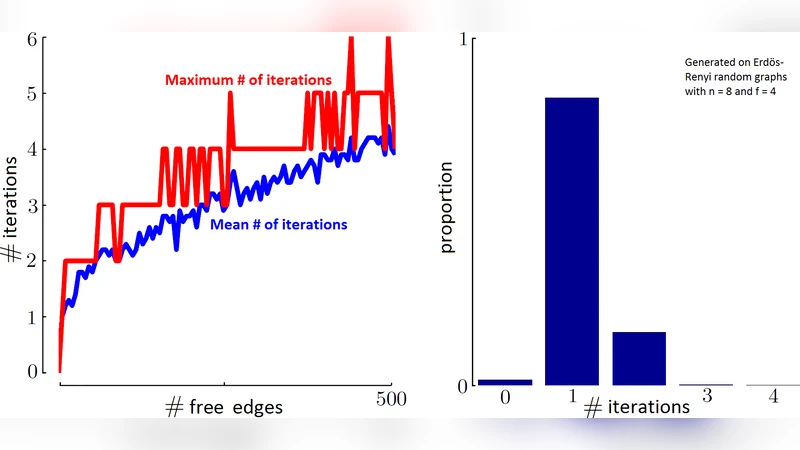

To support the conjecture, they present extensive numerical experiments. Randomly generated web‑graph instances with up to several thousand nodes and dozens of free edges were solved with PI; the observed iteration counts grew roughly linearly with the number of free edges and sublinearly with the total number of nodes. They also examine several analytically tractable special cases: (i) when each node has at most one free outgoing edge, (ii) when the underlying graph is a directed tree, and (iii) when all free edges share a common source. In each case they prove that PI reaches optimality in O(f) or O(n) iterations, confirming the polynomial behavior.

The paper concludes that the structural restrictions inherent to PRO—uniform transition probabilities, unit costs, and the absence of complex exclusive‑action patterns—render the PI algorithm far more efficient than in the general MDP setting. This insight opens the door to practical, scalable algorithms for PageRank manipulation tasks, offering a theoretically grounded alternative to linear‑programming approaches that are often too costly for large‑scale web graphs. Future work is suggested to extend the analysis to other MDP criteria (discounted cost, average cost) and to explore whether similar polynomial‑time guarantees hold for broader classes of MDPs that share PRO’s structural properties.
