Center-based Clustering under Perturbation Stability

Clustering under most popular objective functions is NP-hard, even to approximate well, and so unlikely to be efficiently solvable in the worst case. Recently, Bilu and Linial \cite{Bilu09} suggested an approach aimed at bypassing this computational barrier by using properties of instances one might hope to hold in practice. In particular, they argue that instances in practice should be stable to small perturbations in the metric space and give an efficient algorithm for clustering instances of the Max-Cut problem that are stable to perturbations of size $O(n^{1/2})$. In addition, they conjecture that instances stable to as little as $O(1)$ perturbations should be solvable in polynomial time. In this paper we prove that this conjecture is true for any center-based clustering objective (such as $k$-median, $k$-means, and $k$-center). Specifically, we show we can efficiently find the optimal clustering assuming only stability to factor-3 perturbations of the underlying metric in spaces without Steiner points, and stability to factor $2+\sqrt{3}$ perturbations for general metrics. In particular, we show for such instances that the popular Single-Linkage algorithm combined with dynamic programming will find the optimal clustering. We also present NP-hardness results under a weaker but related condition.

💡 Research Summary

The paper tackles the computational hardness of center‑based clustering problems, such as k‑median, k‑means, and k‑center, by exploiting a structural property that practical instances may plausibly satisfy: perturbation stability. Bilu and Linial, who introduced this framework for Max‑Cut, conjectured that instances whose optimal solution is unchanged under constant‑factor perturbations of the underlying metric can be solved in polynomial time. The authors prove this conjecture for any center‑based clustering objective.

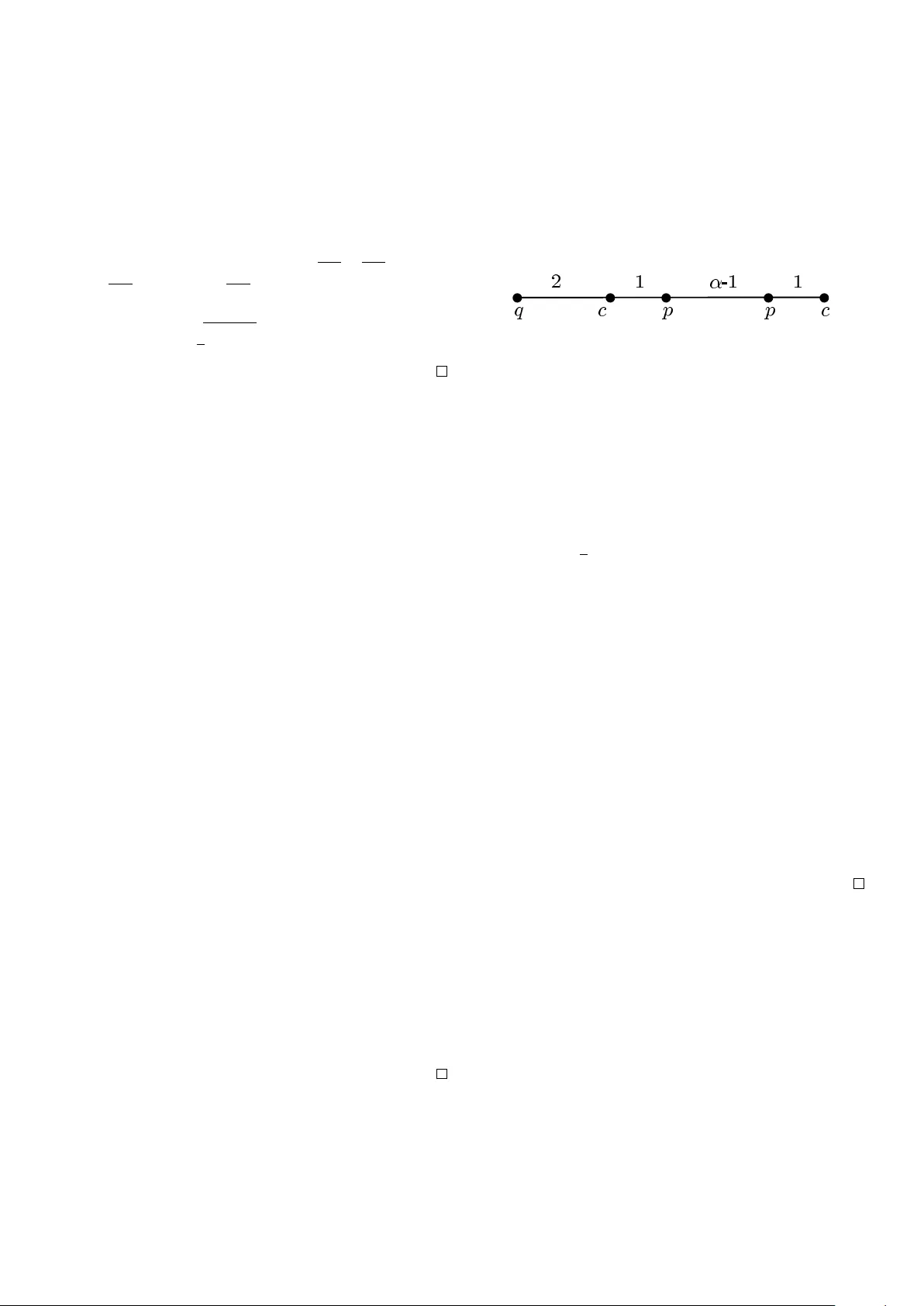

Perturbation stability definition.

Given a metric d on a set V, an instance is α‑stable if no α‑multiplicative perturbation d′ (i.e., d(u,v) ≤ d′(u,v) ≤ α·d(u,v) for all u,v) changes the optimal clustering. The larger α, the stronger the stability assumption, so a guarantee that holds for a smaller α covers more instances.
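The definition can be made concrete with a small experiment. The sketch below is illustrative only: the function names `optimal_partition` and `random_perturbation` are hypothetical, the objective is k‑median with centers restricted to data points, and the optimum is found by brute force, so it is usable only on tiny inputs. Sampling perturbations can refute stability but cannot certify it, since the definition quantifies over all perturbations.

```python
import itertools
import random


def optimal_partition(d, k):
    """Brute-force optimal k-median partition (centers are data points)."""
    n = len(d)
    best_cost, best_parts = float("inf"), None
    for centers in itertools.combinations(range(n), k):
        # Assign every point to its nearest chosen center.
        assign = [min(centers, key=lambda c: d[p][c]) for p in range(n)]
        cost = sum(d[p][assign[p]] for p in range(n))
        if cost < best_cost:
            parts = frozenset(
                frozenset(p for p in range(n) if assign[p] == c) for c in centers
            )
            best_cost, best_parts = cost, parts
    return best_parts


def random_perturbation(d, alpha, rng):
    """Scale each pairwise distance by an independent factor in [1, alpha].

    Assumption of this sketch: the triangle inequality is not enforced
    on the perturbed distances.
    """
    n = len(d)
    dp = [[0.0] * n for _ in range(n)]
    for u in range(n):
        for v in range(u + 1, n):
            dp[u][v] = dp[v][u] = d[u][v] * rng.uniform(1.0, alpha)
    return dp


# Two well-separated groups on a line.
pts = [0, 1, 2, 100, 101, 102]
d = [[abs(a - b) for b in pts] for a in pts]
rng = random.Random(0)
base = optimal_partition(d, 2)
stable_on_samples = all(
    optimal_partition(random_perturbation(d, 3.0, rng), 2) == base
    for _ in range(20)
)
```

On this toy instance the optimal partition is unchanged for every sampled factor‑3 perturbation, which is consistent with (but does not prove) 3‑stability.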

Main results.

- Metrics without Steiner points (centers must be data points). If the instance is 3‑stable, the optimal clustering can be recovered in polynomial time.

- General metrics (centers may lie anywhere). A stability factor of 2 + √3 ≈ 3.732 suffices for the same guarantee.

Algorithmic approach.

The authors combine the classic Single‑Linkage hierarchical clustering (which builds a minimum‑spanning‑tree‑like hierarchy by repeatedly merging the closest pair of clusters) with a dynamic‑programming (DP) procedure that selects exactly k − 1 edges to cut in the resulting tree, yielding k clusters. The DP computes, for each subtree, the minimum cost of forming i clusters, and aggregates these values bottom‑up to obtain the global optimum.
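The two stages can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' formulation: the source describes the DP only at a high level, so the DP state used here (a guessed center for the component that is still open at the subtree root) is one possible realization for k‑median with centers restricted to data points. The names `mst_edges`, `_relax`, and `cluster_by_tree_dp` are hypothetical, and the sketch assumes k is at most the number of points.

```python
def mst_edges(d):
    """Kruskal's algorithm; accepted edges are exactly the Single-Linkage merges."""
    n = len(d)
    parent = list(range(n))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    tree = []
    for _, u, v in sorted((d[u][v], u, v) for u in range(n) for v in range(u + 1, n)):
        ru, rv = find(u), find(v)
        if ru != rv:
            parent[ru] = rv
            tree.append((u, v))
    return tree


def _relax(table, j, cand):
    """Keep the cheaper (cost, cuts) candidate for j pieces."""
    if j not in table or cand[0] < table[j][0]:
        table[j] = cand


def cluster_by_tree_dp(d, k):
    """Cheapest way to delete k-1 spanning-tree edges, scoring each component
    by its k-median cost (center = any data point). Returns (cost, clusters)."""
    n = len(d)
    adj = [[] for _ in range(n)]
    for u, v in mst_edges(d):
        adj[u].append(v)
        adj[v].append(u)

    # Root the tree at node 0; `order` lists every node after its parent.
    par, order, stack = [-1] * n, [], [0]
    while stack:
        u = stack.pop()
        order.append(u)
        for v in adj[u]:
            if v != par[u]:
                par[v] = u
                stack.append(v)

    # f[u][c][j]: subtree(u) split into j pieces, u's piece charged to center c.
    # g[u][j]:    same, but u's piece is closed and uses its best center.
    f, g = [None] * n, [None] * n
    for u in reversed(order):  # children are processed before parents
        f[u] = []
        for c in range(n):
            table = {1: (d[u][c], ())}
            for v in (w for w in adj[u] if par[w] == u):
                new = {}
                for j, (cost, cuts) in table.items():
                    for jv, (cv, cutv) in g[v].items():     # cut edge (u, v)
                        if j + jv <= k:
                            _relax(new, j + jv, (cost + cv, cuts + cutv + ((u, v),)))
                    for jv, (cv, cutv) in f[v][c].items():  # keep edge (u, v)
                        if j + jv - 1 <= k:
                            _relax(new, j + jv - 1, (cost + cv, cuts + cutv))
                table = new
            f[u].append(table)
        g[u] = {j: min((f[u][c][j] for c in range(n)), key=lambda t: t[0])
                for j in f[u][0]}

    cost, cuts = g[0][k]
    cut_set = set(cuts)
    # Each node joins its parent's cluster unless the connecting edge was cut.
    label = {}
    for u in order:
        label[u] = u if u == 0 or (par[u], u) in cut_set else label[par[u]]
    clusters = {}
    for u, r in label.items():
        clusters.setdefault(r, set()).add(u)
    return cost, sorted(sorted(c) for c in clusters.values())


pts = [0, 1, 2, 100, 101, 102]
d = [[abs(a - b) for b in pts] for a in pts]
cost, clusters = cluster_by_tree_dp(d, 2)
```

Guessing a center per open component keeps the DP exact for k‑median: charging a component to an arbitrary center never underestimates its cost, and the minimum over centers recovers the true cost. Other objectives such as k‑means or k‑center would need a different per‑component cost and are not covered by this sketch.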

Why the algorithm works under stability.

Perturbation stability implies a separation property: every point is closer to the center of its own optimal cluster than to any point of a different optimal cluster. Consequently, the Single‑Linkage tree respects the optimal partition: each optimal cluster forms a connected subtree, so the optimal clustering can be obtained by removing k − 1 edges of the tree. The edges to remove are not necessarily the k − 1 longest ones, which is why the DP is needed: it searches over the ways of cutting the tree and returns the cheapest one under the chosen objective. The authors provide rigorous proofs that the separation condition holds for α = 3 (no Steiner points) and α = 2 + √3 (general metrics).
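A short calculation illustrates where a separation of this kind can come from. The sketch assumes the stability condition yields a center‑proximity property with factor 3, namely $d(p, c_j) > 3\,d(p, c_i)$ for every point $p$ in an optimal cluster $C_i$ with center $c_i$ and every other center $c_j$. This assumption and the symbols $p$, $q$, $c_i$, $c_j$ are introduced here for illustration. For $p \in C_i$ and $q \in C_j$ with $i \neq j$, applying the assumption to $q$, then to $p$, together with the triangle inequality gives:

```latex
\begin{align*}
3\,d(q,c_j) &< d(q,c_i) \le d(p,q) + d(p,c_i), \\
3\,d(p,c_i) &< d(p,c_j) \le d(p,q) + d(q,c_j)
             < d(p,q) + \tfrac{1}{3}\bigl(d(p,q) + d(p,c_i)\bigr), \\
\tfrac{8}{3}\,d(p,c_i) &< \tfrac{4}{3}\,d(p,q)
  \quad\Longrightarrow\quad d(p,q) > 2\,d(p,c_i).
\end{align*}
```

Under this assumption each point is strictly closer to its own center than to any point of another cluster, which is enough to keep every optimal cluster connected in the Single‑Linkage tree.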

Hardness under weaker assumptions.

To show that the stability requirement cannot be dramatically relaxed, the paper defines a “(α,β)‑weak stability” notion, in which only a fraction β of the inter‑cluster distances needs to be separated by a factor α. The authors prove that even for modest values of α (close to 1) and small β, the clustering problem remains NP‑hard. This shows that the weaker condition alone does not guarantee tractability, although it leaves open whether smaller stability constants than those above would suffice.

Implications and future directions.

The results give a concrete, theoretically justified algorithm—Single‑Linkage plus DP—that works efficiently on any data set satisfying a modest constant‑factor stability condition. Because Single‑Linkage is already implemented in most data‑analysis libraries, the method is readily deployable. The paper also opens several research avenues: (i) quantifying how real‑world data sets (e.g., image collections, customer segmentation) align with the stability thresholds; (ii) extending the stability framework to other clustering paradigms such as spectral or density‑based methods; (iii) investigating whether tighter stability bounds (e.g., α = 2) can be achieved for specific metric families or under additional assumptions.

In summary, the authors demonstrate that the seemingly intractable center‑based clustering problems become polynomially solvable under a natural and practically plausible perturbation‑stability assumption. They identify stability constants that suffice for both Steiner‑free and general metric spaces, and they prove that a weaker, related condition does not suffice to escape NP‑hardness.
