Steepest Descent Preconditioning for Nonlinear GMRES Optimization

Steepest descent preconditioning is considered for the recently proposed nonlinear generalized minimal residual (N-GMRES) optimization algorithm for unconstrained nonlinear optimization. Two steepest descent preconditioning variants are proposed. The…

Authors: Hans De Sterck

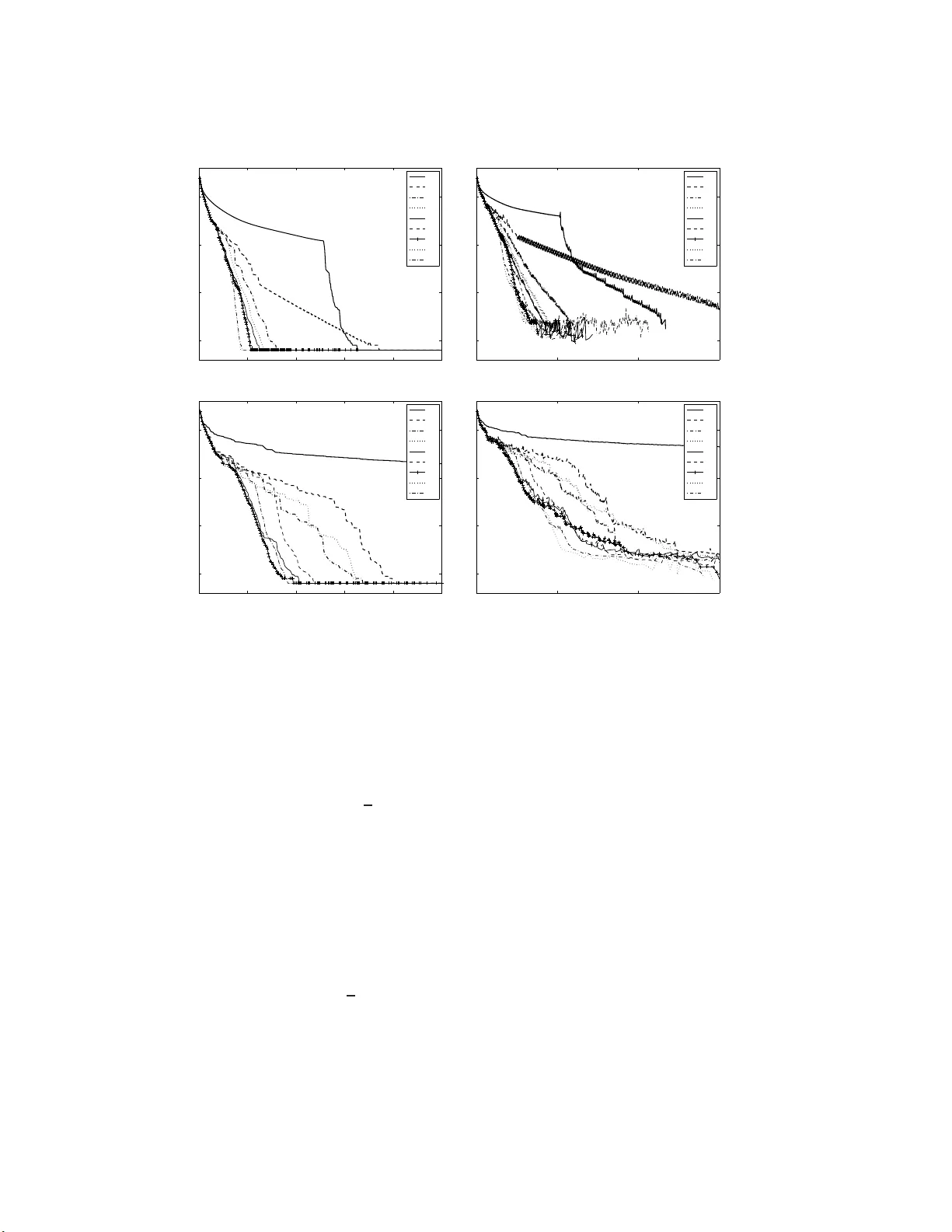

STEEPEST DESCENT PRECONDITIONING F OR NONLINEAR GMRES OPTIMIZA TION H. DE STER CK ∗ § Abstract. Steepest d escen t preconditioning is considered f or the recently prop osed nonlinear generalized minimal residual (N-GMRES) optimization algorithm for unconstrained nonlinear op- timization. Two steep est descent preconditioning v arian ts are prop osed. The first employs a line searc h, while the second emplo ys a predefined small step. A simpl e global conv ergenc e proof is prov ided f o r the N-GMRES optimization al g orithm with the first steep est descen t preconditioner (with line search), under mild standard conditions on the ob jectiv e function and the line search pro- cesses. Steepest descen t preconditioning for N-GM RES optimization is also motiv ated b y relating it to standard non-preconditioned GMRES for linear systems in the case of a standard quadratic optimization pr oblem wi t h symm e tric p o sitive definite operator. Numerical tests on a v ariety of model problems show that the N-GMRES optimization algorithm is able to very significan tly accel- erate con ve rgence of stand-alone steep est descen t optimization. Moreov er, p erformance of steepest- descen t preconditioned N-GMRES is shown to b e comp etitive with standard nonlinear conjugate gradien t and limited-memory Bro yden-Fletc her-Goldfarb-Shanno metho ds for the model problems considered. These results serve to theoretically and numerically establish steepest-descent precondi- tioned N-GMRES as a general optimization metho d for unconstrained nonlinear optimization, with perf ormanc e that appears promising compared to established tec hniques. In addition, it is argued that the real p ote nt ial of the N-GM RES optimization framework lies in the fact that it can make use of problem-dependent nonlinear pr e conditioners that are more p ow erful than steepest descent (or, equiv alen tly , N-GMRES can b e used as a simple wrapp er around any other iterative optimiza- tion process to seek acceleration of that pro c ess), and this p ot en tial is illustrated wi t h a fur t her application example. Key words. nonlinear optimization, GM RES, steepest descent AMS sub ject classificatio ns . 65K10 Optimization, 65F08 Preconditioners for iterativ e meth- ods, 65F10 Iterative m etho ds 1. In trodu ction. In recent w ork on canonical tensor appr oximation [3], we hav e prop osed a n a lgorithm that a c celerates conv ergence of the a lternating least squares (ALS) optimization metho d for the canonical tensor appr oximation pro blem consid- ered there. The alg orithm pro ceeds by linearly recombining previous iterates in a wa y tha t a pproximately minimizes the residual (the gra dient of the ob jective func- tion), using a no nlinear generalized minimal res idua l (GMRES) approa ch. The re- combination step is followed by a line sea rch step for globa lization, and the resulting three-step non-linear GMRES (N-GMRE S) optimization alg orithm is shown in [3] to significantly sp ee d up the convergence of ALS for the canonica l tensor approximation problem co nsidered. As ex plained in [3] (which w e refer to as Paper I in what follows), for the tensor approximation problem co nsidered ther e , ALS can a lso b e in terpreted as a precondi- tioner for the N-GMRES optimization algor ithm. The question then ar ises what other t yp es of preconditioners can b e conside r ed for the N-GMRES optimization algorithm prop osed in Pap er I, and whether there are universal preconditioning approa ches that can make the N-GMRES optimization algor ithm applica ble to nonlinea r optimiza tion problems more generally . In the present paper , we prop ose such a universal precon- ditioning approach for the N-GMRES optimization algorithm pro p osed in Pap er I, namely , steep est descent preco nditioning. W e explain ho w updates in the steep est descent direction can indee d naturally b e used as a preconditioning pr o cess for the ∗ Departmen t of Applied Mathematics, Universit y of W aterloo, W aterloo, Ontario, Canada § hdesterc k@uw aterloo.ca 1 2 H. De Sterc k N-GMRES optimization a lg orithm. In fact, we show that steep est descent precon- ditioning can b e seen as the most basic preconditioning pro cess for the N-GMRES optmization metho d, in the s ense that applying N-GMRES to a quadr a tic ob jective function with symmetric pos itive definite (SPD) o pe rator, cor resp onds mathemati- cally to apply ing standar d non-pr econditioned GMRES for linear systems to the linear system corres p o nding to the quadr atic ob jective function. W e pr op ose tw o v a riants of steep est des cent pr econditioning, one with line s e arch and o ne with a predefined small step. W e give a simple globa l conv er gence pro of for the N-GMRES optimization al- gorithm with our first pr o p osed v a riant of steep est descent pre c o nditioning (with line search), under standard mild conditions on the ob jective function a nd for line searches satisfying the W olfe conditions. The second preco nditioning approach, without line search, is of in terest becaus e it is more efficien t in n umerical tests, but there is no conv er gence guara ntee. Numerical results are employ ed for a v a riety of tes t problems demonstrating that N-GMRES optimization can significantly sp eed up stand-alo ne steep est descent optimiza tion. W e also compar e steep est-descent preconditioned N- GMRES with a standar d nonlinear co njugate gr adient (N-CG) metho d fo r all o ur test problems, and w ith a s ta ndard limited-memor y Broyden-Fletcher-Goldfarb-Shanno (L-BFGS) method. W e co nsider the following uncons tr ained nonlinear optimization pro ble m with a s- so ciated fir st-order optimalit y equations : optimiza tion pr oblem I : find u ∗ that minimizes f ( u ) . (1.1) first-order optimality equa tions I: ∇ f ( u ) = g ( u ) = 0 . (1.2) The N-GMRES optimization algor ithm prop osed in Pap er I for a c celerating ALS for canonical tensor approximation co nsists o f three steps tha t can be s ummarized as follows. (Fig. 1 .1 gives a schematic r e presentation of the algor ithm, and it is describ ed in pseudo-co de in Algorithm 1.) In the fir st s tep, a preliminar y new itera te ¯ u i +1 is generated fr om the la st iter a te u i using a o ne-step iter ative up date pr o cess M ( . ), which can be in terpreted as a preco nditio ning pro c e ss (see Paper I a nd b elow). ALS preconditioning is used for M ( . ) in Paper I. In the sec o nd step, an acceler ated iterate ˆ u i +1 is obtained by linearly recombining pre v ious iterates in a window of s ize w , ( u i − w +1 , . . . , u i ), using a nonlinear GMRES approa ch. (The details of this step will be recalled in Section 2 b elow.) In the third step, a line search is p er formed that minimizes ob jective function f ( u ) on a half line starting at preliminary iterate ¯ u i +1 , which was genera ted in Step I, and connecting it w ith ac c elerated iterate ˆ u i +1 , which was generated in Step I I, to obtain the ne w iterate u i +1 . The s econd step in the N-GMRES o ptimization algorithm (Step I I in Algo rithm 1) uses the no nlinea r extension o f GMRES for s olving no nlinea r systems o f equations that was prop os e d by W ashio and Oosterlee in [18] in the c ontext of nonlinear pa rtial differential equation (PDE) systems (see also [12] and [18] for further a pplica tions to P DE systems). It is a nonlinear extension of the celebr ated GMRES metho d for iteratively solving sys tems of linea r equa tions [15, 14]. W a shio a nd O osterlee’s no n- linear extensio n is related to Flexible GMRE S as describ ed in [13], and is also rela ted to the reduced rank extrap olatio n method [16]. An early de s cription o f this type of nonlinear iterate acceler ation ideas for solving nonlinear equa tion systems app ear s in Steepest Descen t Preconditioning for N-GMRES Optimization 3 so-called Anderso n mixing, se e , e.g ., [5 , 1 7]. More recent applications o f these ideas to nonlinear equation systems a nd fixed- po int problems are disc us sed in [5, 17]. I n Pa- per I we formulated a nonlinear GMRE S optimization a lg orithm for canonical tensor decomp osition tha t uses this type of accele r ation as one of its steps, combined with an ALS pre c onditioning s tep and a line sear ch for globalization. T he type o f no nlinear iterate acceleration in Step II o f Algorithm 1 has thus been co ns idered several times befo re in the context of solving nonlinear systems of equations, but we b elieve that its co mbination with a line search to obtain a general preconditioned no nlinear o pti- mization metho d as in Algorithm 1 (see Pap er I) is new in the o ptimization context. In the present pap er we show how this N-GMRES optimization approach can be ap- plied to a br oad class of sufficiently smo oth nonlinea r optimization pro blems by using steep est descent preconditioning. W e establish theoretica l conv ergence prop erties fo r this a pproach a nd demonstra te its effectiv eness in n umerical tes ts . Algorithm 1 : N-GMRES optimization a lgorithm (windo w size w ) Input: w initial itera tes u 0 , . . . , u w − 1 . i = w − 1 rep eat Step I: (gener ate pr eliminary iter ate by one-step up date pr o c ess M ( . ) ) ¯ u i +1 = M ( u i ) Step I I : (gener ate ac c eler ate d iter ate by nonline ar GMRES st ep) ˆ u i +1 =gmres( u i − w +1 , . . . , u i ; ¯ u i +1 ) Step I I I : (gener ate new iter ate by line se ar ch pr o c ess) if ˆ u i +1 − ¯ u i +1 is a desc ent dir e ction u i +1 =linesearch( ¯ u i +1 + β ( ˆ u i +1 − ¯ u i +1 )) else u i +1 = ¯ u i +1 end i = i + 1 un til c onver genc e criterion satisfie d (Note that the w initial iterates required in Algorithm 1 can na tur ally b e gener ated by applying the alg orithm with a window size tha t gradua lly increas es from one up to w , star ting from a single initial guess. Also, as in [3], we p erform a r estart and reset the window size bac k to 1 whenever ˆ u i +1 − ¯ u i +1 is not a descent direc tion.) The rest of this pa p er is structured as follows. In Section 2 we prop ose tw o t yp es of steep est descent preconditioners for N-GMRE S Optimization Algorithm 1. W e briefly recall the details of the no nlinear GMRES optimization step, give a motiv ation and in- terpretation for s tee p es t descent preconditioning that relate it to non- preconditioned GMRES for SPD linear s ystems, and giv e a simple proo f for global con vergence of the N-GMRES optimization algorithm using s teep e st descent preconditioning with line sear ch. In Section 3 w e pres ent extensive numerical results for N-GMRES opti- mization with the tw o prop osed s teep est desc e nt preconditioner s, applied to a v a riety of nonlinear optimization problems, a nd compar e with stand-alo ne steepest descent, N-CG a nd L-BFGS. Finally , Section 4 concludes . 2. Steep est Descent Preconditioning for N-GM RES Opti mization. In this section, we fir st prop ose t wo v aria nts of steep est descent preco nditioning. W e 4 H. De Sterc k 3 0 u 1 u 2 u 3 d 0 d 1 d 2 u 3 u u Fig. 1.1 . Schematic r epr esentation of one i t er ation of the N-GMRES optimization algorithm (fr om [3]). Given pr evious it er ations u 0 , u 1 and u 2 , new i t er ate u 3 is ge ner ate d as fol lows. In Ste p I, pr e liminary iter ate ¯ u 3 is ge ner ate d by the one-step up date pr o c ess M ( . ) : ¯ u 3 = M ( u 2 ) . In Step II, the nonline ar GMRES step, ac c eler ate d iter ate ˆ u 3 is obtaine d by determining the c o efficients α j in ˆ u 3 = ¯ u 3 + α 0 d 0 + α 1 d 1 + α 2 d 2 such that t he gr adient of the objective function in ˆ u 3 is appr oximately minimize d. In Step III, the new iter ate, u 3 , is final ly gener ate d b y a line sea r ch that minimize s the obje ctiv e funct ion f ( ¯ u 3 + β ( ˆ u 3 − ¯ u 3 )) . then br iefly recall the details o f the no nlinear GMRES recombination step (Step I I in Algorithm 1), and relate N-GMRES optimization to standard non- preconditioned GMRES fo r linear systems in the ca se of a simple quadratic optimization proble m with SPD o p erator. Finally , w e g ive a s imple g lobal co nv er gence pro of for the N-GMRES optimization a lg orithm using steep est descen t preco nditioning with line se arch. 2.1. Steep est Descen t Preconditioning Pro cess. W e pr o p ose a general steep- est des cent pre conditioning pr o cess for Step I of N-GMRES Optimization Algor ithm 1 with the following tw o v ar iants: Steepest D escent Preconditioning Process: ¯ u i +1 = u i − β ∇ f ( u i ) k∇ f ( u i ) k with option A : β = β sdls , (2.1) option B : β = β sd = min( δ , k∇ f ( u i ) k ) . (2.2) F or Option A, β sdls is the step length o btained by a line s earch pr o cedure. F or definiteness, we consider a line sea r ch pro cedure that satisfies the W olfe conditions (see below). W e r efer to the steep est descent preconditioning pro cess with line search (2.1 ) as the sd ls preconditioner . F or Option B, we pre define the step β sd as the minimum of a small p ositive co nstant δ , a nd the norm of the gradie nt. In the n umerical results to b e pr esented further on in the pap er, we use δ = 10 − 4 , except wher e no ted. W e refer to the stee p es t descent preconditioning pro cess with pr edefined step β sd (2.2) a s the sd preconditioner. Thes e t wo Options ar e quite different, and some discus s ion is in o rder. Preconditioning pro ces s A can be employ ed as a sta nd-alone optimization metho d (it can co nv er ge by itself ), and N-GMRE S ca n b e consider e d as a wra pp er that a c cel- erates this stand-alone pro cess. W e will show b elow that N-GMRES with precondi- tioning pro ce s s A has strong conv ergence prop erties, but it may b e exp ensive b eca use the line search may requir e a significant num ber of function and g radient ( f /g ) ev al- uations. How ever, the s ituation is very different for pre conditioning pr o cess B. Here, no additiona l f /g ev aluations are required, but conv er gence app ears ques tionable. It Steepest Descen t Preconditioning for N-GMRES Optimization 5 is c lear that preconditioning pro cess B cannot b e used a s a stand-alone optimizatio n algorithm; in most case s it would not co nv erg e. It ca n, how ever, still b e used a s a pre- conditioning pro ces s for N-GMRES. As is well-kno wn and will be further illustrated below, prec o nditioners used by GMRE S for linear systems do not nee d to be conv er- gent b y themselves, and this sugg ests that it may be interesting to cons ider this for N-GMRES optimization a s well. As will b e motiv ated further below, the role o f the N-GMRES preco nditio ning pro ce s s is to provide new ‘useful’ directions for the nonlin- ear genera lization of the Krylov spac e, and the itera tion can b e driven to co nv er g ence by the N-GMRES minimization, even if the preconditioner is not convergen t b y itself. How ever, fo r this to happ en in the three - step N-GMRES optimization a lgorithm with preconditioning pro cess B, it is r e q uired that ¯ u i +1 even tually approa ches u i and the step leng th β sd approaches 0. F or this reaso n, we select β sd = k∇ f ( u i ) k as so on as k∇ f ( u i ) k ≤ δ . The initial step leng th β sd is c hosen to b e not larger than a small constant bec a use the linear case (see b elow) suggests that a small step is sufficien t to provide a new direction for the Krylov space , a nd beca use the minimization of the residual is bas e d o n a linear ization arg ument (see a lso b elow), and small s teps tend to lead to small linearization errors. 2.2. N-GMRES Recombination Step. Befor e relating steep es t- de s cent pre- conditioned N-GMRES to non-preconditioned GMRES for linear s ystems, we fir st re- call fro m [3 ] some details o f the N-GMRES recombination step, Step II in Algorithm 1. In this step, we find an a ccelerated iterate ˆ u i +1 that is obtained by reco mbining previous itera tes as follows: ˆ u i +1 = ¯ u i +1 + i X j =0 α j ( ¯ u i +1 − u j ) . (2.3) The unknown co e fficie nts α j are determined by the N-GMRES alg orithm in such a wa y that the tw o -norm of the gradie nt of the ob jectiv e function ev a luated at the accelerated iterate is small. In general, g ( . ) is a nonlinear function of the α j , and linearization is used to a llow for inexp ens ive co mputation of coefficie nts α j that may approximately minimize k g ( ˆ u i +1 ) k 2 . Using the following approximations g ( ˆ u i +1 ) ≈ g ( ¯ u i +1 ) + i X j =0 ∂ g ∂ u ¯ u i +1 α j ( ¯ u i +1 − u j ) ≈ g ( ¯ u i +1 ) + i X j =0 α j ( g ( ¯ u i +1 ) − g ( u j )) (2.4) one ar rives a t minimization problem find co e fficients ( α 0 , . . . , α i ) that minimize k g ( ¯ u i +1 ) + i X j =0 α j ( g ( ¯ u i +1 ) − g ( u j )) k 2 . (2.5) This is a standard leas t-squares pr oblem that can b e s olved, for example, by using the normal equations, a s explained in [1 8, 3]. (In this pap er , we solve the least- s quares problem a s describ ed in [3].) In a windowed implementation with window size w , the memory cost incurred by N-GMRES acceleratio n is the stora ge of w previous approximations and residua ls. 6 H. De Sterc k The dominant parts of the CPU cost for ea ch accele r ation step ar e the cost o f building and solving the least-squar es system (which ca n b e done in approximately 2 n w flops if the normal equations are used and some prev ious inner pro ducts ar e stored, see [18]), and nw flops to compute the acceler ated iterate. F or problems with exp ensive ob jective functions, this cos t is o ften negligible compared to the cost o f the f /g ev aluations in the line sear ches [3]. 2.3. Motiv ation and Interpretation for Steep est Descent Precondition- ing. Consider a standard quadratic minimization problem with ob jective function f ( u ) = 1 2 u T A u − b T u , (2.6) where A is SPD. It is well-known that its unique minimizer sa tis fie s A u = b . Now consider applying the N-GMRES o ptimization algor ithm with steep est descen t pr econ- ditioner to the quadr atic minimization pr oblem. The gradient of f at appr oximation u i is g iven b y ∇ f ( u i ) = A u i − b = − r i with r i = b − A u i , (2.7) where r i is defined as the residual of the linear system A u = b in u i . N-GMRES steep est descen t preconditio ner (2.1)-(2.2) then reduces to the form ¯ u i +1 = u i + β r i k r i k , (2.8) and it can easily be s hown that this cor resp onds to the stationar y iterative metho d that g enerates the Krylov space in non-pre conditioned linear GMRES applied to A u = b . W e now briefly show this be cause it pr ovides further insight (recalling parts o f the discussion in [18, 3]). W e first explain ho w pr econditioned GMRES for A u = b works. Consider so- called statio nary itera tive metho ds for A u = b of the fo llowing form: u i +1 = u i + M − 1 r i . (2.9) Here, matrix M is a n approximation o f A tha t has an eas ily co mputable inv erse, i.e., M − 1 ≈ A − 1 . F o r example, M can b e chosen to corre sp ond to Ga uss-Seidel or Jacobi iteration, o r to a multigrid cycle [18]. Consider a s equence o f iterates u 0 , . . . , u i generated by update for mula (2.9), starting fro m some initial guess u 0 . Note that the residuals o f these iterates a re related as r i = b − A u i = ( I − AM − 1 ) r i − 1 = ( I − AM − 1 ) i r 0 . This motiv ates the definition o f the following vector spaces : V 1 ,i +1 = span { r 0 , . . . , r i } , V 2 ,i +1 = span { r 0 , AM − 1 r 0 , ( AM − 1 ) 2 r 0 } , . . . , ( AM − 1 ) i r 0 } = K i +1 ( AM − 1 , r 0 ) , V 3 ,i +1 = span { M ( u i +1 − u 0 ) , M ( u i +1 − u 1 ) , . . . , M ( u i +1 − u i ) } . V ector space V 2 ,i +1 is the so-called Krylov space K i +1 ( AM − 1 , r 0 ) o f order i + 1, generated by matr ix AM − 1 and vector r 0 . It is ea sy to show that these vector spa ces are e q ual (see, e.g., [1 8, 3]). Expressio n (2.9) shows that M ( u i +1 − u i ) ∈ K i +1 ( AM − 1 , r 0 ). The GMRES pro cedure can b e seen as a w ay to accelerate sta tionary iter a tive method (2.9), by Steepest Descen t Preconditioning for N-GMRES Optimization 7 recombining itera tes (or, equiv alently , by reusing res idua ls). In par ticular, w e seek a better approximation ˆ u i +1 , with M ( ˆ u i +1 − u i ) in the Krylov space K i +1 ( AM − 1 , r 0 ), such that ˆ r i +1 = b − A ˆ u i +1 has minimal t w o-norm. In other words, we seek o ptimal co efficients β j in M ( ˆ u i +1 − u i ) = i X j =0 β j M ( u i +1 − u j ) , and it is ea sy to show that this corresp onds to seeking optimal co efficients α j in ˆ u i +1 = u i +1 + i X j =0 α j ( u i +1 − u j ) , (2.10) such that k ˆ r i +1 k 2 is minimized (which leads to a small least-squar es pro blem equiv- alent to (2.5)). Note that V 1 ,i +1 and V 2 ,i +1 do not easily gener alize to the nonlinear case, but the ima ge of V 1 ,i +1 under M − 1 , span { u i +1 − u 0 , u i +1 − u 1 , . . . , u i +1 − u i } , do es ge ne r alize natura lly a nd is taken a s the ‘g eneralized Kr ylov space ’ that is us e d to seek the appr oximation in the nonlinea r ca se. Up to this po int, we have presented GMRES as a wa y to acceler ate one-step stationary iter ative metho d (2.9). A more customary way , howev er , to s ee GM- RES is in terms of preconditioning. The approach describ ed ab ove re duce s to ‘non- preconditioned’ GMRES when one sets M = I . A pplying no n-preconditioned GM- RES to the preconditio ned linear equation system AM − 1 ( M u ) = b a lso r esults in the expressions fo r preconditioned GMRES der ived ab ove. In this viewp oint, the matrix M − 1 is ca lled the preco nditioner matrix, b eca use its role is viewed as to pre- condition the sp ectrum o f the linear system op erator such tha t the (non-preco nditioned) GM- RES metho d applied to ( AM − 1 ) y = b b ecomes more effective. It is also customary to say that the s ta tionary iterative pro cess pr econditions GMRES (for exa mple, Gauss- Seidel or J acobi can pr econdition GMRES). W e can summarize that the r ole o f the stationary iterative metho d is to g e nerate preco nditioned r esiduals that build the Krylov space. In the presentation above, all iter ates u j for j = 0 , . . . , i (for instance, in the right-hand side of (2.10)) refer to the unaccelera ted iter ates generated by stationary iterative metho d (2.9). How ever, the formulas rema in v alid when accele r ated iterates are us e d instea d; this do es change the v alues of the co efficients α j , but lea ds to the same accelerated itera tes [1 8]. This is so b ecause the Krylov spa ces ge ne r ated in the t wo ca ses are iden tical due to linea rity , and co nsequently GMRES selects the same optimal improved iter ate. This brings us to the p o int where we c a n compare steepest-des cent preco nditio ned N-GMRES a pplied to quadra tic o b jective function (2.6) with SP D op erator A , to non-preconditione d linea r GMRES applied to A u = b . Assume w e hav e w previo us iterates u i and residuals r i . Stationary iterative pro cess (2.9) without preconditioner ( M = I ) w o uld a dd a v ec to r to the Krylov space which has the same direc tion as the vector that w ould b e a dded to it by the steepest descent preconditioning pro ce ss (2.8). This means that the acceler a ted iterate ˆ u i +1 pro duced by N-GMRES with steep est descen t preconditioner applied to quadratic ob jective function (2.6) with SPD op erator A is the same as the accelerated iterate ˆ u i +1 pro duced by linear GMRES with identit y preconditioner applied to A u = b . This motiv ates o ur prop osa l to use 8 H. De Sterc k steep est descent preconditioning a s the natural a nd most ba sic preconditioning pr o cess for the N-GMRES optimization alg o rithm applied to ge ner al nonlinear optimization problems. Note tha t, in the case of linear sys tems, the efficiency o f GMRES as an accelera tion techn ique for statio nary iter ative methods can b e understo o d in terms of how optimal po lynomials can damp mo des that ar e slow to converge [18, 14]. In the case of N-GMRES for nonlinear optimization, if the a pproximation is close to a stationary po int a nd the nonlinear residual v ector function g ( . ) can be appr oximated well b y linearization, then it c an b e exp ected tha t the use of the subspace span { u i +1 − u 0 , u i +1 − u 1 , . . . , u i +1 − u i } for acceler ation may give efficiency similar to the linea r case [18]. Note finally that the ab ove a ls o explains why a small step is a llowed in the sd preconditioner of (2.2) (basically , in the linear cas e , the size o f the co efficient do es not matter for the Krylov space), and the lineariz a tion arg umen t of (2.4 ) indicates that a small step may be b eneficial. 2.4. Con vergence Theory for N-GMRES Optimi zation with Steep est Descen t Preconditioni ng. W e now formulate and pr ov e a conv er gence theorem for N-GMRES O ptimization Algor ithm 1 using s teep e s t descent preco nditioning with line search (2.1). W e ass ume that all line sear ches provide step lengths that satisfy the W olfe co nditio ns [10]: sufficient decrease condition: f ( u i + β i p i ) ≤ f ( u i ) + c 1 β i ∇ f ( u i ) T p i , (2.11 ) cur v a ture condition : ∇ f ( u i + β i p i ) T p i ≥ c 2 ∇ f ( u i ) T p i , (2.12) with 0 < c 1 < c 2 < 1 . Condition (2 .1 1) e nsures that large steps a r e taken only if they lead to a pr op ortiona lly large decr ease in f . Condition (2.12) ensures that a s tep is taken tha t is larg e enough to sufficie ntly increas e the gradient of f in the line search direction (make it less ne g ative). Global conv ergence (in the sense of c onv er gence to a stationar y point from any initial g uess) can then be pr ov e d easily using s tandard approaches [6 , 1 0]. Theorem 2 . 1 ( Global conv ergence of N-GMRES optimization algor ithm with steep est des cent line se a rch preco nditio ning ). Consider N-GMRES Optimization Algo rithm 1 with ste ep est desc ent line se ar ch pr e c onditioning (2.1) for Optimiza- tion Pr oblem I, and assume that al l line s e ar ch solutions satisfy the Wolfe c ondi- tions, ( 2.11) and (2.12). Assu me that obje ctive function f is b oun de d b elow in R n and that f is c ontinuously differ entiable in an op en set N c ontaining the level set L = { u : f ( u ) ≤ f ( u 0 ) } , wher e u 0 is the starting p oint of the iter ation. Assume also that the gr adient ∇ f is Lipschitz c ontinuous on N , that is, ther e ex ists a c onstant L such that k∇ f ( u ) − ∇ f ( ˆ u ) k ≤ L k u − ˆ u k for al l u , ˆ u ∈ N . Then the se quenc e of N- GMRES iter ates { u 0 , u 1 , . . . } is c onver gent to a fi xe d p oint of Optimization Pr oblem I in the sense that lim i →∞ k∇ f ( u i ) k = 0 . (2.13) Pr o of . Cons ider the sequence { v 0 , v 1 , . . . } formed by the itera tes u 0 , ¯ u 1 , u 1 , ¯ u 2 , u 2 , . . . of Algo r ithm I, but with ¯ u i remov ed if ˆ u i − ¯ u i is not a descent direction in Steepest Descen t Preconditioning for N-GMRES Optimization 9 Step II I of the algo rithm. Then all iterates v i are o f the form v i = v i − 1 + β i − 1 p i − 1 , with p i − 1 a descent direc tio n and β i − 1 such that the W o lfe co nditions are satisfied. According to Theorem 3.2 of [1 0] (p. 38, Zoutendijk’s Theo rem), we hav e that ∞ X i =0 cos 2 θ i k∇ f ( v i ) k 2 < ∞ , (2.14) with cos θ i = −∇ f ( v i ) T p i k∇ f ( v i ) k k p i k , (2.15) which implies that lim i →∞ cos 2 θ i k∇ f ( v i ) k 2 = 0 . (2.16) Consider the subseq ue nc e {k∇ f ( u i ) k} of {k∇ f ( v i ) k} . Since all the u i are follow e d by a steepes t descent step in the alg orithm, the θ i corres p o nding to all the el- ement s of {k∇ f ( u i ) k} satisfy co s θ i = 1. Therefore, it follows from (2 .16) that lim i →∞ k∇ f ( u i ) k = 0 , which concludes the pro o f. Note tha t the no tion of conv ergence (2.13) we prove in Theorem 2 .1 for N-GMRES optimization with steep est descent line sear ch preconditioning is stronger than the t yp e of conv e r gence that ca n be prov ed fo r so me N-CG metho ds [6, 10], namely , lim i →∞ inf k∇ f ( u i ) k = 0 . (2.17) Also, it app ears that, in the pro o f of Theor em 2.1, we cannot guar antee that s equence {k∇ f ( ¯ u i ) k} converges to 0. W e know that sequence { f ( v i ) } conv erges to a v alue f ∗ since it is nonincreasing and bounded below, but it appear s that the pr op erties of the line searches do not guar antee that the sequence {k∇ f ( v i ) k} conv er ges to 0. They do gua rantee that the subse q uence {k∇ f ( u i ) k} conv e r ges to 0, but it cannot b e ruled out that, as the f ( u i ) approach f ∗ and the k ∇ f ( u i ) k approa ch 0 , large steps with very small decrease in f may still b e ma de from each u i to the next ¯ u i +1 (large steps with small decrease a re allow ed in this c a se since the u i approach a stationary po int) , while, a t the same time, la r ge steps with very small decrease in f may be made from the ¯ u i +1 to the next u i +1 (large steps with s mall decrease are allow ed in this case if the se a rch direction p from ¯ u i +1 is such that ∇ f ( ¯ u i +1 ) T p is very clo s e to 0). These la rge s teps may in principle preclude {k∇ f ( ¯ u i ) k} from converging to 0 (but we do not obs e rve such pa thological cases in our numerical tests). Nevertheless, w e are able to prov e the strong co nv er g ence result (2.13) for the itera tes u i of N-GMRES optimization with steep est des cent line search preconditioning: seq ue nc e {k∇ f ( u i ) k} conv er ges to 0. 3. Numerical Results. W e now present extensive numerical results for the N- GMRES optimization alg orithm with steepes t descent pr econditioners (2.1) and (2.2), compared with stand-alo ne steep est descent optimizatio n, N-CG and L-BFGS. In all tests, we utilize the Mo r´ e-Thuen te line sear ch method [8] and the N-CG a nd L-BFGS optimization metho ds as implement ed in the Poblano to olb ox for Matlab [4]. F or all e x p e riments, the Mor´ e-Thuen te line sear ch parameters used were as follows: function v alue toler ance c 1 = 1 0 − 4 for (2.11), gradient nor m tolerance c 2 = 1 0 − 2 for (2.12), s ta rting sear ch s tep length β = 1, and a maximum of 2 0 f /g ev alua tions are 10 H. De Sterc k used. Thes e v alues were a lso used fo r the N-CG and L-B FGS comparison runs . W e use the N-CG v ar iant with Polak-Ribi` ere up date formula, and the tw o -lo op r ecursion version of L-BFGS [10]. W e no rmally choose the N-GMRES window size w equal to 20, which is co nfirmed to b e a go o d choice in numerical tests describ ed below. The L-BFGS window size is chosen equal to 5 (we found that larger window sizes tend to harm L- BFGS p erfor mance for the tests we considered). All initial g uesses are determined uniformly randomly with comp onents in the in terv al [0 , 1], and when w e compare different metho ds they are given the same random initial guess. All n umerical tests were run on a la ptop with a dua l-core 2.53 GHz Intel Core i5 pro cesso r and 4GB of 1067 MHz DDR3 memory . Matlab version 7.11.0.584 (R2010b) 64-bit (maci64) was used for all tests. 3.1. T est Problem Des cription. W e first des crib e the seven test problems we consider. In what follows, all v ectors ar e chosen in R n , a nd a ll matrices in R n × n . Problem A. (Quadra tic objective function with spd diagonal ma trix.) f ( u ) = 1 2 ( u − u ∗ ) T D ( u − u ∗ ) + 1 , (3.1) with D = diag(1 , 2 , . . . , n ) . This problem has a uniq ue minimizer u ∗ in whic h f ∗ = f ( u ∗ ) = 1. W e choose u ∗ = (1 , . . . , 1). Note that g ( u ) = D ( u − u ∗ ) , and the condition num ber of D is given by κ = n . It is w ell-known that for pro blems of this t ype large condition num b er s tend to lead to slo w convergence of the s teepe st descen t metho d due to a zig-zag effect. Problem A can b e used to s how how methods like N-CG and N-GMRES improv e ov er steep est descen t and mitigate this zig-zag effect. Problem B. (Problem A with p araboloid coo rdina te transforma tion. ) f ( u ) = 1 2 y ( u − u ∗ ) T D y ( u − u ∗ ) + 1 , (3.2) with D = diag(1 , 2 , . . . , n ) and y ( x ) given by y 1 ( x ) = x 1 and y i ( x ) = x i − 10 x 2 1 ( i = 2 , . . . , n ) . This mo dification of P roblem A still has a unique minimizer u ∗ in which f ∗ = f ( u ∗ ) = 1 . W e choos e u ∗ = (1 , . . . , 1). The gradient of f ( u ) is given b y g ( u ) = D y ( u − u ∗ ) − 20 ( u 1 − u ∗ 1 ) ( P n j =2 ( D y ( u − u ∗ )) j ) [1 , 0 , . . . , 0] T . This mo dification of P roblem A increases nonlinearity (the o b jective function is now quartic in u ) and changes the level surfaces from ellipsoids into par ab olically skew ed ellipsoids . As such, the pro blem is more difficult for nonlinear optimization metho ds. F or n = 2, the level curves a re mo dified fro m elliptic to ‘ba nana-sha pe d’. In fact, the ob jective function of Pro blem B is a m ulti-dimensional g eneraliza tion of Rosenbro ck’s ‘banana’ function. Problem C. (Problem B with a random non-diagonal ma trix with condi- tion n u m ber κ = n .) f ( u ) = 1 2 y ( u − u ∗ ) T T y ( u − u ∗ ) + 1 , (3.3) with T = Q diag(1 , 2 , . . . , n ) Q T , where Q is a random ortho gonal matrix and y ( x ) is given by y 1 ( x ) = x 1 and y i ( x ) = x i − 10 x 2 1 ( i = 2 , . . . , n ) . Steepest Descen t Preconditioning for N-GMRES Optimization 11 This mo dification of Pro blem B still ha s a unique minimizer u ∗ in which f ∗ = f ( u ∗ ) = 1 . W e choos e u ∗ = (1 , . . . , 1). The gradient of f ( u ) is given b y g ( u ) = T y ( u − u ∗ ) − 20 ( u 1 − u ∗ 1 ) ( P n j =2 ( T y ( u − u ∗ )) j ) [1 , 0 , . . . , 0] T . The r andom matrix Q is the Q factor obtained fro m a QR-factorizatio n o f a r andom ma trix with e le ment s uniformly drawn from the int erv al [0 , 1 ]. This mo dificatio n of Pro blem B int ro duces nonlinear ‘mixing’ o f the co ordinates (cros s-terms) a nd further increases the difficulty of the problem. 0 50 100 150 200 10 −15 10 −10 10 −5 10 0 iterations (a) convergence to f* N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 50 100 150 200 10 −15 10 −10 10 −5 10 0 iterations (b) convergence of the gradient norm N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 100 200 300 400 500 10 −15 10 −10 10 −5 10 0 f and g evaluations (c) convergence to f* N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 200 400 600 800 1000 10 −15 10 −10 10 −5 10 0 f and g evaluations (d) convergence of the gradient norm N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS Fig. 3.1 . Pr oblem A ( n = 100 ). Conver genc e histories of the 10-lo garithms of | f ( u i ) − f ∗ | and k g ( u i ) k as a function of ite ra tions and f /g evaluations. N-GMRES-sd ls is the N-GM R ES optimization algorithm using ste ep est descent pr e co nditioning with line se ar ch, N-GMRES-sd is the N-GMRES optimization algorithm using steep est desc ent pr e c onditioning with pr e define d step, N- CG is the Polak-Ribi ` er e nonline ar c onjugate gr adient metho d, L-BF GS is t he limite d-memory Br oyden- Fletcher-Goldfarb-Shanno metho d, and sd ls is the stand-al one ste ep est desc ent metho d with line se ar c h. Problem D. (Extended R osenbrock function, pr oblem (21) from [9].) f ( u ) = 1 2 n X j =1 t 2 j ( u ) , with n even and t j = 1 0 ( u j +1 − u 2 j ) ( j o dd), t j = 1 − u j − 1 ( j even). Note that g ( u ) ca n easily b e computed using g k ( u ) = P n j =1 t j ∂ t j /∂ u k ( k = 1 , . . . , n ). 12 H. De Sterc k 0 200 400 600 800 1000 10 −15 10 −10 10 −5 10 0 (a) convergence to f*, N−GMRES with sd preconditioner f and g evaluations 1 2 3 5 10 15 20 30 40 0 500 1000 1500 10 −15 10 −10 10 −5 10 0 (b) gradient norm convergence, N−GMRES with sd preconditioner f and g evaluations 1 2 3 5 10 15 20 30 40 0 200 400 600 800 1000 10 −15 10 −10 10 −5 10 0 (c) convergence to f*, N−GMRES with sdls preconditioner f and g evaluations 1 2 3 5 10 15 20 30 40 0 500 1000 1500 10 −15 10 −10 10 −5 10 0 (d) gradient norm convergence, N−GMRES with sdls preconditioner f and g evaluations 1 2 3 5 10 15 20 30 40 Fig. 3.2 . Pr oblem A ( n = 100 ). Effe ct of varying window size w on | f ( u i ) − f ∗ | and k g ( u i ) k c onver genc e for N-GMRES-sd ls and N-GMRES-sd optimization as a function of f /g evaluations. Window size w = 20 emer ges as a suitable choic e, le ading to r apid c onver ge nc e. These r e sults give some gener al indic ation that, if sufficient memory is available, w = 20 may b e a go o d choic e . However, if memory is sc ar ce, w = 3 alr e ady pr ovides go o d r esults, esp e c ial ly for N- GMRES-sd. Problem E. (Br own almost-linear function, problem (27) from [9] .) f ( u ) = 1 2 n X j =1 t 2 j ( u ) , with t j = u j + ( n X i =1 u i ) − ( n + 1) ( j < n ), t n = ( n Y i =1 u i ) − 1 . Problem F. (Trigonometric function, problem (26) from [9].) f ( u ) = 1 2 n X j =1 t 2 j ( u ) , with t j = n − ( n X i =1 cos u i ) − j (1 − co s u j ) − sin u j . Steepest Descen t Preconditioning for N-GMRES Optimization 13 Problem G. (Penal ty function I, problem (23 ) from [9].) f ( u ) = 1 2 (( n X j =1 t 2 j ( u )) + t 2 n +1 ( u )) , with t j = √ 10 − 5 ( u j − 1) ( j = 1 , . . . , n ), t n +1 = ( n X i =1 u 2 i ) − 0 . 25 . 0 50 100 150 200 250 300 10 −15 10 −10 10 −5 10 0 iterations (a) convergence to f* N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 50 100 150 200 250 300 10 −15 10 −10 10 −5 10 0 iterations (b) convergence of the gradient norm N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 200 400 600 800 1000 10 −15 10 −10 10 −5 10 0 f and g evaluations (c) convergence to f* N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 200 400 600 800 1000 10 −15 10 −10 10 −5 10 0 f and g evaluations (d) convergence of the gradient norm N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS Fig. 3.3 . Pr oblem B ( n = 100 ). Conver genc e co mp arison. 3.2. Numerical Results for Problem s A–C. W e first prese nt some conv er- gence plots for instances of Problems A–C. Fig. 3.1 shows results for a n instance of Problem A. W e see that stand-alo ne steep est desc ent with line sea rch (sdls) c o nv er ges slowly , which is expe c ted b ecause the co ndition num b er of matrix D is κ = 100 . Both N-GMRES optimization using stee p es t descent pr e conditioning with line sear ch (2.1) (N-GMRES-sdls) and N-GMRES optimization using steep est des cent preconditioning with predefined step (2.2) (N-GMRES-s d) are sig nificantly fas ter than stand-a lone sdls, in terms of itera tio ns a nd f /g ev aluatio ns, confirming that the N-GMRES accel- eration mec hanism is e ffective, and steep est descent is an effectiv e preconditioner for it. As could b e exp ected, the preconditioning line sear ches of N-GMRE S- sdls add s ig- nificantly to its f / g ev a luation co st, a nd N-GMRES-sd is mor e effective. N-GMRES accelerates steepe s t descen t up to a p o int where p e rformance b e comes comp etitive 14 H. De Sterc k 0 200 400 600 800 1000 10 −15 10 −10 10 −5 10 0 10 5 iterations (a) convergence to f* N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 200 400 600 800 1000 10 −15 10 −10 10 −5 10 0 10 5 iterations (b) convergence of the gradient norm N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 2000 4000 6000 8000 10000 10 −15 10 −10 10 −5 10 0 10 5 f and g evaluations (c) convergence to f* N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 2000 4000 6000 8000 10000 10 −15 10 −10 10 −5 10 0 10 5 f and g evaluations (d) convergence of the gradient norm N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS Fig. 3.4 . Pr oblem C ( n = 100 ). Conver genc e c omp arison. with N-CG and L-BFGS. It is imp ortant to note that convergence profiles like the ones presented in Fig. 3 .1 tend to show s ignificant v ariation dep ending on the random initial guess. The instances presented ar e a rbitrar y and not hand-picked with a sp e- cial purp os e in mind (they simply corresp o nd to se e d 0 in o ur matla b co de) a nd we show them b eca use they do provide interesting illustratio ns and show patterns that we hav e verified to b e quite gener al over many ra ndom instances. How ever, they can- not reliably b e used to conclude on detailed r elative p erforma nce of v arious metho ds. F or this purp o se, we provide tables below tha t compare p erfor mance averaged ov er a set o f rando m trials. Fig. 3 .2 shows the effect of v ar y ing the window size w on | f ( u i ) − f ∗ | and k g ( u i ) k conv er gence for N-GMRES-sdls and N-GMRES-sd optimization as a function of f /g ev aluations, for an instance of Pro blem A. Window size w = 20 emerges a s a suitable choice if sufficient memory is av ailable, leading to ra pid co nv er g ence. How e ver, win- dow sizes as small as w = 3 already pr ovide go o d results, esp e cially for N-GMRES-sd. This indicates that satisfactory results can be obtained with small windo ws, whic h may b e useful if memory is sca r ce. W e use window size w = 2 0 fo r a ll numerical results in this paper . Fig. 3.3 shows r e sults for an instance of Pr oblem B, whic h is a modificatio n of Problem A in tro ducing more nonlinearity , and Fig. 3.4 shows r esults for the ev en more difficult Problem C, with random nonlinear mixing o f the co ordinate dir ections. Both figures show that s tand-alone sdls is very slow, a nd confirm that N-GMRES- sdls and Steepest Descen t Preconditioning for N-GMRES Optimization 15 N-GMRES-sd significantly sp eed up steepes t descen t. F or Pro blem B , N-GMRES- sdls, N-GMRES-s d, N-CG a nd L-BFGS perfor m similarly , but for the more difficult Problem C N-GMRES-s dls, N-GMRE S- sd and L-B FGS p erfor m m uch better than N-CG. problem N-GMRES-sdls N-GMRES-sd N-CG L-BF GS A n = 100 242 111 84 73 A n = 200 406 171 127 104 B n =1 00 1200 395 198 170 B n =2 00 1338 752 606 321 C n =10 0 926(1) 443 131 56(7) 151 C n =20 0 1447 461 26861 (9 ) 204 T able 3.1 Aver age numb er of f /g evaluations ne ed e d to r e ach | f ( u i ) − f ∗ | < 10 − 6 for 10 instanc e s of Pr oblems A–C with r andom initial guess and with differ ent sizes. Numb ers in br ackets give the numb er of r andom t ri als (out of 10) that did not co nver ge to the r equir e d toler anc e within 1500 iter ations (if any). 0 50 100 150 200 10 −15 10 −10 10 −5 10 0 iterations (a) convergence to f* N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 50 100 150 200 10 −15 10 −10 10 −5 10 0 iterations (b) convergence of the gradient norm N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 200 400 600 800 1000 10 −15 10 −10 10 −5 10 0 f and g evaluations (c) convergence to f* N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS 0 200 400 600 800 1000 10 −15 10 −10 10 −5 10 0 f and g evaluations (d) convergence of the gradient norm N−GMRES−sdls N−GMRES−sd N−CG sdls L−BFGS Fig. 3.5 . Pr oblem D ( n = 1000 ). Conver genc e c omp arison. T able 3 .1 confirms the trends that w ere alrea dy present in the sp ecific instances of test pro blems A–C that were shown in Figures 3.1, 3 .3 and 3.4. The ta ble gives the average num ber of f /g ev aluations that were needed to r each | f ( u i ) − f ∗ | < 10 − 6 16 H. De Sterc k for 10 random instances of Problems A–C with differen t s iz e s. F or Pr oblems A and B, N-GMRES-sdls and N-GMRES-s d cons istently give f /g ev aluation counts that are of the s ame o rder of magnitude as N-CG. N-GMRES-sd comes close to b eing comp etitive with N-CG. L-BFGS is the fa stest metho d for all problems in T a ble 3.1. F or the mo re difficult Problem C, b oth N-GMRES-sdls, N-GMRES-sd a nd L-BFGS are significa nt ly faster than N-C G, whic h app ear s to have conv ergence difficulties for this pr oblem. N-GMRES-sd is clea rly faster than N-GMRES- s dls for all tests. 3.3. Numerical Results for Problems D–G. Figur e 3.5 g ives conv ergence plots for a single instance of Problem D. It confir ms the observ ations from Figures 3.1, 3.3 a nd 3 .4: for this standar d test problem from [9], stand-alo ne sdls again is very slow, a nd N-GMRE S-sdls and N-GMRES-sd significantly sp eed up steep es t descent conv er gence. N-GMRES-sdls and N-GMRES-sd hav e iteration and f / g coun ts that are of the same or der o f magnitude as N-CG a nd L-BFGS, and in par ticular N- GMRES-sd is comp etitive with N-CG and L-BFGS. Conv ergence plots for instances of P roblems E– G show similar b ehaviour a nd are not presented. problem N-GMRES-sdls N-GMRES-sd N-CG L-BFGS D n = 5 00 525 172 222 166 D n = 1 000 445 211 223 170 E n =1 00 294 259 243 358 E n =2 00 317 243 240 394 F n = 200 140 102(1) 102 92 F n = 500 206(1) 175(1) 135 118 G n = 100 10 08(2) 152 181 358 G n = 200 629(1) 181 137 240 T able 3.2 Aver age numb er of f /g evaluations ne ed e d to r e ach | f ( u i ) − f ∗ | < 10 − 6 for 10 instanc e s of Pr oblems D–G with r andom initial guess and with differ ent sizes. Nu mb ers in br ackets give the numb er of r andom trials (out of 10) t hat did not co nver ge to the r e quir e d toler anc e within 500 iter ations (if any). T able 3 .2 on f /g ev aluation coun ts for Problems E–G aga in confirms the trends that were observed befor e. N-GMRES-sdls and N-GMRES-sd give f / g ev aluation counts that are of the same o rder of ma gnitude as N-CG and L-B FGS, and N-GMRES- sd in particular is comp etitive with N-CG and L - BFGS. 4. Conclusion. In this pape r , w e ha ve prop ose d and studied steep e st descent preconditioning a s a universal prec o nditioning appr o ach for the N-GMRES optimiza- tion alg orithm that we recently intro duced in the con text of a ca nonical tensor ap- proximation problem and ALS preconditioning [3] (Pap e r I). W e have conside r ed tw o steep est descen t pre c onditioning pr o cess v ariants, one with a line search, and the other one with a predefined step length. The first v ariant is significant b e c ause w e sho wed that it leads to a globa lly conv er g ent optimization metho d, but the second v ar iant prov ed more efficient in numerical tests, with no apparent degra dation in c o nv er gence robustness. Numerical tests show ed that the t wo steep est-desc e nt preconditioned N-GMRES metho ds b o th sp eed up stand-alone steep est descent optimization very significantly , and are comp e titiv e with standa rd N-CG and L-BFGS metho ds, for a v ariety of test problems. These res ults serv e to theoretically a nd n umerica lly es - tablish steep est-descent pr e conditioned N-GMRES a s a g eneral optimization method for unconstrained nonlinear optimization, with p er fo rmance tha t a pp e ars promising Steepest Descen t Preconditioning for N-GMRES Optimization 17 0 0.5 1 1.5 2 2.5 3 x 10 4 10 −15 10 −10 10 −5 10 0 f and g evaluations (a) convergence to f* N−GMRES−sdls N−GMRES−sd sdls N−GMRES−ALS N−CG ALS L−BFGS 0 200 400 600 800 1000 1200 10 −15 10 −10 10 −5 10 0 f and g evaluations (b) convergence to f* N−GMRES−sdls N−GMRES−sd sdls N−GMRES−ALS N−CG ALS L−BFGS Fig. 4.1 . Convergenc e histories of the 10-lo garithm of | f ( u i ) − f ∗ | as a funct ion of f /g eval- uations, for t he ca nonic al tensor appr oximation pr oblem of Figur es 1.2 and 1.3 in [3]. Panel (a) shows that stand-alone sd ls is very slow for this pr oblem, and N - GMRES-sd ls and N-GMRES-sd signific antly sp e e d up ste ep est desc ent. However, for this difficult pr oblem, it is beneficial to use a mor e p owerful nonline ar pr ec onditioner. Using t he ALS pr e c onditioner in stand-alone fashion alr e ady pr ovides faster co nver ge nc e than N-GMRES-sd ls and N-GMRES-sd. The zo ome d view in Panel (b) shows that N-CG and L-BFGS ar e faster than stand-alone AL S when high ac cur acy is r e quir e d, but N-GMRES pr e c onditione d with the p owerful AL S pr e co nditioner is the fastest metho d by far, be ating N-CG and L-BF GS by a factor of 2 to 3. This il lustr ates that the r e al p ower of the N-GMRES optimization algorithm may lie in its ability to e mploy p owerful pr oblem-dep endent nonline ar pr ec onditioners (ALS in this c ase). 18 H. De Sterc k compared to established techniques. How ever, we w ould like to argue that the r eal po tential of the N-GMRES op- timization fra mework lies in the fact that it c a n use problem-dep endent nonlinear preconditioners that a re more p ow erful than steep est descent. Preconditioning of N- CG in the form of (linea r) v ar iable tr a nsformations is an ar ea of a ctive research [7]. How ever, it is in teresting to note that our N-GMRES o ptimization framework natu- rally allows for a mo re gener al type o f preco nditioning: a ny nonlinear optimization pro cess M ( . ) ca n po tent ially b e used a s a no nlinear preconditioner in the fra me- work, or, equiv alent ly , N- GMRE S can b e used as a simple wrapp er aro und any other iterative optimization pr o cess M ( . ) to see k accelera tion of that pr o cess. This can be illustrated with the following example, in which w e first apply N-GMRES with the steep est descent preco nditioners pr op osed in this pap er, to a canonica l tenso r approximation pro blem from [3]. (In par ticular, we co nsider the canonical tensor a p- proximation problem of Figures 1.2 and 1.3 in [3], in whic h a ra nk -three cano nica l tensor approximation (with 450 v aria bles) is sough t fo r a three-way data tensor of size 5 0 × 50 × 50.) Panel (a) of Fig. 4.1 shows how stand-alone steepe s t descent (sdls) is very s low for this pro ble m: it requires more than 30,000 f / g ev aluations. (The tensor calcula tions are performed in matlab using the T ensor T o o lb ox [2 ]. F or this problem, we us e δ = 1 0 − 3 in (2.2).) The GMRES-sdls a nd N-GMRE S-sd conv ergence profiles c onfirm onc e more one of the main mess a ges of this pap er: steep es t-descent preconditioned N-GMRES sp eeds up stand-alo ne steep est descent very significantly . How ever, steepe st descent pr econditioning (whic h we have arg ued is in some sense equiv alent to non-prec onditioned GMRES for linear s y stems) is not p ow erful enough for this difficult pr oblem, and a more adv a nced pr e c onditioner is required. Indeed, Panel (a) of Fig. 4.1 s hows that the stand-alone ALS pr o cess is a lr eady more efficient than s teepe st-descent preconditioned N-GMRES. Panel (b) indicates, howev er , that N-GMRES preconditioned by ALS is a very effective metho d for this problem: it sp eeds up ALS very signficantly , and is m uch faster than N-CG a nd L-BFGS, by a factor of 2 to 3. (Panel (b) o f Fig. 4.1 illus trates the findings from extensive tests com- paring ALS, N-CG and ALS-pr econditioned N-GMRES that w ere rep orted in Pap er I a nd [1].) In the case of GMRES for linear sys tems, non-preconditio ne d GMRES (or: GM- RES with the identit y preconditioner) is often just a starting p oint. F or many difficult problems it conv erges to o s lowly , and there is a v er y extensive and e ver expanding resear ch literature on developing adv anced problem-dependent pre c onditioners that in many cases sp eed up co nv er gence very sig nificantly . In the same wa y , the prese nt pap er is likely not mo r e than a starting p oint in theoretically and numerically estab- lishing the N-GMRES optimization metho d with genera l steepes t descent precondi- tioning pro cess. As the results shown in Fig. 4.1 already indicate, we expect that the r eal p ower of the N-GMRES optimization framew ork will turn o ut to lie in its ability to use p owerful problem-dep endent no nlinear preconditioner s. This s uggests that further exploring N-GMRES optimization with adv anced preconditioner s ma y lead to efficient numerical metho ds for a v ariety of nonlinear optimization problems. Ac knowledgmen ts. This work was spo nsored by the Natura l Sciences and En- gineering Research Co uncil of Canada and b y Lawrence Livermore National Labo- ratory under sub contract B594099 . The research was conducted during a sabbatical visit at the Algorithms and Complexity Department of the Ma x Planck Institute for Informatics in Saar br ueck en, whose hospitality is greatly ac knowledged. Steepest Descen t Preconditioning for N-GMRES Optimization 19 REFERENCES [1] E. Ac ar, D.M. Dunla vy, and T.G. Kold a , A Sc alable Optimization Appr o ach for Fitting Canonic al T ensor De co mp ositions , Journal of Chemometrics, 25 (2011), pp. 67–86. [2] B.W. Bader an d T.G. Kolda , MA TLAB T ensor T o olb ox V ersion 2.4 , h ttp://csmr.ca.sandia.go v/ ∼ tgk olda/T ensorT oolb ox/ , M ar c h 2010. [3] H. De Sterck , A Nonline ar GMRES O ptimization Algorithm for Canonic al T ensor De c om- p osition , submitted to SIAM J. Sci. Comp., 2011, arXi v:1105.5331. [4] D.M. Dunla vy, T.G. K olda, and E. Acar , Poblano v1.0: A Matlab T o olb ox for Gr adient- Base d Optimization , T ec hnical Rep or t SAND2010-1422, Sandia National Labor atories, Al- buquerque, NM and Livermore, CA, March 2010. [5] H. F a ng and Y. Saad , Two classes of multise c ant metho ds for nonline ar ac c elera tion , Numer- ical Linear Algebra with Applications, 16 (2009), pp. 197–221. [6] J.C. Gilber t and J . Nocedal , Glob al Conver ge nc e Pr op erties of Conjugate Gr adient Metho ds for Optimization , SIAM J. Optim., 2 (1992), pp. 21–42. [7] W.W. Hager and H. Zhang , A Survey of Nonline ar Conjugate Gr adient Metho ds , Pacific Journal of Optimization, 2 (2006), pp. 35–58. [8] J.J. Mor ´ e a nd D.J. Thuente , Line se ar ch algorithms with guar ante e d sufficient de cr e ase , ACM T ransactions on Mathematical Softw are, 20 (1994), pp. 286–307. [9] J.J. Mor ´ e, B.S. Garbow, and K.E. Hillstrom , T esting Unc onstr aine d Optimization Soft- war e , ACM T rans. Math. Softw., 7 (1981), pp. 17–41. [10] J. Nocedal and S .J. Wright , Numeric al optimization , Second Edition, Springer, Berl i n, 2006. [11] C.W. Oosterlee , On multigrid for line ar c omplementarity pr oblems with applic ation to Am eric an- st yle options , Electronic T ransactions on Numerical Analysis, 15 (2003), pp. 165–185. [12] C.W. Oosterlee and T. W ashio , Krylov Subsp ac e A c c eler ation of Nonline ar M ultigrid with Applic ation t o R e cir culating Flows , SIAM J. Sci. C omput., 21 (2000), pp. 1670–16 90. [13] Y. Sa ad , A flexible inner-outer pr e c onditione d GMRES algorithm , SIAM J. Sci. Comp., 14 (1993), pp. 461–469. [14] Y. Sa ad , Iter ative Metho ds for Sp arse L ine ar Systems , Second Edi tion, SIAM, Philadelphia, 2003. [15] Y. Sa ad an d M.H. Schul tz , GMRES: A gener alize d minimal r esidual algorithm for solving nonsymmetric line ar systems , SIAM J. Sci. Comp., 7 (1986), pp. 856–869. [16] D.A. Sm ith, W.F. F ord, and A. Sidi , Ext ra p olation metho ds for ve c tor sequenc es , SIAM Rev., 29 (1987), pp. 199–234. [17] H. W alker an d P. Ni , Anderson ac c eler ation f or fixe d-p oint iter ations , to app ear in SIAM J. Numer. Anal (2011). [18] T. W ashio and C.W. Oosterlee , K rylov subsp ac e ac c eler ation for nonline ar multigrid schemes , Electronic T ransactions on Numerical Analysis, 6 (1997) , pp. 271–290 .

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment