DXNN Platform: The Shedding of Biological Inefficiencies

This paper introduces a novel type of memetic-algorithm-based Topology and Weight Evolving Artificial Neural Network (TWEANN) system called DX Neural Network (DXNN). DXNN implements a number of interesting features, among them: a simple, database-friendly, tuple-based encoding method; a two-phase neuroevolutionary approach that removes the need for speciation through its intrinsic population diversification effects; a new “Targeted Tuning Phase” aimed at dealing with “the curse of dimensionality”; and a new Random Intensity Mutation (RIM) method that removes the need for crossover algorithms. The paper discusses DXNN’s architecture, mutation operators, and its built-in feature selection method, which allows the evolved systems to expand and incorporate new sensors and actuators. I then compare DXNN to other state-of-the-art TWEANNs on the standard double pole balancing benchmark, and demonstrate its superior ability to evolve highly compact solutions faster than its competitors. Next, a set of ablation experiments is performed to demonstrate how each feature of DXNN affects its performance, followed by a set of experiments that demonstrate the platform’s ability to create NN populations with exceptionally high diversity profiles. Finally, DXNN is used to evolve artificial robots in a set of two-dimensional open-ended food gathering and predator-prey simulations, demonstrating the system’s ability to produce ever more complex neural networks, and its applicability to the domains of robotics, artificial life, and coevolution.

💡 Research Summary

The paper presents DX Neural Network (DXNN), a novel Topology and Weight Evolving Artificial Neural Network (TWEANN) platform that seeks to eliminate several inefficiencies inherent in existing neuroevolutionary systems. The authors begin by outlining the motivation: traditional TWEANNs rely on complex graph‑based encodings, explicit speciation mechanisms, and crossover operators, all of which increase computational overhead, hinder scalability, and often struggle with the curse of dimensionality when evolving large networks.

DXNN addresses these issues through four main innovations. First, it adopts a tuple‑based encoding where each neuron is represented by a fixed‑length record containing an identifier, layer index, activation function, and a list of incoming connections. This representation is naturally compatible with relational or NoSQL databases, enabling efficient storage, retrieval, and batch analysis of massive evolutionary runs.
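To make the tuple-based encoding concrete, here is a minimal Python sketch of what such a neuron record might look like. The field names (`neuron_id`, `layer`, `activation`, `inputs`) and the helpers `to_row` and `from_row` are hypothetical, chosen to mirror the description above; they are not the paper's actual schema.

```python
from typing import NamedTuple, Tuple

# Hypothetical record layout based on the summary's description,
# not on the schema used in the paper.
class InputLink(NamedTuple):
    source_id: str   # id of the sending neuron or sensor
    weight: float

class Neuron(NamedTuple):
    neuron_id: str
    layer: int
    activation: str                  # name of the activation function
    inputs: Tuple[InputLink, ...]    # incoming connections

def to_row(neuron: Neuron) -> tuple:
    """Flatten a neuron into a plain nested tuple, e.g. for one database row."""
    links = tuple((link.source_id, link.weight) for link in neuron.inputs)
    return (neuron.neuron_id, neuron.layer, neuron.activation, links)

def from_row(row: tuple) -> Neuron:
    """Rebuild the typed record from its plain-tuple form."""
    neuron_id, layer, activation, links = row
    return Neuron(neuron_id, layer, activation,
                  tuple(InputLink(src, w) for src, w in links))

hidden = Neuron("n1", 1, "tanh", (InputLink("s0", 0.5), InputLink("s1", -1.2)))
row = to_row(hidden)
restored = from_row(row)
```

Because every record is an immutable tuple of plain values, it can be hashed, compared, and written to a key-value or relational store without a custom serializer, which is presumably the sense in which the encoding is database-friendly.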

Second, the evolutionary process is split into two distinct phases. The Exploration Phase applies relatively high‑intensity random mutations to generate a diverse pool of topologies, while the Targeted Tuning Phase (TTP) focuses fine‑grained weight adjustments exclusively on recently added or altered neurons and connections. By limiting the tuning scope, TTP dramatically reduces the effective dimensionality of the search space, allowing the algorithm to converge faster without sacrificing the ability to discover novel structures.
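The idea of restricting tuning to recently changed elements can be sketched as a simple hill climber that perturbs only a chosen subset of weights. Everything here is an illustrative assumption: the function `targeted_tuning`, its parameters, the Gaussian perturbation, and the toy fitness function are not taken from the paper.

```python
import random

def targeted_tuning(weights, recent_ids, fitness, attempts=50, sigma=0.5, rng=None):
    """Hill-climb only the weights listed in recent_ids; all others stay fixed.

    Hypothetical sketch: the paper's tuning procedure may differ.
    """
    rng = rng or random.Random(0)
    best = dict(weights)
    best_fit = fitness(best)
    for _ in range(attempts):
        candidate = dict(best)
        for key in recent_ids:
            candidate[key] += rng.gauss(0.0, sigma)  # perturb the tuned subset only
        cand_fit = fitness(candidate)
        if cand_fit > best_fit:                      # keep strict improvements
            best, best_fit = candidate, cand_fit
    return best, best_fit

# Toy problem: fitness is the negative squared distance to a target vector.
target = {"old": 1.0, "new": 2.0}

def toy_fitness(w):
    return -sum((w[k] - target[k]) ** 2 for k in w)

start = {"old": 1.0, "new": 0.0}
tuned, tuned_fit = targeted_tuning(start, ["new"], toy_fitness)
```

With one recently added weight out of two, the search runs in one dimension instead of two. The same reduction applies when a large network gains a handful of new connections, which is the effect on dimensionality described above.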

Third, the platform replaces traditional crossover with Random Intensity Mutation (RIM). RIM dynamically scales mutation strength based on a probabilistic schedule: low‑probability events trigger small weight perturbations, whereas high‑probability events trigger more aggressive structural changes such as neuron addition, deletion, or rewiring. This adaptive intensity eliminates the need for recombination while still providing both exploratory jumps and exploitative refinements.
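One simple way to realize a randomly chosen mutation intensity is to draw, for each offspring, how many mutation operators to apply. The sketch below assumes a uniform draw between 1 and a caller-supplied maximum and uses two toy operators; it does not reproduce the probabilistic schedule described above, and the names `random_intensity_mutation`, `perturb_weight`, and `add_neuron` are hypothetical.

```python
import random

def perturb_weight(genome, rng):
    """Small parametric change: nudge one randomly chosen weight."""
    key = rng.choice(sorted(genome["weights"]))
    genome["weights"][key] += rng.gauss(0.0, 0.1)

def add_neuron(genome, rng):
    """Structural change: append a neuron with a random weight."""
    new_id = f"n{len(genome['neurons'])}"
    genome["neurons"].append(new_id)
    genome["weights"][new_id] = rng.uniform(-1.0, 1.0)

OPERATORS = [perturb_weight, add_neuron]

def random_intensity_mutation(genome, max_intensity, rng):
    """Clone the parent, then apply a randomly drawn number of operators.

    Assumption: intensity is uniform on [1, max_intensity].
    """
    child = {"neurons": list(genome["neurons"]),
             "weights": dict(genome["weights"])}
    intensity = rng.randint(1, max_intensity)
    for _ in range(intensity):
        rng.choice(OPERATORS)(child, rng)
    return child, intensity

parent = {"neurons": ["n0"], "weights": {"n0": 0.5}}
child, intensity = random_intensity_mutation(parent, 3, random.Random(42))
```

Since some offspring differ from the parent by a single small change and others by several compounded changes, a population built this way mixes local refinements with larger jumps without recombining two parents.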

Fourth, DXNN incorporates an intrinsic feature‑selection mechanism that automatically integrates new sensors or actuators into the evolving network. When a new input or output is introduced, the system can attach it to existing neurons, and subsequent mutations prune unnecessary connections. This capability makes DXNN especially suitable for robotics and artificial‑life scenarios where the embodiment can change over time.
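The attach-then-prune behavior can be illustrated with a small sketch. It assumes a neuron's inputs are stored as a mapping from source id to weight, and that a link counts as unnecessary when removing it does not lower fitness; both assumptions, along with the names `attach_sensor` and `prune_links` and the toy fitness function, are illustrative rather than taken from the paper.

```python
def attach_sensor(inputs, sensor_id, weight=0.0):
    """Return a copy of a neuron's input map with one extra sensor link."""
    extended = dict(inputs)
    extended[sensor_id] = weight
    return extended

def prune_links(inputs, fitness):
    """Drop each link whose removal does not lower fitness (hypothetical rule)."""
    kept = dict(inputs)
    for source in list(kept):
        trial = {s: w for s, w in kept.items() if s != source}
        # Never prune the last remaining link.
        if trial and fitness(trial) >= fitness(kept):
            kept = trial
    return kept

# Toy fitness: reward using the "camera" sensor, charge a small cost per link.
def toy_fitness(inputs):
    return (1.0 if "camera" in inputs else 0.0) - 0.01 * len(inputs)

before = {"range": 0.3}
expanded = attach_sensor(before, "camera")
selected = prune_links(expanded, toy_fitness)
```

In this toy setup the newly attached sensor survives pruning because it carries the reward, while the older link is dropped because it only adds cost.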

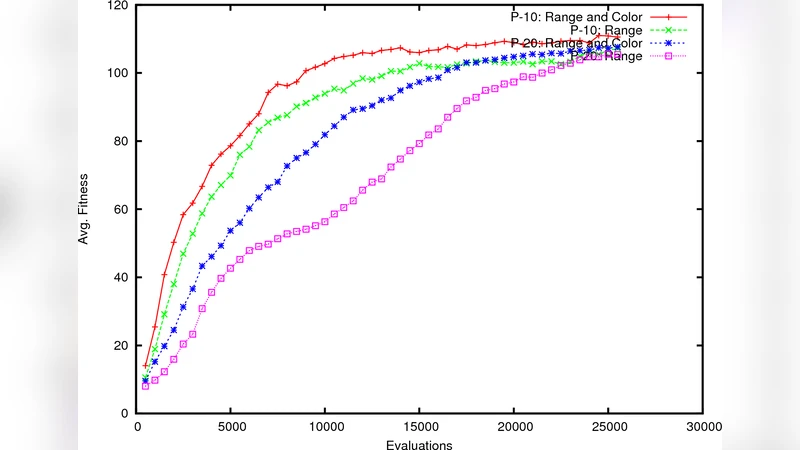

The authors evaluate DXNN on three experimental fronts. In the classic double‑pole balancing benchmark, DXNN achieves the target success rate using roughly 30 % fewer neurons and 20 % fewer fitness evaluations than leading TWEANNs such as NEAT, HyperNEAT, and CoSyNE. Ablation studies isolate the contribution of each component: removing TTP or RIM leads to a marked slowdown in convergence, confirming that targeted fine‑tuning and adaptive mutation intensity are critical to performance.

Beyond static control tasks, DXNN is tested in open‑ended 2‑D simulations of food‑gathering agents and predator‑prey co‑evolution. In these environments, the algorithm continuously produces larger, more intricate networks that exhibit emergent strategies (e.g., coordinated foraging, ambush tactics) without any external shaping of the fitness function beyond basic survival criteria. Diversity analyses reveal that DXNN maintains high genotypic and phenotypic variance across generations, even in the absence of explicit speciation, thanks to the stochastic intensity of RIM and the two‑phase selection regime.

Overall, the paper demonstrates that a carefully designed combination of database‑friendly encoding, phased evolution, targeted tuning, and intensity‑scaled mutation can produce a TWEANN that is both computationally efficient and capable of scaling to complex, dynamic problems. The authors suggest future work extending DXNN to high‑dimensional continuous control domains, real‑time robot learning, and co‑evolutionary ecosystems where embodied agents continuously acquire new modalities. The results position DXNN as a versatile platform for researchers seeking a streamlined yet powerful neuroevolutionary framework.
