On the Weakenesses of Correlation Measures used for Search Engines Results (Unsupervised Comparison of Search Engine Rankings)

The correlation of the result lists provided by search engines is fundamental and it has deep and multidisciplinary ramifications. Here, we present automatic and unsupervised methods to assess whether or not search engines provide results that are comparable or correlated. We have two main contributions: First, we provide evidence that for more than 80% of the input queries - independently of their frequency - the two major search engines share only three or fewer URLs in their search results, leading to an increasing divergence. In this scenario (divergence), we show that even the most robust measures based on comparing lists is useless to apply; that is, the small contribution by too few common items will infer no confidence. Second, to overcome this problem, we propose the fist content-based measures - i.e., direct comparison of the contents from search results; these measures are based on the Jaccard ratio and distribution similarity measures (CDF measures). We show that they are orthogonal to each other (i.e., Jaccard and distribution) and extend the discriminative power w.r.t. list based measures. Our approach stems from the real need of comparing search-engine results, it is automatic from the query selection to the final evaluation and it apply to any geographical markets, thus designed to scale and to use as first filtering of query selection (necessary) for supervised methods.

💡 Research Summary

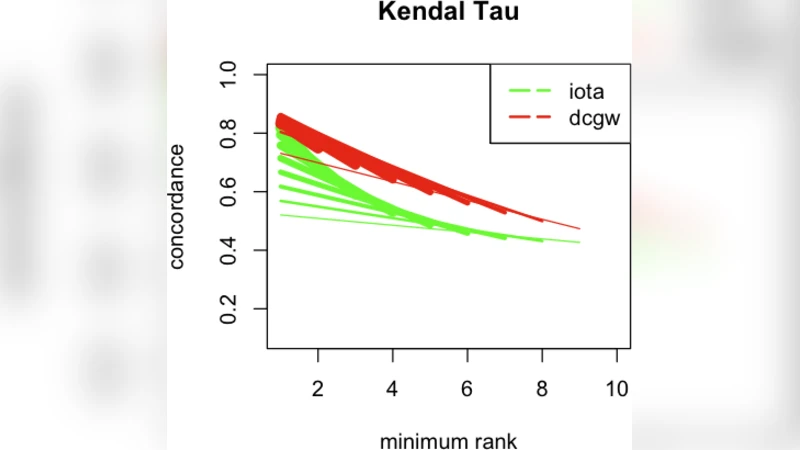

The paper investigates the reliability of traditional correlation measures used to compare search‑engine result rankings and demonstrates that these measures break down when the overlap between the result lists is low—a condition that occurs for more than 80 % of queries in the authors’ dataset. By collecting a large corpus of real‑world queries and the top‑10 results from two major engines (Google and Yahoo), the authors show that in the majority of cases the two engines share three or fewer URLs. Under such “divergence” conditions, set‑based Jaccard similarity, weighted Spearman footrule, and weighted Kendall’s tau all produce values close to zero or otherwise lack statistical confidence, because the few common URLs dominate the calculation while the many non‑common URLs dilute the signal.

To address this fundamental weakness, the authors propose two content‑based similarity measures that operate on the actual landing‑page text rather than on URL identifiers. The first measure constructs a bag‑of‑words representation for each result page, forms a set of unique terms, and computes the Jaccard ratio on these term sets. This approach captures semantic overlap even when the URLs differ. The second measure treats each page as a term‑frequency distribution, builds cumulative distribution functions (CDFs), and applies the φ‑measure (a distribution‑similarity metric) to quantify how closely the two distributions match. The authors argue that these two measures are orthogonal: the set‑based Jaccard captures lexical overlap, while the φ‑measure captures distributional similarity, providing complementary information.

The theoretical contribution includes a concise review of set, list, and distribution similarity, and a formal extension of Spearman’s footrule and Kendall’s tau to weighted, partial lists. By extending partial rankings to full permutations (appending missing items at the end) and defining a consistent weighting scheme, the authors prove that the weighted footrule and weighted Kendall’s tau remain equivalent under their formulation. Normalization to the interval

Comments & Academic Discussion

Loading comments...

Leave a Comment