Adaptive Channel Recommendation For Opportunistic Spectrum Access

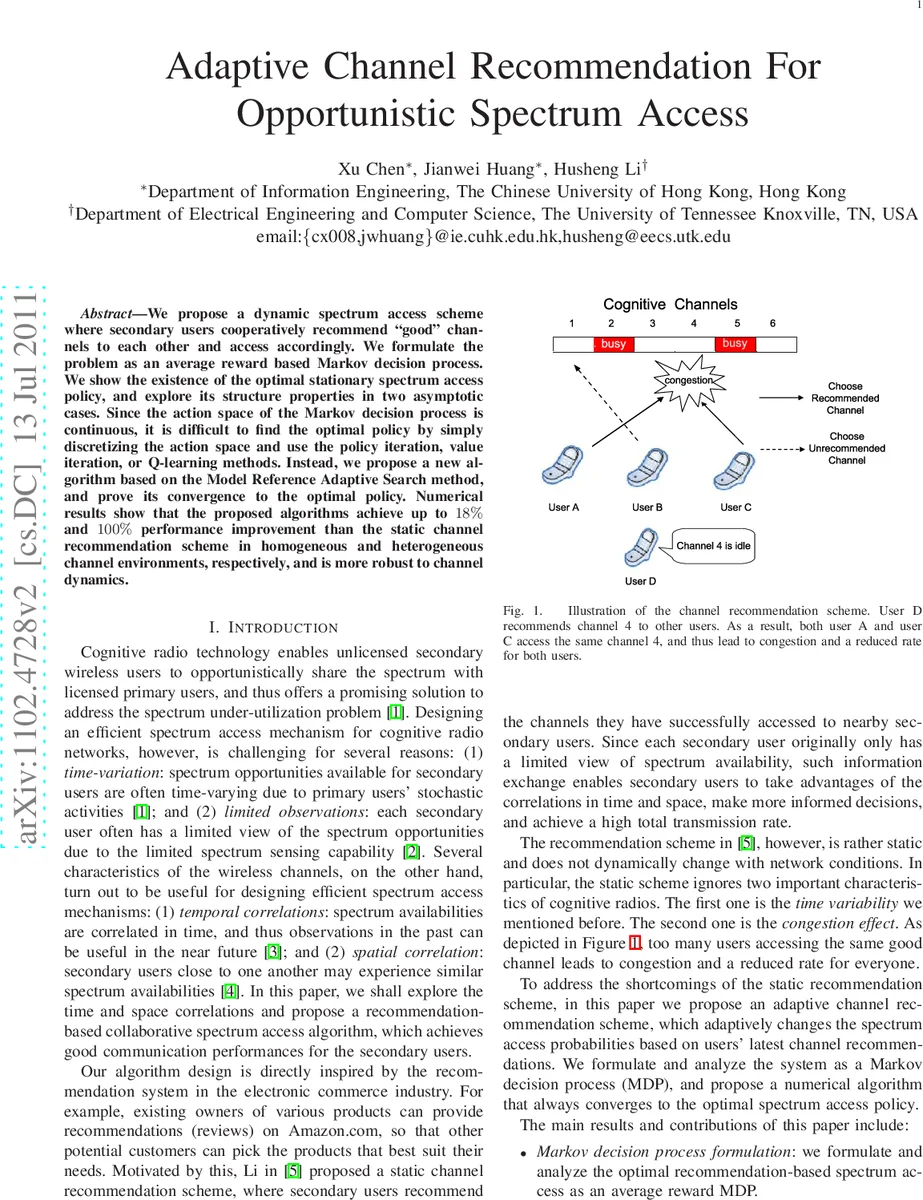

We propose a dynamic spectrum access scheme where secondary users recommend “good” channels to each other and access accordingly. We formulate the problem as an average reward based Markov decision process. We show the existence of the optimal stationary spectrum access policy, and explore its structure properties in two asymptotic cases. Since the action space of the Markov decision process is continuous, it is difficult to find the optimal policy by simply discretizing the action space and use the policy iteration, value iteration, or Q-learning methods. Instead, we propose a new algorithm based on the Model Reference Adaptive Search method, and prove its convergence to the optimal policy. Numerical results show that the proposed algorithms achieve up to 18% and 100% performance improvement than the static channel recommendation scheme in homogeneous and heterogeneous channel environments, respectively, and is more robust to channel dynamics.

💡 Research Summary

The paper addresses the problem of opportunistic spectrum access in cognitive radio networks where secondary users share multiple primary channels. Existing static channel‑recommendation schemes assign a fixed branching probability to recommended channels, which can cause severe congestion when many users select the same “good” channel. To overcome this limitation, the authors propose an adaptive channel‑recommendation framework in which each secondary user broadcasts the ID of a successfully accessed idle channel at the end of every time slot, and all users adjust their branching probability (P_{rec}) based on the number of recommended channels observed in the most recent slot.

The system is modeled as an average‑reward Markov decision process (MDP). The state is the integer (R) representing the number of recommended channels (0 ≤ R ≤ min{M,N}), and the action is the continuous variable (P_{rec}\in(0,1)). Transition probabilities (P_{P_{rec}}(R,R’)) capture how the number of recommended channels evolves given the current state and the chosen branching probability. The reward function is the expected total throughput in the next slot, which equals (R’ \times B) where (B) is the per‑user data rate on an idle channel. The objective is to find a stationary policy (\pi^*) that maximizes the long‑term average throughput for any initial state.

Because the action space is continuous, conventional discrete‑action methods such as policy iteration, value iteration, or Q‑learning are unsuitable. The authors therefore adopt the Model Reference Adaptive Search (MRAS) algorithm, a stochastic optimization technique that iteratively refines a probability distribution over policies. In each iteration, a set of candidate policies is sampled, evaluated, and the top (\rho)‑fraction is used to update the reference distribution by minimizing the Kullback‑Leibler divergence. The paper proves that MRAS converges to the optimal stationary policy under mild conditions.

Two asymptotic regimes are analytically examined. When the number of secondary users (N) tends to infinity, the optimal policy sets (P_{rec}=R/N), ensuring that on average only one user selects each recommended channel. When the number of channels (M) grows without bound, the optimal policy reduces to uniform random selection ((P_{rec}=1/M)). These structural results simplify implementation and provide insight into the behavior of the optimal policy.

Extensive simulations compare the proposed adaptive scheme with the static recommendation method from prior work and with a Q‑learning baseline. In homogeneous channel settings the adaptive algorithm achieves up to 18 % higher average throughput than the static scheme; in heterogeneous environments the improvement reaches 100 %. Moreover, the adaptive approach remains robust when channel transition probabilities become more dynamic, degrading more slowly than the static method.

In summary, the paper contributes a rigorous MDP formulation for collaborative channel recommendation, establishes the existence and structural properties of the optimal stationary policy, introduces a convergent MRAS‑based algorithm for continuous‑action optimization, and demonstrates substantial performance gains and robustness through numerical experiments. This work advances the design of distributed, adaptive spectrum‑access mechanisms for cognitive radio networks.

Comments & Academic Discussion

Loading comments...

Leave a Comment