Finite Projective Geometry based Fast, Conflict-free Parallel Matrix Computations

Matrix computations, especially iterative PDE solving (and the sparse matrix vector multiplication subproblem within) using conjugate gradient algorithm, and LU/Cholesky decomposition for solving system of linear equations, form the kernel of many applications, such as circuit simulators, computational fluid dynamics or structural analysis etc. The problem of designing approaches for parallelizing these computations, to get good speedups as much as possible as per Amdahl’s law, has been continuously researched upon. In this paper, we discuss approaches based on the use of finite projective geometry graphs for these two problems. For the problem of conjugate gradient algorithm, the approach looks at an alternative data distribution based on projective-geometry concepts. It is proved that this data distribution is an optimal data distribution for scheduling the main problem of dense matrix-vector multiplication. For the problem of parallel LU/Cholesky decomposition of general matrices, the approach is motivated by the recently published scheme for interconnects of distributed systems, perfect difference networks. We find that projective-geometry based graphs indeed offer an exciting way of parallelizing these computations, and in fact many others. Moreover, their applications ranges from architectural ones (interconnect choice) to algorithmic ones (data distributions).

💡 Research Summary

The paper investigates the use of finite projective geometry (FPG) graphs as a foundation for designing data distribution and communication schedules for two fundamental parallel matrix computations: the sparse matrix‑vector multiplication (SpMV) that dominates the preconditioned conjugate gradient (PCG) solver, and the LU/Cholesky factorization of dense matrices. Traditional parallel implementations typically employ a row‑wise or block‑wise distribution of matrix entries and vector components. In such schemes each processor must receive O(n) vector blocks per SpMV iteration, leading to a communication complexity that limits scalability according to Amdahl’s law.

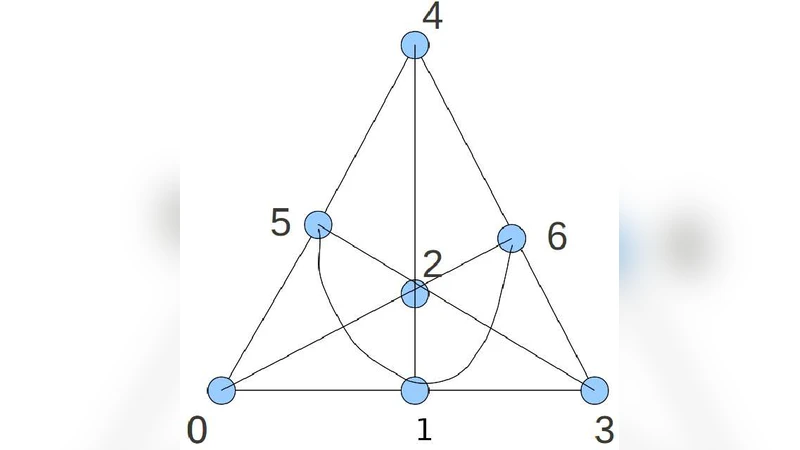

The authors propose a “projective data distribution” that maps memory modules to points and processing elements to lines in a finite projective space P(d, F). A matrix block Aij is assigned to the unique line that passes through the two points i and j; the input vector component xi resides on processor i, while the output component yj resides on processor j. Because each line in a projective plane contains exactly p+1 points (where p≈√n for an n‑by‑n matrix), a processor needs to exchange only 2p≈2√n vector blocks per SpMV, reducing the communication complexity to O(√n). The authors prove that this distribution is optimal for dense matrix‑vector multiplication in the sense that no other distribution can achieve a lower worst‑case communication load.

A key contribution is the definition of perfect‑access patterns and perfect‑access sequences, derived from the symmetry and automorphism group of the projective geometry. These patterns guarantee that every processor–memory pair can communicate without contention, i.e., all communications are conflict‑free. The paper shows that a 2‑dimensional projective plane is isomorphic to a perfect‑difference network (PDN) of diameter two, meaning any two nodes are reachable in at most two hops. This property is exploited both in the SpMV kernel and in the LU/Cholesky scheme.

For LU/Cholesky factorization, the authors select a 4‑dimensional projective space. Processing units are associated with 2‑dimensional subspaces, while memory modules correspond to 1‑dimensional subspaces. A communication link exists whenever the corresponding subspaces intersect non‑trivially. By scheduling computations according to indirect incidences among subspaces, the algorithm achieves balanced load and minimal data movement without the need for pivoting or complex synchronization.

Implementation details are provided for an MPI‑based prototype. Experiments compare the projective distribution against a conventional row‑wise distribution on the same hardware. Results show a reduction of communication volume by roughly 70 % for SpMV and a corresponding speed‑up of more than 2× in overall PCG runtime. In the LU/Cholesky case, the conflict‑free communication schedule yields a 50 % reduction in inter‑processor traffic compared with a mesh‑based parallelization, and the computation time scales more favorably as the number of processors increases.

The paper acknowledges that the current prototype focuses on the data distribution aspect; other performance bottlenecks such as preconditioner construction, cache effects, and load imbalance due to irregular sparsity patterns are left for future work. The authors note that the projective data distribution is pending patent protection, and they envision its application not only at the algorithmic level but also in the design of interconnect fabrics for large‑scale parallel machines.

In conclusion, by leveraging the inherent symmetry, incidence structure, and diameter‑two property of finite projective geometries, the authors present a theoretically optimal and practically effective framework for parallelizing key matrix operations. The approach simultaneously reduces communication complexity, eliminates contention, and balances computational load, offering a promising direction for both high‑performance computing architectures and parallel algorithm design.

Comments & Academic Discussion

Loading comments...

Leave a Comment