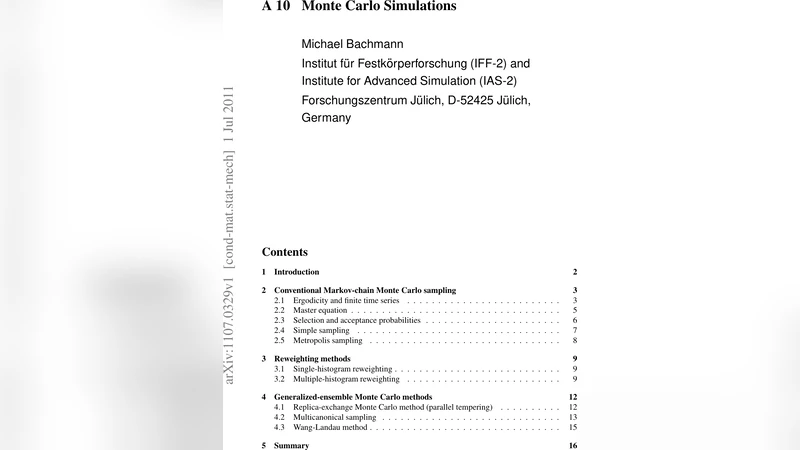

Monte Carlo Simulations

Monte Carlo computer simulations are virtually the only way to analyze the thermodynamic behavior of a system in a precise way. However, the various existing methods exhibit extreme differences in their efficiency, depending on model details and relevant questions. The original standard method, Metropolis Monte Carlo, which provides reliable statistical information only at a given (not too low) temperature, has meanwhile been replaced by more sophisticated methods which are typically far more efficient (the differences in time scales can be compared with the age of the universe). However, none of the methods automatically yields accurate results, i.e., a system-specific adaptation and control is always needed. Thus, as in any good experiment, the most important part of the data analysis is statistical error estimation.

💡 Research Summary

The paper provides a comprehensive review of Monte Carlo (MC) simulation techniques used to study thermodynamic properties of complex systems. It begins by contrasting molecular dynamics (MD), which suffers from prohibitive time‑scale limitations for processes such as protein folding, with MC methods that focus on equilibrium statistics rather than explicit dynamics. The authors argue that MC simulations are indispensable for exploring collective phenomena, phase transitions, and cooperative effects across a wide range of length scales.

The core of the review is organized around three major categories: conventional Markov‑chain Monte Carlo, reweighting methods, and generalized‑ensemble (or extended‑ensemble) techniques.

In the conventional Markov‑chain MC section, the authors lay out the mathematical foundations: ergodicity, finite‑time series, the master equation, and the detailed‑balance condition. They emphasize that each MC update consists of a selection step (probability s) and an acceptance step (probability a). The acceptance rule is expressed in the familiar Metropolis form a = min(1, σ·w), where σ accounts for any asymmetry in the proposal distribution and w is the ratio of target‑ensemble probabilities. Autocorrelation functions A_mn and the associated autocorrelation time τ_ac are introduced to quantify statistical inefficiencies. The effective number of independent samples, M_eff = M/τ_ac, leads to the error estimate ε_O = σ_O / √M_eff, highlighting the central role of error analysis.
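The acceptance rule a = min(1, σ·w) can be illustrated with a minimal sketch. It assumes the canonical ensemble, so that w = exp(−β ΔE), and uses a one-dimensional Ising chain with single-spin flips as a toy model. The model, the function names, and the parameters are illustrative assumptions and are not taken from the paper.

```python
import math
import random


def acceptance_probability(delta_e, beta, sigma=1.0):
    """Metropolis acceptance a = min(1, sigma * w), with w = exp(-beta * delta_e).

    sigma > 0 corrects for an asymmetric proposal; sigma = 1 for symmetric moves.
    Working with logarithms avoids overflow for strongly downhill moves.
    """
    log_a = math.log(sigma) - beta * delta_e
    return 1.0 if log_a >= 0.0 else math.exp(log_a)


def metropolis_ising_chain(n_spins, beta, n_sweeps, rng, coupling=1.0):
    """Sample a periodic 1D Ising chain (n_spins >= 3) with single-spin flips.

    Returns the total energy recorded after each sweep. Toy model chosen for
    illustration only.
    """
    spins = [1] * n_spins
    energy = -coupling * n_spins  # all spins aligned: n_spins bonds of energy -J
    energies = []
    for _ in range(n_sweeps):
        for _ in range(n_spins):
            # Selection step: pick a spin uniformly (symmetric proposal, sigma = 1).
            i = rng.randrange(n_spins)
            neighbours = spins[i - 1] + spins[(i + 1) % n_spins]
            delta_e = 2.0 * coupling * spins[i] * neighbours
            # Acceptance step: flip with probability a.
            if rng.random() < acceptance_probability(delta_e, beta):
                spins[i] = -spins[i]
                energy += delta_e
        energies.append(energy)
    return energies


energy_series = metropolis_ising_chain(16, beta=0.5, n_sweeps=200, rng=random.Random(0))
```

The resulting `energy_series` is a correlated time series, which is why the effective number of samples M_eff, rather than M, enters the error estimate.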

Reweighting methods are then discussed. Single‑histogram reweighting allows one to extrapolate observables from a simulation performed at a given temperature to nearby temperatures using the Boltzmann factor. Multiple‑histogram reweighting (the WHAM approach) combines data from several simulations at different temperatures (or other control parameters) into a single optimal estimate of the density of states g(E). This technique dramatically extends the usable temperature range and improves statistical accuracy without additional simulations.
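A minimal sketch of single-histogram reweighting, written directly on the time series rather than on a binned histogram: each sample taken at inverse temperature β_sim is weighted by exp(−(β_target − β_sim)·E). The function name and argument layout are assumptions made for illustration.

```python
import math


def reweight_mean(energies, observables, beta_sim, beta_target):
    """Estimate <O> at beta_target from samples generated at beta_sim.

    Each sample i gets the weight exp(-(beta_target - beta_sim) * E_i).
    The largest log-weight is subtracted before exponentiating for stability.
    """
    delta_beta = beta_target - beta_sim
    log_weights = [-delta_beta * e for e in energies]
    shift = max(log_weights)
    weights = [math.exp(lw - shift) for lw in log_weights]
    norm = sum(weights)
    return sum(w * o for w, o in zip(weights, observables)) / norm


# Four energy samples taken at beta_sim = 0, reweighted to beta_target = ln 3.
samples = [0.0, 0.0, 0.0, 1.0]
mean_energy = reweight_mean(samples, samples, beta_sim=0.0, beta_target=math.log(3.0))
```

In practice the estimate is only trustworthy near the simulated temperature, because the reweighted distribution must still overlap with energies that were actually sampled.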

The third and most extensive part of the review covers generalized‑ensemble algorithms, which are designed to overcome the sampling bottlenecks caused by rugged energy landscapes. Three representative methods are described in detail:

- Replica‑Exchange Monte Carlo (Parallel Tempering) – Independent replicas are simulated at a set of temperatures. Periodically, neighboring replicas attempt to exchange configurations with a Metropolis‑type acceptance probability that preserves detailed balance. High‑temperature replicas explore configuration space freely, while low‑temperature replicas benefit from occasional swaps that help them cross energy barriers.

- Multicanonical Sampling – The target distribution is flattened by weighting configurations with the inverse of the density of states, w(E) ∝ 1/g(E). As a result, the simulation performs a random walk in energy space, visiting both low‑ and high‑energy regions with equal probability. The required weight factors are typically obtained iteratively from preliminary runs.

- Wang‑Landau Algorithm – This method builds an estimate of g(E) on the fly. Starting with a modification factor f > 1, the algorithm updates g(E) multiplicatively each time an energy level is visited and records a histogram H(E). When H(E) becomes sufficiently flat, f is reduced (commonly by taking its square root) and H(E) is reset. The process repeats until f approaches unity, yielding an accurate estimate of the density of states without prior knowledge.
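The Wang‑Landau procedure described above can be sketched for a toy system whose density of states is known exactly: N independent two‑state spins with energy E equal to the number of "up" spins, so that g(E) is the binomial coefficient C(N, E). The toy model, the flatness threshold of 0.8, the check interval, and the stopping value of ln f are illustrative assumptions, not settings from the paper.

```python
import math
import random


def wang_landau_two_state(n_spins, rng, ln_f_final=1e-4, flatness=0.8, check_every=1000):
    """Estimate ln g(E) for n_spins independent two-state spins, E = number of up spins.

    Returns ln g(E) - ln g(0), since only ratios of g are determined.
    """
    spins = [0] * n_spins
    energy = 0
    ln_g = [0.0] * (n_spins + 1)  # running estimate of ln g(E)
    ln_f = 1.0                    # ln of the modification factor f
    while ln_f > ln_f_final:
        hist = [0] * (n_spins + 1)  # H(E) is reset at the start of each stage
        flat = False
        while not flat:
            for _ in range(check_every):
                i = rng.randrange(n_spins)
                new_energy = energy + (1 - 2 * spins[i])  # +1 if spin i is down, else -1
                # Accept with probability min(1, g(E_old) / g(E_new)).
                log_ratio = ln_g[energy] - ln_g[new_energy]
                if log_ratio >= 0.0 or rng.random() < math.exp(log_ratio):
                    spins[i] = 1 - spins[i]
                    energy = new_energy
                # Multiplicative update g -> f * g at the current energy.
                ln_g[energy] += ln_f
                hist[energy] += 1
            flat = min(hist) >= flatness * sum(hist) / len(hist)
        ln_f *= 0.5  # f -> sqrt(f)
    base = ln_g[0]
    return [value - base for value in ln_g]


ln_g_estimate = wang_landau_two_state(8, random.Random(1))
exact = [math.log(math.comb(8, k)) for k in range(9)]
```

Comparing `ln_g_estimate` with `exact` gives a quick sanity check of the implementation; for a real system no such reference exists, and the flatness criterion and final value of f have to be tuned per problem.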

Throughout the discussion, the authors stress that none of these methods automatically guarantees accurate results. System‑specific adaptation—choice of move sets, tuning of parameters, and careful monitoring of equilibration—is essential. Moreover, rigorous statistical error estimation remains a cornerstone of reliable MC studies. Techniques such as binning analysis, jackknife resampling, and bootstrap are recommended to assess uncertainties, especially when data are correlated.
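As a rough sketch of the error-estimation techniques named above, the following code implements binning analysis and a jackknife over block averages for a correlated time series. The function names are hypothetical, and the number of bins is a choice the user must check: the bins should be much longer than the autocorrelation time.

```python
import math


def block_means(series, n_bins):
    """Average the series over n_bins consecutive blocks of equal length.

    Trailing samples that do not fill a complete block are discarded.
    """
    bin_len = len(series) // n_bins
    if bin_len == 0:
        raise ValueError("more bins than samples")
    return [sum(series[b * bin_len:(b + 1) * bin_len]) / bin_len for b in range(n_bins)]


def binning_error(series, n_bins):
    """Standard error of the mean estimated from block averages (n_bins >= 2)."""
    means = block_means(series, n_bins)
    grand_mean = sum(means) / n_bins
    variance = sum((m - grand_mean) ** 2 for m in means) / (n_bins - 1)
    return math.sqrt(variance / n_bins)


def jackknife_error(blocks, estimator):
    """Jackknife error of estimator(blocks), leaving out one block at a time."""
    n = len(blocks)
    leave_one_out = [estimator(blocks[:i] + blocks[i + 1:]) for i in range(n)]
    centre = sum(leave_one_out) / n
    return math.sqrt((n - 1) / n * sum((t - centre) ** 2 for t in leave_one_out))


# A strongly correlated toy series: values repeat in pairs.
series = [0.0, 0.0, 2.0, 2.0, 0.0, 0.0, 2.0, 2.0]
naive_error = binning_error(series, 8)    # bins of length 1 ignore correlations
binned_error = binning_error(series, 4)   # bins of length 2 absorb the pair correlation
jk_error = jackknife_error(block_means(series, 4), lambda b: sum(b) / len(b))
```

For the plain mean, the jackknife over blocks reproduces the binning error; its advantage appears for nonlinear quantities such as a specific heat or a Binder cumulant, where `estimator` would combine several block averages.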

In the concluding section, the paper reiterates that Monte Carlo simulations are the only practical tool for obtaining precise thermodynamic information for many-body systems with competing interactions and complex energy landscapes. By providing a clear taxonomy of methods, their theoretical underpinnings, practical implementation issues, and strategies for error analysis, the review serves as a valuable guide for researchers seeking to select and tailor the most efficient Monte Carlo approach for their specific scientific problems.
