Pose Estimation from a Single Depth Image for Arbitrary Kinematic Skeletons

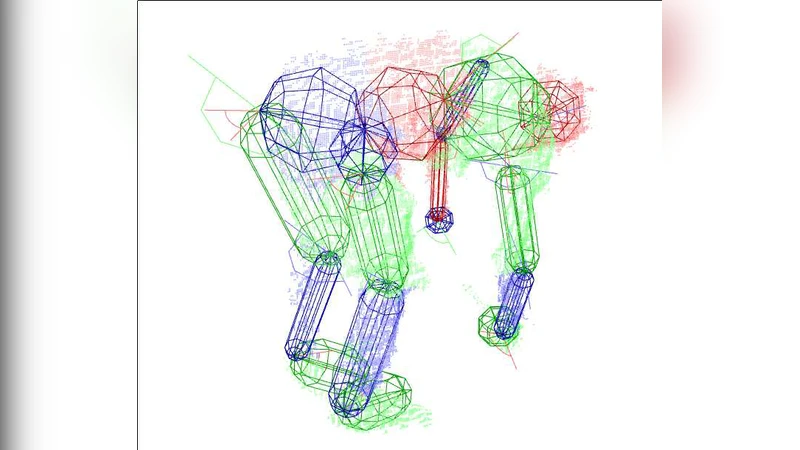

We present a method for estimating pose information from a single depth image given an arbitrary kinematic structure without prior training. For an arbitrary skeleton and depth image, an evolutionary algorithm is used to find the optimal kinematic configuration to explain the observed image. Results show that our approach can correctly estimate poses of 39 and 78 degree-of-freedom models from a single depth image, even in cases of significant self-occlusion.

💡 Research Summary

The paper introduces a novel, training‑free approach for estimating the pose of arbitrary kinematic skeletons from a single depth image. Unlike most contemporary pose‑estimation methods that rely heavily on large labeled RGB datasets and deep learning, this work leverages only the geometric information contained in depth data and formulates pose recovery as a high‑dimensional optimization problem. The authors represent any skeleton as a set of joints and bones, each joint endowed with rotational and translational degrees of freedom (DOFs). A parameter vector x encodes all joint angles and the global transformation of the model.
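One possible way to picture the parameter vector x is sketched below. The `Joint` class, the `GLOBAL_DOFS` constant, the three-joint arm, and its limits are all hypothetical names and values chosen for illustration; the paper's actual data structures are not described in this summary.

```python
from dataclasses import dataclass

@dataclass
class Joint:
    name: str
    parent: int    # index of the parent joint, -1 for the root
    limits: list   # one (low, high) pair in radians per rotational DOF

# Assumption: the global transformation uses 3 translation + 3 rotation values.
GLOBAL_DOFS = 6

def dof_count(skeleton):
    """Total length of the flat parameter vector x for this skeleton."""
    return GLOBAL_DOFS + sum(len(j.limits) for j in skeleton)

def unpack(x, skeleton):
    """Split the flat vector x into the global transform and per-joint angles."""
    if len(x) != dof_count(skeleton):
        raise ValueError("parameter vector has the wrong length")
    pose = {"global": list(x[:GLOBAL_DOFS])}
    i = GLOBAL_DOFS
    for j in skeleton:
        n = len(j.limits)
        pose[j.name] = list(x[i:i + n])
        i += n
    return pose

# Hypothetical 3-joint arm: 3 + 1 + 2 joint DOFs plus 6 global DOFs.
skeleton = [
    Joint("shoulder", -1, [(-1.5, 1.5)] * 3),
    Joint("elbow", 0, [(0.0, 2.5)]),
    Joint("wrist", 1, [(-1.0, 1.0)] * 2),
]
x = [0.0] * dof_count(skeleton)
pose = unpack(x, skeleton)
```

Because the skeleton is only a list of joints with parent indices and limits, the same packing works for any kinematic structure, which is the property the method relies on.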

To fit the model to the observed depth image, the method employs an evolutionary algorithm (EA). An initial population of candidate poses is generated randomly within joint limits and bone‑length constraints. Two standard genetic operators, crossover (partial exchange of parameters between two parents) and mutation (Gaussian perturbation of selected joint angles), maintain diversity and explore the search space. The fitness function, which the EA minimizes, combines two terms: (1) a data term measuring the squared L2 distance between the synthetic depth map D̂(x) rendered from the current pose and the observed depth map D, and (2) a penalty term that discourages violations of joint limits, bone‑length constraints, and self‑collision. Formally, f(x) = ‖D − D̂(x)‖₂² + λ·Penalty(x), where λ weights the penalty against the data term. This weighted‑sum formulation steers the search toward poses that both explain the observed surface and remain physically plausible.
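The loop described above can be sketched on a toy problem. This is a minimal illustration, not the authors' implementation: `render` is a stand-in that returns joint coordinates of a planar two-link arm instead of a depth map, the penalty covers joint limits only, and `LIMITS`, `LAMBDA`, the population size, the selection scheme, and the mutation width are all assumed values. Mutation here perturbs every parameter rather than a selected subset.

```python
import math
import random

LIMITS = [(-math.pi, math.pi), (0.0, 2.5)]  # assumed joint limits (radians)
LAMBDA = 10.0                               # assumed penalty weight

def render(x):
    """Stand-in for the depth renderer: elbow and wrist of a planar 2-link arm."""
    a, b = x
    ex, ey = math.cos(a), math.sin(a)
    wx, wy = ex + math.cos(a + b), ey + math.sin(a + b)
    return [ex, ey, wx, wy]

def penalty(x):
    """Sum of squared joint-limit violations (zero inside the limits)."""
    return sum(max(lo - v, 0.0, v - hi) ** 2 for v, (lo, hi) in zip(x, LIMITS))

def cost(x, observed):
    """f(x) = squared L2 data term + LAMBDA * penalty; lower is better."""
    data = sum((o - r) ** 2 for o, r in zip(observed, render(x)))
    return data + LAMBDA * penalty(x)

def evolve(observed, pop_size=60, generations=150, sigma=0.1, seed=0):
    rng = random.Random(seed)
    # Initial population: random poses drawn within the joint limits.
    pop = [[rng.uniform(lo, hi) for lo, hi in LIMITS] for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=lambda x: cost(x, observed))
        parents = pop[: pop_size // 2]  # truncation selection keeps the best half
        children = []
        while len(parents) + len(children) < pop_size:
            p, q = rng.sample(parents, 2)
            # Uniform crossover: each parameter comes from one of the two parents.
            child = [pi if rng.random() < 0.5 else qi for pi, qi in zip(p, q)]
            # Gaussian mutation applied to every parameter.
            child = [c + rng.gauss(0.0, sigma) for c in child]
            children.append(child)
        pop = parents + children
    return min(pop, key=lambda x: cost(x, observed))

target = [0.7, 1.2]            # hypothetical ground-truth pose
best = evolve(render(target))  # recover it from the "observation" alone
```

Keeping the best half unchanged each generation means the lowest cost found never increases, a common design choice when each fitness evaluation is expensive.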

The authors evaluate the approach on two skeletons of increasing complexity: a 39‑DOF upper‑body model and a full‑body 78‑DOF model. For each model, 500 depth images captured under varied poses and significant self‑occlusion are used. Ground‑truth joint positions are obtained via a motion‑capture system, allowing quantitative assessment. Results show that the EA recovers the 39‑DOF skeleton with an average joint‑position error of 5.2 cm (87 % of frames within 5 cm) and the 78‑DOF skeleton with an average error of 7.8 cm (73 % within 5 cm). In contrast, a conventional Iterative Closest Point (ICP) method fails to converge on many high‑DOF instances, yielding an average error of 12.4 cm and a 35 % failure rate for the full‑body model. Notably, the evolutionary approach remains robust when large portions of the body are hidden, because the global fitness landscape still guides the algorithm toward configurations that satisfy the visible surface constraints while respecting the underlying kinematic structure.
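The two reported statistics could be computed along the following lines. The summary does not say how "within 5 cm" is defined, so this sketch assumes a frame counts when its mean joint error is at most the threshold; the function names and the sample coordinates are invented for illustration.

```python
import math

def joint_errors(pred, truth):
    """Euclidean distance per joint between predicted and ground-truth positions."""
    return [math.dist(p, t) for p, t in zip(pred, truth)]

def summarize(frames, threshold_cm=5.0):
    """frames: list of (predicted_joints, ground_truth_joints), coordinates in cm.

    Returns (mean joint-position error, fraction of frames within threshold).
    """
    per_frame = []
    for pred, truth in frames:
        errs = joint_errors(pred, truth)
        per_frame.append(sum(errs) / len(errs))
    mean_error = sum(per_frame) / len(per_frame)
    within = sum(e <= threshold_cm for e in per_frame) / len(per_frame)
    return mean_error, within

# Two made-up frames with two joints each.
frames = [
    ([(0, 0, 0), (10, 0, 0)], [(3, 4, 0), (10, 0, 0)]),  # joint errors 5 and 0
    ([(0, 0, 0), (0, 0, 0)], [(0, 0, 8), (0, 6, 8)]),    # joint errors 8 and 10
]
mean_error, within = summarize(frames)
```

Under this definition a small number of badly estimated frames can pull the mean above the threshold even when most frames fall inside it, which may be why a mean error above 5 cm can coexist with a large share of frames within 5 cm.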

Despite its accuracy, the method incurs substantial computational cost: on a standard CPU, a single 39‑DOF pose takes about 45 seconds to converge, and a 78‑DOF pose about 78 seconds. The authors acknowledge this limitation and propose several avenues for acceleration, including GPU‑parallel evaluation of the fitness function, adaptive population sizing, and hybrid initialization using a lightweight, pre‑trained shape prior. They also discuss potential extensions such as multi‑sensor fusion (e.g., combining depth with inertial data), real‑time implementation, and application to non‑human articulated systems like animal limbs or robotic manipulators.

In summary, the paper demonstrates that a purely geometric evolutionary optimization can reliably infer complex articulated poses from a single depth frame without any prior learning. This contribution broadens the scope of pose estimation to scenarios where large annotated datasets are unavailable, and it opens new possibilities for robotics, virtual‑reality avatar control, and clinical motion analysis, where rapid deployment and adaptability to novel kinematic structures are essential.
