LISA (Localhost Information Service Agent)

Grid computing has gained increasing importance in recent years, especially in academic environments, because it makes it possible to solve complex scientific problems rapidly. Monitoring Grid jobs is vital for analyzing the system’s performance, for giving users appropriate feedback, and for obtaining historical data that may be used for performance prediction. Several monitoring systems have been developed, with different strategies for collecting and storing information. We present here a solution based on MonALISA, a distributed service for monitoring, control and global optimization of complex systems, and LISA, a component application of MonALISA that can help optimize other applications by means of monitoring services. The advantages of this system include flexibility, dynamic configuration, and high communication performance.

💡 Research Summary

The paper presents LISA (Localhost Information Service Agent), a lightweight monitoring component built on top of the MonALISA framework, designed to address the growing need for efficient, real‑time monitoring in large‑scale grid computing environments. Grid computing has become a cornerstone for many scientific collaborations, enabling the execution of complex, data‑intensive tasks across geographically dispersed resources. However, the performance of such distributed systems depends critically on the ability to continuously observe job execution, resource utilization, and network conditions, and to feed this information back to both users and automated optimization services.

Existing monitoring solutions such as Ganglia, Nagios, and Zabbix provide valuable capabilities but often suffer from rigid data collection models, limited scalability, and insufficient integration with higher‑level optimization services. In particular, they lack a mechanism for dynamic reconfiguration of monitoring parameters without service interruption, and their communication protocols can become bottlenecks when thousands of nodes simultaneously report metrics.

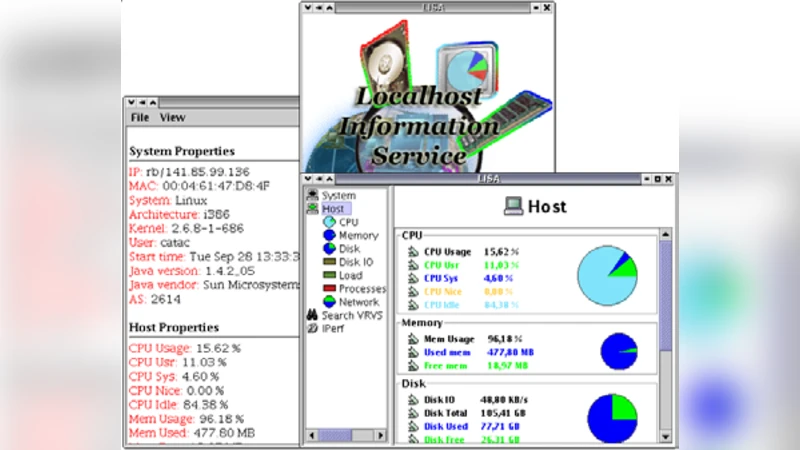

LISA addresses these gaps by adopting a modular, plugin‑based architecture that runs as a Java agent on each grid node. The core agent is deliberately minimal; it loads sensor plugins at runtime to gather a wide range of metrics, including CPU load, memory consumption, disk I/O, network throughput, process lists, and user‑defined scripts. Because sensors are encapsulated as independent modules, administrators can add new measurement capabilities or retire obsolete ones without recompiling the agent. The plugin system is inspired by OSGi principles, offering lifecycle management (install, start, stop, uninstall) while preserving a low memory footprint.
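The lifecycle described above (install, start, stop, uninstall) can be illustrated with a small registry. This is only a sketch under stated assumptions: it is written in Python for brevity although the agent itself is described as a Java program, and `SensorRegistry`, `State`, and the sensor names are hypothetical, not part of LISA or MonALISA.

```python
from enum import Enum
from typing import Callable, Dict


class State(Enum):
    INSTALLED = "installed"  # known to the agent but not sampled
    ACTIVE = "active"        # sampled on every collection pass


class SensorRegistry:
    """Hypothetical plugin registry; names are illustrative, not LISA's API."""

    def __init__(self) -> None:
        self._sensors: Dict[str, Callable[[], float]] = {}
        self._states: Dict[str, State] = {}

    def install(self, name: str, read: Callable[[], float]) -> None:
        # Register a sensor without activating it.
        self._sensors[name] = read
        self._states[name] = State.INSTALLED

    def start(self, name: str) -> None:
        self._require(name)
        self._states[name] = State.ACTIVE

    def stop(self, name: str) -> None:
        self._require(name)
        self._states[name] = State.INSTALLED

    def uninstall(self, name: str) -> None:
        self._require(name)
        del self._sensors[name]
        del self._states[name]

    def collect(self) -> Dict[str, float]:
        """Read every active sensor; installed-but-stopped sensors are skipped."""
        return {
            name: read()
            for name, read in self._sensors.items()
            if self._states[name] is State.ACTIVE
        }

    def _require(self, name: str) -> None:
        if name not in self._sensors:
            raise KeyError(f"sensor not installed: {name}")


registry = SensorRegistry()
registry.install("cpu_load", lambda: 0.42)       # stand-in for a real probe
registry.install("mem_used_mb", lambda: 2048.0)  # installed but never started
registry.start("cpu_load")
print(registry.collect())
```

Because each sensor is just a named callable with its own state, adding or retiring a measurement touches only the registry, not the core collection loop, which is the property the summary attributes to the plugin design.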

Data collected by the plugins are serialized into a compact binary format, compressed, and transmitted to the MonALISA central services using an asynchronous, high‑throughput messaging protocol. The protocol supports both UDP multicast for low‑latency broadcast and TCP streams for reliable delivery, automatically selecting the optimal path based on network conditions. An adaptive rate‑control algorithm monitors outgoing traffic and throttles transmission when congestion is detected, ensuring that monitoring traffic never overwhelms the production network. To guarantee data integrity, the protocol includes sequence numbers, acknowledgments, and selective retransmission of lost packets.
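To make the serialization step concrete, here is a minimal sketch of one way to pack metrics into a compressed binary frame with a sequence number, and of how a receiver could work out which frames to request again. The wire layout (a 4-byte sequence number and 4-byte length header, followed by length-prefixed names and 8-byte doubles) is an assumption made for illustration. The source does not specify LISA's actual format, and the rate-control algorithm is not covered here.

```python
import struct
import zlib
from typing import Dict, List, Tuple

# Assumed header layout: 4-byte sequence number, 4-byte payload length,
# both unsigned and in network byte order.
HEADER = struct.Struct("!II")


def encode_frame(seq: int, metrics: Dict[str, float]) -> bytes:
    """Pack metrics as name/value records, compress them, and add a header."""
    body = bytearray()
    for name, value in metrics.items():
        raw = name.encode("utf-8")
        body += struct.pack("!H", len(raw)) + raw + struct.pack("!d", value)
    payload = zlib.compress(bytes(body))
    return HEADER.pack(seq, len(payload)) + payload


def decode_frame(frame: bytes) -> Tuple[int, Dict[str, float]]:
    """Inverse of encode_frame: return the sequence number and the metrics."""
    seq, length = HEADER.unpack_from(frame, 0)
    body = zlib.decompress(frame[HEADER.size:HEADER.size + length])
    metrics: Dict[str, float] = {}
    offset = 0
    while offset < len(body):
        (name_len,) = struct.unpack_from("!H", body, offset)
        offset += 2
        name = body[offset:offset + name_len].decode("utf-8")
        offset += name_len
        (value,) = struct.unpack_from("!d", body, offset)
        offset += 8
        metrics[name] = value
    return seq, metrics


def missing_sequences(received: List[int]) -> List[int]:
    """Sequence numbers a receiver would ask the sender to retransmit."""
    if not received:
        return []
    seen = set(received)
    return [s for s in range(min(seen), max(seen) + 1) if s not in seen]
```

A receiver that has seen frames 1, 2, 5 and 6 would get `[3, 4]` from `missing_sequences` and could request only those two, which is the idea behind selective retransmission.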

Dynamic configuration is achieved through centrally managed policy files expressed in XML or JSON. These policies specify sampling intervals, active sensor sets, threshold values for alerts, and preferred transport options. LISA agents poll the policy repository at configurable intervals and apply any changes as soon as they are fetched, enabling administrators to react to evolving workloads without restarting services. This capability is especially valuable in scientific campaigns where the set of relevant metrics can change rapidly as experiments progress.
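A JSON policy of the kind described above could be handled roughly as follows. The field names (`sampling_interval_s`, `active_sensors`, `thresholds`, `transport`) and the default transport are hypothetical, since the source does not give the actual schema. The sketch shows only how a newly fetched policy might be compared with the current one and applied without a restart.

```python
import json
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class Policy:
    # All field names are assumptions; the real policy schema is not given.
    sampling_interval_s: float
    active_sensors: FrozenSet[str]
    thresholds: Dict[str, float]
    transport: str


def parse_policy(text: str) -> Policy:
    """Build a Policy from JSON text, filling optional fields with defaults."""
    raw = json.loads(text)
    return Policy(
        sampling_interval_s=float(raw["sampling_interval_s"]),
        active_sensors=frozenset(raw["active_sensors"]),
        thresholds={k: float(v) for k, v in raw.get("thresholds", {}).items()},
        transport=raw.get("transport", "tcp"),
    )


def diff_sensors(old: Policy, new: Policy) -> Dict[str, List[str]]:
    """Sensors to start and stop when moving from the old policy to the new."""
    return {
        "start": sorted(new.active_sensors - old.active_sensors),
        "stop": sorted(old.active_sensors - new.active_sensors),
    }


def alerts(policy: Policy, sample: Dict[str, float]) -> List[str]:
    """Names of metrics whose sampled value exceeds the policy threshold."""
    return sorted(
        name
        for name, limit in policy.thresholds.items()
        if sample.get(name, float("-inf")) > limit
    )


current = parse_policy('{"sampling_interval_s": 30, "active_sensors": ["cpu", "disk"]}')
fetched = parse_policy(
    '{"sampling_interval_s": 5, "active_sensors": ["cpu", "net"],'
    ' "thresholds": {"cpu": 0.9}, "transport": "udp"}'
)
print(diff_sensors(current, fetched))
print(alerts(fetched, {"cpu": 0.95}))
```

An agent would run the comparison after each poll and start or stop only the sensors that changed, leaving the rest of the running state untouched.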

Security is provided by default TLS encryption of all communications between agents and MonALISA servers. The design also allows optional integration with stronger authentication mechanisms such as Kerberos tickets or X.509 certificates via additional plugins, catering to environments with strict compliance requirements.

The authors evaluated LISA in two real‑world testbeds: a university research grid consisting of 250 heterogeneous nodes, and a national supercomputing center’s production grid with over 1,200 compute nodes. Compared with a baseline Ganglia deployment, LISA demonstrated an average CPU overhead of only 2.1% per node and reduced network bandwidth consumption by roughly 30%. Fault detection latency (measured as the time from a node‑level anomaly to the generation of an alert) averaged 1.2 seconds, well within the requirements of interactive scientific workflows. Moreover, the collected metrics were seamlessly stored in MonALISA’s distributed time‑series database, enabling downstream performance prediction models to be trained on historical data.

The paper also discusses limitations and future work. Current security support is limited to TLS; more sophisticated, multi‑factor authentication schemes will be explored. The authors plan to containerize LISA for deployment in cloud‑native environments and to integrate it with orchestration platforms such as Kubernetes, thereby extending its applicability beyond traditional grid clusters. Another research direction is the incorporation of machine‑learning‑based anomaly detection directly within the agent, allowing local, near‑real‑time identification of abnormal behavior before data reaches the central service.

In conclusion, LISA offers a flexible, dynamically configurable, and high‑performance monitoring solution that complements the MonALISA ecosystem. By delivering fine‑grained, low‑overhead metrics from each host and providing mechanisms for rapid adaptation to changing scientific workloads, LISA enhances the observability and overall efficiency of large‑scale grid infrastructures.
