Relative Density-Ratio Estimation for Robust Distribution Comparison

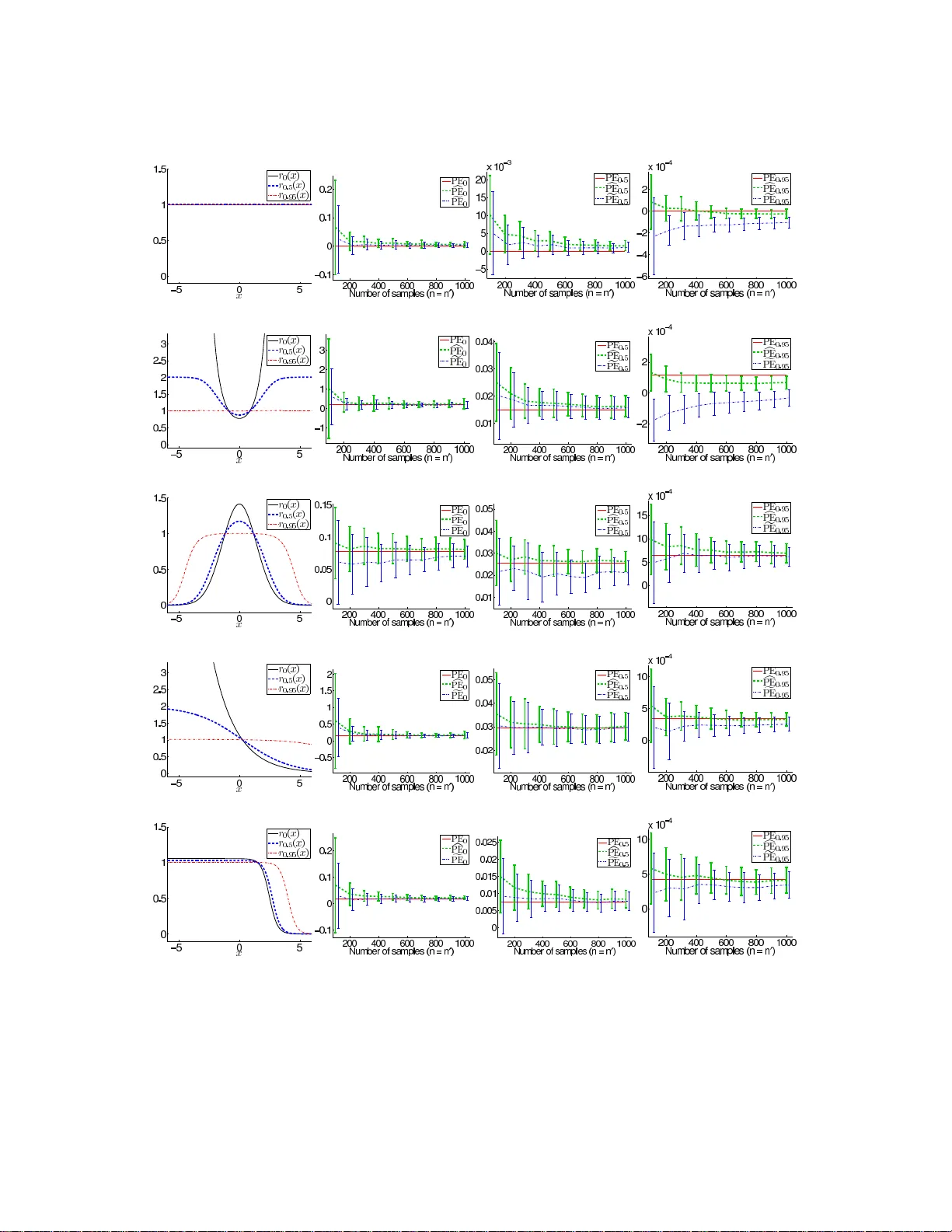

Divergence estimators based on direct approximation of density ratios, without separately approximating the numerator and denominator densities, have been successfully applied to machine learning tasks that involve distribution comparison su…

Authors: Makoto Yamada, Taiji Suzuki, Takafumi Kanamori