Due to space limitations, our submission "Source Separation and Clustering of Phase-Locked Subspaces", accepted for publication in the IEEE Transactions on Neural Networks in 2011, presented some results without proof. Those proofs are provided in this paper.

Source Separation and Clustering of Phase-Locked Subspaces: Derivations and Proofs


GRADIENT OF $|\varrho|^2$ IN RPA

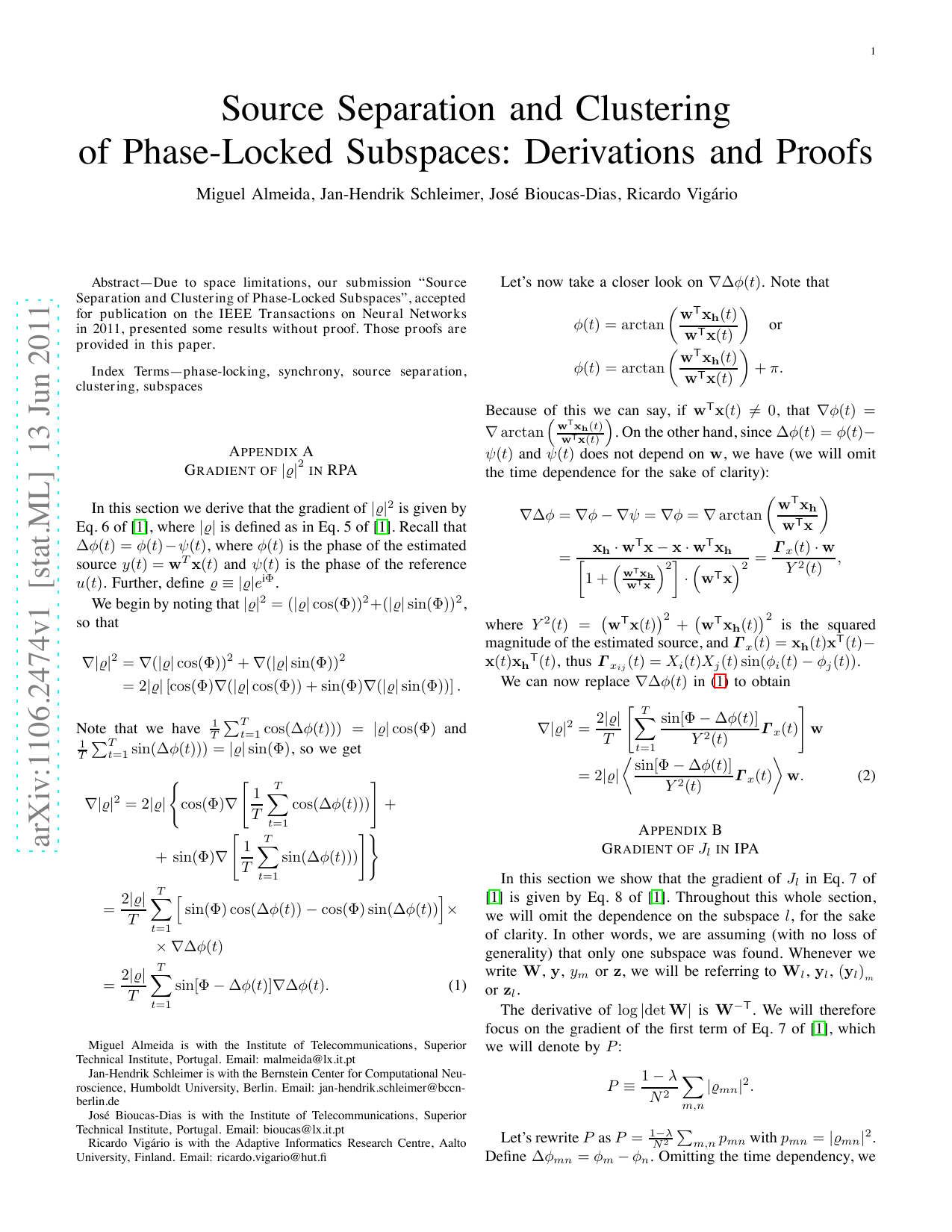

In this section we show that the gradient of $|\varrho|^2$ is given by Eq. 6 of [1], where $|\varrho|$ is defined as in Eq. 5 of [1]. Recall that $\Delta\phi(t) = \phi(t) - \psi(t)$, where $\phi(t)$ is the phase of the estimated source $y(t) = \mathbf{w}^T\mathbf{x}(t)$ and $\psi(t)$ is the phase of the reference $u(t)$. Further, define $\varrho \equiv |\varrho| e^{i\Phi}$.

We begin by noting that $|\varrho|^2 = (|\varrho|\cos\Phi)^2 + (|\varrho|\sin\Phi)^2$, so that
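The step this leads to can be sketched as follows. The sketch assumes (the definition is not restated in this text) that Eq. 5 of [1] defines $\varrho$ as the time average $\langle e^{i\Delta\phi(t)}\rangle$, so that $|\varrho|\cos\Phi = \langle\cos\Delta\phi(t)\rangle$ and $|\varrho|\sin\Phi = \langle\sin\Delta\phi(t)\rangle$. Under that assumption, the chain rule and the identity $\sin(a-b) = \sin a\cos b - \cos a\sin b$ give:

```latex
\begin{align*}
\nabla|\varrho|^2
&= 2|\varrho|\cos\Phi\,\nabla\langle\cos\Delta\phi(t)\rangle
 + 2|\varrho|\sin\Phi\,\nabla\langle\sin\Delta\phi(t)\rangle \\
&= 2|\varrho|\,\big\langle\left[\sin\Phi\cos\Delta\phi(t)
 - \cos\Phi\sin\Delta\phi(t)\right]\nabla\Delta\phi(t)\big\rangle \\
&= 2|\varrho|\,\big\langle\sin\!\big(\Phi-\Delta\phi(t)\big)\,\nabla\Delta\phi(t)\big\rangle .
\end{align*}
```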

Miguel Almeida is with the Institute of Telecommunications, Superior Technical Institute, Portugal. Email: malmeida@lx.it.pt. Jan-Hendrik Schleimer is with the Bernstein Center for Computational Neuroscience, Humboldt University, Berlin. Email: jan-hendrik.schleimer@bccnberlin.de. José Bioucas-Dias is with the Institute of Telecommunications, Superior Technical Institute, Portugal. Email: bioucas@lx.it.pt. Ricardo Vigário is with the Adaptive Informatics Research Centre, Aalto University, Finland. Email: ricardo.vigario@hut.fi.

Let's now take a closer look at $\nabla\Delta\phi(t)$. Note that

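Because of this we can say, if $\mathbf{w}^T\mathbf{x}(t) \neq 0$, that $\nabla\phi(t) = \nabla\arctan\left(\frac{\mathbf{w}^T\mathbf{x}_h(t)}{\mathbf{w}^T\mathbf{x}(t)}\right)$. On the other hand, since $\Delta\phi(t) = \phi(t) - \psi(t)$ and $\psi(t)$ does not depend on $\mathbf{w}$, we have (omitting the time dependence for the sake of clarity):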
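A sketch of this step, assuming that $\mathbf{x}_h(t)$ denotes the Hilbert transform of $\mathbf{x}(t)$. The symbols $\Gamma_x$ and $Y^2$ are shorthand introduced here for illustration and may differ from the notation of [1]:

```latex
\begin{align*}
\nabla\Delta\phi = \nabla\phi
&= \nabla\arctan\frac{\mathbf{w}^T\mathbf{x}_h}{\mathbf{w}^T\mathbf{x}}
 = \frac{(\mathbf{w}^T\mathbf{x})\,\mathbf{x}_h - (\mathbf{w}^T\mathbf{x}_h)\,\mathbf{x}}
        {(\mathbf{w}^T\mathbf{x})^2 + (\mathbf{w}^T\mathbf{x}_h)^2} \\
&= \frac{\Gamma_x\,\mathbf{w}}{Y^2},
\qquad \Gamma_x \equiv \mathbf{x}_h\mathbf{x}^T - \mathbf{x}\,\mathbf{x}_h^T,
\qquad Y^2 \equiv (\mathbf{w}^T\mathbf{x})^2 + (\mathbf{w}^T\mathbf{x}_h)^2 .
\end{align*}
```

The second equality uses $\frac{d}{du}\arctan u = \frac{1}{1+u^2}$ together with the quotient rule.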

where $(\mathbf{w}^T\mathbf{x})^2 + (\mathbf{w}^T\mathbf{x}_h)^2$ is the squared magnitude of the estimated source, and

We can now replace $\nabla\Delta\phi(t)$ in (1) to obtain
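The resulting gradient can be checked numerically. The sketch below is not taken from [1]. It assumes $\varrho = \langle e^{i\Delta\phi(t)}\rangle$ and $\phi(t) = \arctan\big(\mathbf{w}^T\mathbf{x}_h(t) / \mathbf{w}^T\mathbf{x}(t)\big)$, and compares the analytic expression $2|\varrho|\,\langle\sin(\Phi - \Delta\phi)\,\nabla\phi\rangle$ with central finite differences. All function names and the synthetic data are hypothetical.

```python
import numpy as np

def make_demo_data(T=400):
    """Synthetic analytic signals: x holds real parts, xh imaginary parts (one row per channel)."""
    t = np.arange(T)
    amps = np.array([3.0, 0.5, 0.3])
    freqs = np.array([0.10, 0.23, 0.37])
    z = amps[:, None] * np.exp(1j * freqs[:, None] * t[None, :])
    psi = 0.10 * t + 0.4  # phase of a hypothetical reference signal
    return z.real, z.imag, psi

def rho_squared(w, x, xh, psi):
    """|rho|^2 with rho taken as the time average of exp(i * (phi - psi))."""
    phi = np.arctan2(w @ xh, w @ x)
    return np.abs(np.mean(np.exp(1j * (phi - psi)))) ** 2

def grad_rho_squared(w, x, xh, psi):
    """Analytic gradient: 2 |rho| <sin(Phi - dphi) * grad(phi)>."""
    y, yh = w @ x, w @ xh
    dphi = np.arctan2(yh, y) - psi
    rho = np.mean(np.exp(1j * dphi))
    # grad(phi) at each time step: (y * xh - yh * x) / (y^2 + yh^2)
    grad_phi = (y * xh - yh * x) / (y**2 + yh**2)
    return 2 * np.abs(rho) * np.mean(np.sin(np.angle(rho) - dphi) * grad_phi, axis=1)

def numerical_grad(f, w, eps=1e-6):
    """Central finite differences, one coordinate of w at a time."""
    g = np.zeros_like(w)
    for k in range(w.size):
        e = np.zeros_like(w)
        e[k] = eps
        g[k] = (f(w + e) - f(w - e)) / (2 * eps)
    return g

w = np.array([1.0, 0.7, -0.4])
x, xh, psi = make_demo_data()
analytic = grad_rho_squared(w, x, xh, psi)
numeric = numerical_grad(lambda v: rho_squared(v, x, xh, psi), w)
print(np.max(np.abs(analytic - numeric)))
```

The demo data keeps one channel dominant so that $\mathbf{w}^T\mathbf{x}$ and $\mathbf{w}^T\mathbf{x}_h$ never vanish together, which is the condition under which the arctangent form of the phase is valid.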

In this section we show that the gradient of $J_l$ in Eq. 7 of [1] is given by Eq. 8 of [1]. Throughout this section, we omit the dependence on the subspace $l$ for the sake of clarity. In other words, we assume (with no loss of generality) that only one subspace was found. Whenever we write $\mathbf{W}$, $\mathbf{y}$, $y_m$ or $\mathbf{z}$, we are referring to $\mathbf{W}_l$, $\mathbf{y}_l$, $(\mathbf{y}_l)_m$ or $\mathbf{z}_l$.

The derivative of $\log|\det\mathbf{W}|$ is $\mathbf{W}^{-T}$. We will therefore focus on the gradient of the first term of Eq. 7 of [1], which we will denote by $P$:

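Let's rewrite $P$ as $P = \frac{1-\lambda}{N^2}\sum_{m,n} p_{mn}$, with $p_{mn} = |\varrho_{mn}|^2$. Define $\Delta\phi_{mn} = \phi_m - \phi_n$. Omitting the time dependency, we have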

where we have interchanged the partial derivative and the time average operators, and used $\varrho_{mn} = \langle\cos(\Delta\phi_{mn})\rangle + i\,\langle\sin(\Delta\phi_{mn})\rangle$.

Since $\phi_m$ is the phase of the $m$-th estimated source $y_m$, the derivative of $\Delta\phi_{mn}$ with respect to any $\mathbf{w}_j$ is zero unless $m = j$ or $n = j$. In the former case, reasoning similar to that of Appendix A shows that

where

It is easy to see that $\nabla_{\mathbf{w}_j}\Delta\phi_{jk} = -\nabla_{\mathbf{w}_j}\Delta\phi_{kj}$. Furthermore, $p_{mm} = 1$ by definition, hence $\nabla_{\mathbf{w}_j} p_{mm} = 0$ for all $m$ and $j$. From these considerations, the only nonzero terms in the derivative of $P$ are of the form
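A sketch of how the surviving terms combine, assuming $\varrho_{mn} = \langle e^{i\Delta\phi_{mn}}\rangle$, so that $\varrho_{nm}$ is the complex conjugate of $\varrho_{mn}$ and therefore $p_{nm} = p_{mn}$:

```latex
\begin{align*}
\nabla_{\mathbf{w}_j} P
&= \frac{1-\lambda}{N^2}\sum_{m,n}\nabla_{\mathbf{w}_j}\, p_{mn}
 = \frac{1-\lambda}{N^2}\sum_{k\neq j}
   \left(\nabla_{\mathbf{w}_j}\, p_{jk} + \nabla_{\mathbf{w}_j}\, p_{kj}\right) \\
&= \frac{2(1-\lambda)}{N^2}\sum_{k\neq j}\nabla_{\mathbf{w}_j}\, p_{jk} .
\end{align*}
```

Each remaining term $\nabla_{\mathbf{w}_j} p_{jk}$ has the same structure as the gradient of $|\varrho|^2$ in Appendix A, with $\phi_k$ playing the role of the reference phase.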

We now define $\Psi_{jk} \equiv \langle\phi_j - \phi_k\rangle = \langle\Delta\phi_{jk}\rangle$. Plugging this definition into Eq. (5) we obtain

where we again used $\sin(a - b) = \sin a\cos b - \cos a\sin b$ in the last step. Finally,

which is Eq. 8 of [1].
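The first step of this section, that the derivative of $\log|\det\mathbf{W}|$ with respect to $\mathbf{W}$ is $\mathbf{W}^{-T}$, can be checked numerically. The following is a minimal sketch with hypothetical function names and an arbitrary well-conditioned test matrix. It compares the closed form with central finite differences.

```python
import numpy as np

def logabsdet(W):
    """log |det W|, computed stably via slogdet."""
    _, value = np.linalg.slogdet(W)
    return value

def grad_logabsdet(W):
    """Closed-form gradient: the inverse transpose of W."""
    return np.linalg.inv(W).T

def finite_difference(f, W, eps=1e-6):
    """Central finite differences over every entry of W."""
    G = np.zeros_like(W)
    for i in range(W.shape[0]):
        for j in range(W.shape[1]):
            E = np.zeros_like(W)
            E[i, j] = eps
            G[i, j] = (f(W + E) - f(W - E)) / (2 * eps)
    return G

W = np.array([[2.0, 1.0, 0.0],
              [0.5, 3.0, 1.0],
              [0.0, 1.0, 4.0]])
print(np.max(np.abs(grad_logabsdet(W) - finite_difference(logabsdet, W))))
```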

In this section we derive Eq. 10 of [1] for the gradient of J. Recall that J is given by

where the $w_{kj}$ are real coefficients that we want to optimize and the $v_{ik}$ are fixed complex numbers. Also recall that $\mathrm{Re}(\cdot)$ and $\mathrm{Im}(\cdot)$ denote the real and imaginary parts.

We begin by expanding the complex absolute value:

When computing the derivative with respect to $w_{kj}$, only one term in the leftmost sum matters. Thus,

In the sums inside the derivatives, the sum on k can be dropped as only one of those terms will be nonzero. Therefore,

where we used $\bar{v}_i \equiv \sum_k v_{ki}$ to denote the sum of the $i$-th column of $\mathbf{V}$. Similarly,

These results, with the notation $\bar{u}_j \equiv \sum_k v_{jk}$ as the sum of the $j$-th column of $\mathbf{U}$, can be plugged into Eq. (6) to yield

In this section we derive Eq. 9 of [1] for the interaction of an oscillator with the cluster it is part of. We assume that there are $N_j$ oscillators in this cluster, coupled all-to-all with the same coupling coefficient $\kappa$, and that all inter-cluster interactions are weak enough to be disregarded. We begin with Kuramoto's model (Eq. 1 of [1]), omitting the time dependency:

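$$\dot\phi_i = \omega_i + \sum_{k\in c_j}\kappa_{ik}\sin(\phi_k - \phi_i) = \omega_i + \sum_{k\in c_j}\kappa_{ik}\,\frac{e^{i(\phi_k-\phi_i)} - e^{-i(\phi_k-\phi_i)}}{2i}$$

$$= \omega_i + \frac{e^{-i\phi_i}}{2i}\sum_{k\in c_j}\kappa_{ik}\,e^{i\phi_k} - \frac{e^{i\phi_i}}{2i}\sum_{k\in c_j}\kappa_{ik}\,e^{-i\phi_k}.$$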

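We now plug in the definition of the mean field, $\varrho_{c_j} e^{i\Phi_{c_j}} = \frac{1}{N_j}\sum_{k\in c_j} e^{i\phi_k}$, and use $\kappa_{ik} = \kappa$ for all $k \in c_j$, to obtain

$$\dot\phi_i = \omega_i + \frac{N_j e^{-i\phi_i}}{2i}\,\kappa\varrho_{c_j} e^{i\Phi_{c_j}} - \frac{N_j e^{i\phi_i}}{2i}\,\kappa\varrho_{c_j} e^{-i\Phi_{c_j}} = \omega_i + N_j\kappa\varrho_{c_j}\,\frac{e^{i(\Phi_{c_j}-\phi_i)} - e^{-i(\Phi_{c_j}-\phi_i)}}{2i} = \omega_i + N_j\kappa\varrho_{c_j}\sin(\Phi_{c_j}-\phi_i).$$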
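The mean-field identity used in this step can be checked numerically. The sketch below uses hypothetical function names and random phases. For every oscillator in a cluster, it compares the direct sum $\kappa\sum_{k\in c_j}\sin(\phi_k-\phi_i)$ with the mean-field form $N_j\kappa\varrho_{c_j}\sin(\Phi_{c_j}-\phi_i)$, assuming uniform coupling $\kappa$ within the cluster.

```python
import numpy as np

def coupling_direct(phi, i, kappa):
    """kappa * sum over the cluster of sin(phi_k - phi_i)."""
    return kappa * np.sum(np.sin(phi - phi[i]))

def coupling_mean_field(phi, i, kappa):
    """Same quantity written through the cluster mean field rho * exp(i * Phi)."""
    z = np.mean(np.exp(1j * phi))  # mean field of the cluster
    return phi.size * kappa * np.abs(z) * np.sin(np.angle(z) - phi[i])

rng = np.random.default_rng(0)
phi = rng.uniform(-np.pi, np.pi, size=8)  # phases of one hypothetical cluster
kappa = 0.3

direct = np.array([coupling_direct(phi, i, kappa) for i in range(phi.size)])
mean_field = np.array([coupling_mean_field(phi, i, kappa) for i in range(phi.size)])
print(np.max(np.abs(direct - mean_field)))
```

The term with $k = i$ contributes $\sin(0) = 0$ to the direct sum, so it does not matter whether the oscillator itself is included in the cluster sum.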

$\frac{1-\lambda}{N^2}\sum_{m,n} p_{mn}$ with $p_{mn} = |\varrho_{mn}|^2$. Define $\Delta\phi_{mn} = \phi_m - \phi_n$. Omitting the time dependency, we have $\nabla$
