A Novel Adaptive Channel Equalization Method Using Variable Step-Size Partial Rank Algorithm


Authors: Sayed A. Hadei (Student Member, IEEE), Paeiz Azmi (Senior Member, IEEE)

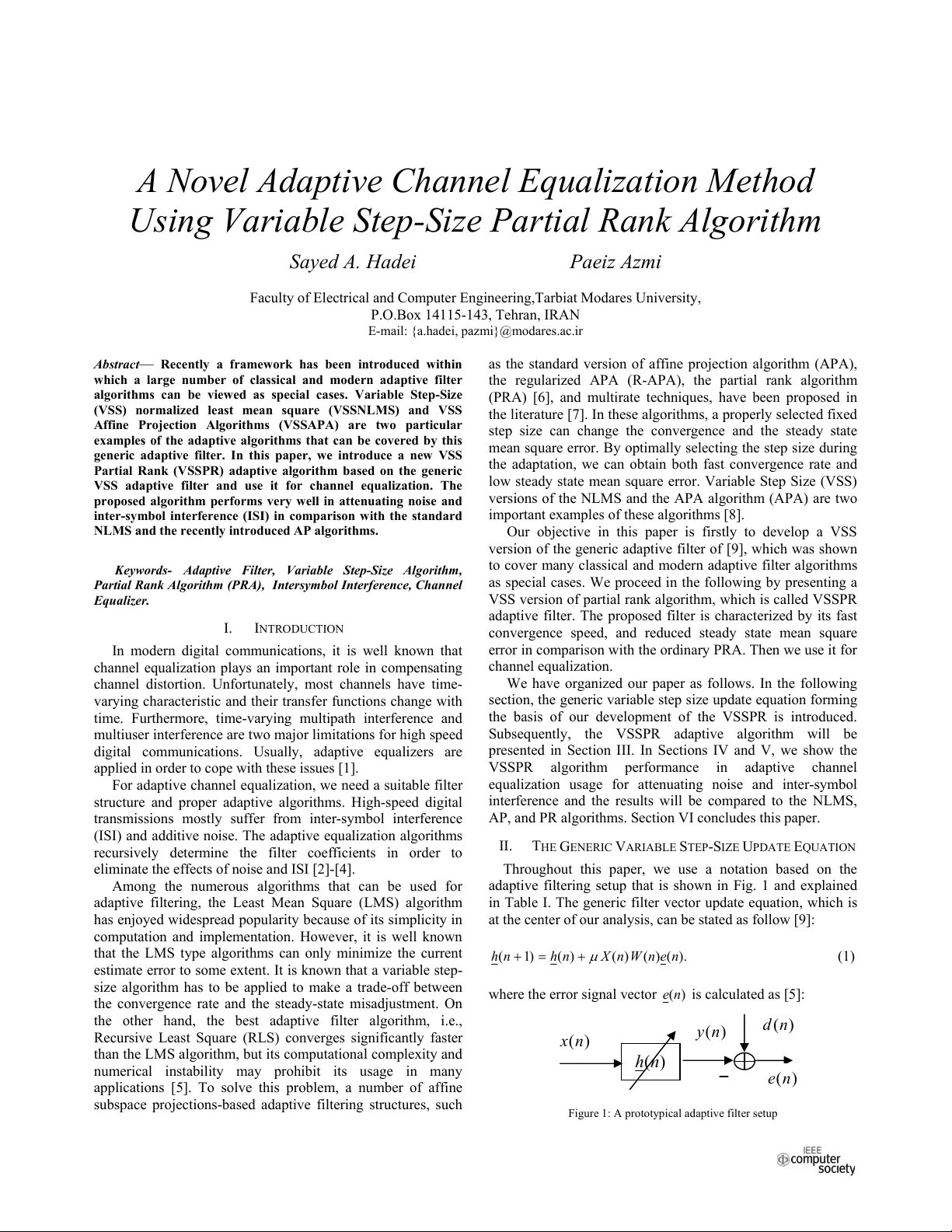

Faculty of Electrical and Computer Engineering, Tarbiat Modares University, P.O. Box 14115-143, Tehran, Iran. E-mail: {a.hadei, pazmi}@modares.ac.ir

Abstract: Recently, a framework has been introduced within which a large number of classical and modern adaptive filter algorithms can be viewed as special cases. The variable step-size (VSS) normalized least mean square (VSSNLMS) and VSS affine projection algorithms (VSSAPA) are two particular examples of adaptive algorithms covered by this generic adaptive filter. In this paper, we introduce a new VSS partial rank (VSSPR) adaptive algorithm based on the generic VSS adaptive filter and use it for channel equalization. The proposed algorithm attenuates noise and inter-symbol interference (ISI) more effectively than the standard NLMS and the recently introduced AP algorithms.

Keywords: Adaptive Filter, Variable Step-Size Algorithm, Partial Rank Algorithm (PRA), Intersymbol Interference, Channel Equalizer.

I. INTRODUCTION

In modern digital communications, channel equalization plays an important role in compensating for channel distortion. Unfortunately, most channels are time-varying, and their transfer functions change with time.
Furthermore, time-varying multipath interference and multiuser interference are two major limitations for high-speed digital communications. Adaptive equalizers are usually applied to cope with these issues [1]. Adaptive channel equalization requires a suitable filter structure and a proper adaptive algorithm. High-speed digital transmissions mostly suffer from inter-symbol interference (ISI) and additive noise, and adaptive equalization algorithms recursively determine the filter coefficients in order to suppress the effects of noise and ISI [2]-[4]. Among the numerous algorithms that can be used for adaptive filtering, the Least Mean Square (LMS) algorithm has enjoyed widespread popularity because of its computational simplicity and ease of implementation. However, it is well known that LMS-type algorithms can minimize the current estimation error only to a limited extent, and that a variable step-size algorithm is needed to balance the trade-off between convergence rate and steady-state misadjustment that a fixed step size imposes. On the other hand, the Recursive Least Squares (RLS) algorithm converges significantly faster than the LMS algorithm, but its computational complexity and potential numerical instability may prohibit its use in many applications [5].
To address these limitations, a number of adaptive filtering structures based on affine subspace projections, such as the standard affine projection algorithm (APA), the regularized APA (R-APA), the partial rank algorithm (PRA) [6], and multirate techniques, have been proposed in the literature [7]. In these algorithms, the choice of the fixed step size determines both the convergence rate and the steady-state mean square error. By optimally selecting the step size during adaptation, we can obtain both a fast convergence rate and a low steady-state mean square error. Variable step-size (VSS) versions of the NLMS algorithm and the APA are two important examples of such algorithms [8]. Our first objective in this paper is to develop a VSS version of the generic adaptive filter of [9], which was shown to cover many classical and modern adaptive filter algorithms as special cases. We then present a VSS version of the partial rank algorithm, called the VSSPR adaptive filter. Compared with the ordinary PRA, the proposed filter is characterized by faster convergence and a reduced steady-state mean square error. We then use it for channel equalization. The paper is organized as follows. In the next section, we introduce the generic variable step-size update equation that forms the basis of our development of the VSSPR. The VSSPR adaptive algorithm is then presented in Section III.
In Sections IV and V, we show the performance of the VSSPR algorithm in adaptive channel equalization, where it is used to attenuate noise and inter-symbol interference, and compare the results with those of the NLMS, AP, and PR algorithms. Section VI concludes the paper.

II. THE GENERIC VARIABLE STEP-SIZE UPDATE EQUATION

Throughout this paper, we use a notation based on the adaptive filtering setup shown in Fig. 1 and explained in Table I. The generic filter vector update equation, which is at the center of our analysis, can be stated as follows [9]:

$$\mathbf{h}(n+1) = \mathbf{h}(n) + \mu\,\mathbf{X}(n)\mathbf{W}(n)\mathbf{e}(n) \tag{1}$$

where the error signal vector $\mathbf{e}(n)$ is calculated as [5]:

$$\mathbf{e}(n) = \mathbf{d}(n) - \mathbf{X}^T(n)\mathbf{h}(n) \tag{2}$$

Figure 1: A prototypical adaptive filter setup, with input $x(n)$, adaptive filter $\mathbf{h}(n)$, output $y(n)$, desired signal $d(n)$, and error $e(n)$.

2010 Sixth Advanced International Conference on Telecommunications, 978-0-7695-4021-4/10 \$26.00 © 2010 IEEE, DOI 10.1109/AICT.2010.85

TABLE I. EXPLANATION OF NOTATIONS

| Symbol | Description |
|---|---|
| $\mathbf{h}(n)$ | Length-$M$ column vector of filter coefficients to be adjusted at each time instant $n$ |
| $\mathbf{x}(n)$ | Length-$M$ vector of input signal samples to the adaptive filter, $\mathbf{x}(n) = [x(n), x(n-1), \ldots, x(n-M+1)]^T$ |
| $\mathbf{e}(n)$ | Length-$L$ vector of error samples, $\mathbf{e}(n) = [e(n), e(n-1), \ldots, e(n-L+1)]^T$ |
| $\mathbf{X}(n)$ | $M \times L$ signal matrix whose columns are $\mathbf{x}(n), \mathbf{x}(n-1), \ldots, \mathbf{x}(n-L+1)$ |
| $\mathbf{W}(n)$ | $L \times L$ symmetric weighting matrix |
| $\mu$ | Step size |

TABLE II. CORRESPONDENCE BETWEEN SPECIAL CASES OF EQ. 1 AND VARIOUS ADAPTIVE FILTERING ALGORITHMS

| $L$ | $\mathbf{W}(n)$ | Algorithm |
|---|---|---|
| 1 | 1 | LMS |
| 1 | $1/\lVert\mathbf{x}(n)\rVert^2$ | NLMS |
| $L \le M$ | $[\mathbf{X}^T(n)\mathbf{X}(n)]^{-1}$ | APA |
| $L \le M$ | $[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}$ | R-APA |

Here $\epsilon$ is a small positive regularization constant. Based on Eq. 1, several adaptive filter algorithms, such as the LMS, NLMS, and AP algorithms, can be derived by specific choices of $L$ and $\mathbf{W}(n)$ [9].
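To make Table II concrete, the following NumPy sketch implements one step of the generic update of Eqs. 1 and 2 and plugs in the four weighting matrices from the table. It is our own illustration, not the authors' code: it uses a real-valued toy system-identification problem with a hypothetical unknown filter `h_true`, white Gaussian input, and assumed values for the step sizes and for the R-APA regularization `eps`. The helper names (`regressor_matrix`, `weighting_matrix`, `generic_update`, `identify`) are hypothetical.

```python
import numpy as np

def regressor_matrix(x, n, M, L):
    """M x L matrix X(n) with columns x(n), x(n-1), ..., x(n-L+1), where
    x(k) = [x[k], x[k-1], ..., x[k-M+1]]^T. Requires n >= M + L - 2."""
    idx = n - np.arange(M)[:, None] - np.arange(L)[None, :]
    return x[idx]

def weighting_matrix(X, algorithm, eps=1e-3):
    """L x L weighting matrix W(n) for the special cases of Table II."""
    L = X.shape[1]
    if algorithm == "LMS":                      # L = 1, W = 1
        return np.eye(1)
    if algorithm == "NLMS":                     # L = 1, W = 1 / ||x(n)||^2
        return np.eye(1) / (X[:, 0] @ X[:, 0])
    if algorithm == "APA":                      # W = [X^T X]^{-1}
        return np.linalg.inv(X.T @ X)
    if algorithm == "R-APA":                    # W = [eps I + X^T X]^{-1}
        return np.linalg.inv(eps * np.eye(L) + X.T @ X)
    raise ValueError(f"unknown algorithm: {algorithm}")

def generic_update(h, X, d, mu, W):
    """One iteration of Eq. 1, using the error vector of Eq. 2."""
    e = d - X.T @ h                             # Eq. 2
    return h + mu * X @ W @ e                   # Eq. 1

def identify(algorithm, mu, L, M=16, N=2000, noise_std=1e-3, seed=0):
    """Toy system identification with Eq. 1; returns the final ||h_t - h||^2."""
    rng = np.random.default_rng(seed)
    h_true = rng.standard_normal(M)             # hypothetical unknown filter h_t
    x = rng.standard_normal(N)                  # white input x(n)
    d_seq = np.convolve(x, h_true)[:N] + noise_std * rng.standard_normal(N)
    h = np.zeros(M)
    for n in range(M + L - 2, N):
        X = regressor_matrix(x, n, M, L)
        d = d_seq[n - np.arange(L)]             # [d(n), d(n-1), ..., d(n-L+1)]^T
        h = generic_update(h, X, d, mu, weighting_matrix(X, algorithm))
    return float(np.sum((h_true - h) ** 2))

for alg, mu, L in [("LMS", 0.01, 1), ("NLMS", 0.5, 1), ("APA", 0.5, 4), ("R-APA", 0.5, 4)]:
    print(f"{alg:6s} (L={L}): final ||h_t - h||^2 = {identify(alg, mu, L):.2e}")
```

Only `W(n)` and `L` change between the four runs; the update itself is shared, which is the point of the unified framework of [9].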
The particular choices and their corresponding algorithms are summarized in Table II. Eq. 1 can be stated in terms of the weight error vector $\tilde{\mathbf{h}}(n) = \mathbf{h}_t - \mathbf{h}(n)$, where $\mathbf{h}_t$ is the unknown true filter vector [10, p. 91, Eq. 4]:

$$\tilde{\mathbf{h}}(n+1) = \tilde{\mathbf{h}}(n) - \mu\,\mathbf{X}(n)\mathbf{W}(n)\mathbf{e}(n) \tag{3}$$

Taking the squared norm and the expectation of both sides of Eq. 3, we have

$$E\{\lVert\tilde{\mathbf{h}}(n+1)\rVert^2\} = E\{\lVert\tilde{\mathbf{h}}(n)\rVert^2\} - 2\mu E\{\mathbf{e}^T(n)\mathbf{B}^T(n)\tilde{\mathbf{h}}(n)\} + \mu^2 E\{\mathbf{e}^T(n)\mathbf{B}^T(n)\mathbf{B}(n)\mathbf{e}(n)\} \tag{4}$$

where $\mathbf{B}(n) = \mathbf{X}(n)\mathbf{W}(n)$. Eq. 4 can be written as

$$E\{\lVert\tilde{\mathbf{h}}(n+1)\rVert^2\} = E\{\lVert\tilde{\mathbf{h}}(n)\rVert^2\} - \Delta(\mu) \tag{5}$$

where

$$\Delta(\mu) = 2\mu E\{\mathbf{e}^T(n)\mathbf{B}^T(n)\tilde{\mathbf{h}}(n)\} - \mu^2 E\{\mathbf{e}^T(n)\mathbf{B}^T(n)\mathbf{B}(n)\mathbf{e}(n)\} \tag{6}$$

If $\Delta(\mu)$ is maximized, the mean-square deviation (MSD) undergoes the largest decrease from iteration $n$ to iteration $n+1$. Setting $\partial\Delta(\mu)/\partial\mu = 0$, the optimum step size $\mu_o(n)$ is found as

$$\mu_o(n) = \frac{E\{\mathbf{e}^T(n)\mathbf{B}^T(n)\tilde{\mathbf{h}}(n)\}}{E\{\mathbf{e}^T(n)\mathbf{B}^T(n)\mathbf{B}(n)\mathbf{e}(n)\}} \tag{7}$$

Introducing the a priori error vector [10, p. 92]

$$\mathbf{e}_a(n) = \mathbf{X}^T(n)\tilde{\mathbf{h}}(n) \tag{8}$$

we find that the error vectors are related to the a priori error vectors as follows:

$$\mathbf{e}(n) = \mathbf{e}_a(n) + \mathbf{v}(n) \tag{9}$$

Assuming that the noise sequence $\mathbf{v}(n)$ is zero-mean, independent and identically distributed, and statistically independent of the regression data, and neglecting the dependency of $\tilde{\mathbf{h}}(n)$ on past noise samples, we establish the following two sub-equations.

Part I:

$$E\{\mathbf{e}^T(n)\mathbf{B}^T(n)\tilde{\mathbf{h}}(n)\} = E\{\tilde{\mathbf{h}}^T(n)\mathbf{X}(n)\mathbf{B}^T(n)\tilde{\mathbf{h}}(n) + \mathbf{v}^T(n)\mathbf{B}^T(n)\tilde{\mathbf{h}}(n)\} = E\{\tilde{\mathbf{h}}^T(n)\mathbf{X}(n)\mathbf{B}^T(n)\tilde{\mathbf{h}}(n)\} \tag{10}$$

Part II:

$$E\{\mathbf{e}^T(n)\mathbf{B}^T(n)\mathbf{B}(n)\mathbf{e}(n)\} = E\{\tilde{\mathbf{h}}^T(n)\mathbf{X}(n)\mathbf{B}^T(n)\mathbf{B}(n)\mathbf{X}^T(n)\tilde{\mathbf{h}}(n)\} + E\{\mathbf{v}^T(n)\mathbf{B}^T(n)\mathbf{B}(n)\mathbf{v}(n)\} = E\{\tilde{\mathbf{h}}^T(n)\mathbf{X}(n)\mathbf{B}^T(n)\mathbf{B}(n)\mathbf{X}^T(n)\tilde{\mathbf{h}}(n)\} + \sigma_v^2\,\mathrm{Tr}(E\{\mathbf{B}^T(n)\mathbf{B}(n)\}) \tag{11}$$

Finally, by defining $\mathbf{C}(n) = \mathbf{B}(n)\mathbf{X}^T(n)$, the optimum step size in Eq. 7 becomes

$$\mu_o(n) = \frac{E\{\tilde{\mathbf{h}}^T(n)\mathbf{C}^T(n)\tilde{\mathbf{h}}(n)\}}{E\{\tilde{\mathbf{h}}^T(n)\mathbf{C}^T(n)\mathbf{C}(n)\tilde{\mathbf{h}}(n)\} + \psi} \tag{12}$$

where

$$\psi = \sigma_v^2\,\mathrm{Tr}(E\{\mathbf{B}^T(n)\mathbf{B}(n)\}) \tag{13}$$

Substituting $\mu_o(n)$ of Eq. 12 for $\mu$ in Eq. 1 yields the general variable step-size update equation, which covers VSSNLMS, VSSAPA, and other VSS algorithms as special cases. We now focus on the development of the VSSPR adaptive filter.

III. THE VARIABLE STEP-SIZE PARTIAL RANK ADAPTIVE FILTER ALGORITHM

The vector update equation for the PRA can be stated as [6]:

$$\mathbf{h}(n+1) = \mathbf{h}(n-L') + \mu\,\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{e}(n) \tag{14}$$

where $L' = \alpha(L-1)$. Selecting $\alpha = 0$ results in the affine projection algorithm. Selecting $\alpha = 1$ yields the partial rank (PR) adaptive filter algorithm, in which the weight vector is updated only once every $L$ iterations. From Eq. 12, the optimum step size can be found as

$$\mu_o(n) = \frac{E\{P(n)\}}{E\{Q(n)\} + \psi} \tag{15}$$

where

$$P(n) = \tilde{\mathbf{h}}^T(n)\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{X}^T(n)\tilde{\mathbf{h}}(n) \tag{16}$$

and

$$Q(n) = \tilde{\mathbf{h}}^T(n)\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{X}^T(n)\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{X}^T(n)\tilde{\mathbf{h}}(n) \tag{17}$$

By defining

$$\mathbf{p}(n) = \mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{X}^T(n)\tilde{\mathbf{h}}(n) \tag{18}$$

and using the approximation [8, p. 134]

$$[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{X}^T(n)\mathbf{X}(n) \approx \mathbf{I} \tag{19}$$

we have $Q(n) = \lVert\mathbf{p}(n)\rVert^2$ and $P(n) \approx \lVert\mathbf{p}(n)\rVert^2$, so that

$$\mu_o(n) = \frac{E\{\lVert\mathbf{p}(n)\rVert^2\}}{E\{\lVert\mathbf{p}(n)\rVert^2\} + \psi} \tag{20}$$

where $\lVert\cdot\rVert^2$ denotes the squared Euclidean norm of a vector and $\psi$ is a positive constant that can be found from

$$\psi = \sigma_v^2\,\mathrm{Tr}\left(E\{[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\}\right) \tag{21}$$

The main problem in calculating the optimum step size from Eq. 20 is that $\mathbf{p}(n)$ is not available, because $\mathbf{h}_t$ is unknown. Therefore, we need to estimate this quantity.
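In a simulation, where the true filter $\mathbf{h}_t$ is known, Eqs. 18 and 20 can be evaluated directly, which shows what the estimator of Eqs. 22-26 has to approximate. The sketch below is our illustration, not the authors' code: it replaces the expectations in Eq. 20 by instantaneous values (an assumption), and the regressor matrix, weight errors, and `psi` value are hypothetical. As $\epsilon \to 0$, $\mathbf{p}(n)$ is the orthogonal projection of the weight error onto the column space of $\mathbf{X}(n)$, so the oracle step size is close to 1 far from the solution and close to 0 near it.

```python
import numpy as np

def projection_vector(X, h_err, eps=1e-6):
    """p(n) of Eq. 18: X (eps I + X^T X)^{-1} X^T h_err, with h_err = h_t - h(n)."""
    L = X.shape[1]
    return X @ np.linalg.solve(eps * np.eye(L) + X.T @ X, X.T @ h_err)

def oracle_step_size(X, h_err, psi, eps=1e-6):
    """Eq. 20 with E{||p(n)||^2} replaced by the instantaneous ||p(n)||^2 (assumption)."""
    q = float(np.sum(projection_vector(X, h_err, eps) ** 2))
    return q / (q + psi)

rng = np.random.default_rng(1)
M, L, psi = 35, 4, 1e-3
X = rng.standard_normal((M, L))          # hypothetical regressor matrix X(n)
far = 10.0 * rng.standard_normal(M)      # large weight error, early in adaptation
near = 1e-4 * rng.standard_normal(M)     # small weight error, near convergence
print(oracle_step_size(X, far, psi))     # close to 1
print(oracle_step_size(X, near, psi))    # close to 0
```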
Taking the expectation of both sides of Eq. 18, we have

$$E\{\mathbf{p}(n)\} = E\{\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{X}^T(n)\tilde{\mathbf{h}}(n)\} \tag{22}$$

Substituting $\mathbf{X}^T(n)\tilde{\mathbf{h}}(n) = \mathbf{e}_a(n) = \mathbf{e}(n) - \mathbf{v}(n)$ (Eqs. 8 and 9) into Eq. 22, and using the fact that the noise is zero-mean and independent of the regression data, yields

$$E\{\mathbf{p}(n)\} = E\{\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{e}(n)\} \tag{23}$$

We can estimate this quantity with the following recursion:

$$\hat{\mathbf{p}}(n) = \beta\,\hat{\mathbf{p}}(n-1) + (1-\beta)\,\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{e}(n) \tag{24}$$

where $\beta$ is a smoothing factor with $0 \le \beta \le 1$. Finally, the recursion for the Variable Step-Size Partial Rank (VSSPR) adaptive filter is given by

$$\mathbf{h}(n+1) = \mathbf{h}(n-L') + \mu(n)\,\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{e}(n) \tag{25}$$

$$\mu(n) = \mu_{\max}\frac{\lVert\hat{\mathbf{p}}(n)\rVert^2}{\lVert\hat{\mathbf{p}}(n)\rVert^2 + \psi} \tag{26}$$

The step size changes with $\lVert\hat{\mathbf{p}}(n)\rVert^2$ and the constant $\psi$, which, as shown in Appendix I, can be approximated as $L/\mathrm{SNR}$. To guarantee update stability, $\mu_{\max}$ is selected to be less than 2 [10]. The final VSSPR adaptive filter algorithm is summarized in Table III.

IV. ADAPTIVE CHANNEL EQUALIZER

The application of adaptive filters considered in this paper is linear channel equalization. The block diagram of a communication system with an adaptive equalizer is shown in Fig. 2, in which data symbols $\{s(\cdot)\}$ are transmitted through a channel and the output sequence is measured in the presence of additive noise $\nu(\cdot)$. The signals $\{s(\cdot), \nu(\cdot)\}$ are assumed to be uncorrelated. The noisy output of the channel, denoted by $x(\cdot)$, is fed into a linear equalizer with $M$ taps. At any particular time instant $n$, the state of the equalizer is given by $\mathbf{x}(n) = [x(n), x(n-1), \ldots, x(n-M+1)]^T$. The goal is to determine the equalizer tap vector $\mathbf{w}$ that estimates the signal $d(n) = s(n-\Delta)$ optimally in the least-mean-squares sense. At each time instant $n$, the symbol $d(n) = s(n-\Delta)$ is compared with the output of the adaptive filter, $\hat{s}(n-\Delta)$, and an error signal $e(n) = d(n) - \mathbf{x}^T(n)\mathbf{w}(n-1)$ is generated.
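The VSSPR step-size rule of Eqs. 24 and 26 (Section III) uses only the error vector and the regressor matrix, not $\mathbf{h}_t$. The real-valued NumPy sketch below is our illustration: the regressor matrix is hypothetical and held fixed for clarity, and the smoothing factor $\beta = 0.9$ and $\epsilon = 10^{-6}$ are assumptions, since the paper does not report the values it used ($\mu_{\max} = 1.7$ and $\psi = 10^{-4}$ are taken from Section V). It shows the intended behavior: a persistently large error drives $\mu(n)$ toward $\mu_{\max}$, and once the error vanishes, $\hat{\mathbf{p}}(n)$ decays geometrically and $\mu(n)$ falls toward zero.

```python
import numpy as np

def vss_step_size(p_hat, X, e, beta=0.9, mu_max=1.7, psi=1e-4, eps=1e-6):
    """Update p_hat with Eq. 24 and return (p_hat, mu) with mu from Eq. 26."""
    L = X.shape[1]
    g = np.linalg.solve(eps * np.eye(L) + X.T @ X, e)   # [eps I + X^T X]^{-1} e(n)
    p_hat = beta * p_hat + (1.0 - beta) * (X @ g)         # Eq. 24
    q = float(p_hat @ p_hat)                              # ||p_hat(n)||^2
    return p_hat, mu_max * q / (q + psi)                  # Eq. 26

rng = np.random.default_rng(2)
M, L = 35, 4
X = rng.standard_normal((M, L))      # hypothetical regressor matrix, held fixed
p_hat = np.zeros(M)

for _ in range(50):                  # large, persistent error: far from the solution
    p_hat, mu_large = vss_step_size(p_hat, X, np.ones(L))
for _ in range(200):                 # zero error: p_hat shrinks by beta at every step
    p_hat, mu_small = vss_step_size(p_hat, X, np.zeros(L))
print(f"mu after large errors: {mu_large:.3f}, after zero errors: {mu_small:.2e}")
```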
The error signal $e(n)$ is then used to adjust the filter coefficients from $\mathbf{w}(n-1)$ to $\mathbf{w}(n)$ by an algorithm such as LMS or NLMS.

TABLE III. VSS PARTIAL RANK ADAPTIVE FILTER ALGORITHM

$$\mathbf{X}(n) = [\mathbf{x}(n), \mathbf{x}(n-1), \ldots, \mathbf{x}(n-L+1)]$$

$$\hat{\mathbf{p}}(n) = \beta\,\hat{\mathbf{p}}(n-1) + (1-\beta)\,\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{e}(n)$$

$$\mu(n) = \mu_{\max}\frac{\lVert\hat{\mathbf{p}}(n)\rVert^2}{\lVert\hat{\mathbf{p}}(n)\rVert^2 + \psi}, \qquad \psi \approx L/\mathrm{SNR}$$

$$\mathbf{h}(n+1) = \mathbf{h}(n-L') + \mu(n)\,\mathbf{X}(n)[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\mathbf{e}(n)$$

In this paper, we propose to adjust these filter coefficients with the VSSPR algorithm. In steady state, the error signal takes small values and, hence, the output $\hat{s}(n-\Delta)$ of the adaptive filter takes values close to $s(n-\Delta)$. Note that this scheme for adaptive channel equalization does not require knowledge of the channel. In practice, following a training phase with a known reference sequence $\{d(n)\}$, an equalizer can continue to operate in one of two modes. In the first mode, its coefficient vector $\mathbf{w}$ is frozen and used thereafter to generate future outputs $\{\hat{s}(n-\Delta)\}$. This mode of operation is appropriate when the training phase is successful enough to yield a reliable estimate $\hat{s}(n-\Delta)$, that is, an estimate that leads to a low probability of error after it is fed into a decision device that maps $\hat{s}(n-\Delta)$ to the closest point in the symbol constellation. However, if the channel varies slowly with time, it may be necessary for the equalizer to continue operating in a decision-directed mode. In this mode, the weight vector of the equalizer continues to be adapted even after the training phase has ended.

V. EXPERIMENTAL RESULTS

In computer simulations, we consider a channel with the transfer function $C(z) = 0.5 + 1.2z^{-1} + 1.5z^{-2} - z^{-3}$ and design an adaptive linear equalizer for it. As shown in Fig. 2, symbols $\{s(n)\}$ are transmitted through the channel and corrupted by additive complex-valued white noise $\{\nu(n)\}$.
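The channel of Section V, the additive noise, and the decision device of Section IV can be simulated in a few lines. In this NumPy sketch (our illustration, with hypothetical helper names), the SNR at the equalizer input is taken as the ratio of the measured power of the noiseless channel output to the noise variance $\sigma_\nu^2$, and the noise is circular complex Gaussian; both are assumptions, since the paper does not spell out its exact definitions. The decision device maps each estimate to the closest point of a given constellation, here unit-energy QPSK.

```python
import numpy as np

C = np.array([0.5, 1.2, 1.5, -1.0])                        # C(z) = 0.5 + 1.2z^-1 + 1.5z^-2 - z^-3
QPSK = np.array([1 + 1j, 1 - 1j, -1 + 1j, -1 - 1j]) / np.sqrt(2)

def qpsk_symbols(n, rng):
    """n unit-energy QPSK symbols, drawn uniformly."""
    return QPSK[rng.integers(0, 4, n)]

def channel(s, snr_db, rng):
    """Filter s by C(z) and add circular complex white Gaussian noise at snr_db."""
    y = np.convolve(s, C)[: len(s)]                          # noiseless channel output
    var_nu = np.mean(np.abs(y) ** 2) / 10 ** (snr_db / 10)   # sigma_nu^2 for the target SNR
    nu = np.sqrt(var_nu / 2) * (rng.standard_normal(len(s)) + 1j * rng.standard_normal(len(s)))
    return y + nu, y, var_nu

def decide(z, constellation):
    """Decision device: map each estimate to the nearest constellation point."""
    nearest = np.argmin(np.abs(z[:, None] - constellation[None, :]), axis=1)
    return constellation[nearest]

rng = np.random.default_rng(3)
s = qpsk_symbols(20000, rng)
x, y, var_nu = channel(s, 30.0, rng)
snr_meas = 10 * np.log10(np.mean(np.abs(y) ** 2) / np.mean(np.abs(x - y) ** 2))
print(f"sigma_nu^2 = {var_nu:.4f}, measured SNR = {snr_meas:.2f} dB")
```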
The received signal $\{x(n)\}$ is processed by the FIR equalizer to generate estimates $\{\hat{s}(n-\Delta)\}$, which are fed into a decision device. The equalizer operates in two modes: a training mode, during which a delayed replica of the input sequence is used as the reference sequence, and a decision-directed mode, during which the output of the decision device replaces the reference sequence. In the training mode, a significant fraction of the channel bandwidth is spent on training. Therefore, the training signal should be optimized to minimize the performance loss caused by channel estimation. In the simulations, the training sequence $\{s(n)\}$ is drawn from a QPSK constellation: the adaptive filter is trained with 500 QPSK symbols, followed by decision-directed operation during 5000 symbols from a 256-QAM constellation.

Figure 2: Adaptive linear equalizer operating in two modes: training mode and decision-directed mode.

The noise variance $\sigma_\nu^2$ has been chosen to enforce an SNR of 30 dB at the input of the equalizer. We have selected $\Delta = 15$ and $M = 35$. The NLMS, PR, AP, and VSSPR algorithms have been used to train the equalizer with $\mu = 0.4$ for NLMS; $\mu = 0.4$, $L = 4$ for PR; $\mu = 0.06$, $L = 4$ for AP; and $\mu_{\max} = 1.7$, $\psi = 0.0001$, $L = 4$ for VSSPR. Figure 3 shows the learning curves, which were obtained by averaging over 300 independent realizations; they show that the VSSPRA converges faster than the other algorithms. Figure 4 shows the symbol error rate (SER) versus the signal-to-noise ratio at the input of the equalizer. The SER of the proposed algorithm is substantially lower than those of the other algorithms. We assumed that the receiver knows the transmitted information sequence when forming the error signal between the desired symbol and its estimate.
Knowledge of the transmitted sequence can be made available during a short training period in which a signal with a known information sequence is transmitted to the receiver for initially adjusting the tap weights. Figure 5 shows the training sequence and the transmitted sequence. Figure 6 shows scatter diagrams of the received sequence and of the equalizer output using NLMS, PRA, APA, and VSSPRA with 500 training symbols. The results show that the output of the equalizer with the VSSPR algorithm is better than those obtained with the other algorithms.

VI. CONCLUSIONS

In this paper, we have developed a new Variable Step-Size Partial Rank (VSSPR) algorithm based on the unified framework of [9]. An application of this algorithm to channel equalization has been presented, and its performance has been compared with those of several other algorithms. The proposed algorithm has a good convergence rate and a lower steady-state mean square error than the ordinary PR, NLMS, and AP algorithms.

ACKNOWLEDGMENT

The authors are very grateful to the anonymous reviewers for their very insightful comments. This work is supported in part by the Iran Telecommunication Research Center (ITRC), Tehran, Iran.

Figure 3: Learning curves (MSE versus iteration number) for (a) NLMS, (b) PRA, (c) APA, and (d) VSSPRA, with $\mu = 0.4$ for NLMS; $\mu = 0.4$, $L = 4$ for PR; $\mu = 0.06$, $L = 4$ for AP; and $\mu_{\max} = 1.7$, $\psi = 0.0001$, $L = 4$ for VSSPR.
Figure 4: SER as a function of the SNR (dB) at the input of the equalizer for (a) 16-QAM and (b) 256-QAM constellations. The adaptive filter is trained with NLMS, PRA, APA, and VSSPRA using 500 QPSK training symbols.

Figure 5: (a) Scatter diagram of the QPSK training sequence. (b) Transmitted data.

Figure 6: (a) Scatter diagram of the received sequence. (b) Output of the equalizer with the NLMS algorithm. (c) Output of the equalizer with the PR algorithm. (d) Output of the equalizer with the AP algorithm. (e) Output of the equalizer with the VSSPR algorithm.

REFERENCES

[1] J. G. Proakis and M. Salehi, Digital Communications, 5th ed. McGraw-Hill, 2008.
[2] I. Lee and J. M. Cioffi, "A fast computation algorithm for the decision feedback equalizer," IEEE Trans. Commun., vol. 43, pp. 2742-2749, Nov. 1995.
[3] T. Wang and C. Wang, "A new block adaptive filtering algorithm for decision feedback equalization of multipath fading channel," IEEE Trans. Circuits Syst. II: Analog and Digital Signal Processing, vol. 44, pp. 877-881, Oct. 1997.
[4] M. S. E. Abadi and J. H. Husøy, "Channel equalization using adaptive matching pursuit algorithm," in Proc. 13th ICEE 2005, vol. 2, Zanjan, Iran, May 10-12, 2005.
[5] A. H. Sayed, Adaptive Filters, Wiley, 2008.
[6] S. G. Kratzer and D. R. Morgan, "The partial-rank algorithm for adaptive beamforming," Proc. SPIE, vol. 564, pp. 9-14, 1985.
[7] K. A. Lee and W. S. Gan, "Improving convergence of the NLMS and affine projection algorithm using constrained subband updates," IEEE Signal Processing Letters, vol. 11, pp. 736-739, 2004.
[8] H. C. Shin, A. H. Sayed, and W. J. Song, "Variable step-size NLMS and affine projection algorithms," IEEE Signal Processing Letters, vol. 11, pp. 132-135, Feb. 2004.
[9] J. H. Husøy and M. S. E. Abadi, "Unified approach to adaptive filters and their performance," IET Signal Processing, vol. 2, no. 2, pp. 97-109, 2008.
[10] H. C. Shin and A. H. Sayed, "Mean-square performance of a family of affine projection algorithms," IEEE Trans. Signal Processing, vol. 52, pp. 90-102, 2004.

APPENDIX I
FINDING AN APPROXIMATION FOR ψ

The positive constant $\psi$ is given by $\psi = \sigma_v^2\,\mathrm{Tr}(E\{[\epsilon\mathbf{I} + \mathbf{X}^T(n)\mathbf{X}(n)]^{-1}\})$. Assuming that $\epsilon$ is small, $\psi$ can be written as

$$\psi \approx \sigma_v^2\,\mathrm{Tr}\left(E\left\{\left(\begin{bmatrix} x(n) & x(n-1) & \cdots & x(n-M+1) \\ x(n-1) & x(n-2) & \cdots & x(n-M) \\ \vdots & \vdots & \ddots & \vdots \\ x(n-L+1) & x(n-L) & \cdots & x(n-L-M+2) \end{bmatrix} \begin{bmatrix} x(n) & x(n-1) & \cdots & x(n-L+1) \\ x(n-1) & x(n-2) & \cdots & x(n-L) \\ \vdots & \vdots & \ddots & \vdots \\ x(n-M+1) & x(n-M) & \cdots & x(n-M-L+2) \end{bmatrix}\right)^{-1}\right\}\right) \tag{27}$$

To approximate Eq. 27, we assume that the sequence $x(n)$ is independent and identically distributed. Under this assumption, $\mathbf{X}^T(n)\mathbf{X}(n)$ is close to diagonal, so we focus only on the diagonal elements of Eq. 27. Simplifying this equation, we obtain

$$\psi \approx \sigma_v^2\,\mathrm{Tr}\left(E\left\{\mathrm{diag}\left(\frac{1}{\lVert\mathbf{x}(n)\rVert^2}, \frac{1}{\lVert\mathbf{x}(n-1)\rVert^2}, \ldots, \frac{1}{\lVert\mathbf{x}(n-L+1)\rVert^2}\right)\right\}\right) \tag{28}$$

Applying the expectation and trace operators, we obtain

$$\psi \approx \sigma_v^2\left(E\left\{\frac{1}{\lVert\mathbf{x}(n)\rVert^2}\right\} + E\left\{\frac{1}{\lVert\mathbf{x}(n-1)\rVert^2}\right\} + \cdots + E\left\{\frac{1}{\lVert\mathbf{x}(n-L+1)\rVert^2}\right\}\right) \tag{29}$$

For a stationary input, the $L$ expectations in Eq. 29 are equal, so Eq. 29 can be stated as

$$\psi \approx L\,\sigma_v^2\,E\left\{\frac{1}{\lVert\mathbf{x}(n)\rVert^2}\right\} \tag{30}$$

Following [8], $\psi$ can then be approximated as $L/\mathrm{SNR}$. Therefore, $\psi$ is inversely proportional to the SNR and proportional to $L$.
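The diagonal approximation behind Eqs. 28-30 can be checked numerically. The Monte Carlo sketch below is ours: it assumes a real-valued, zero-mean, unit-variance i.i.d. Gaussian input, sets $\sigma_v^2 = 1$, and lets $\epsilon \to 0$, as the appendix does; `psi_monte_carlo` is a hypothetical helper. It estimates $\psi$ both from Eq. 27 and from the diagonal approximation of Eq. 29. For a positive definite matrix $\mathbf{A}$, each diagonal entry of $\mathbf{A}^{-1}$ is at least the reciprocal of the corresponding diagonal entry of $\mathbf{A}$, so the diagonal approximation can only underestimate $\psi$. For this input, $\lVert\mathbf{x}(n)\rVert^2$ is chi-square with $M$ degrees of freedom, so $E\{1/\lVert\mathbf{x}(n)\rVert^2\} = 1/(M-2)$ and Eq. 30 predicts $L/(M-2)$.

```python
import numpy as np

def psi_monte_carlo(M=35, L=4, trials=5000, seed=0):
    """Return (exact, diagonal) Monte Carlo estimates of psi / sigma_v^2 (eps -> 0)."""
    rng = np.random.default_rng(seed)
    idx = (M + L - 2) - np.arange(M)[:, None] - np.arange(L)[None, :]
    exact = np.empty(trials)
    diag = np.empty(trials)
    for t in range(trials):
        x = rng.standard_normal(M + L - 1)    # i.i.d. samples; the last one plays x(n)
        X = x[idx]                            # M x L matrix X(n), shift structure kept
        G = X.T @ X
        exact[t] = np.trace(np.linalg.inv(G))  # Eq. 27 for this realization
        diag[t] = np.sum(1.0 / np.diag(G))     # Eqs. 28-29 for this realization
    return exact.mean(), diag.mean()

M, L = 35, 4
exact, diag = psi_monte_carlo(M, L)
print(f"Eq. 27: {exact:.4f}  Eq. 29: {diag:.4f}  Eq. 30 prediction L/(M-2): {L / (M - 2):.4f}")
```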
