Cloud-based Evolutionary Algorithms: An algorithmic study

After a proof of concept using Dropbox(tm), a free storage and synchronization service, showed that an evolutionary algorithm running on several dissimilar computers connected via WiFi or Ethernet scaled well in terms of evaluations per second, it remains to be shown whether that effect also translates into better algorithmic performance. In this paper we test the algorithm on several different, and difficult, problems and examine what effects the automatic load balancing and asynchrony have on how quickly those problems are solved.

💡 Research Summary

This paper investigates the algorithmic performance of a distributed evolutionary algorithm (EA) that leverages a free cloud storage service—Dropbox—as a synchronization medium for multiple heterogeneous computers. While earlier work demonstrated that such a setup scales well in terms of raw evaluations per second, it remained unclear whether this scaling translates into faster convergence to optimal solutions or higher success rates on challenging problems. To answer this, the authors selected two benchmark problems of differing difficulty: P‑Peaks, a multimodal binary problem with 100 peaks of length 64 bits, and the deceptive MMDP (Massively Multimodal Deceptive Problem), composed of 20 sub‑problems each of 6 bits, known for its large number of local optima.
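
For reference, the two benchmarks can be written down as fitness functions. The sketch below uses the commonly cited textbook definitions: P-Peaks scores a bit string by its normalized closeness (N minus Hamming distance, divided by N) to the nearest of P random peaks, and MMDP sums deceptive 6-bit subfunctions scored by unitation (the number of ones in each block). The function names `p_peaks`, `mmdp` and `make_peaks` and the fixed random seed are illustrative assumptions, not taken from the authors' code.

```python
import random

# MMDP subfunction value indexed by unitation (number of ones in a 6-bit block).
# Blocks of all zeros or all ones are global optima; 3 ones is a deceptive local optimum.
MMDP_VALUES = [1.0, 0.0, 0.360384, 0.640576, 0.360384, 0.0, 1.0]


def mmdp(bits, block=6):
    """Sum of deceptive 6-bit subfunctions; the optimum equals len(bits) // block."""
    assert len(bits) % block == 0
    return sum(MMDP_VALUES[sum(bits[i:i + block])] for i in range(0, len(bits), block))


def make_peaks(n_peaks=100, length=64, seed=0):
    """Random peaks for a P-Peaks instance (the seed is an arbitrary choice)."""
    rng = random.Random(seed)
    return [[rng.randint(0, 1) for _ in range(length)] for _ in range(n_peaks)]


def p_peaks(bits, peaks):
    """Normalized closeness to the nearest peak; 1.0 means a peak was hit exactly."""
    n = len(bits)
    closest = max(n - sum(a != b for a, b in zip(bits, peak)) for peak in peaks)
    return closest / n
```

With 20 sub-problems the MMDP string is 120 bits long and its optimum is 20.0; a P-Peaks run succeeds when `p_peaks` returns 1.0.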

Methodology

The EA follows a pool‑based, island‑free model. Each participating node maintains a population of 1,000 binary individuals. Selection is performed via 3‑tournament, variation through bit‑flip mutation and uniform crossover. After each generation, a migration step may occur depending on a predefined migration interval (e.g., every 100, 200, or 400 generations). During migration, the best individual of the local population is written to a shared Dropbox folder (the “pool”), and the best individual currently stored in the pool (potentially contributed by another node) is inserted into the local population, replacing the worst individual. If the best individual has not improved since the previous migration, a random individual is added to preserve diversity. A one‑second pause follows each migration to allow Dropbox’s daemon to propagate the file to all peers.
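
The per-node loop described above can be sketched as follows. This is a minimal single-node illustration under stated assumptions, not the authors' implementation: the pool is modeled as a plain directory (which Dropbox would then synchronize), each node writes its best individual to a hypothetical `<node_id>.json` file, generational replacement and a mutation rate of 1/length are assumed, and the "random individual when the best has not improved" rule is left out.

```python
import json
import random
import time
from pathlib import Path


def tournament(pop, fit, rng, k=3):
    """Return the fittest of k individuals drawn at random (3-tournament by default)."""
    return max(rng.sample(pop, k), key=fit)


def uniform_crossover(a, b, rng):
    """Take each gene from either parent with probability 0.5."""
    return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]


def bit_flip(ind, rng, rate):
    """Flip each bit independently with probability `rate`."""
    return [1 - g if rng.random() < rate else g for g in ind]


def generation(pop, fit, rng, rate):
    """Assumed generational replacement: rebuild the whole population each step."""
    children = []
    for _ in range(len(pop)):
        child = uniform_crossover(tournament(pop, fit, rng), tournament(pop, fit, rng), rng)
        children.append(bit_flip(child, rng, rate))
    return children


def migrate(pop, fit, pool_dir, node_id, pause=1.0):
    """Publish the local best to the shared pool folder, then replace the local
    worst with the best individual found in the pool (possibly from another node)."""
    pool = Path(pool_dir)
    best = max(pop, key=fit)
    tmp = pool / f"{node_id}.tmp"
    tmp.write_text(json.dumps({"fitness": fit(best), "genome": best}))
    tmp.replace(pool / f"{node_id}.json")  # rename into place so a local reader never sees a half-written .json
    time.sleep(pause)  # give the sync daemon time to propagate the file
    entries = [json.loads(p.read_text()) for p in pool.glob("*.json")]
    incoming = max(entries, key=lambda e: e["fitness"])["genome"]
    worst = min(range(len(pop)), key=lambda i: fit(pop[i]))
    pop[worst] = incoming
    return pop


def run(fit, n_bits, pool_dir, node_id, pop_size=1000, interval=100,
        max_gens=1000, target=None, pause=1.0, seed=None):
    """One node's loop; returns (generations used, best individual)."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    for gen in range(1, max_gens + 1):
        pop = generation(pop, fit, rng, rate=1.0 / n_bits)
        best = max(pop, key=fit)
        if target is not None and fit(best) >= target:
            return gen, best
        if gen % interval == 0:
            migrate(pop, fit, pool_dir, node_id, pause)
    return max_gens, max(pop, key=fit)
```

Because every node only reads and writes files, no node ever waits for another; the sole explicit delay is the post-migration `pause`, which plays the role of the one-second wait described above.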

Experimental Setup

Four laptops located at the University of Granada, connected via campus Wi‑Fi, served as the computational nodes. The machines differed in hardware capability and operating system, providing a realistic heterogeneous environment. Experiments were conducted with 1, 2, and 4 nodes, each time varying the migration interval (100, 200, 400 generations). The primary metrics recorded were: (1) success rate (percentage of runs that found the global optimum), (2) wall‑clock time to solution, and (3) total number of evaluations performed (which naturally grows with the number of nodes). The minimum evaluation budget was set to four million to ensure sufficient search depth.
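
The three metrics can be aggregated from per-run records along these lines. This is a small sketch: the `Run` record and `summarize` helper are hypothetical, and averaging time and evaluations over successful runs only is an assumption, since the summary does not say how unsuccessful runs were treated.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Run:
    solved: bool      # did the run reach the global optimum?
    seconds: float    # wall-clock time until the run stopped
    evaluations: int  # evaluations summed over all participating nodes


def summarize(runs):
    """Return (success rate in %, mean seconds, mean evaluations), with the
    last two averaged over successful runs only (an assumption)."""
    ok = [r for r in runs if r.solved]
    rate = 100.0 * len(ok) / len(runs)
    if not ok:
        return rate, None, None
    return rate, mean(r.seconds for r in ok), mean(r.evaluations for r in ok)
```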

Results – P‑Peaks

All configurations achieved a 100 % success rate, confirming that the problem is relatively easy for the chosen EA parameters. The average time to solution was about two minutes, and adding more nodes yielded only marginal improvements. The dominant factor in total runtime was the Dropbox synchronization delay; consequently, larger migration intervals (e.g., every 60 generations) produced slightly faster runs because fewer file transfers reduced overhead. The intermediate migration interval (every 40 generations) offered the most consistent reduction in time, but overall gains remained modest.

Results – MMDP

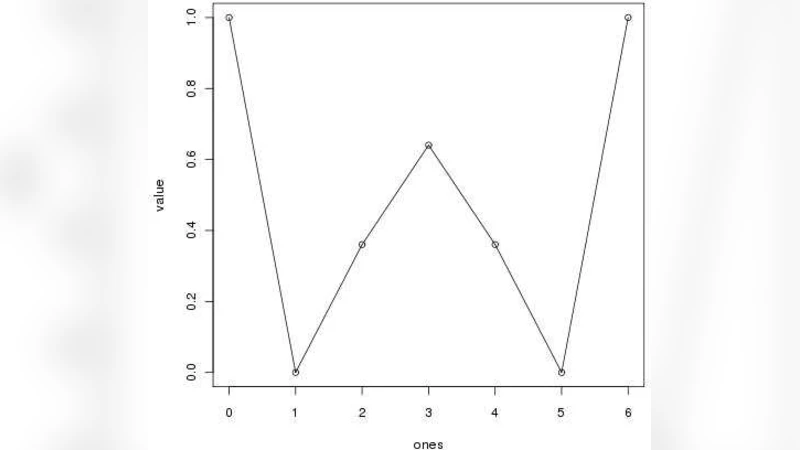

MMDP exhibited a more nuanced behavior. Success rates increased with the number of nodes: a single node solved the problem in roughly 70 % of runs, while four nodes approached 100 % success. The relationship between migration interval and success was non‑monotonic. The highest success (≈ 95 %) occurred with the most frequent migration (every 100 generations), while the second‑best success (≈ 88 %) was observed with the least frequent migration (every 400 generations). An intermediate interval (200 generations) performed worse, suggesting that both excessive and insufficient migration can be detrimental, likely due to a trade‑off between maintaining diversity and exploiting good solutions from other nodes.

Regarding time to solution, adding nodes dramatically reduced the wall‑clock time for MMDP. The best runtime was achieved with four nodes and a migration interval of 100 generations, reflecting the benefit of parallel evaluations. However, very frequent migrations introduce additional synchronization overhead, while very sparse migrations delay the spread of high‑quality individuals and lengthen runtimes. The authors conclude that, for this problem, migrating around every 100 generations strikes a practical balance between these two effects.

Discussion

The study confirms that cloud‑based file synchronization can serve as a lightweight, server‑less mechanism for distributed EAs, automatically achieving load balancing because more powerful nodes contribute more evaluations. Nevertheless, the effectiveness of this approach depends heavily on problem difficulty and the chosen migration policy. For easy problems like P‑Peaks, the overhead of Dropbox outweighs any benefit from parallelism. For deceptive, multimodal problems like MMDP, parallelism yields clear speed‑ups, but only when migration is tuned to avoid both excessive homogenization and insufficient information exchange.
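
The load-balancing argument can be made concrete with a toy calculation. The sketch below ignores communication overhead and uses hypothetical node speeds; it contrasts the asynchronous pool, where each node contributes evaluations in proportion to its own speed, with a hypothetical lock-step design in which every node is held to the slowest node's pace.

```python
def async_vs_barrier(evals_per_second, window_s):
    """Evaluations per node over a wall-clock window.

    async:   each node runs at its own speed, so faster nodes contribute more.
    barrier: hypothetical synchronous design; every node advances in lock-step
             and is limited to the slowest node's rate.
    """
    slowest = min(evals_per_second.values())
    async_per_node = {n: r * window_s for n, r in evals_per_second.items()}
    barrier_per_node = {n: slowest * window_s for n in evals_per_second}
    return async_per_node, barrier_per_node
```

With hypothetical speeds of 4000, 2000 and 1000 evaluations per second over 10 seconds, the asynchronous scheme yields 70,000 evaluations in total against 30,000 for the lock-step one, with the fastest node alone contributing 40,000.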

Limitations and Future Work

Key limitations include the reliance on Dropbox’s proprietary synchronization protocol, which introduces unpredictable latency and limits fine‑grained control over data transfer. The one‑second post‑migration pause is a heuristic that may not be optimal across different network conditions. Moreover, the experiments allowed the total population size to grow with the number of nodes; future studies should keep the global population constant to isolate the pure effect of parallel evaluations. The authors suggest exploring dedicated cloud APIs (e.g., AWS S3, Google Drive) or custom peer‑to‑peer synchronization, implementing adaptive migration intervals based on observed network latency, and extending the benchmark suite to continuous and multi‑objective problems.

In summary, the paper demonstrates that a Dropbox‑mediated, asynchronous, pool‑based EA can achieve higher success rates and reduced solution times on complex optimization tasks when multiple heterogeneous computers are employed. However, careful tuning of migration frequency and consideration of synchronization overhead are essential to realize these benefits.
