Context models on sequences of covers

We present a class of models that, via a simple construction, enables exact, incremental, non-parametric, polynomial-time Bayesian inference of conditional measures. The approach relies on creating a sequence of covers on the conditioning variable and maintaining a different model for each set within a cover. Inference remains tractable by specifying the probabilistic model in terms of a random walk within the sequence of covers. We demonstrate the approach on problems of conditional density estimation; to our knowledge, this is the first closed-form, non-parametric Bayesian approach to this problem.

💡 Research Summary

The paper introduces a novel non‑parametric Bayesian framework for estimating conditional probability measures, with a particular focus on conditional density estimation and variable‑order Markov modeling. The central construct is a “sequence of covers”: a hierarchy of covers (C₁, C₂, …) of the space of input sequences X*, where, unlike in a partition, the sets within a cover may overlap. Each level k provides a cover Cₖ such that any observed input x belongs to at least one set (context) M at every level. This hierarchical covering generalizes traditional context‑tree models and can be instantiated as a tree, a kd‑tree, or a more general lattice structure.
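
To make the idea concrete, here is a minimal sketch of a tree-like sequence of covers on the unit interval [0, 1). Level k splits the interval into 2^k dyadic intervals, so every input x lies in exactly one context per level, and each context is nested inside its parent. The interval representation and the names `dyadic_context`, `contexts_for` and `interval` are illustrative assumptions, not the paper's construction. For convenience, the whole interval is indexed as level 0. A kd-tree applies the same splitting idea coordinate by coordinate in several dimensions.

```python
from fractions import Fraction


def dyadic_context(x: float, level: int) -> tuple[int, int]:
    """Return (level, index) of the level-`level` dyadic interval containing x.

    Level k partitions [0, 1) into 2**k half-open intervals
    [i / 2**k, (i + 1) / 2**k); a partition is a special case of a cover.
    """
    if not 0.0 <= x < 1.0:
        raise ValueError("x must lie in [0, 1)")
    return level, int(x * 2**level)


def contexts_for(x: float, depth: int) -> list[tuple[int, int]]:
    """Contexts containing x, from the whole interval (level 0) down to `depth`."""
    return [dyadic_context(x, k) for k in range(depth + 1)]


def interval(context: tuple[int, int]) -> tuple[Fraction, Fraction]:
    """Exact [lower, upper) bounds of a dyadic context."""
    level, i = context
    return Fraction(i, 2**level), Fraction(i + 1, 2**level)


path = contexts_for(0.3, 3)
print(path)  # [(0, 0), (1, 0), (2, 1), (3, 2)]
```

Because the sets at each level are disjoint here, the random walk described next has only one possible child at every step. Overlapping covers would instead return several contexts per level.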

For each context M, a local probability model φ_M on the output space Y is defined. The choice of which context’s model generates the next observation is governed by a random walk that starts at the coarsest cover and proceeds toward finer (deeper) levels. At each step k, the walk either stops at the current context M with probability w_M (the “stopping probability”) and draws y from φ_M(·|x), or it moves to a child context N in the next cover that also contains x, with transition probability v_{M,N}. The sequence of covers C, the stopping and transition parameters W and V, and the priors Φ over the local models together constitute a “cover model” ξ = (C, W, V, Φ). Sampling a full context model (P, f) from ξ involves drawing each φ_M from its prior, a Bernoulli variable d^w_M ~ Bernoulli(w_M), and a multinomial variable d^v_M ~ Multinomial(v_M). The context map f(x) is then the shallowest context on the path selected by the d^v draws for which d^w_M = 1.
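
In the tree-like special case, each context has exactly one child containing x, so the transitions v are deterministic and only the stopping probabilities matter. The sketch below samples the stop indicators d^w_M along x's path and returns the shallowest context whose indicator is 1. It also computes the induced prior over where the walk stops, P(stop at k) = w_k ∏_{j<k} (1 − w_j). The context labels and the choice w = 1 at the deepest context, which guarantees that the walk terminates, are our own assumptions.

```python
import random


def sample_context(path, w, rng):
    """Sample f(x): walk from the coarsest context toward finer ones and return
    the first context whose Bernoulli(w_M) stop indicator d^w_M equals 1.

    Indicators are drawn lazily, only for contexts the walk actually reaches,
    which gives the same distribution over f(x) as drawing them all up front.
    """
    for context in path:
        if rng.random() < w[context]:
            return context
    return path[-1]  # not reached when the deepest context has w = 1


def stop_distribution(path, w):
    """Prior probability that the walk stops at each context on the path."""
    probs, reach = [], 1.0
    for context in path:
        probs.append(reach * w[context])  # reach M, then stop there
        reach *= 1.0 - w[context]         # reach M, then continue deeper
    return probs


path = ["M0", "M1", "M2", "M3"]  # coarsest to finest; hypothetical labels
w = {"M0": 0.5, "M1": 0.5, "M2": 0.5, "M3": 1.0}
print(stop_distribution(path, w))  # [0.5, 0.25, 0.125, 0.125]
```

With overlapping covers, the loop would additionally draw d^v_M to choose which of several children containing x to visit next.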

Given observations (x₁,…,x_t) and (y₁,…,y_t), the posterior over the cover model can be updated incrementally. The key recursion (equations (9)–(12) in the paper) expresses the predictive distribution ψ_t(y_{t+1}|x_{t+1}) as a mixture of the local predictive densities weighted by the current stopping probabilities, while the stopping and transition parameters are themselves updated by Bayes’ rule using the newly observed (x_{t+1}, y_{t+1}). The update formulas are closed‑form because the local models are chosen from conjugate families (e.g., Dirichlet for discrete Y, Normal‑Wishart for continuous Y). Consequently, inference remains exact and runs in polynomial time with respect to the number of reachable contexts.
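
For the tree-like case, the mixture and the Bayes-rule updates reduce to a short recursion computed from the deepest context upward. Writing φ_k for the local predictive probability of the observed y at the k-th context on x’s path, the recursion is q_D = φ_D(y), q_k = w_k φ_k(y) + (1 − w_k) q_{k+1}, ψ(y|x) = q_0, and w_k ← w_k φ_k(y) / q_k. This is the familiar context-tree-weighting style recursion. We offer it as a sketch consistent with the description above, not as a transcription of the paper’s equations (9)–(12), and it assumes that the deepest context always stops (w_D = 1).

```python
def predict_and_update(w, phi):
    """One exact Bayesian step along a chain of nested contexts (tree case).

    w[k]   -- current posterior probability of stopping at context k, given
              that the walk reaches it; index 0 is the coarsest context, and
              the deepest context must have w[-1] == 1.
    phi[k] -- local predictive probability phi_k(y | x) of the observed y.
    Returns (psi, new_w): the mixture predictive psi(y | x) and updated weights.
    """
    if len(w) != len(phi) or w[-1] != 1.0:
        raise ValueError("need matching lengths and w[-1] == 1")
    q = [0.0] * len(w)
    below = 0.0  # predictive of the sub-walk strictly deeper than context k
    for k in reversed(range(len(w))):
        q[k] = w[k] * phi[k] + (1.0 - w[k]) * below
        below = q[k]
    # Bayes' rule on each context's stop indicator, given the walk reached it.
    new_w = [w[k] * phi[k] / q[k] for k in range(len(w))]
    return q[0], new_w


psi, new_w = predict_and_update([0.5, 0.5, 1.0], [0.1, 0.3, 0.9])
print(psi, new_w)
```

In this example ψ = 0.35, and the stopping weights of the two coarser contexts fall to about 0.143 and 0.25 because they predicted y worse than the deepest context. In a full learner, this step runs once per observation and is followed by a conjugate update of each local model on the path, for example by incrementing Dirichlet counts. Contexts off the path are unchanged.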

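Complexity depends on how densely the covers overlap. If a single context at the coarsest level contains x, and the number of contexts containing x grows by at most a factor ζ from level k to level k+1, then the number of reachable contexts up to depth d is at most 1 + ζ + … + ζ^d = (ζ^{d+1}−1)/(ζ−1). For tree‑like partitions (ζ = 1), this reduces to d + 1, so each update costs time linear in the depth. For ζ > 1, the count grows exponentially with d, which is why the covers should overlap only modestly. In every case, the cost of an update is linear in the number of reachable contexts.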
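
The bound is a geometric series. The short sketch below evaluates its closed form and checks it against the direct sum. The function names are ours, and integer ζ is assumed for simplicity.

```python
def reachable_bound(zeta: int, depth: int) -> int:
    """Closed-form bound on contexts containing x at levels 0..depth, assuming
    one context at level 0 and growth by at most a factor zeta per level."""
    if zeta == 1:
        return depth + 1  # limit of (zeta**(d+1) - 1) / (zeta - 1) as zeta -> 1
    return (zeta ** (depth + 1) - 1) // (zeta - 1)  # exact: the division has no remainder


def reachable_by_sum(zeta: int, depth: int) -> int:
    """The same quantity as an explicit sum 1 + zeta + ... + zeta**depth."""
    return sum(zeta ** k for k in range(depth + 1))


for zeta in (1, 2, 3):
    print(zeta, [reachable_bound(zeta, d) for d in range(5)])
# 1 [1, 2, 3, 4, 5]
# 2 [1, 3, 7, 15, 31]
# 3 [1, 4, 13, 40, 121]
```

The rows show the contrast directly. With ζ = 1, the count grows by one per level. With ζ = 2 or 3, it roughly multiplies by ζ per level.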

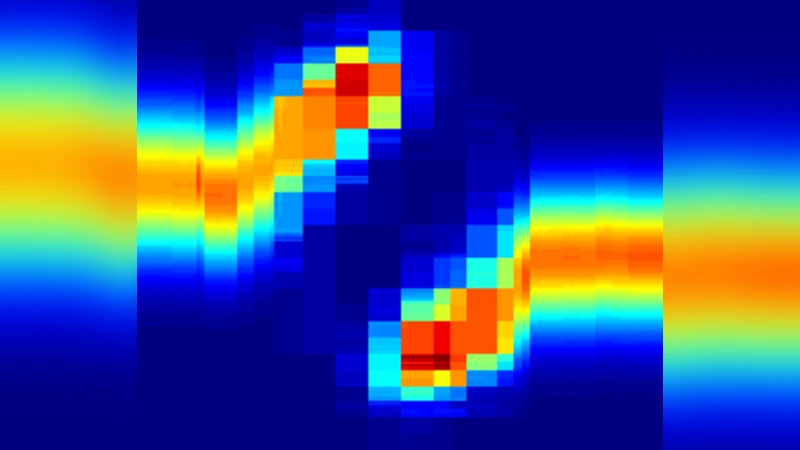

Two concrete applications illustrate the framework. First, variable‑order Markov models are obtained by partitioning the space of input sequences according to their suffixes, as in a suffix tree. Only stopping probabilities are needed, because each node has at most one child containing the current suffix. The resulting model coincides with earlier context‑tree weighting schemes, but here it is derived from the more general cover‑walk perspective. Second, conditional density estimation employs a kd‑tree to partition a continuous input space. At each node, two alternative local density estimators are available: a conjugate Normal‑Wishart model, or a Bayesian tree density estimator that extends Hutter’s method to higher dimensions. The random walk selects among these alternatives, yielding a double‑pseudo‑tree structure that supports exact, incremental updating of the conditional density ψ(y|x).
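
A compact online sketch of the variable-order Markov case follows. It rests on our own assumptions: a finite alphabet; suffix contexts of length 0 to `depth`, with the history left-padded by a `None` marker so that every path has the same length; Dirichlet(α) local models, where α = 1/2 gives the Krichevsky–Trofimov estimator; a common prior stopping probability; and forced stopping at the maximum depth. The class `VariableOrderMarkov` and its methods are illustrative, not an interface from the paper.

```python
from collections import defaultdict


class VariableOrderMarkov:
    """Online Bayesian variable-order Markov predictor (sketch).

    Contexts are suffixes of the history of length 0..depth, coarsest first.
    Each context keeps Dirichlet(alpha) symbol counts and a posterior stop weight.
    """

    def __init__(self, alphabet, depth, alpha=0.5, prior_stop=0.5):
        self.alphabet = list(alphabet)
        self.depth = depth
        self.alpha = alpha
        self.prior_stop = prior_stop
        self.counts = defaultdict(lambda: defaultdict(float))
        self.stop = {}  # posterior stop weights; missing entries use the prior

    def _path(self, history):
        # Left-pad with a boundary marker so every path has depth + 1 contexts.
        padded = [None] * self.depth + list(history)
        n = len(padded)
        return [tuple(padded[n - k:]) for k in range(self.depth + 1)]

    def _local(self, context, symbol):
        # Dirichlet-multinomial predictive probability of `symbol` in `context`.
        c = self.counts[context]
        total = sum(c.values())
        return (c[symbol] + self.alpha) / (total + self.alpha * len(self.alphabet))

    def _weight(self, context):
        # Deepest contexts always stop, so the walk terminates.
        if len(context) == self.depth:
            return 1.0
        return self.stop.get(context, self.prior_stop)

    def predict(self, history, symbol):
        """Mixture predictive probability psi(symbol | history)."""
        q = 0.0
        for context in reversed(self._path(history)):  # deepest first
            w = self._weight(context)
            q = w * self._local(context, symbol) + (1.0 - w) * q
        return q

    def update(self, history, symbol):
        """Observe `symbol` after `history`; return its predictive probability."""
        q, terms = 0.0, {}
        for context in reversed(self._path(history)):  # deepest first
            w = self._weight(context)
            phi = self._local(context, symbol)
            q = w * phi + (1.0 - w) * q
            terms[context] = (w, phi, q)
        # Update stop weights by Bayes' rule, then the Dirichlet counts.
        for context, (w, phi, q_k) in terms.items():
            if len(context) < self.depth:
                self.stop[context] = w * phi / q_k
            self.counts[context][symbol] += 1.0
        return q


model = VariableOrderMarkov("ab", depth=2)
seq = "ab" * 8
for t, s in enumerate(seq):
    model.update(seq[:t], s)
print(model.predict(seq, "a"))
```

After the alternating sequence, the root context, which sees equal counts of `a` and `b`, loses almost all of its posterior stopping weight. The prediction after `...ab` is therefore dominated by the deeper contexts, which have only ever seen `a` follow `b`.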

The paper situates its contribution among related work on tree‑based density estimation, kernel conditional density methods, and incremental probability estimation. Unlike kernel approaches, the proposed method maintains a full posterior distribution over models, enabling principled uncertainty quantification and online learning. Compared with earlier tree‑based Bayesian density estimators, it extends the idea to conditional settings and to arbitrary cover structures beyond simple partitions.

In summary, the authors present a flexible, theoretically grounded framework that unifies and generalizes several existing non‑parametric Bayesian models. By representing context selection as a terminating random walk over a sequence of covers, they achieve exact, closed‑form, and computationally efficient inference for conditional measures, opening new possibilities for online, non‑parametric Bayesian learning in a variety of domains.
