On the correlation between bibliometric indicators and peer review: Reply to Opthof and Leydesdorff

Opthof and Leydesdorff [arXiv:1102.2569] reanalyze data reported by Van Raan [arXiv:physics/0511206] and conclude that there is no significant correlation between average citation scores, as measured by the CPP/FCSm indicator, and the quality judgment of peers. We point out that Opthof and Leydesdorff draw their conclusions from a very limited amount of data. We also criticize the statistical methodology they use. Using a larger amount of data and a more appropriate statistical methodology, we do find a significant correlation between the CPP/FCSm indicator and peer judgment.

💡 Research Summary

The paper by Waltman et al. is a methodological rebuttal to the claim made by Opthof and Leydesdorff (2011) that there is no significant correlation between the CPP/FCSm citation indicator and peer‑review quality judgments. Opthof and Leydesdorff based their conclusion on a very limited subset of the data originally reported by Van Raan (2006): they used only the summary values presented in two tables, which amount to 12 observations for the first hypothesis and a coarse three‑category version of CPP/FCSm for the second. This severe reduction of information, the authors argue, undermines any reliable statistical inference.

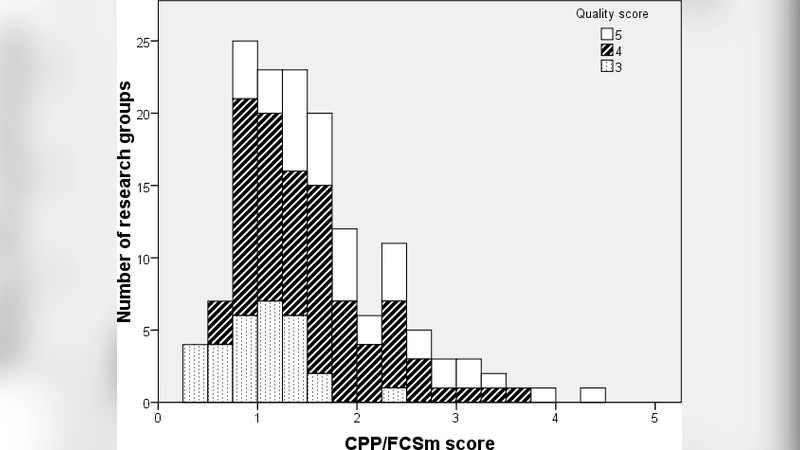

Waltman et al. therefore retrieve the full dataset used by Van Raan, which comprises 147 Dutch chemistry and chemical‑engineering research groups. For each group the CPP/FCSm score (average citations per publication, field‑normalized) and a peer‑review quality score on a five‑point scale (3 = satisfactory, 4 = good, 5 = excellent) are available. No group received a score of 1 or 2, and the distribution of quality scores is 30 groups with 3, 78 with 4, and 39 with 5.

The authors first present descriptive statistics: the mean CPP/FCSm values increase monotonically with quality score (1.02 for score 3, 1.55 for score 4, and 1.99 for score 5). The differences between adjacent quality categories are substantial: the mean difference between scores 5 and 4 is 0.44 (95 % bootstrap confidence interval 0.15–0.74), and between scores 4 and 3 is 0.53 (95 % CI 0.31–0.73). Both intervals exclude zero, so the differences are statistically significant. They are also substantively meaningful: groups judged “excellent” have on average about 28 % higher citation impact than those judged merely “good” (1.99 versus 1.55).
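To make the interval estimates above more concrete, the following is a minimal sketch of a percentile bootstrap confidence interval for a difference in group means. The summary does not say which bootstrap variant the authors used, so the percentile method is an assumption, and the data are synthetic placeholders rather than Van Raan's data. The function name `bootstrap_mean_diff_ci` and all parameters are hypothetical.

```python
import random
from statistics import mean


def bootstrap_mean_diff_ci(a, b, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for mean(a) - mean(b).

    Each group is resampled independently with replacement.
    """
    rng = random.Random(seed)
    diffs = []
    for _ in range(n_boot):
        resample_a = rng.choices(a, k=len(a))
        resample_b = rng.choices(b, k=len(b))
        diffs.append(mean(resample_a) - mean(resample_b))
    diffs.sort()
    lo = diffs[int((alpha / 2) * n_boot)]
    hi = diffs[int((1 - alpha / 2) * n_boot) - 1]
    return mean(a) - mean(b), lo, hi


# Synthetic placeholder data (NOT the real CPP/FCSm values): only the group
# sizes, 39 "excellent" and 78 "good" groups, are taken from the summary.
data_rng = random.Random(42)
excellent = [data_rng.lognormvariate(0.55, 0.5) for _ in range(39)]
good = [data_rng.lognormvariate(0.30, 0.5) for _ in range(78)]

diff, lo, hi = bootstrap_mean_diff_ci(excellent, good)
print(f"mean difference = {diff:.2f}, 95% CI = ({lo:.2f}, {hi:.2f})")

# Relative difference implied by the reported group means (1.99 and 1.55).
ratio = 1.99 / 1.55
print(f"excellent / good = {ratio:.2f}")
```

If the resulting interval excludes zero, the difference in means is significant at the corresponding level. The interval's width also conveys how precisely the difference is estimated, which a bare p-value does not.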

To quantify the strength of the relationship, the authors compute a Spearman rank correlation of 0.45 (95 % CI 0.31–0.57) and a modified Kendall’s τ (Adler, 1957) of 0.46 (95 % CI 0.32–0.59). Both measures point to a moderate positive association, contradicting Opthof and Leydesdorff’s first conclusion that no correlation exists.
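As an illustration of how such a rank correlation and its interval can be obtained, here is a hedged sketch of a Spearman coefficient with tie handling (tied values share their average rank) and a percentile bootstrap over groups. It does not implement the modified Kendall's τ, and it runs on synthetic data that copy only the reported score distribution (30, 78, and 39 groups). The helper names and the log-normal data model are assumptions, so the printed values will not match those in the paper.

```python
import random


def average_ranks(values):
    """Ranks starting at 1; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def bootstrap_ci(x, y, stat, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap CI, resampling (x, y) pairs with replacement."""
    rng = random.Random(seed)
    n = len(x)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        stats.append(stat([x[i] for i in idx], [y[i] for i in idx]))
    stats.sort()
    return stats[int(alpha / 2 * n_boot)], stats[int((1 - alpha / 2) * n_boot) - 1]


# Synthetic placeholder data: the score counts follow the summary, while the
# CPP/FCSm values are drawn from an assumed log-normal model.
data_rng = random.Random(7)
scores = [3] * 30 + [4] * 78 + [5] * 39
log_means = {3: 0.0, 4: 0.3, 5: 0.55}
cpp_fcsm = [data_rng.lognormvariate(log_means[s], 0.5) for s in scores]

rho = spearman(cpp_fcsm, scores)
ci_lo, ci_hi = bootstrap_ci(cpp_fcsm, scores, spearman)
print(f"Spearman rho = {rho:.2f}, 95% CI = ({ci_lo:.2f}, {ci_hi:.2f})")
```

Because the quality score takes only three distinct values, ties are unavoidable, which is why the ranks are averaged rather than assigned arbitrarily.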

The paper also critiques the methodological focus of Opthof and Leydesdorff on null‑hypothesis significance testing. Waltman et al. argue that with a sufficiently large sample, any non‑zero relationship will eventually become statistically significant; what matters more is the magnitude of the effect and its practical relevance. By using confidence intervals, effect‑size measures, and preserving the continuous nature of the CPP/FCSm variable, the authors provide a more nuanced assessment.
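The point about sample size can be illustrated with a small calculation. This sketch uses the large-sample Fisher z-approximation for testing a zero correlation, an assumption chosen for simplicity rather than a method taken from the paper. It holds a weak correlation of 0.05 fixed and varies only the sample size; the function name `approx_p_value` is hypothetical.

```python
from math import atanh, sqrt
from statistics import NormalDist


def approx_p_value(r, n):
    """Two-sided p-value for H0: correlation = 0.

    Uses the Fisher z-transformation, a large-sample normal approximation.
    """
    z = atanh(r) * sqrt(n - 3)
    return 2 * (1 - NormalDist().cdf(abs(z)))


# The same weak correlation, evaluated at increasing sample sizes.
weak_r = 0.05
p_values = {n: approx_p_value(weak_r, n) for n in (100, 1_000, 10_000)}
for n, p in p_values.items():
    print(f"n = {n:>6}: p = {p:.2g}")
```

The effect size is identical in all three cases, yet the p-value falls from clearly non-significant to highly significant as n grows. This is why the effect size and its confidence interval are more informative than the outcome of a significance test alone.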

Finally, the authors discuss the broader context of comparing citation‑based metrics with peer review. They note that peer review itself suffers from reliability issues and various biases, as documented in the literature (e.g., Bornmann, 2011). Consequently, discrepancies between bibliometric indicators and peer judgments cannot automatically be interpreted as failures of the indicators; they may also reflect limitations of the peer‑review process.

In summary, Waltman et al. demonstrate that, when the full dataset and appropriate statistical techniques are employed, the CPP/FCSm indicator correlates moderately but significantly with peer‑review quality judgments. Their analysis refutes the claim that the indicator cannot distinguish “good” from “excellent” research and underscores the importance of using robust methods and recognizing the inherent imperfections of both citation metrics and peer review in research evaluation.
