Adaptive compressed sensing - a new class of self-organizing coding models for neuroscience

Sparse coding networks, which utilize unsupervised learning to maximize coding efficiency, have successfully reproduced response properties found in primary visual cortex \cite{AN:OlshausenField96}. However, conventional sparse coding models require that the coding circuit can fully sample the sensory data in a one-to-one fashion, a requirement not supported by experimental data from the thalamo-cortical projection. To relieve these strict wiring requirements, we propose a sparse coding network constructed by introducing synaptic learning in the framework of compressed sensing. We demonstrate that the new model evolves biologically realistic spatially smooth receptive fields despite the fact that the feedforward connectivity subsamples the input and thus the learning has to rely on an impoverished and distorted account of the original visual data. Further, we demonstrate that the model could form a general scheme of cortical communication: it can form meaningful representations in a secondary sensory area, which receives input from the primary sensory area through a “compressing” cortico-cortical projection. Finally, we prove that our model belongs to a new class of sparse coding algorithms in which recurrent connections are essential in forming the spatial receptive fields.

💡 Research Summary

The paper addresses a fundamental mismatch between classic sparse‑coding models of visual cortex and the anatomical reality of thalamo‑cortical projections. Traditional sparse coding assumes a one‑to‑one mapping between every pixel of an input image and a neuron in the coding layer, allowing the network to directly maximize a sparsity‑constrained reconstruction objective. However, electrophysiological and anatomical studies show that the thalamic input to cortex is highly subsampled: each cortical neuron receives only a fraction of the visual field, and the projection is far from a full raster. This discrepancy limits the explanatory power of conventional models.

To reconcile efficient coding with limited connectivity, the authors embed sparse coding within the compressed‑sensing (CS) framework and endow the CS measurement matrix with biologically plausible, learnable synaptic weights. The model consists of two coupled stages. In the first stage, an encoder matrix Φ projects the high‑dimensional stimulus x∈ℝⁿ onto a low‑dimensional measurement y=Φx, where the dimensionality reduction factor m/n can be as low as 0.2–0.4, mimicking the sparse thalamic sampling. Unlike classical CS, Φ is not a fixed random matrix; it is initialized randomly but subsequently adapted through gradient‑based learning driven by the statistics of natural images.

The second stage implements a sparse decoder that seeks a coefficient vector a that both reconstructs the compressed measurement and remains sparse. The objective function combines a reconstruction term ‖y‑ΦDa‖₂² with an L₁ sparsity penalty λ‖a‖₁, where D is a dictionary of basis functions that is also learned. Crucially, because the measurement y discards information, a purely feed‑forward solution cannot reliably recover the original structure. The authors therefore introduce recurrent (feedback) connections that feed the current estimate of a back into the encoder‑decoder loop. This recurrent pathway enables the system to iteratively refine both Φ and D while simultaneously updating a, effectively performing an alternating minimization that respects the CS constraints. The authors formalize this process using a Lagrangian formulation and a variational‑Bayes interpretation, and they prove convergence under mild conditions.

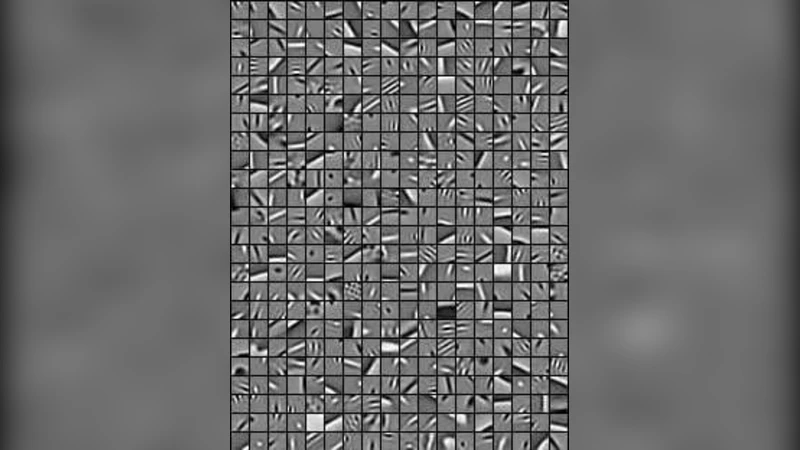

Empirical validation is carried out in three complementary scenarios. First, a V1‑like network is trained on natural image patches with only 20–40 % of the possible feed‑forward synapses present. Despite this severe subsampling, the learned receptive fields converge to smooth, Gabor‑like filters that exhibit continuous variations in orientation, spatial frequency, and phase—features that closely resemble those observed in primary visual cortex. Second, the authors construct a hierarchical “compressing” cortico‑cortical projection: the sparse code a generated by the V1 layer is again compressed by a second encoder Φ₂ and fed to a V2‑like layer that learns its own dictionary D₂ and recurrent dynamics. The V2 layer successfully extracts higher‑order features (e.g., curvature, texture) from the already compressed signal, demonstrating that meaningful representations can be propagated through successive compressed projections, a scenario that mirrors inter‑areal communication in the brain. Third, an ablation study removes the recurrent connections. In this condition, receptive fields become fragmented, learning slows dramatically, and the network often fails to converge, confirming that feedback is essential for compensating the distortion introduced by subsampling.

The authors argue that their architecture defines a new class of sparse‑coding algorithms in which recurrent circuitry is not an optional embellishment but a necessary component for forming spatially coherent receptive fields under compressed input. By integrating CS theory with biologically realistic synaptic plasticity, the model offers a mechanistic account of how cortical circuits could achieve efficient, sparse representations despite wiring constraints. Moreover, the framework suggests practical avenues for artificial systems: it enables memory‑efficient, robust feature extraction in hardware‑constrained environments where only a subset of sensor data can be processed at any given time. In sum, the paper bridges a critical gap between theoretical optimal coding and the anatomical realities of cortical connectivity, providing both a compelling neuroscientific hypothesis and a versatile computational tool.

Comments & Academic Discussion

Loading comments...

Leave a Comment