Optimal Camera Placement to Measure Distances Conservatively Regarding Static and Dynamic Obstacles

In modern production facilities industrial robots and humans are supposed to interact sharing a common working area. In order to avoid collisions, the distances between objects need to be measured conservatively which can be done by a camera network.…

Authors: Maria Hänel, Stefan Kuhn, Dominik Henrich

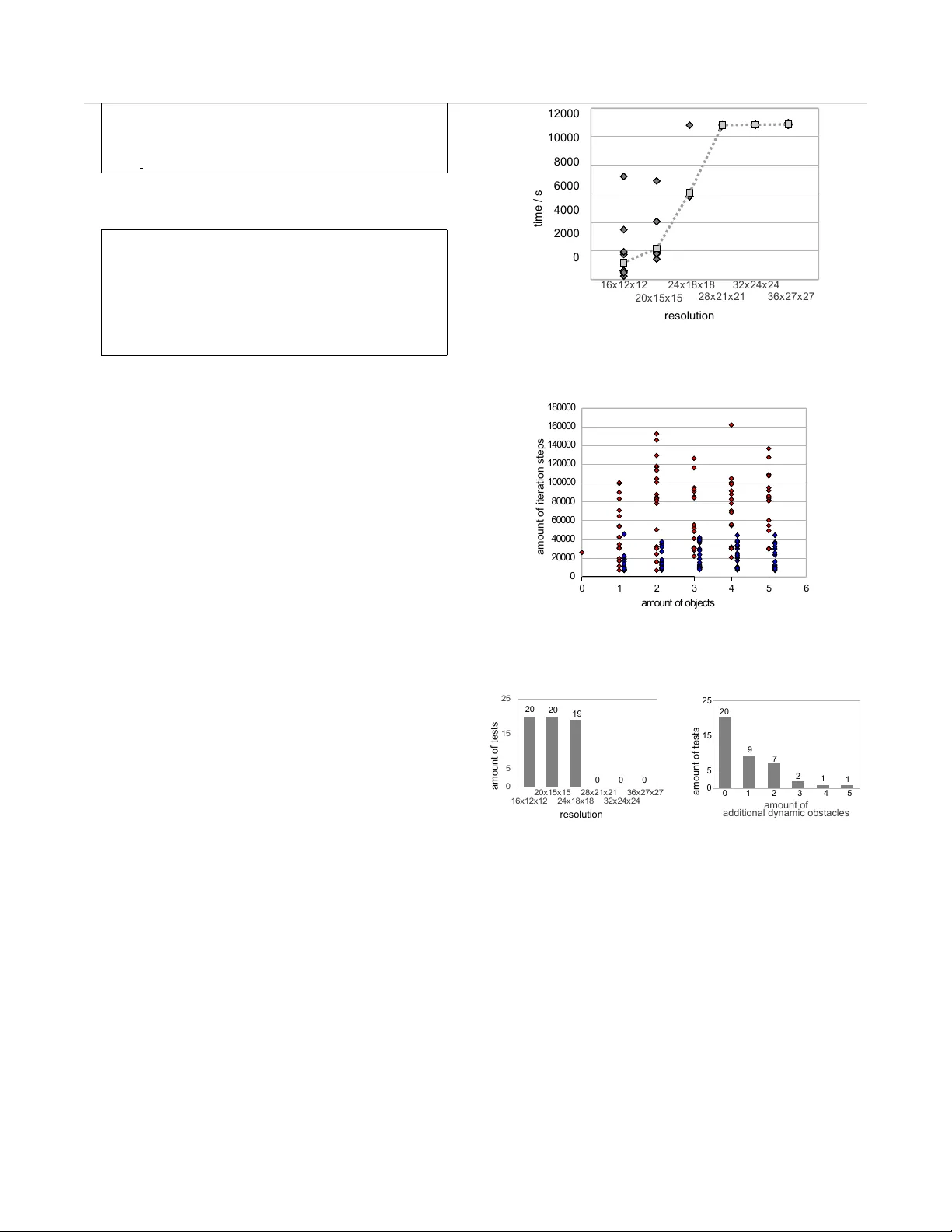

M. Hänel, S. Kuhn and D. Henrich
Angewandte Informatik III, Universität Bayreuth, 95440 Bayreuth, Germany
{maria.haenel, stefan.kuhn, dominik.henrich}@uni-bayreuth.de

J. Pannek and L. Grüne
Lehrstuhl für Angewandte Mathematik, Universität Bayreuth, 95440 Bayreuth, Germany
{juergen.pannek, lars.gruene}@uni-bayreuth.de

Abstract: In modern production facilities, industrial robots and humans are supposed to interact while sharing a common working area. In order to avoid collisions, the distances between objects need to be measured conservatively, which can be done by a camera network. To estimate the acquired distance, unmodelled objects, e.g., an interacting human, need to be modelled and distinguished from premodelled objects like workbenches or robots by image processing such as the background subtraction method. The quality of such an approach depends massively on the settings of the camera network, that is, the positions and orientations of the individual cameras. Of particular interest in this context is the minimization of the distance error that arises from using the objects modelled by the background subtraction method instead of the real objects. Here, we show how this minimization can be formulated as an abstract optimization problem. Moreover, we state various aspects of the implementation as well as reasons for the selection of a suitable optimization method, analyze the complexity of the proposed method and present a basic version used for extensive experiments.

Index Terms: Close range photogrammetry, optimization, camera network, camera placement, error minimization

I. INTRODUCTION

Nowadays, human/machine interaction is no longer restricted to humans programming machines and operating them from outside their working range.
Instead, one tries to increase the efficiency of such a cooperation by allowing both actors to share the same working area. In such a context, safety precautions need to be imposed to avoid collisions, i.e., the distance between human and machine interacting in a common area needs to be reconstructed continuously in order to detect critical situations. To this end, usually a network of cameras is installed to, e.g., ensure that every corner of the room can be watched, every trail can be followed or every object can be reconstructed correctly. Within this work, we focus on computing an optimal configuration of the camera network in order to measure the distances as correctly as possible but still conservatively.

After a brief review of previous results concerning the predescribed distance measurement, we show how an unmodelled (human) object can be contoured by a 3D background subtraction method. We extend this scheme to cover both static and dynamic obstacles, some of which are modelled in advance but still occlude the vision of the sensor. In Section III, we rigorously formulate the problem of minimizing the error made by using the associated model instead of the original collective of unmodelled objects. Considering the implementation of a solution method, we discuss various difficulties such as, e.g., the evaluation of the intersection of the cones corresponding to each camera in Section IV and also give an outline of concepts to work around these issues. In the final Sections V and VI, we analyze the complexity of our basic implementation by a series of numerical experiments and conclude the article by giving an outlook on methods to further improve the proposed method.

This work is part of the project SIMERO 2 and is supported by Deutsche Forschungsgemeinschaft. Jürgen Pannek was partially supported by DFG Grant Gr1569/12-1 within the priority research program 1305.

II. STATE OF THE ART

Many camera placement methods have to deal with a trade-off between the quality of observations and the quantity of pieces of information which are captured by the cameras. The latter aspect is important for camera networks which have to decide whether an item or an action has been observed. There have been investigations about how to position and orientate cameras subject to observing a maximal number of surfaces [8] and different courses of action [1,5,6] as well as maximizing the volume of the surveillance area [14] or the number of objects [11]–[13]. Another common goal in this context is to be able to observe all items of a given set but minimize the number of cameras, in addition to obtaining their positions and orientations [4,11]–[13]. This issue is called the "Art Gallery Problem", especially when speaking of two-dimensional space.

Apart from deciding whether an object has been detected by a camera network, another task is to obtain detailed geometrical data of the observed item like its position and measurements of its corners, curves, surfaces, objects etc. As described in [10], determining this information for distances smaller than a few hundred meters by cameras belongs to the field of 'close range photogrammetry'. In order to configure a camera network to cope with such tasks, one usually minimizes the error of observed and reconstructed items. Often the phrase 'Photogrammetric Network Design' is used to express minimizing the reconstruction error for several (three-dimensional) points. The default assumption in this context, however, is that no occlusions occur, cf. [7,16]–[19] for details. Optimally localizing an entire object which is not occluded is an assignment treated in [3]. Furthermore, many approaches compensate for the increasing complexity of the problem by oversimplifying matters: One common approach is to restrain the number of cameras (in [7] two cameras are used) or their position and orientation.
Considering the latter, known approaches are the viewing sphere model given in [18,19] or the idea of situating all cameras on a plane and orientating them horizontally, cf. [3].

In contrast to these approaches, we discuss optimizing positions and orientations of cameras in a network in the context of the background subtraction method which is used to determine a visual hull of a solid object. By means of this visual hull, distances can be computed easily, which renders this approach a different simplification. Occlusions of solids to be reconstructed obscure the view and enlarge the visual hull. In order to get the minimal error of the construction of the hull, [23] assumes that minimizing the occurring occlusions of solids also reduces, and thus specifies, their possible locations. However, neither obstacles nor opening angles other than $\pi$ are discussed in [23], and additionally the orientation of the camera is neglected as a variable since it is simply orientated towards the object. In [2], static obstacles are considered but the cameras are chosen out of a preinstalled set.

Since we are not interested in optimizing the quantity of observed objects but the quality of data, our approach differs from most of the discussed results. Note that the quality of information can be obtained by various types of image processing. Here, we consider the background subtraction method to obtain a visual hull of a given object. Within our approach, we optimize the positions and orientations of a fixed number of cameras so as to minimize the error that is made by evaluating distances to the visual hull. In contrast to existing results, our goal is to incorporate the aspects of occurring static or dynamic obstacles into our calculations but also to exploit all degrees of freedom available in an unconstrained camera network. Nevertheless, distances are to be evaluated conservatively.

III. VISIBILITY ANALYSIS

Within this section, we show how the objective function depends on the cameras' positions and orientations; to this end, we successively build up a mathematical representation of the optimization problem. We start off by defining the critical area as well as the unmodelled objects to be reconstructed and the corresponding abstract models in Section III-A. This will allow us to formally state the objective function which we aim to minimize. In the following Section III-B, we define the camera network and its degrees of freedom, i.e. the position and orientation of each camera. These degrees of freedom allow us to parametrize the model of the object to be reconstructed. Additionally, this tuple of degrees of freedom will serve as the optimization variable in the minimization problem stated in Section III-C. To cover all possible scenarios, this problem is extended by incorporating both static and dynamic obstacles as well as an evolving time component.

A. Formalizing the Problem

Let $U \subset \mathbb{R}^3$ be a spatial area based on which information about humans, perils, obstacles and also cameras can be given. Consider $S \subset U$ to be the surveillance area, where critical points of the set $C \subset S$ as well as objects, such as a human or a robot, are monitored. For the moment, we neglect obstacles completely and just distinguish two types of objects in order to explain the basic idea of reconstructing an object by means of a camera network: If a detailed model of an object exists describing its appearance, like location, shape, color or else, the object is called modelled. If this is not the case, the object is called unmodelled. This is motivated by the following scenario: If humans move unpredictably within the surveillance area, i.e. without a given route, their appearance is unmodelled and needs to be reconstructed to be used for further calculations. The model of an unmodelled object can be reconstructed by means of a camera network.
Therefore, let $O_u(a) \subset S$ be the complete set of points included in one or more unmodelled objects, depending on the appearance of the unmodelled objects specified by the parameter $a \in \mathbb{R}^k$. We refer to these objects as the unmodelled collective. Since automatically placing the cameras for such a scenario is incomputable without information on the unmodelled collective, we impose the assumption that the distribution $P : 2^{\mathbb{R}^k} \to [0,1]$ of the appearance $a \in \mathbb{R}^k$ is known.

As the safety of a human being must be guaranteed in any case, the distance

$$d(C, O_u(a)) := \min \{\, d(x, y) \mid x \in C,\ y \in O_u(a) \,\}$$

has to be computed conservatively, and security measures need to be taken if the unmodelled collective $O_u(a)$ approaches the critical points $C$. Here $d(\cdot,\cdot)$ denotes a standard distance function. If the exact set $O_u(a)$ were known, this distance could be evaluated easily. As we do not directly know the value of $a \in \mathbb{R}^k$ and therefore can only guess the points that are included in $O_u(a)$, we need to approximate the collective by a (consequently also conservative) model $M(a) \subset S$, see Fig. 1.

Fig. 1. Surveillance area $S$: distance between critical points $C$ and unmodelled collective (black) and distance to the approximated model (green).

Note that for now $M(a)$ is an abstract approximation of $O_u(a)$ with respect to the parameter $a$ only. In order to actually compute $M(a)$, a sensor network and its degrees of freedom come into play, see Section III-B for details. Still, the abstract approximation allows us to formalize our overall task, i.e. to minimize the difference between the approximation-based distance $d(C, M(a))$ and the real distance $d(C, O_u(a))$. Taking the assumed distribution of the parameter $a$ into account, we aim to minimize the functional

$$\int_{a \in \mathbb{R}^k} \big[\, d(C, O_u(a)) - d(C, M(a)) \,\big]^2 \, dP(a). \tag{1}$$

Note that for the optimization we need to be aware of the possible appearances of the object in order to let the integral pass through their space $a \in \mathbb{R}^k$. Thus, all appearances $a \in \mathbb{R}^k$ of the unmodelled collective should be known.

B. Building a Model with the Camera Network

In the previous section we saw that in order to evaluate the functional (1), a model $M(a)$ of the unmodelled collective $O_u(a) \subset U$ is required. To obtain such a model, we impose a camera network $N$ consisting of $n \in \mathbb{N}$ cameras. Each camera can be placed and orientated with a setting from

$$E = U \times [-\pi, \pi] \times \left[-\tfrac{\pi}{2}, \tfrac{\pi}{2}\right].$$

Here, the first term corresponds to the position of the camera whereas the second and third denote the angles 'yaw' and 'pitch', respectively. For simplicity of exposition, we exclusively considered circular cones in our implementation, which allowed us to neglect the angle 'roll' as a degree of freedom in the setting of a single camera. Hence, each camera exhibits five degrees of freedom, three for its position and two for its orientation. Thus, each camera can be regarded as a tuple $(e, p) \in E \times U$, whereas its produced output regarding the parameter $a \in \mathbb{R}^k$ of the unmodelled collective is a function

$$\kappa : E \times U \times \mathbb{R}^k \to V, \qquad (e, p, a) \mapsto \kappa_{e,a}(p),$$

that is, given the setting $e \in E$ and the appearance of the unmodelled collective $a \in \mathbb{R}^k$, each point $p \in U$ is mapped onto a sensor value $v \in V$ where

$$V := \{\text{free}, \text{occupied}, \text{undetectable}\}.$$

This set is adjusted to the evaluation of the network's images by the change detection method (e.g. background subtraction). The sensor value $\kappa_{e,a}(p)$ of a point $p \in U$ is free if this point is perceived as not being part of the unmodelled collective. The value occupied represents the possibility that the point could be part of the unmodelled collective (i.e. the point might be occupied by the collective). If the sensor cannot make the decision, e.g. for cameras that cannot 'see' behind walls, the value is undetectable. Obstacles like walls will be discussed in Section III-C.

To obtain the values of the set $V$, one could apply the method of background subtraction, which is discussed in detail in [9]. Although our method is not restricted to the pixel model considered in [9], the idea of that work remains the same. Thus, we only provide the above formalization of the values in order to explain their role in building the model of an unmodelled collective. According to the definition of the set $V$, each camera splits the set $U$ into three different subsets:

$$P_f(e, a) = \{ u \in U \mid \kappa_{e,a}(u) \mathrel{\widehat{=}} \text{'free'} \}$$
$$P_{oc}(e, a) = \{ u \in U \mid \kappa_{e,a}(u) \mathrel{\widehat{=}} \text{'occupied'} \}$$
$$P_{nd}(e, a) = \{ u \in U \mid \kappa_{e,a}(u) \mathrel{\widehat{=}} \text{'undetectable'} \}$$

We state here without proof that we have constructed these parts to be a partition of $U$ into pairwise disjoint sets, i.e.

$$U = P_f(e, a) \cup P_{oc}(e, a) \cup P_{nd}(e, a)$$

with

$$P_f(e, a) \cap P_{oc}(e, a) = P_f(e, a) \cap P_{nd}(e, a) = P_{oc}(e, a) \cap P_{nd}(e, a) = \emptyset.$$

The unmodelled collective $O_u(a)$ cannot be situated inside $P_f(e, a)$; all we know is

$$O_u(a) \subset P_{oc}(e, a) \cup P_{nd}(e, a) = U \setminus P_f(e, a).$$

Since this inclusion holds for the parameter $a \in \mathbb{R}^k$ and one camera with setting $e \in E$, the following is true if we consider a camera network $N$ consisting of $n$ cameras with settings $e_i$, $i = 1, \dots, n$:

$$O_u(a) \subset \big(U \setminus P_f(e_1, a)\big) \cap \dots \cap \big(U \setminus P_f(e_n, a)\big) = U \setminus \big(P_f(e_1, a) \cup \dots \cup P_f(e_n, a)\big).$$

Note that this set would already be a good approximation of the unmodelled collective if we considered the entire set $U$. However, as we only monitor the surveillance area $S$, we define the desired model $M(a)$ of the unmodelled collective $O_u(a)$ as the intersection with the set $S$, i.e.,

$$M(a) \equiv M(a, e_1, \dots, e_n) := S \cap \Big( U \setminus \big(P_f(e_1, a) \cup \dots \cup P_f(e_n, a)\big) \Big). \tag{2}$$

This is the basic model that can be used to calculate Formula (1). In the following, we will extend our setting to incorporate a time dependency and to cover different types of obstacles.

C. Adding Time and Obstacles

So far, we have only considered a static scene to be analyzed. Motivated by moving objects, we extend our setting by introducing a time dependency to the process under surveillance. Therefore, we declare the time interval of interest $I = [t_0, t^*]$, in which $t_0$ denotes the moment the reference image is taken and $t^*$ corresponds to the last instant at which the surveillance area ought to be observed. Thus, the unmodelled collective $O_u(a(t))$, its probability distribution $P(a(t))$ and its approximation $M(a(t)) \subset S$ as well as the set of critical points $C(t)$ change in time $t \in I$. As a simple extension of (1) we obtain the time-dependent error functional

$$\int_{t_0}^{t^*} \int_{a(t) \in \mathbb{R}^k} \big[\, d(C(t), O_u(a(t))) - d(C(t), M(a(t), e_1, \dots, e_n)) \,\big]^2 \, dP(a(t)) \, dt. \tag{3}$$

In a second step, we add some more details to the scene under surveillance. To this end, we specify several categories and properties of objects $O \subset S$ which we are particularly interested in and which affect the reconstruction of the current scene. Right from the beginning we have considered unmodelled objects. In contrast to modelled objects, these objects need to be reconstructed in order to track them. In the following, we additionally distinguish objects based on the characteristics "static/dynamic", "target/obstacle" and "rigid/nonrigid", neglecting those objects that cannot be noticed by the sensors (like a closed glass door for cameras without a distance sensor).

We define a target $T \subset O$ of a sensor network as an object which ought to be monitored and, in our case, reconstructed. An object $B \subset O$ which is not a target is called an obstacle.
Furthermore, we distinguish obstacles based on their physical character: An obstacle $B$ features a rigid nature (like furniture) if the impenetrability condition $T \cap B^r = \emptyset$ holds, and is denoted by the index $r$ in $B^r$.

The method proposed in [9] constructs a visual hull of an object by background subtraction, i.e. via change detection. In the context of change detection methods, another characteristic of objects is relevant: A static object is an object $O_s \subset O$ which is known to affect the given sensors in the same way at any time. If this is not the case, it is called dynamic, which we indicate by adding a subscript and a time dependency, $O_d(t)$. More specifically, within the proposed background subtraction method the value of each pixel of a current image is subtracted from its counterpart within the reference image which has been taken beforehand. Thus, any change (like size/color/location) occurring after the reference image has been taken leaves a mark on the subtracted image, i.e., if the scene consists of static objects only, then the subtracted image is blank. For this reason, static objects must be placed in the scene before the reference picture is taken, and dynamic objects must not.

Within the rest of this work, we consider all unmodelled objects to be reconstructed, i.e. in (3) we have

$$O_u(a(t)) := T(a(t)). \tag{4}$$

Consequently, the unmodelled collective and its distance to the critical points are dynamic targets. Thus, we always consider an obstacle to be a modelled obstacle since all our unmodelled objects are targets. Furthermore, all obstacles are considered rigid. To formalize the human-robot scene, let $B^r_s \subset O$ and $B^r_d(t) \subset O$ be the collective of static and of dynamic obstacles with time $t \in [t_0, t^*]$, respectively. We incorporate these new aspects into the model of the unmodelled collective in (3) by intuitively extending our notation to

$$M(a(t), e_1, \dots, e_n) := M(a(t), e_1, \dots, e_n, B^r_s, B^r_d(t)). \tag{5}$$

Lastly, as a robot is a dynamic obstacle in addition to being a security threat (e.g. when moving too fast), we define the critical points in (3) as the collective of dynamic obstacles

$$C(t) := B^r_d(t). \tag{6}$$

Note that there are dynamic obstacles next to dynamic targets, i.e. the unmodelled collective. Thus, a dynamic obstacle could easily be regarded as an object of the unmodelled collective since both evoke similar reactions of the change detection method. In our approach the obstacles are fully modelled and thus define a target-free zone since they are physical obstacles. Still, inaccuracies of the change detection accidentally leave fragments outside the dynamic obstacle, in our case outside the critical points. As a consequence, the required distance between critical points and target is reduced to zero. Publication [9] solves this issue by introducing plausibility checks, in which predicates that characterize the target (like volume, height, etc.) are used to sort out the fragments.

In conclusion, our aim is to solve the problem

Minimize (3) using definitions (4), (5) and (6)
subject to $e_1, \dots, e_n \in E$,

i.e., to compute the optimal positions of $n$ cameras with settings $e_1, \dots, e_n$ such that the measurement error is minimized.

IV. ASPECTS OF OPTIMIZATION

There are various ways to evaluate Equation (3); they differ in how the model is represented, how the integral is solved and how the optimization is solved, as discussed in the following.

A. Discretization of time and distribution

We would first like to state that the distance $d(C, M(a, \dots))$ between the model and another set does not need to be continuous at every appearance $a$, even if the distance $d(C, O_u(a))$ to the unmodelled collective is continuous at $a$. This point can also be made for Equation (3), but we stick to Equation (1) for reasons of simplicity. Such a case is illustrated in Fig. 2.
As the original unmodelled collective $O_u(a)$ of the appearance $a \in \mathbb{R}^k$ does not necessarily need to be convex or even connected, given the settings $e_i \in E$, $i = 1, \dots, n$, the non-free parts of the sensors $U \setminus P_f(e_i, a)$ do not need to be connected, either. The model is constructed as an intersection of these parts (see Equation (2)). But, as intersections of disconnected parts do not need to be continuous in $a \in \mathbb{R}^k$ (e.g. with respect to Hausdorff metrics), the distance $d(C, M(a, \dots))$ between the model and another set does not need to be continuous at every appearance $a$.

Fig. 2. Discontinuity of the distance between perilous points $C$ and the approximated model, consisting of an intersection of the non-free part of camera 1, $U \setminus P_f(e_1, a)$ (green), and the non-free part of camera 2, $U \setminus P_f(e_2, a)$ (orange).

Since only integrals with continuous integrands can generally be calculated as a whole, and all others need to be split, such a discontinuous function becomes a problem when it is the integrand as in Formula (1). In our case a point of discontinuity of the distance as a function of $a$ cannot be derived easily, as it would have to be extracted from an individual, unrelated analysis depending not only on $a$ or $t$ but also on the sensor settings $e_i$, $i = 1, \dots, n$. While in simple cases this is possible, we spare ourselves such an analysis by discretizing appearance and time. Here, just the $l = 1, \dots, L$ most important appearances of the unmodelled collective $a_l \in \mathbb{R}^k$ and the $h = 1, \dots, H$ most important time steps $t_h \in [t_0, t^*]$ with $t_0 = t_1$ and $t^* = t_H$ are modelled, together with their weights $\omega_{l,h} = P(a_l(t_h)) \in [0, 1]$. Accordingly, the following weighted sum approximates the integral of Formula (3):

$$\mathrm{Err}_{L,H}(e_1, \dots, e_n) = \sum_{h=1}^{H} \sum_{l=1}^{L} \omega_{l,h} \cdot \Big[\, d\big(C(t_h), O_u(a_l(t_h))\big) - d\big(C(t_h), M(a_l(t_h), e_1, \dots, e_n, t_h)\big) \,\Big]^2 \tag{7}$$

B. Discretizing space by voxels

The next challenge, building an intersection of (free-form) solids, has been a subject of discussion for more than a quarter of a century and still is an issue of recent investigations. The publication [22] describes three main areas of solving this issue depending on the representation of the solids, each with its pros and cons.

Solids represented by polygonal meshes can be intersected by exact arithmetic and interval computation, checking surface membership afterwards. The major concerns of this approach are robustness and efficiency (e.g., while intersecting two tangentially connected polyhedra/polygons, inside-out facets are computed). Approximate methods (e.g. applying exact methods to a rough mesh of the solids and refining the result) exist for meshes, too. Robustness problems (constructing breaks in the boundary) are in this case compensated by time-consuming perturbation methods or interdependent operations which prevent parallel computing.

There are also techniques for solids transferred to image space (ray representation). While many of these mainly help rendering rather than evaluating the boundary, there are some that can be applied to intersection purposes (Layered Depth Image). Unfortunately, when converting these representations back into meshes, many geometric details are destroyed.

Losing geometric details is also the case for volumetric approaches. Converting surfaces with sharp corners and edges into volumetric data (like voxels) without losing data for reconstruction purposes is a challenging task even with oversampling. This also holds true for a voxel representation, but voxels on the other hand are easily obtained and can be checked robustly by Boolean operations. In addition, we need a data structure for which distances and volumes are calculated easily, properties which are ensured for voxels.
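To make the voxel argument concrete, the following is a minimal sketch of how the model of Equation (2) and the weighted sum of Equation (7) could be evaluated on a voxel grid. It is an illustration under stated assumptions, not the implementation used for the experiments: the function names, the two half-space "cameras" and the single critical point are hypothetical, the grid covers only the surveillance area, and distances are measured to voxel centers.

```python
from itertools import product
from math import dist, inf

def voxel_centers(size, res):
    """Centers of a regular voxel grid covering the cuboid [0, size]."""
    steps = [s / r for s, r in zip(size, res)]
    return [tuple((i + 0.5) * h for i, h in zip(idx, steps))
            for idx in product(*(range(r) for r in res))]

def model(centers, free_tests):
    """Voxel version of Eq. (2): drop every voxel some camera reports as free."""
    return [p for p in centers if not any(is_free(p) for is_free in free_tests)]

def distance(A, B):
    """d(A, B) = minimum over all point pairs; inf if one set is empty."""
    return min((dist(x, y) for x in A for y in B), default=inf)

def discretized_error(samples):
    """Weighted sum of Eq. (7); one (weight, C, O_u, M) entry per pair (l, h)."""
    return sum(w * (distance(C, O) - distance(C, M)) ** 2
               for w, C, O, M in samples)

# Toy scene; every value below is an illustrative assumption.
centers = voxel_centers((4.0, 3.0, 3.0), (16, 12, 12))  # 4 m x 3 m x 3 m cuboid
free_tests = [lambda p: p[0] < 2.0,   # hypothetical camera 1: x < 2 m is free
              lambda p: p[1] < 1.5]   # hypothetical camera 2: y < 1.5 m is free
M = model(centers, free_tests)        # Boolean operation on the free parts
C = [(0.0, 0.0, 0.0)]                 # a single critical point
O_u = [(3.0, 2.0, 1.0)]               # a single point of the unmodelled collective
err = discretized_error([(1.0, C, O_u, M)])

print(len(M), distance(C, M), distance(C, O_u), err)
```

In this toy scene the model contains the unmodelled point, so the distance to the model does not exceed the distance to the true object, which is the conservative behaviour the text asks for. A real implementation would replace the half-space predicates by the per-camera result of the change detection.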
For these reasons our approach uses a voxel-based model which is obtained by Boolean operations on the free parts of the sensors.

C. Optimization method

The solver of an optimization problem is a strategy that evaluates existing solutions by plugging them into the objective function and then improves them until an optimum of the objective function is reached. To choose a suitable solver for the specified problem, there are different characteristics of the objective function $\mathrm{Err}(e_1, \dots, e_n)$ that need to be considered.

First, we regard the surveillance area minus the cone of a camera as the 'undetectable' part of this camera, which depends on the setting $e$ of the camera. Remember that the undetectable area could be part of the model of the unmodelled collective. Now imagine the cone rotating continuously in 'yaw' direction. One can easily see that the distance between any given point of the surveillance area and this undetectable part is not convex in $e$ (as an exception, the chosen point can be included in the 'undetectable' part for all $e$, and the distance is therefore 0 for all $e$).

The second characteristic to be discussed is the discontinuity of $\mathrm{Err}(e_1, \dots, e_n)$ with respect to $e$. Due to the voxel-based model, distances are only evaluated to a finite set of points. When calculating the distances we need to jump from one point to the next even if the settings are just altered gradually. Thus, the objective function is discontinuous and constant in between these discontinuities. Even if we used a model that is not voxel based, discontinuities would appear due to the intersections of disconnected parts mentioned in Section IV-A.

The objective function's properties complicate the search for a suitable solver.
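Both characteristics can be observed in a small experiment. The sketch below is a two-dimensional stand-in, assuming a hypothetical camera in a corner of a 4 m × 3 m grid with a 30° half opening angle: it rotates the cone in 'yaw' direction and records the distance between a fixed point and the nearest voxel center of the 'undetectable' part. None of the names or values are taken from the original implementation.

```python
from math import atan2, cos, sin, hypot, pi

def in_cone(p, cam, yaw, half_angle):
    """True if p lies inside the 2D viewing cone of a camera at cam."""
    off = atan2(p[1] - cam[1], p[0] - cam[0]) - yaw
    off = atan2(sin(off), cos(off))          # wrap the offset to [-pi, pi]
    return abs(off) <= half_angle

# Voxel centers of a 16 x 12 grid with 0.25 m edge length (assumed scene).
centers = [((i + 0.5) * 0.25, (j + 0.5) * 0.25)
           for i in range(16) for j in range(12)]
cam = (0.0, 0.0)          # hypothetical camera position
c = (2.125, 1.625)        # fixed point, chosen to be a voxel center
half_angle = pi / 6       # hypothetical half opening angle

def distance_to_undetectable(yaw):
    """Distance from c to the nearest voxel center outside the cone."""
    outside = [q for q in centers if not in_cone(q, cam, yaw, half_angle)]
    return min(hypot(q[0] - c[0], q[1] - c[1]) for q in outside)

# Sweep the yaw angle from 0 to 90 degrees in steps of half a degree.
yaws = [k * (pi / 2) / 180 for k in range(181)]
values = [distance_to_undetectable(y) for y in yaws]

print(len(set(values)), "distinct values for", len(values), "yaw samples")
```

The distance is zero at both ends of the sweep, where the point itself is undetectable, and positive in between, so it cannot be convex in the yaw angle. It also takes far fewer distinct values than there are yaw samples, i.e. it is piecewise constant with jumps.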
As elucidated in standard references on nonlinear optimization like [15], most algorithms take advantage of a characteristic like convexity, differentiability or at least continuity, which cannot be guaranteed in our case. This applies to all deterministic solvers for nonlinear programs such as Sequential Quadratic Programming, all kinds of local search algorithms (Downhill-Simplex, Bisection, Newton, Levenberg-Marquardt etc.) and many others. Moreover, the problem cannot be transformed to a standard form of solvers like branch-and-bound, decompositions, cutting planes or outer approximation. This leaves us with non-deterministic, e.g., stochastic solvers. We have chosen the method MIDACO, which is based on the ant-colony algorithm and samples solutions randomly where they appear to be most promising, see [20,21] for details.

D. Complexity

The solver is an iteration which stochastically generates a tuple of settings $e_i$, $i = 1, \dots, n$ (one setting for each camera) within each iteration step, based on knowledge of previous generations. Given these settings, the model, the distances and the objective function consisting of the weighted sum given in Equation (7) are evaluated. This continues until a stopping criterion is fulfilled. In order to compute the complexity of the method, assume that upon termination the $I$-th iteration step has been reached. The process of obtaining the objective value of Formula (7) is only implemented in a basic version: for the given tuple of settings $e_i$, all of the $H$ time steps and $L$ appearances are evaluated, and each of the $r$ voxels is tested for inclusion in the intersection in question. The intersection test uses all of the $f_u^{\max}$ facets of the unmodelled collective as well as most of the $f_s$ static facets and $f_d^{\max}$ dynamic facets. Summing up these components gives us the complexity

$$\mathcal{O}\Big( I \cdot r \cdot \big\{\, n f_s + H (n + L) f_d^{\max} + H L n f_u^{\max} \,\big\} \Big)$$

of the method.

V. EXPERIMENTS

Since we use a stochastic solver on the non-convex problem of camera configuration, the obtained solutions (i.e. tuples of settings) most likely differ from one another although the same objective value (i.e. deviation of distances) might have been found. Therefore, we ran groups of 20 solver calls with the same parameters to perceive the average outcome. One examination consists of a few groups of test runs which only differ in one parameter. We made examinations about changing resolutions, facets, objects, number of events, number of cameras and starting point. Unless stated otherwise, the basic setup given in Tab. I is used.

| modelled part of the scene | dimension/amount |
|---|---|
| surveillance area S | cuboid 4 m × 3 m × 3 m |
| voxel resolution | 16 × 12 × 12 |
| critical points | all points inside the dynamic collective |
| static collective | 8 facets at 2 objects |
| dynamic collective | 24 facets at 6 objects in 2 timesteps |
| unmodelled collective | 24 facets at 6 objects in 3 events (of distrib.) |
| camera placement | 6 cameras all over the surveillance area |
| starting solution | cameras are placed and orientated randomly all over S |
| stop criteria | maximal time limit 3 h; optimization tolerance (diagon. o. voxel)² |

TABLE I. Basic setup, which is used if no other assumptions are made.

We additionally assumed that the dynamic collective is also considered to be the set of critical points. Thus, we were able to model a robot (dynamic object and critical points) spinning too fast in the direction of a human (unmodelled object). Furthermore, Tab. II contains all test parameters and their ranges.

| modelled part of the scene | alterations |
|---|---|
| voxel res. | (16 + 4i × 12 + 3i × 12 + 3i) for i = 0, 1, 2, 3, 4, 5 |
| static coll. | 8 + 60i facets for i = 0, ..., 5 at 2 obj. |
| | 6 + 4i facets at 2 + i objects, i = 0, ..., 3 |
| dynamic coll. | 24 + 60i facets, i = 0, ..., 5, at 3 obj./2 timest. |
| | 2 + i obj., i = 0, ..., 3, w. 24 + 4i fac./1 timest. |
| | objects placed randomly |
| | i = 1, ..., 5 timest. w. 2i obj, 8i fac., 3 events |
| unmodelled coll. | 24 + 60i facets, i = 0, ..., 5, at 2 obj./3 events |
| | 2 + i obj., i = 0, ..., 3, w. 24 + 4i fac./1 event |
| | objects placed randomly |
| | i = 1, ..., 5 events w. 2i obj, 8i fac., 3 timest. |
| cameras | i = 3, ..., 9 |
| restrictions of the settings' domain | cameras placed only at 'ceiling' |
| | cameras placed only in the 'upper fourth' |

TABLE II. Overview of all examinations. An examination consists of a few groups of test runs; each group differs only in one parameter.

The aim of this section is to summarize all the examinations defined in Tab. II and, in particular, to answer the following central questions: Can the desired optimization tolerance be satisfied in time, i.e. will the target be approximated as accurately as needed? How many iteration cycles are needed? What is the operating time of one cycle, of each iteration step and of the components of one step? What is the highest memory consumption?

A. Hardware and Software

We implemented the optimization problem in C++ and compiled it with 'gcc' version 4.0.20050901 (prerelease), optimized with the setting '-O3', on SuSE Linux version 10.0. We have used only one of the two cores of an AMD Opteron(tm) Processor 254 with 2.8 GHz (dynamically scaled between 1 GHz and 2.8 GHz). Further information can be taken from Tab. III and IV.

B. Optimization tolerance

As a second stopping criterion next to the three-hour time limit, we introduced the optimization tolerance, which is the maximal objective value a tuple of settings must be mapped to for the optimization to terminate.
This is designed to depend on the length of a voxel's diagonal. In many cases the solver was able to satisfy the desired optimization tolerance within the predefined maximal time. The following exceptions exceeded the time limit: We recorded an increasing time consumption of one iteration step (beyond linear) when gradually raising the resolution of the voxel discretization. Due to the time criterion, approaches with a resolution of more than 24 × 18 × 18 were terminated before satisfying the optimization tolerance (see Fig. 3 and 5).

| field | value |
|---|---|
| model name | AMD Opteron(tm) Processor 254 |
| cpu MHz | 1004.631 |
| cache size | 1024 kB |
| clflush size | 64 |
| cache alignment | 64 |

TABLE III. Part of the output of $: cat /proc/cpuinfo

| field | value |
|---|---|
| MemTotal | 4038428 kB |
| MemFree | 886856 kB |
| Buffers | 431016 |
| Cached | 2079360 |
| SwapCached | 0 kB |
| Active | 1431004 kB |
| Inactive | 1138428 kB |
| SwapTotal | 12586916 kB |
| SwapFree | 12586916 kB |

TABLE IV. Part of the output of $: cat /proc/meminfo

Increasing the number of dynamic obstacles resulted in too many iteration steps (over 160000 at most, compared to less than 45000 when increasing the amount of static obstacles, cf. Fig. 4) and thus decreased the amount of tests for which the optimization tolerance was satisfied in time, as illustrated in Fig. 6. In rare cases, a similar outcome was observed when the amount of randomly placed unmodelled objects was increased. A combination of both occurrences, the time loss in each iteration step and the requirement of too many iteration steps, has been observed for test runs utilizing a small number of cameras (with three cameras it was literally impossible to compute a satisfactory result, see Fig. 7). In the tests on dynamic obstacles and on too few cameras, the model of the unmodelled collective could not be produced optimally before the maximal computing time was up.
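The interplay of the two stopping criteria and the grouping into 20 solver calls can be illustrated with a minimal sketch. It is not the solver used in the experiments: a plain random search stands in for the stochastic solver, and the names `run_until_tolerance`, `run_group`, `objective` and `sample` are hypothetical placeholders introduced only for this illustration.

```python
import random
import time


def run_until_tolerance(objective, sample, tolerance, time_limit_s, rng):
    """Random search (a stand-in solver) with two stopping criteria:
    stop when the best objective value is <= tolerance or when the
    time limit is reached, whichever happens first."""
    start = time.monotonic()
    best_x, best_val, steps = None, float("inf"), 0
    while best_val > tolerance and time.monotonic() - start < time_limit_s:
        x = sample(rng)          # draw a candidate tuple of settings
        val = objective(x)       # evaluate its objective value
        steps += 1
        if val < best_val:
            best_x, best_val = x, val
    return best_x, best_val, steps, best_val <= tolerance


def run_group(objective, sample, tolerance, time_limit_s, runs=20, seed=0):
    """One group of test runs: `runs` solver calls with identical parameters.
    Returns how many runs satisfied the tolerance and the mean step count."""
    results = []
    for i in range(runs):
        rng = random.Random(seed + i)  # different random stream per run
        results.append(
            run_until_tolerance(objective, sample, tolerance, time_limit_s, rng)
        )
    satisfied = sum(1 for r in results if r[3])
    mean_steps = sum(r[2] for r in results) / runs
    return satisfied, mean_steps
```

With a toy objective such as `lambda x: x * x` and `sample = lambda rng: rng.uniform(-1.0, 1.0)`, `run_group` reports the same two quantities that Fig. 5 to 7 and Fig. 3 and 4 summarize: the number of runs per group that met the tolerance in time and the typical number of iteration steps.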
We experienced similar results for all tests concerning restrictive domains: None of the tests reached the optimization tolerance (0.046 m²), but all of them stayed below the value 0.25 m². This could be a sign that, e.g., in our test setting six cameras on the ceiling cannot make the model assimilate the unmodelled collective closely enough.

C. Time consumption

When raising the amount of events, time steps, facets or objects of any of the collectives, we have also recorded a linearly increasing time consumption for one iteration step. Among all parameters, the resolution of the voxel discretization and the amount of dynamic objects appear to be the most critical ones. Using more cameras, however, resulted in a lower time loss in one iteration step in our range of camera amounts (for three cameras we required about 315 ms on average, whereas for nine cameras ca. 170 ms were needed). Of course, this effect can only last until the optimization tolerance is satisfied (i.e. the model assimilates the unmodelled collective as accurately as needed), and hence time consumption will slope up when using a greater amount of cameras.

Fig. 3. Scatter plot: With refined resolution the mean (light grey squares) of the amount of iteration steps (each represented by a dark grey square) in one group was higher. Columns: groups of 20 iterations with different resolutions. (Axes: resolution vs. time / s.)

Fig. 4. Scatter plot: Dynamic obstacles (and perilous points) are complicating the iteration. Columns: groups of 20 iterations of additional dynamic objects (red) and static objects (blue). (Axes: amount of objects vs. amount of iteration steps.)

Fig. 5. Bar chart: A more refined resolution than (24 × 18 × 18) made it impossible to satisfy the predefined optimization tolerance in time. (Axes: resolution vs. amount of tests; bar labels: 20, 20, 19, 0, 0, 0 for 16 × 12 × 12 up to 36 × 27 × 27.)

Fig. 6. Bar chart: The more dynamic objects were spread across the surveillance area, the fewer test runs satisfied the desired optimization tolerance. (Axes: amount of additional dynamic obstacles vs. amount of tests.)

Fig. 7. Bar chart: Five to nine cameras could contour the unmodelled collective best. (Axes: amount of cameras vs. amount of tests.)

Fig. 8. Bar chart: The total time consumption for an increased amount of cameras sloped down because the clustering (orange) weighed more than the actual intersection test (blue). (Axes: amount of cameras vs. time of parts of one iteration step / ms.)

Without giving a detailed explanation of the way one iteration step is calculated with our test setting's camera network, we would like to state that extending the amount of facets or cameras and refining the voxel resolution enlarges the time consumption of the intersection test. However, no intersection test except for those with refined voxel resolution has exceeded 15 ms on average. The test runs with 36 × 27 × 27 voxels, six cameras and 24 facets have reached an average of 50 ms. After intersecting areas, the related voxels need to be combined into clusters in order to check a free part's height or volume (and to compare whether it could be human). This task took about two to four times as long as the intersection test, a fact which is mainly due to its direct dependence on the resolution, but also due to the misshaping of the model (as the clustering seems to depend indirectly on the amount of cameras).
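The clustering step described above, combining related voxels and checking a cluster's height or volume, can be sketched as a connected-component search on the voxel grid. The text does not specify the clustering method used in the experiments, so the breadth-first search, the 6-neighbourhood and the function names `cluster_voxels` and `cluster_height_and_volume` below are assumptions made for illustration only.

```python
from collections import deque

# Assumed neighbourhood: voxels sharing a face (6-connectivity).
NEIGHBOURS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def cluster_voxels(occupied):
    """Group occupied voxels, given as (x, y, z) integer indices,
    into 6-connected clusters using breadth-first search."""
    unvisited = set(occupied)
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        queue = deque([seed])
        cluster = [seed]
        while queue:
            x, y, z = queue.popleft()
            for dx, dy, dz in NEIGHBOURS:
                n = (x + dx, y + dy, z + dz)
                if n in unvisited:
                    unvisited.remove(n)
                    queue.append(n)
                    cluster.append(n)
        clusters.append(cluster)
    return clusters


def cluster_height_and_volume(cluster, voxel_size):
    """Return the vertical extent (in m) and the volume (in m^3) of a cluster.
    voxel_size is the (x, y, z) edge length of one voxel in metres."""
    sx, sy, sz = voxel_size
    zs = [z for _, _, z in cluster]
    height = (max(zs) - min(zs) + 1) * sz
    volume = len(cluster) * sx * sy * sz
    return height, volume
```

Each voxel is visited once, so the cost of this sketch grows with the number of occupied voxels, which is in line with the observed direct dependence of the clustering time on the resolution.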
As the period of an iteration step is mostly filled with intersecting and clustering, Fig. 8 also shows the decreasing time consumption while using more cameras.

D. Memory

Fig. 9. Plot of the increasing maximal virtual memory that was used when refining the resolution of voxels. (Axes: resolution vs. virtual memory / kB; data labels: 24952, 25692, 26776, 28008, 29712, 32868 for 16 × 12 × 12 up to 36 × 27 × 27.)

Measurements of the maximal virtual memory while altering the resolution resulted in an ascending graph (beyond linear), cf. Fig. 9. The highest demand for virtual memory was measured while testing with the resolution 36 × 27 × 27 (a total of 32868 kB). The graphs concerning the maximum demand for virtual memory versus facets and amount of cameras are only ascending slowly. Both show a linear slope of about 350 kB to 450 kB in our range of parameters.

VI. CONCLUSION AND FUTURE PROSPECTS

We managed to build a camera placement optimization algorithm that computes the location and orientation of a given amount of cameras inside a specified surveillance area. Only randomly placed dynamic obstacles, too few cameras, too restricted placements and a too refined voxel resolution are critical for this method. Apart from that, we have succeeded in minimizing the error made by evaluating distances to the visual hull of a given object up to the optimization tolerance. In contrast to existing results, we are able to model a surrounding area with static and moving obstacles without limiting camera positions or orientations and still evaluate distances conservatively.

Still, in order to make the model assimilate the unknown collective even better, higher resolutions are desired.
This means that some improvements of the algorithm still need to be implemented. The following alterations of the algorithm may lead to an improved time consumption: First of all, it is possible to parallelize the iterations of the solver as well as some intersection tests. But as the amount of iteration steps of the solver ranged between about 500 and 160000, the first goal should be to decrease both the expected number of iteration steps and their variance. Placing the initial position of the cameras roughly around the surveillance area and leaving the fine tuning to the algorithm could do the trick.

Some consideration should also be given to saving many clustering and intersecting processes by leaving out unnecessary calculations. One of these calculations is the summing up of L · H addends (the number of appearances times the number of time steps), which all have to be simulated. Time loss will be reduced if the evaluation of the sum is cancelled as soon as it exceeds the current optimal value. Also, appropriate data structures like Oct-Trees and BSP-Trees for intersection and inclusion tests, which would reduce the time loss during the intersection test, have not been implemented yet.
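The early-termination idea from the conclusion, cancelling the sum of L · H addends once it exceeds the current optimal value, can be sketched as follows. The sketch assumes that every addend is non-negative, so that a partial sum is a lower bound on the full sum; `simulate`, `bounded_sum` and `objective_with_cutoff` are hypothetical names and not part of the implementation described in the paper.

```python
def bounded_sum(addends, current_best):
    """Sum non-negative addends lazily and stop as soon as the partial sum
    exceeds current_best. Returns (partial_sum, evaluated_count, completed)."""
    total = 0.0
    evaluated = 0
    for term in addends:
        total += term
        evaluated += 1
        if total > current_best:
            # The candidate cannot beat the incumbent any more.
            return total, evaluated, False
    return total, evaluated, True


def objective_with_cutoff(simulate, L, H, current_best):
    """Evaluate the objective as a sum over L appearances and H time steps.
    simulate(l, h) is assumed to return one non-negative addend; the
    generator ensures skipped addends are never simulated."""
    addends = (simulate(l, h) for l in range(L) for h in range(H))
    return bounded_sum(addends, current_best)
```

Because the addends are produced by a generator, the simulations behind the skipped addends are never executed, which is where the time would be saved.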
