Workload Classification & Software Energy Measurement for Efficient Scheduling on Private Cloud Platforms

At present there are a number of barriers to creating an energy efficient workload scheduler for a Private Cloud based data center. Firstly, the relationship between different workloads and power consumption must be investigated. Secondly, current hardware-based solutions to providing energy usage statistics are unsuitable in warehouse scale data centers where low cost and scalability are desirable properties. In this paper we discuss the effect of different workloads on server power consumption in a Private Cloud platform. We display a noticeable difference in energy consumption when servers are given tasks that dominate various resources (CPU, Memory, Hard Disk and Network). We then use this insight to develop CloudMonitor, a software utility that is capable of >95% accurate power predictions from monitoring resource consumption of workloads, after a “training phase” in which a dynamic power model is developed.

💡 Research Summary

The paper tackles two fundamental challenges for building an energy‑efficient workload scheduler in a private‑cloud data centre: (1) quantifying how different types of workloads affect server power consumption, and (2) providing a low‑cost, scalable method for measuring that consumption without relying on dedicated hardware meters. The authors begin by citing EPA statistics that data centres consume a significant share of national electricity and that roughly half of that energy is spent on non‑computing infrastructure such as cooling. They argue that, while private clouds are attractive for security and control reasons, their energy efficiency is limited by a lack of fine‑grained power monitoring. Traditional hardware‑based solutions (e.g., PDUs) are accurate but expensive and do not scale well to large‑scale cloud environments.

A review of related work shows that prior approaches depend on hardware support (Stoess et al.), assume a simple CPU‑time‑to‑energy relationship (Snowdon), or use linear power models trained on a small set of servers (Joulemeter, VMeter). As a result, these methods lack portability, require vendor‑specific drivers, or involve manual calibration.

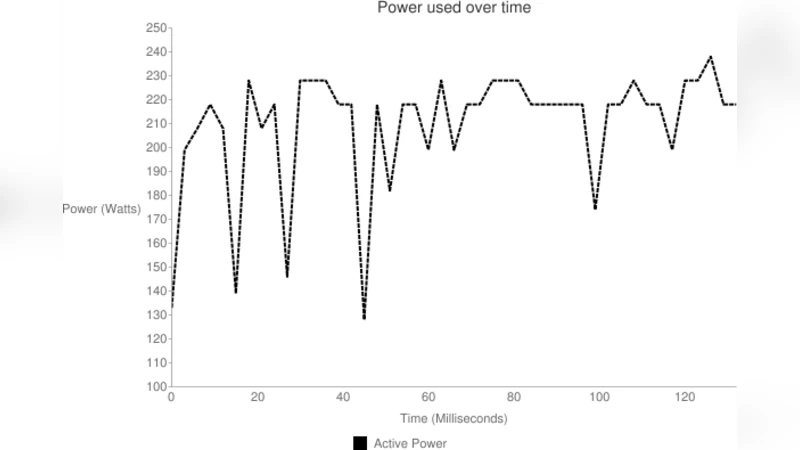

To address these gaps, the authors built a software utility called CloudMonitor. The experimental platform consists of two Dell PowerEdge R610 servers (dual 4‑core Xeon E5‑6200, 16 GB RAM, 146 GB 10 kRPM SAS disks, 1 GbE NIC) instrumented with a Raritan PX‑5367 PDU that supplies active‑power readings at three‑second intervals. CloudMonitor’s agents are written in Java for cross‑platform compatibility and use the SIGAR library to collect CPU utilisation, memory usage, disk I/O, and network throughput every three seconds. The agents send their data to a central datastore, which also pulls PDU measurements when configured to do so.
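Because the agents and the PDU both report every three seconds, the datastore has to pair each resource sample with the power reading taken in the same window before any model can be trained. The following is a minimal sketch of that pairing step, not CloudMonitor's actual implementation: the function names, the tuple layouts, and the choice to bucket by window start are all assumptions made for illustration.

```python
INTERVAL_S = 3  # sampling period stated in the summary


def bucket(ts, interval=INTERVAL_S):
    """Map a Unix timestamp (seconds) to the start of its sampling window."""
    return int(ts // interval) * interval


def join_samples(agent_samples, pdu_readings, interval=INTERVAL_S):
    """Pair each agent sample with the PDU reading from the same window.

    agent_samples: list of (timestamp, host, metrics_dict)   -- hypothetical layout
    pdu_readings:  list of (timestamp, host, watts)          -- hypothetical layout
    Returns rows of (host, window_start, metrics_dict, watts).
    Agent samples with no PDU reading in their window are dropped.
    """
    # Index power readings by (host, window) for constant-time lookup.
    power = {}
    for ts, host, watts in pdu_readings:
        power[(host, bucket(ts, interval))] = watts

    rows = []
    for ts, host, metrics in agent_samples:
        key = (host, bucket(ts, interval))
        if key in power:
            rows.append((host, key[1], metrics, power[key]))
    return rows
```

Rows produced this way form the training set: the metrics are the independent variables and the PDU wattage is the value to be predicted.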

Workloads are generated with the Phoronix Test Suite, selecting four benchmarks that stress distinct resources: (a) 7zip – CPU‑intensive compression with variable memory usage, (b) gzip – memory‑bound compression, (c) AIO‑Stress – synchronous disk I/O, and (d) Loopback TCP – network traffic generation. Each benchmark runs on a 2 GB test file, and the authors run each test multiple times to confirm that the results are reproducible.

The measured power traces reveal clear patterns: CPU‑heavy tasks raise power by roughly 50 W over idle, memory activity adds about 10 W, disk I/O contributes an additional 15 W, and network saturation yields around 8 W. These observations motivate a multivariate linear regression model in which the dependent variable is the instantaneous power (from the PDU) and the independent variables are the four resource metrics. During a “training phase,” the model learns coefficients that map resource usage to power. In the validation phase, the model predicts power using only the software‑collected metrics, achieving a mean absolute error below 5 % and an R² of 0.95, which the authors summarise as >95 % prediction accuracy.
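The training and validation steps can be illustrated with an ordinary least-squares fit. The sketch below is a generic implementation of multivariate linear regression in pure Python, solved through the normal equations; it is not taken from the paper, and the function names are hypothetical. The error metric is written as a mean absolute percentage error, which is one reading of the "below 5 %" figure.

```python
def fit_linear(X, y):
    """Ordinary least squares via the normal equations (X^T X) b = X^T y.

    X: list of feature rows, e.g. [cpu, mem, disk, net] per sample.
    y: list of measured power values in watts.
    Returns [intercept, coef_1, ..., coef_k]; the intercept plays the
    role of idle power.
    """
    rows = [[1.0] + list(r) for r in X]  # prepend intercept column
    n = len(rows[0])
    # Augmented matrix [X^T X | X^T y].
    A = [
        [sum(r[i] * r[j] for r in rows) for j in range(n)]
        + [sum(r[i] * t for r, t in zip(rows, y))]
        for i in range(n)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("features are collinear; cannot fit")
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(n):
            if r != col:
                f = A[r][col] / A[col][col]
                A[r] = [a - f * b for a, b in zip(A[r], A[col])]
    return [A[i][n] / A[i][i] for i in range(n)]


def predict(coefs, row):
    """Predicted power for one sample of resource metrics."""
    return coefs[0] + sum(c * x for c, x in zip(coefs[1:], row))


def mean_abs_pct_error(coefs, X, y):
    """Mean of |actual - predicted| / actual, as a percentage."""
    errs = [abs(t - predict(coefs, r)) / t for r, t in zip(X, y)]
    return 100.0 * sum(errs) / len(errs)


def r_squared(coefs, X, y):
    """Coefficient of determination on the given samples."""
    mean_y = sum(y) / len(y)
    ss_res = sum((t - predict(coefs, r)) ** 2 for r, t in zip(X, y))
    ss_tot = sum((t - mean_y) ** 2 for t in y)
    return 1.0 - ss_res / ss_tot
```

In practice the coefficients would be fitted on samples from the training phase and the two metrics computed on held-out samples, so that the reported error reflects prediction rather than fit.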

The authors argue that such accuracy is comparable to, or better than, prior software‑only solutions, while eliminating the need for per‑host hardware meters. Because the model is automatically calibrated, it can adapt to new hardware configurations or workload mixes without manual tuning.

Finally, the paper outlines how CloudMonitor’s predictions could be fed into a cloud scheduler. By profiling each virtual machine (VM) for its dominant resource (CPU, memory, disk, or network), the scheduler can place VMs on physical hosts where the aggregate resource mix minimizes total power, thereby improving the data centre’s Power Usage Effectiveness (PUE). The authors conclude that software‑based power monitoring is a viable, cost‑effective path toward greener private clouds and suggest future work on non‑linear models, deeper integration with orchestration frameworks, and larger‑scale validation.
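One way to picture such a scheduler is a greedy placement that packs VMs with complementary resource demands onto as few hosts as possible. The sketch below is an assumption-laden illustration rather than the authors' design: the idle-power constant is invented, the coefficients merely echo the rough per-resource figures quoted above, and the `place` heuristic and its names are hypothetical. Note that under a purely linear model the dynamic power of a VM is the same on every host, so in this sketch the saving comes from not paying idle power on extra hosts.

```python
IDLE_W = 150.0  # hypothetical idle power per active host, in watts
# Illustrative coefficients (watts at full utilisation), not fitted values.
COEF_W = {"cpu": 50.0, "mem": 10.0, "disk": 15.0, "net": 8.0}


def dominant_resource(vm):
    """Name of the resource a VM uses most heavily."""
    return max(vm, key=vm.get)


def host_power(load):
    """Predicted watts for an active host with the given utilisation."""
    return IDLE_W + sum(COEF_W[r] * load[r] for r in COEF_W)


def place(vms, n_hosts):
    """Greedy placement: biggest VMs first, prefer hosts already in use.

    vms: {name: {"cpu": f, "mem": f, "disk": f, "net": f}} with f in [0, 1].
    Returns (assignment, total_predicted_watts).
    """
    hosts = [dict.fromkeys(COEF_W, 0.0) for _ in range(n_hosts)]
    active = set()
    assignment = {}
    for name, vm in sorted(vms.items(), key=lambda kv: -max(kv[1].values())):
        # A host is a candidate if no resource would exceed full utilisation.
        candidates = [
            i for i, h in enumerate(hosts)
            if all(h[r] + vm[r] <= 1.0 for r in COEF_W)
        ]
        if not candidates:
            raise ValueError(f"no host can fit VM {name!r}")
        # Active hosts sort first (False < True), then lowest index.
        best = min(candidates, key=lambda i: (i not in active, i))
        for r in COEF_W:
            hosts[best][r] += vm[r]
        active.add(best)
        assignment[name] = best
    total = sum(host_power(hosts[i]) for i in active)
    return assignment, total
```

With one CPU-dominant and one disk-dominant VM, this heuristic co-locates them on a single host, whereas two CPU-dominant VMs would be forced apart by the capacity check.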
