MCA Based Performance Evaluation of Project Selection

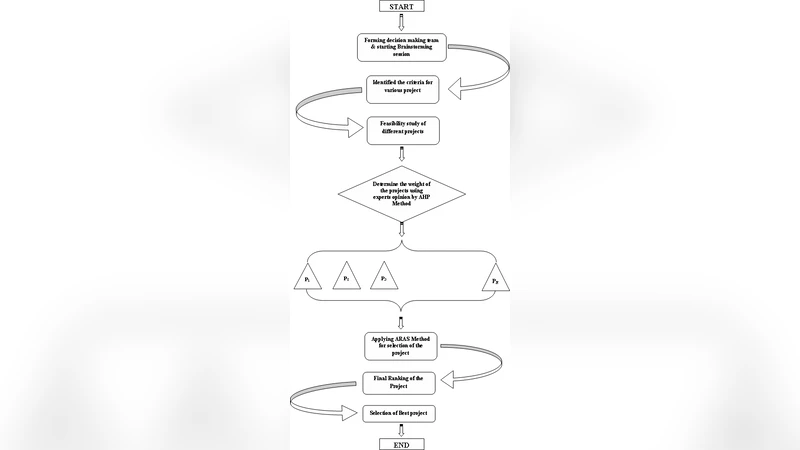

Multi-criteria decision support systems are used in many fields of human activity. Every alternative in a multi-criteria decision-making problem can be represented by a set of properties or constraints. These properties can be qualitative or quantitative; they are measured in different units and may require different optimization techniques. Depending on the desired goal, normalization aims to bring the values of these properties onto common reference scales. This paper deals with the new Additive Ratio Assessment (ARAS) method. To reach an appropriate decision and compare the available alternatives properly, the Analytic Hierarchy Process (AHP) and ARAS are used together. AHP is used to analyze the structure of the project selection problem and to assign the weights of the properties. ARAS is used to obtain the final ranking and select the best project. To illustrate these methods, survey data on the expansion of optical fibre in a telecommunication sector are used. The decision maker can also apply different weight combinations in the decision-making process, according to the demands of the system.

💡 Research Summary

The paper presents a hybrid multi‑criteria decision‑making (MCDM) framework that combines the Analytic Hierarchy Process (AHP) for determining criteria weights with the Additive Ratio Assessment (ARAS) method for ranking alternatives. The authors argue that AHP is well‑suited for eliciting expert judgments and producing consistent weight vectors, while ARAS offers a straightforward, arithmetic‑based procedure for evaluating alternatives once the weights are known.

The study applies this integrated approach to a real‑world case: the expansion of an optical‑fibre network in a region of Iran. Four evaluation criteria are selected: Net Present Value (NPV), Rate of Return (ROR), Payback Period (PB), and Project Risk (PR). NPV and ROR are treated as benefit criteria (higher is better), whereas PB and PR are cost criteria (lower is better). Five candidate projects (P1–P5) are assessed against these criteria.

First, a panel of five experts performs pairwise comparisons of the criteria using AHP. The resulting weight vector is reported as 0.29 for NPV, 0.34 for ROR, 0.22 for PB, and 0.15 for PR. The paper does not detail the consistency ratio or the exact comparison matrix, which limits verification of the weight‑derivation step.
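The paper does not disclose its pairwise comparison matrix. The sketch below therefore uses a hypothetical 4×4 matrix to show how AHP weights and a consistency ratio are typically derived. The judgments in `PAIRWISE` are invented and are not the experts' judgments, so the resulting weights will not match the reported 0.29/0.34/0.22/0.15. The sketch assumes Saaty's principal-eigenvector method (computed by power iteration) and Saaty's standard random-index table. Both are assumptions, because the paper's exact weighting procedure is not described. The helper names are illustrative.

```python
# Sketch: AHP criterion weights and consistency ratio for a HYPOTHETICAL
# pairwise comparison matrix (the paper does not publish its matrix).
from typing import List

Matrix = List[List[float]]

# Saaty's random consistency index (RI) for matrix orders 1..10.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def mat_vec(a: Matrix, v: List[float]) -> List[float]:
    """Multiply the square matrix a by the vector v."""
    return [sum(a_ij * v_j for a_ij, v_j in zip(row, v)) for row in a]


def ahp_weights(a: Matrix, iters: int = 1000, tol: float = 1e-12) -> List[float]:
    """Principal eigenvector of a positive reciprocal matrix (power iteration),
    scaled so that the weights sum to 1."""
    n = len(a)
    w = [1.0 / n] * n
    for _ in range(iters):
        v = mat_vec(a, w)
        total = sum(v)
        v = [x / total for x in v]
        if max(abs(x - y) for x, y in zip(v, w)) < tol:
            return v
        w = v
    return w


def consistency_ratio(a: Matrix, w: List[float]) -> float:
    """CR = CI / RI, where CI = (lambda_max - n) / (n - 1)."""
    n = len(a)
    if n < 3:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    aw = mat_vec(a, w)
    lambda_max = sum(x / y for x, y in zip(aw, w)) / n
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]


CRITERIA = ["NPV", "ROR", "PB", "PR"]
# HYPOTHETICAL judgments on Saaty's 1-9 scale: entry [i][j] says how much
# more important criterion i is than criterion j; [j][i] = 1 / [i][j].
PAIRWISE = [
    [1.0, 1 / 2, 2.0, 2.0],
    [2.0, 1.0, 2.0, 3.0],
    [1 / 2, 1 / 2, 1.0, 2.0],
    [1 / 2, 1 / 3, 1 / 2, 1.0],
]

weights = ahp_weights(PAIRWISE)
cr = consistency_ratio(PAIRWISE, weights)
for name, w in zip(CRITERIA, weights):
    print(f"{name}: {w:.3f}")
print(f"CR = {cr:.3f}", "(acceptable)" if cr < 0.1 else "(revise judgments)")
```

Saaty's usual rule accepts a matrix when CR < 0.1. Reporting the matrix together with this value is what would make weights like the paper's reproducible.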

Next, the ARAS procedure is executed. The raw performance scores are assembled into a decision‑making matrix (DMM). For each criterion, the optimal value (maximum for benefit criteria, minimum for cost criteria) is identified; all other values are normalized relative to this optimum. Each column of the normalized matrix is then multiplied by the corresponding AHP‑derived weight to obtain a weighted normalized matrix. The sum of each row yields an “optimality function” value S_i for each project. The optimality‑function value of the optimal alternative, denoted S_0 and the largest of all S values, serves as the divisor in the utility index K_i = S_i / S_0. The project with the highest K_i is declared the most desirable.
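For reference, the standard ARAS equations are collected below. They use m alternatives, n criteria, and weights w_j that sum to 1. In this formulation:

- the optimal values form an extra reference row i = 0;
- cost criteria are converted by taking reciprocals;
- each column is normalized by its sum over all rows, including the reference row.

The paper's own notation and its cost-criterion transformation may differ in detail.

```latex
% Standard ARAS formulation; row i = 0 is the optimal (reference) alternative.
x_{0j} = \begin{cases} \max_{i} x_{ij}, & j \text{ a benefit criterion} \\ \min_{i} x_{ij}, & j \text{ a cost criterion} \end{cases}
\qquad
x^{*}_{ij} = \begin{cases} x_{ij}, & j \text{ benefit} \\ 1 / x_{ij}, & j \text{ cost} \end{cases}
\bar{x}_{ij} = \frac{x^{*}_{ij}}{\sum_{i=0}^{m} x^{*}_{ij}}, \qquad
\hat{x}_{ij} = w_j \, \bar{x}_{ij}, \qquad
S_i = \sum_{j=1}^{n} \hat{x}_{ij}, \qquad
K_i = \frac{S_i}{S_0}, \quad i = 0, 1, \dots, m
```

Because S_0 comes from the reference row, K_0 = 1 and every K_i lies in (0, 1], provided all raw values are positive.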

The calculations, presented in a series of tables, lead to the ranking: P2 > P3 > P5 > P1 > P4, with P2 identified as the optimal project. The authors highlight that the ARAS method requires only basic arithmetic, making it computationally light compared with more complex techniques such as TOPSIS or VIKOR.
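The arithmetic really is light enough to fit in a few lines of Python. The sketch below implements the standard ARAS steps under two assumptions: all raw values are strictly positive (the reciprocal transform needs this), and cost criteria are handled by reciprocals. The performance values in `DATA` are invented placeholders, not the survey data from the paper; only the weights (0.29, 0.34, 0.22, 0.15) come from the reported AHP results. The function name `aras` is hypothetical.

```python
# Sketch of the standard ARAS steps; DATA below is INVENTED, not the paper's.
from typing import List, Sequence


def aras(matrix: Sequence[Sequence[float]], weights: Sequence[float],
         benefit: Sequence[bool]) -> List[float]:
    """Return the utility degree K_i = S_i / S_0 of every alternative.

    matrix[i][j]: performance of alternative i on criterion j (must be > 0)
    weights[j]:   criterion weight (assumed to sum to 1)
    benefit[j]:   True if higher is better, False for a cost criterion
    """
    n = len(weights)
    # Row 0: optimal (reference) alternative, best value per criterion.
    optimal = [max(row[j] for row in matrix) if benefit[j]
               else min(row[j] for row in matrix) for j in range(n)]
    extended = [list(optimal)] + [list(row) for row in matrix]
    for j in range(n):
        if not benefit[j]:
            # Cost criterion: reciprocals turn "lower is better" into "higher is better".
            for row in extended:
                row[j] = 1.0 / row[j]
        # Sum normalization over all rows, including the reference row.
        col_sum = sum(row[j] for row in extended)
        for row in extended:
            row[j] /= col_sum
    # Optimality function: weighted sum of normalized values per row.
    s = [sum(w * x for w, x in zip(weights, row)) for row in extended]
    # Utility degree relative to the reference alternative's S_0.
    return [s_i / s[0] for s_i in s[1:]]


PROJECTS = ["P1", "P2", "P3", "P4", "P5"]
# Columns: NPV, ROR (%), PB (years), PR (risk score). INVENTED placeholder values.
DATA = [
    [120, 14, 5.0, 0.30],
    [150, 18, 4.0, 0.25],
    [140, 17, 4.5, 0.20],
    [100, 12, 6.0, 0.40],
    [130, 15, 5.0, 0.30],
]
WEIGHTS = [0.29, 0.34, 0.22, 0.15]    # AHP weights reported in the paper
BENEFIT = [True, True, False, False]  # NPV, ROR: benefit; PB, PR: cost

k = aras(DATA, WEIGHTS, BENEFIT)
ranking = [p for _, p in sorted(zip(k, PROJECTS), reverse=True)]
for p, k_i in zip(PROJECTS, k):
    print(f"{p}: K = {k_i:.3f}")
print("Ranking:", " > ".join(ranking))
```

Only sums, products, and one division per row are involved, which is the computational lightness the authors point to. Because the inputs are placeholders, the printed K values should not be compared with the paper's tables.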

While the paper provides a clear step‑by‑step illustration of the combined AHP‑ARAS workflow, several methodological gaps are evident. The AHP stage lacks a discussion of consistency checks (e.g., CR < 0.1) and does not disclose the underlying pairwise comparison matrix, making the weight results difficult to reproduce. In the ARAS stage, the transformation of cost criteria into a “maximization” form is described but the exact formulas are ambiguously presented, and the impact of scaling or unit differences is not examined. Moreover, the study does not conduct any sensitivity analysis; the robustness of the ranking to variations in the weight vector or to alternative normalization schemes remains untested.
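One simple way to supply the missing robustness check is a one-at-a-time weight perturbation. Each weight is scaled by ±10% and ±20%, the vector is renormalized, ARAS is rerun, and any change in the top-ranked project is recorded. The sketch below assumes the standard ARAS formulation and uses invented placeholder performance data, not the paper's. The perturbation scheme and the helper names `aras_utilities`, `perturb`, and `sensitivity` are illustrative choices; the paper does not specify them.

```python
# Sketch: one-at-a-time weight sensitivity for ARAS. DATA is INVENTED.
from typing import List, Sequence, Tuple


def aras_utilities(matrix: Sequence[Sequence[float]], weights: Sequence[float],
                   benefit: Sequence[bool]) -> List[float]:
    """Standard ARAS utility degrees K_i = S_i / S_0 (all values must be > 0)."""
    columns = list(zip(*matrix))
    optimal = [max(c) if b else min(c) for c, b in zip(columns, benefit)]
    rows = [list(optimal)] + [list(r) for r in matrix]
    for j, is_benefit in enumerate(benefit):
        if not is_benefit:  # cost criterion -> reciprocal
            for r in rows:
                r[j] = 1.0 / r[j]
        total = sum(r[j] for r in rows)
        for r in rows:
            r[j] /= total
    s = [sum(w * x for w, x in zip(weights, r)) for r in rows]
    return [s_i / s[0] for s_i in s[1:]]


def perturb(weights: Sequence[float], j: int, delta: float) -> List[float]:
    """Scale weight j by (1 + delta), then renormalize so the weights sum to 1."""
    w = list(weights)
    w[j] *= 1.0 + delta
    total = sum(w)
    return [x / total for x in w]


def sensitivity(matrix, weights, benefit, names, criteria,
                deltas=(-0.2, -0.1, 0.1, 0.2)) -> Tuple[str, List[Tuple[str, float, str]]]:
    """Return the baseline winner and every (criterion, delta, new winner) flip."""
    def winner(w: Sequence[float]) -> str:
        k = aras_utilities(matrix, w, benefit)
        return names[max(range(len(k)), key=k.__getitem__)]

    base = winner(weights)
    flips: List[Tuple[str, float, str]] = []
    for j, criterion in enumerate(criteria):
        for d in deltas:
            new = winner(perturb(weights, j, d))
            if new != base:
                flips.append((criterion, d, new))
    return base, flips


PROJECTS = ["P1", "P2", "P3", "P4", "P5"]
CRITERIA = ["NPV", "ROR", "PB", "PR"]
DATA = [  # INVENTED placeholder values: NPV, ROR (%), PB (years), PR (risk)
    [120, 14, 5.0, 0.30],
    [150, 18, 4.0, 0.25],
    [140, 17, 4.5, 0.20],
    [100, 12, 6.0, 0.40],
    [130, 15, 5.0, 0.30],
]
WEIGHTS = [0.29, 0.34, 0.22, 0.15]    # AHP weights reported in the paper
BENEFIT = [True, True, False, False]  # NPV, ROR: benefit; PB, PR: cost

base, flips = sensitivity(DATA, WEIGHTS, BENEFIT, PROJECTS, CRITERIA)
print("Baseline winner:", base)
for criterion, d, new in flips:
    print(f"{criterion} weight {d:+.0%}: winner changes to {new}")
if not flips:
    print("Top project unchanged under all tested perturbations")
```

The perturbation sizes and the renormalization step are illustrative. A fuller analysis could sweep each weight over a grid, or sample whole weight vectors at random and report how often each project ranks first.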

The literature review enumerates many MCDM methods (AHP, ANP, DEA, TOPSIS, VIKOR, COPRAS, SAW, LINMAP, etc.) but offers limited justification for selecting ARAS over these alternatives beyond a claim of simplicity. No comparative experiments are performed to substantiate the superiority or suitability of ARAS for the specific project‑selection context.

The case study itself is confined to a single sector and a modest dataset (five alternatives, four criteria). While this suffices to demonstrate the mechanics of the method, it does not prove scalability or applicability to larger, more complex decision problems. The expert panel size (five) is also relatively small, which may affect the reliability of the derived weights.

In conclusion, the paper contributes an operationally simple hybrid AHP‑ARAS framework and illustrates its application to a telecom infrastructure project. However, the lack of methodological rigor in the AHP weighting stage, the absence of robustness checks, and the missing comparative analysis limit the scholarly impact. Future research should address these shortcomings by (1) providing full AHP matrices and consistency diagnostics, (2) performing sensitivity and scenario analyses on weight variations, (3) benchmarking ARAS against other MCDM techniques on larger datasets, and (4) exploring the integration of fuzzy or stochastic elements to handle uncertainty in criteria measurements. Such extensions would strengthen the case for ARAS as a viable alternative in multi‑criteria project selection.
