A statistical analysis of multiple temperature proxies: Are reconstructions of surface temperatures over the last 1000 years reliable?

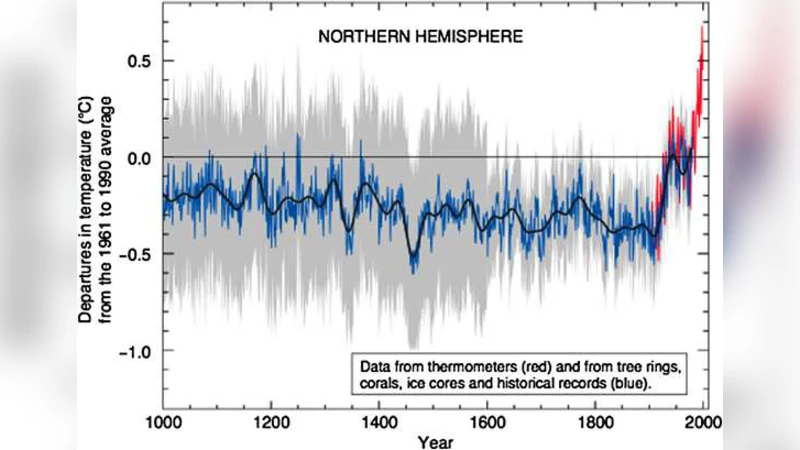

Predicting historic temperatures based on tree rings, ice cores, and other natural proxies is a difficult endeavor. The relationship between proxies and temperature is weak and the number of proxies is far larger than the number of target data points. Furthermore, the data contain complex spatial and temporal dependence structures which are not easily captured with simple models. In this paper, we assess the reliability of such reconstructions and their statistical significance against various null models. We find that the proxies do not predict temperature significantly better than random series generated independently of temperature. Furthermore, various model specifications that perform similarly at predicting temperature produce extremely different historical backcasts. Finally, the proxies seem unable to forecast the high levels of and sharp run-up in temperature in the 1990s either in-sample or from contiguous holdout blocks, thus casting doubt on their ability to predict such phenomena if in fact they occurred several hundred years ago. We propose our own reconstruction of Northern Hemisphere average annual land temperature over the last millennium, assess its reliability, and compare it to those from the climate science literature. Our model provides a similar reconstruction but has much wider standard errors, reflecting the weak signal and large uncertainty encountered in this setting.

💡 Research Summary

The paper by McShane and Wyner (2011) provides a rigorous statistical assessment of temperature reconstructions over the last millennium that rely on a large collection of natural proxies such as tree rings, ice cores, sediments, and corals. The authors begin by highlighting the fundamental difficulty of the problem: the number of proxy series (p ≈ 1200) far exceeds the number of instrumental temperature observations (n ≈ 150 years), creating a classic p ≫ n setting where over‑fitting is a serious concern.

To evaluate whether the proxies contain genuine climate signal, the authors compare the out‑of‑sample predictive performance of several models: (i) simple linear regressions (including ridge and Lasso regularization) that use the full proxy set, (ii) null models generated independently of temperature (white noise, AR(1), ARMA processes), and (iii) more sophisticated time‑series models that also ignore any climate signal. Using contiguous hold‑out blocks of 30–50 years, they compute root‑mean‑square error (RMSE) for each approach. The key finding is that the proxy‑based models do not outperform the null models; their RMSE is statistically indistinguishable from that of pure random series. This suggests that, in this data set, the proxies add little predictive information beyond what series generated independently of temperature already provide.
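The hold‑out comparison described above can be sketched on synthetic data. This is only an illustration under stated assumptions, not the authors' code: the data are simulated, ridge regression stands in for the Lasso so that the example needs only NumPy, and the names `ar1_series`, `ridge_fit_predict`, and `block_holdout_rmse`, as well as every parameter value, are hypothetical.

```python
import numpy as np

def ar1_series(n_steps, n_series, phi, rng):
    """Simulate n_series independent AR(1) columns of length n_steps."""
    x = np.zeros((n_steps, n_series))
    eps = rng.standard_normal((n_steps, n_series))
    for t in range(1, n_steps):
        x[t] = phi * x[t - 1] + eps[t]
    return x

def ridge_fit_predict(X_train, y_train, X_test, lam):
    """Ridge regression with intercept, solved in dual form (cheap when p >> n)."""
    x_mean, y_mean = X_train.mean(axis=0), y_train.mean()
    Xc = X_train - x_mean
    # Dual solution: beta = Xc^T (Xc Xc^T + lam I)^{-1} (y - y_mean)
    gram = Xc @ Xc.T + lam * np.eye(len(y_train))
    beta = Xc.T @ np.linalg.solve(gram, y_train - y_mean)
    return y_mean + (X_test - x_mean) @ beta

def block_holdout_rmse(X, y, block_len, lam):
    """Average RMSE over contiguous, non-overlapping hold-out blocks."""
    n = len(y)
    rmses = []
    for start in range(0, n - block_len + 1, block_len):
        test = np.arange(start, start + block_len)
        train = np.setdiff1d(np.arange(n), test)
        pred = ridge_fit_predict(X[train], y[train], X[test], lam)
        rmses.append(np.sqrt(np.mean((pred - y[test]) ** 2)))
    return float(np.mean(rmses))

rng = np.random.default_rng(0)
n_years, n_proxies = 150, 300  # assumed sizes, chosen only so that p > n

# Synthetic "temperature": slow trend plus autocorrelated noise (assumption).
temperature = 0.005 * np.arange(n_years) + 0.1 * ar1_series(n_years, 1, 0.5, rng)[:, 0]
# Synthetic proxies: a weak temperature signal buried in AR(1) noise (assumption).
proxies = 0.5 * temperature[:, None] + ar1_series(n_years, n_proxies, 0.4, rng)
# Null pseudo-proxies: AR(1) noise generated independently of temperature.
null_proxies = ar1_series(n_years, n_proxies, 0.4, rng)

rmse_proxy = block_holdout_rmse(proxies, temperature, block_len=30, lam=10.0)
rmse_null = block_holdout_rmse(null_proxies, temperature, block_len=30, lam=10.0)
print(f"proxy RMSE: {rmse_proxy:.3f}, null RMSE: {rmse_null:.3f}")
```

Holding out contiguous blocks rather than random years matters because both temperature and proxies are autocorrelated; randomly scattered test years would sit next to training years and make any model look better than it is. A fuller comparison would repeat the null simulation many times and look at the distribution of null RMSE values rather than a single draw.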

The authors then turn to model selection. They show that many different specifications—varying the number of principal components, the regularization strength, or the inclusion of lagged terms—achieve essentially the same cross‑validated RMSE. However, when these equally‑performing models are used to back‑cast temperature over the past 1000 years, the resulting historical trajectories diverge dramatically. Some produce the familiar “hockey‑stick” shape with a sharp rise in the 1990s, while others show a pronounced medieval warm period or a relatively flat pre‑industrial climate. This illustrates that cross‑validation alone is insufficient for selecting a model that yields a reliable historical reconstruction; the validation criterion does not capture the model’s behavior in the unobserved past.

A substantial portion of the paper revisits the controversy surrounding the original Mann‑Bradley‑Hughes (MBH) reconstruction. McShane and Wyner replicate the critique that MBH’s use of “skew‑centering” when computing principal components artificially forces the first component to resemble a hockey‑stick. When the same data are centered in the conventional way, the hockey‑stick disappears and the signal shifts to later components. The authors argue that methodological choices in preprocessing can dramatically alter the shape of the reconstruction, raising concerns about the robustness of earlier claims.
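The effect of the centering choice can be explored with a small simulation. The sketch below assumes that "skew‑centering" means subtracting the mean of only the most recent segment of each series instead of its full‑length mean; it uses trendless AR(1) noise rather than real proxies, and the helper names and the "hockey‑stick index" are hypothetical illustrations, not quantities defined in the paper.

```python
import numpy as np

def ar1_series(n_steps, n_series, phi, rng):
    """Simulate n_series independent AR(1) columns of length n_steps."""
    x = np.zeros((n_steps, n_series))
    eps = rng.standard_normal((n_steps, n_series))
    for t in range(1, n_steps):
        x[t] = phi * x[t - 1] + eps[t]
    return x

def first_pc_scores(X, center_rows):
    """Scores of the first principal component after subtracting
    column means computed over center_rows only."""
    Xc = X - X[center_rows].mean(axis=0)
    u, s, _ = np.linalg.svd(Xc, full_matrices=False)
    return u[:, 0] * s[0]

def hockey_stick_index(series, n_recent):
    """Absolute gap between the recent mean and the earlier mean,
    in units of the earlier standard deviation."""
    early, recent = series[:-n_recent], series[-n_recent:]
    return float(abs(recent.mean() - early.mean()) / early.std())

rng = np.random.default_rng(42)
n_steps, n_series, n_recent = 600, 70, 100  # assumed sizes
noise = ar1_series(n_steps, n_series, phi=0.9, rng=rng)  # no trend, no signal

pc_full = first_pc_scores(noise, slice(None))              # conventional centering
pc_short = first_pc_scores(noise, slice(-n_recent, None))  # recent-segment centering

hsi_full = hockey_stick_index(pc_full, n_recent)
hsi_short = hockey_stick_index(pc_short, n_recent)
print(f"index, full centering:  {hsi_full:.2f}")
print(f"index, short centering: {hsi_short:.2f}")
```

With strongly persistent noise one would expect the short‑centered index to be larger in most draws, because series whose recent mean happens to differ from their long‑run mean receive inflated variance and dominate the first component. The size of the effect depends on the seed, the persistence parameter, and the segment length, so the numbers from a single run should not be over‑interpreted.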

To address these issues, the authors propose a fully Bayesian linear model that treats the relationship between proxies and temperature as a set of unknown regression coefficients with hierarchical priors. They fit the model using Markov chain Monte Carlo, thereby obtaining a posterior distribution for the entire temperature path. The posterior mean reconstruction is similar to the MBH and other published curves, but the associated 95 % credible intervals are substantially wider—often exceeding ±1 °C for centuries before the instrumental era. This wider uncertainty band reflects the weak signal and the high dimensionality of the proxy set, and it suggests that previous reconstructions may have understated their true uncertainty.
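To show how a Bayesian linear model turns coefficient uncertainty into interval width, here is a deliberately simplified sketch. It is not the authors' model: it assumes a known noise variance and an independent Gaussian prior on the coefficients, so the posterior is available in closed form and no MCMC is needed. The data are synthetic, the ten predictors stand in for a reduced proxy set, and all names and parameter values are hypothetical.

```python
import numpy as np

def posterior(X, y, sigma2, tau2):
    """Posterior mean and covariance of beta for y = X beta + noise,
    with noise ~ N(0, sigma2 I) and prior beta ~ N(0, tau2 I)."""
    p = X.shape[1]
    precision = X.T @ X / sigma2 + np.eye(p) / tau2
    cov = np.linalg.inv(precision)
    mean = cov @ X.T @ y / sigma2
    return mean, cov

def predictive_interval(X_new, mean, cov, sigma2, z=1.96):
    """Pointwise (per-year) 95% predictive interval for new rows."""
    center = X_new @ mean
    # Row-wise x^T cov x (coefficient uncertainty) plus observation noise.
    var = np.einsum("ij,jk,ik->i", X_new, cov, X_new) + sigma2
    half = z * np.sqrt(var)
    return center - half, center, center + half

rng = np.random.default_rng(1)
n_cal, n_past, p = 150, 850, 10          # assumed sizes
sigma2, tau2 = 0.04, 1.0                 # assumed noise and prior variances

X_all = rng.standard_normal((n_cal + n_past, p))
beta_true = 0.05 * rng.standard_normal(p)  # weak signal by construction
X_past, X_cal = X_all[:n_past], X_all[n_past:]
y_cal = X_cal @ beta_true + np.sqrt(sigma2) * rng.standard_normal(n_cal)

beta_mean, beta_cov = posterior(X_cal, y_cal, sigma2, tau2)
lower, center, upper = predictive_interval(X_past, beta_mean, beta_cov, sigma2)
print(f"mean interval width over the backcast: {np.mean(upper - lower):.3f}")
```

The intervals here are pointwise, one per year, whereas the summary describes a posterior over the entire temperature path; sampling whole paths would additionally require drawing coefficient vectors from the posterior and modelling temporal dependence in the errors.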

Finally, the paper examines the proxies’ ability to capture the rapid warming of the 1990s. Even when the model is trained on the instrumental period and tested on contiguous hold‑out blocks, it fails to reproduce the steep temperature increase observed in the data. This failure implies that, if a similar rapid warming had occurred several centuries ago, the existing proxy network would likely have missed it, casting doubt on the capacity of proxy‑based reconstructions to resolve abrupt climate events.

In sum, the study reaches six major conclusions: (1) proxies exhibit only a weak correlation with temperature; (2) in a p ≫ n context, over‑fitting is a real danger and simple null models perform as well as sophisticated proxy‑based models; (3) cross‑validation alone cannot guarantee a historically plausible reconstruction; (4) the choice of preprocessing (especially principal‑component centering) materially influences the shape of the reconstruction; (5) a Bayesian framework yields reconstructions with realistic, broader uncertainty bands; and (6) the proxy record does not reliably capture the sharp warming of the 1990s, suggesting limited ability to detect similar past events. These findings call for more cautious interpretation of millennium‑scale temperature reconstructions and for the development of statistical methods that explicitly account for high dimensionality, temporal dependence, and model uncertainty.
