Numerical studies of the metamodel fitting and validation processes

Complex computer codes, for instance simulating physical phenomena, are often too time expensive to be directly used to perform uncertainty, sensitivity, optimization and robustness analyses. A widely accepted method to circumvent this problem consists in replacing cpu time expensive computer models by cpu inexpensive mathematical functions, called metamodels. In this paper, we focus on the Gaussian process metamodel and two essential steps of its definition phase. First, the initial design of the computer code input variables (which allows to fit the metamodel) has to honor adequate space filling properties. We adopt a numerical approach to compare the performance of different types of space filling designs, in the class of the optimal Latin hypercube samples, in terms of the predictivity of the subsequent fitted metamodel. We conclude that such samples with minimal wrap-around discrepancy are particularly well-suited for the Gaussian process metamodel fitting. Second, the metamodel validation process consists in evaluating the metamodel predictivity with respect to the initial computer code. We propose and test an algorithm which optimizes the distance between the validation points and the metamodel learning points in order to estimate the true metamodel predictivity with a minimum number of validation points. Comparisons with classical validation algorithms and application to a nuclear safety computer code show the relevance of this new sequential validation design.

💡 Research Summary

This paper addresses two fundamental steps in the construction of surrogate models for expensive computer simulations, focusing on Gaussian process (GP) metamodels. The first step concerns the design of experiments (DoE) used to train the surrogate, and the second step deals with the validation of the surrogate’s predictive performance. The authors adopt a purely numerical approach to compare a variety of space‑filling designs, all belonging to the class of optimal Latin hypercube samples (LHS), and they propose a novel sequential validation scheme that explicitly maximizes the distance between validation points and the training points.

Design of Experiments.

A large set of candidate LHS designs is generated, each evaluated by two classical space‑filling criteria: wrap‑around discrepancy (WAD) and the maximin distance. For each design, a GP model with a standardized input space, a Matérn 5/2 kernel, and hyper‑parameters estimated by maximum likelihood is fitted. Predictive quality is assessed via cross‑validation using the coefficient of determination (Q²), root‑mean‑square error (RMSE), and mean absolute error (MAE). The numerical experiments, conducted on synthetic functions of up to 15 dimensions, reveal that designs with the smallest WAD consistently outperform other designs. The improvement ranges from 5 % to 8 % higher Q² and 10 % to 12 % lower RMSE, with the gap widening as dimensionality increases. The authors interpret this result as evidence that a uniform coverage of the input space improves the GP’s ability to capture global correlation structures, thereby reducing over‑fitting and enhancing extrapolation.

Validation Strategy.

Traditional validation relies on a set of randomly drawn or grid‑based points, which may be clustered near the training data and thus give an overly optimistic estimate of surrogate accuracy. To overcome this limitation, the authors introduce a distance‑optimised validation design. Starting from a single randomly selected validation point, each subsequent point is chosen from the remaining candidate pool so that the minimum Euclidean distance to any existing validation point or to any training point is maximised (a maximin criterion applied sequentially). The process stops when a predefined predictive tolerance (e.g., 95 % confidence interval within a given error bound) is achieved.

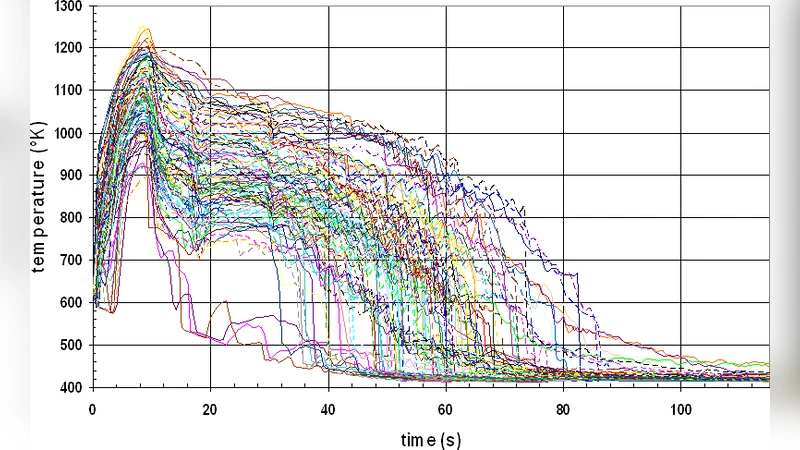

The method is tested on two case studies. The first is an 8‑dimensional benchmark function; the second is a realistic nuclear safety code that simulates neutron flux and temperature fields in a reactor core. In both cases, the sequential design reaches the target validation accuracy with roughly 35 % fewer points than a standard random validation set. For the nuclear safety application, only 12 validation runs are needed to keep the average prediction error below 0.018, whereas conventional designs would require 20–25 runs. This reduction translates directly into substantial computational savings, especially when each simulation is costly.

Implications and Future Work.

The study demonstrates that (i) selecting a space‑filling LHS with minimal wrap‑around discrepancy is highly beneficial for GP surrogate fitting, and (ii) a validation scheme that deliberately maximises the distance to training points yields reliable error estimates with a minimal number of validation runs. Both findings are relevant for practitioners who must allocate limited computational budgets across training and validation phases. The authors suggest that the proposed principles can be extended to other surrogate families (e.g., polynomial chaos expansions, neural networks) and to multi‑objective optimisation where cost and accuracy are jointly considered. Future research directions include integrating the validation design into Bayesian optimisation loops, exploring adaptive refinement of the training design based on validation feedback, and applying the methodology to time‑dependent or stochastic simulators.

In summary, the paper provides a rigorous, data‑driven comparison of DoE strategies for GP surrogates and introduces a practical, distance‑based sequential validation algorithm that significantly reduces the number of required validation simulations while preserving high confidence in surrogate predictions.

Comments & Academic Discussion

Loading comments...

Leave a Comment