Simultaneous model-based clustering and visualization in the Fisher discriminative subspace

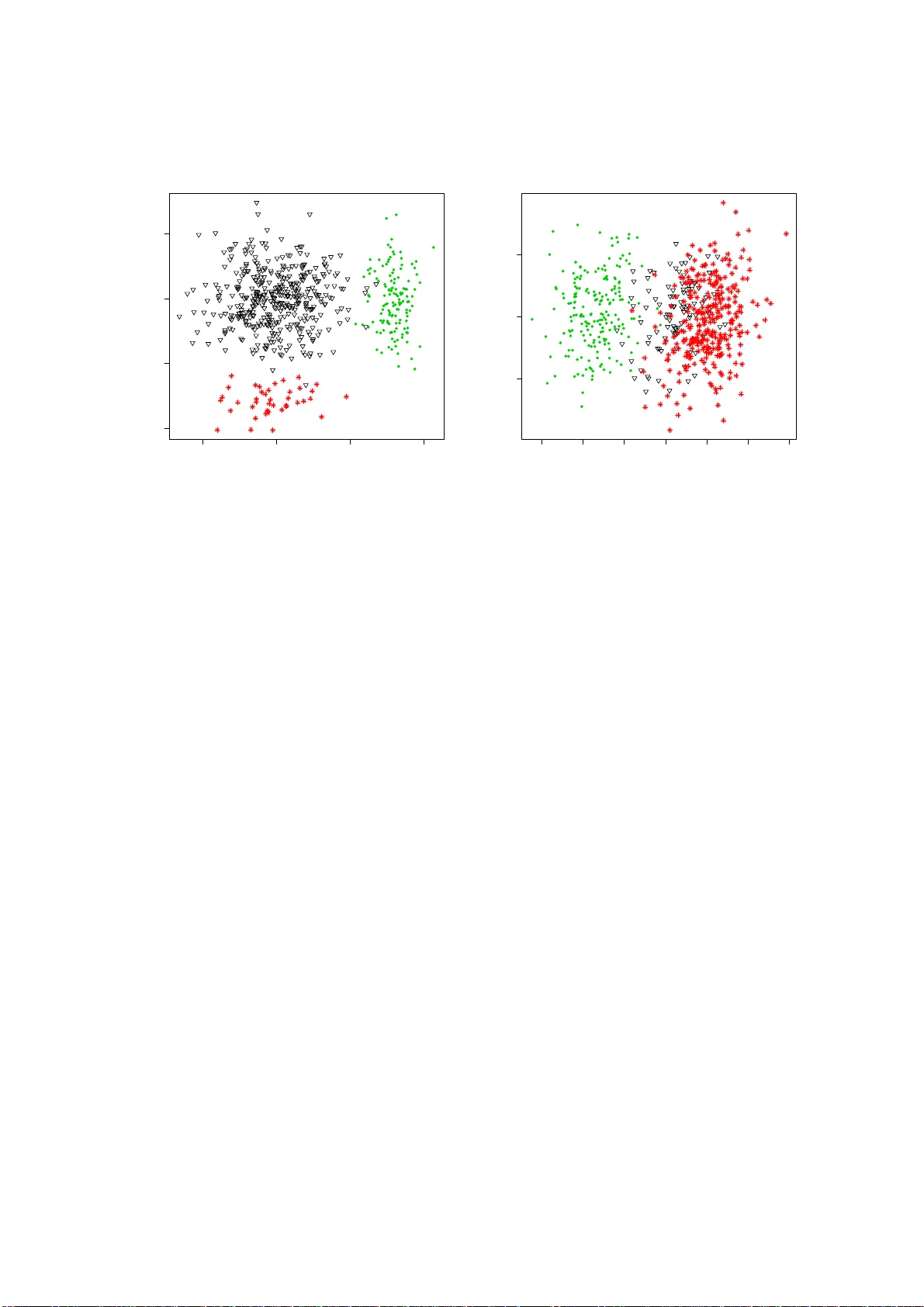

Clustering in high-dimensional spaces is a recurrent problem in many scientific domains, yet it remains difficult in terms of both clustering accuracy and the interpretability of the results. This paper presents a discriminative latent m…

Authors: Charles Bouveyron, Camille Brunet