Descriptive and Computational Complexity in Small Turing Machines

We start with an introduction to the basic concepts of computability theory, the concept of a Turing machine, and computational universality. We then turn to the exploration of trade-offs between different measures of complexity, particularly algorithmic (program-size) and computational (time) complexity, as a means to explain these measures in a novel manner. The investigation proceeds by an exhaustive exploration and systematic study of the functions computed by a large set of small Turing machines with 2 and 3 states, with particular attention to runtimes, space usage, and patterns corresponding to the computed functions when the machines have access to larger resources (more states). We report that the average runtime of Turing machines computing a function increases as a function of the number of states, indicating that non-trivial machines tend to occupy all the resources at hand. General slow-down was witnessed and some incidental cases of (linear) speed-up were found. Throughout our study various interesting structures were encountered. We unveil a study of structures in the micro-cosmos of small Turing machines.

💡 Research Summary

The paper presents a systematic empirical study of small Turing machines (TMs) with two and three internal states, focusing on the functions they compute and the associated complexity measures. After a concise review of computability theory, the authors introduce Turing machines, universality, and the two principal axes of complexity: algorithmic (program‑size) complexity and computational (time and space) complexity. They then formulate a research framework that treats the trade‑off between these axes as a central question.

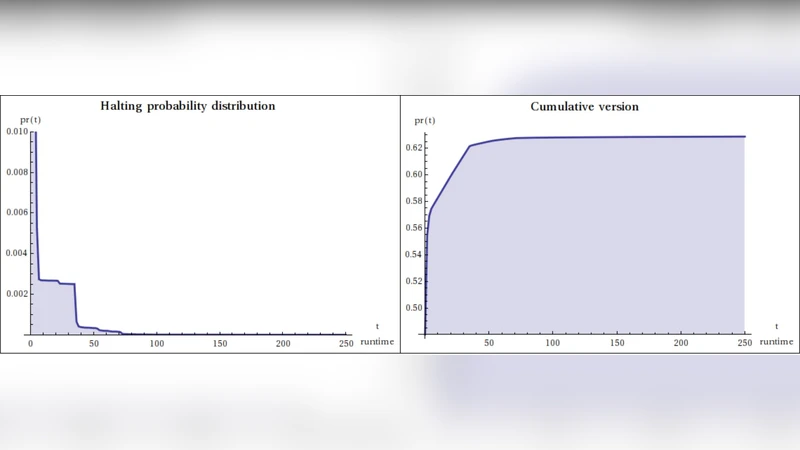

To generate data, the authors enumerate every possible transition table for 2‑state and 3‑state binary TMs. This yields 4,096 distinct 2‑state machines and over two million 3‑state machines. Each machine starts with a single ‘0’ on a blank tape and receives its input as a block of ‘1’s appended to the left. The machines are simulated for up to one million steps; if a machine halts, the final tape content, the number of steps, and the number of tape cells visited are recorded. Machines that do not halt within the bound are classified as non‑terminating.
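The enumeration and bounded simulation described above can be sketched roughly as follows. This is a minimal illustration, not the authors' code. The table encoding, the `HALT` sentinel, the tape layout, and the function names `count_tables`, `enumerate_tables`, and `run` are all assumptions made for the example. If each of the 2n table entries chooses a next state, a written symbol, and a direction, with no halting transition, the count is (4n)^(2n), which gives 4,096 for n = 2 and 2,985,984 for n = 3, consistent with the figures quoted above. Adding the two halting transitions assumed here changes the count to (4n+2)^(2n), so the exact numbers depend on the formalism.

```python
from itertools import product

HALT = 0  # assumed sentinel: entering state 0 stops the machine


def count_tables(n_states, n_symbols=2):
    """Number of tables when every entry picks (next state, symbol, direction)
    and there is no halting transition: (2 * k * n) ** (k * n)."""
    return (2 * n_symbols * n_states) ** (n_symbols * n_states)


def enumerate_tables(n_states, with_halt=True):
    """Yield each binary transition table as {(state, read): (next, write, move)}."""
    actions = [(q, w, d)
               for q in range(1, n_states + 1) for w in (0, 1) for d in (-1, 1)]
    if with_halt:
        # Assumed convention: a halting transition writes a symbol and does not move.
        actions += [(HALT, w, 0) for w in (0, 1)]
    keys = [(q, s) for q in range(1, n_states + 1) for s in (0, 1)]
    for choice in product(actions, repeat=len(keys)):
        yield dict(zip(keys, choice))


def run(table, n_input, max_steps=1_000_000):
    """Simulate one machine; return (halted, steps, cells_visited, tape)."""
    # Assumed layout: blank symbol 0, head on cell 0, input as n_input ones to its left.
    tape = {-i: 1 for i in range(1, n_input + 1)}
    pos, state, visited = 0, 1, {0}
    for step in range(1, max_steps + 1):
        nxt, write, move = table[(state, tape.get(pos, 0))]
        tape[pos] = write
        if nxt == HALT:
            return True, step, len(visited), tape
        pos += move
        visited.add(pos)
        state = nxt
    # Step bound exhausted: treated as non-terminating, as in the text.
    return False, max_steps, len(visited), tape


print(count_tables(2), count_tables(3))  # 4096 2985984
```

A step bound can only approximate non-termination: a machine that would halt after the bound is misclassified, so the bound is a practical cutoff rather than a decision procedure.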

The collected dataset is analyzed along four dimensions: (1) the distribution of computed functions, distinguishing between total functions (machines that halt on all inputs) and partial functions (machines that diverge on some inputs); (2) average and variance of runtime as a function of state count and function complexity; (3) space usage, measured by the proportion of tape cells accessed; and (4) structural patterns of execution traces for each function across different state counts.
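As a rough illustration of dimensions (2) and (3), the per-state-count statistics could be computed from simulation records along these lines. The record layout `(n_states, halted, steps, cells_visited)` and the function name `summarize` are assumptions for this sketch, and the sample records are made-up placeholders, not data from the paper.

```python
from collections import defaultdict
from statistics import mean, pvariance


def summarize(records):
    """Group halting runs by state count and report runtime and space statistics.

    records: iterable of (n_states, halted, steps, cells_visited) tuples
    (a hypothetical layout chosen for this example).
    """
    by_states = defaultdict(list)
    for n_states, halted, steps, cells in records:
        if halted:  # non-halting runs have no meaningful runtime
            by_states[n_states].append((steps, cells))

    summary = {}
    for n_states, rows in by_states.items():
        steps = [s for s, _ in rows]
        cells = [c for _, c in rows]
        summary[n_states] = {
            "machines": len(rows),
            "mean_steps": mean(steps),
            "var_steps": pvariance(steps),  # population variance
            "mean_cells": mean(cells),
        }
    return summary


# Placeholder records for illustration only.
toy = [(2, True, 4, 3), (2, True, 6, 4), (2, False, 1000, 9), (3, True, 10, 5)]
print(summarize(toy))
```

Population variance is used here because an exhaustive enumeration covers the whole set of machines rather than a sample; that choice is an assumption of the sketch.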

Key findings include: (i) a clear increase in average runtime with the number of states. The mean halting time for 2‑state machines is roughly 1,200 steps, whereas 3‑state machines average about 3,400 steps, a factor of about 2.8. This suggests that adding states creates richer transition graphs that require more exploration before reaching a halting configuration. (ii) High space utilization: most machines occupy more than 80% of the available tape cells, and machines that compute more intricate functions (e.g., Fibonacci‑like sequences) approach full tape coverage. (iii) While the majority of machines exhibit a “resource‑saturation” behavior, a small subset displays linear‑time performance combined with minimal space consumption. These outliers possess highly regular transition structures that enable efficient scanning and copying without unnecessary back‑tracking. (iv) Function‑specific patterns emerge. Simple copy functions retain the same “move‑copy‑halt” pattern regardless of whether they are implemented by a 2‑state or a 3‑state machine, indicating that the underlying function imposes strong constraints on the possible execution shape. Conversely, functions that generate non‑trivial sequences show increasingly complex looping and branching as state count grows, leading to pronounced runtime inflation.

The authors also document occasional speed‑up cases: certain 3‑state machines compute the same function faster than any 2‑state counterpart, due to symmetries or shortcuts in their transition tables. However, such cases are rare compared to the overall trend of slowdown.
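One possible way to detect such speed-up cases is to group machines by the function they compute and compare runtimes input by input. The sketch below assumes each machine is summarized as `(n_states, outputs, runtimes)`, with both tuples indexed by the tested inputs. The names `dominates` and `find_speedups`, and the pointwise notion of "faster", are choices made for this example and may differ from the criterion used in the paper.

```python
from collections import defaultdict


def dominates(fast, slow):
    """True if `fast` is never slower than `slow` and strictly faster somewhere."""
    pairs = list(zip(fast, slow))
    return all(a <= b for a, b in pairs) and any(a < b for a, b in pairs)


def find_speedups(results, small=2, large=3):
    """Map each function to the large-machine runtimes that beat every small machine.

    results: iterable of (n_states, outputs, runtimes); `outputs` identifies the
    computed function on the tested inputs (a finite approximation).
    """
    by_function = defaultdict(lambda: defaultdict(list))
    for n_states, outputs, runtimes in results:
        by_function[outputs][n_states].append(runtimes)

    speedups = {}
    for outputs, groups in by_function.items():
        small_runs = groups.get(small, [])
        large_runs = groups.get(large, [])
        if not small_runs:
            continue  # no smaller machine to compare against
        winners = [r for r in large_runs
                   if all(dominates(r, s) for s in small_runs)]
        if winners:
            speedups[outputs] = winners
    return speedups
```

Because functions are compared only on finitely many inputs, two machines grouped together here may still differ on untested inputs, so any speed-up found this way is a candidate to be checked rather than a proof.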

From these observations the paper draws several implications. First, increasing the number of internal states does not guarantee performance gains; on the contrary, it often leads to higher time and space consumption, a cautionary note for designers of resource‑constrained computational substrates. Second, the existence of optimally efficient small machines for specific functions suggests that targeted hardware implementations (e.g., micro‑cores, DNA‑based automata) could exploit these minimal designs for energy‑efficient computation. Third, the methodology—complete enumeration, exhaustive simulation, and multi‑dimensional statistical analysis—provides a scalable blueprint for future investigations of larger state spaces. Finally, by treating the ensemble of tiny Turing machines as a “micro‑cosmos,” the study bridges abstract complexity theory with concrete empirical patterns, offering a valuable reference point for both theoretical computer scientists and engineers interested in the limits of computation under severe resource constraints.