When is social computation better than the sum of its parts?

Social computation, whether in the form of searches performed by swarms of agents or collective predictions of markets, often supplies remarkably good solutions to complex problems. In many examples, individuals trying to solve a problem locally can …

Authors: Vadas Gintautas, Aric Hagberg, Luis M. A. Bettencourt

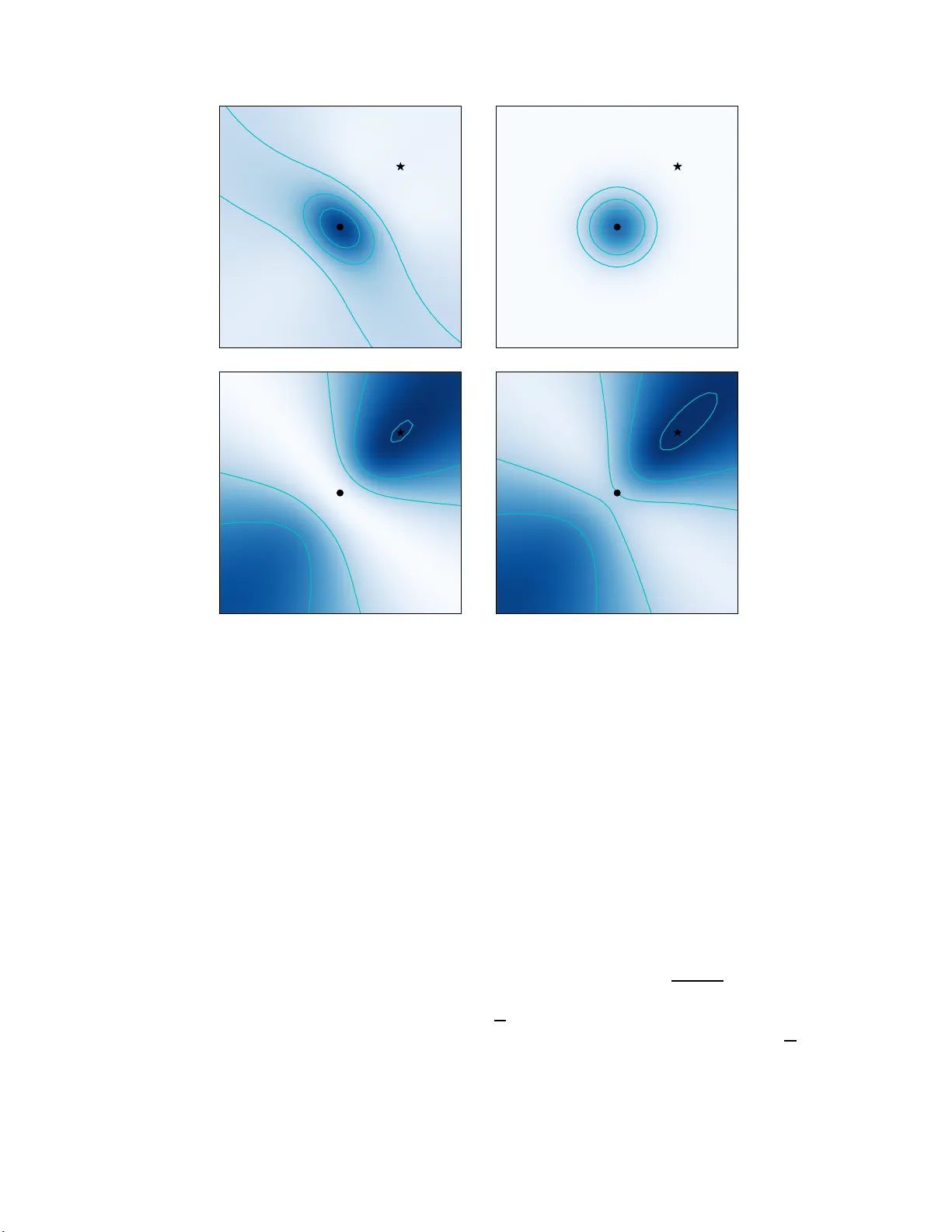

Vadas Gintautas,∗ Aric Hagberg,† and Luís M. A. Bettencourt‡
Center for Nonlinear Studies, and Applied Mathematics and Plasma Physics, Theoretical Division, Los Alamos National Laboratory, Los Alamos NM 87545
(Dated: November 20, 2018)

Social computation, whether in the form of searches performed by swarms of agents or collective predictions of markets, often supplies remarkably good solutions to complex problems. In many examples, individuals trying to solve a problem locally can aggregate their information and work together to arrive at a superior global solution. This suggests that there may be general principles of information aggregation and coordination that can transcend particular applications. Here we show that the general structure of this problem can be cast in terms of information theory and derive mathematical conditions that lead to optimal multi-agent searches. Specifically, we illustrate the problem in terms of local search algorithms for autonomous agents looking for the spatial location of a stochastic source. We explore the types of search problems, defined in terms of the statistical properties of the source and the nature of measurements at each agent, for which coordination among multiple searchers yields an advantage beyond that gained by having the same number of independent searchers. We show that effective coordination corresponds to synergy and that ineffective coordination corresponds to independence as defined using information theory. We classify explicit types of sources in terms of their potential for synergy. We show that sources that emit uncorrelated signals provide no opportunity for synergetic coordination while sources that emit signals that are correlated in some way do allow for strong synergy between searchers.
These general considerations are crucial for designing optimal algorithms for particular search problems in real world settings.

INTRODUCTION

The ability of agents to share information and to coordinate actions and decisions can provide significant practical advantages in real-world searches. Whether the target is a person trapped by an avalanche or a hidden cache of nuclear material, being able to deploy multiple autonomous searchers can be more advantageous and safer than sending human operators. For example, small autonomous, possibly expendable robots could be utilized in harsh winter climates or on the battlefield.

In some problems, e.g. locating a cellular telephone via the signal strength at several towers, there is often a simple geometrical search strategy, such as triangulation, which works effectively. However, in search problems where the signal is stochastic or no geometrical solution is known, e.g. searching for a weak scent source in a turbulent medium, new methods need to be developed. This is especially true when designing autonomous and self-repairing algorithms for robotic agents [1]. Information theoretical methods provide a promising approach to develop objective functions and search algorithms to fill this gap. In a recent paper, Vergassola et al. demonstrated that *infotaxis*, which is motion based on expected information gain, can be a more effective search strategy when the source signal is weak than conventional methods such as moving along the gradient of a chemical concentration [2]. The infotaxis algorithm combines the two competing goals of exploration of possible search moves and exploitation of received signals to guide the searcher in the direction with the highest probability of finding the source [3].
To improve the efficiency of search by using more than one searcher requires determining under what circumstances a collective (parallel) search is better (faster) than the independent combination of the individual searches. Much heuristic work, anecdotally inspired by strategies in social insects and flocking birds [4, 5], has suggested that collective action should be advantageous in searches in real world complex problems, such as foraging, spatial mapping, and navigation. However, all approaches to date rely on simple heuristics that fail to make explicit the general informational advantages of such strategies.

The simplest extension of infotaxis to collective searches is to have multiple independent (uncoordinated) searchers that share information; this corresponds in general to a linear increase in performance with the number of searchers. However, given some general knowledge about the structure of the search, substantial increases in the search performance of a collective of agents can be achieved, often leading to exponential reduction in the search effort, in terms of time, energy or number of steps [6–8]. In this work we explore how the concept of information synergy can be leveraged to improve infotaxis of multiple coordinated searchers. Synergy corresponds to the general situation when measuring two or more variables together with respect to another (the target's signal) results in a greater information gain than the sum of that from each variable separately [9, 10]. We identify the types of spatial search problems for which coordination among multiple searchers is effective (synergetic), as well as when it is ineffective, and corresponds to independence. We find that classes of statistical sources, such as those that emit uncorrelated signals (e.g. Poisson processes), provide no opportunity for synergetic coordination.
On the other hand, sources that emit particles with spatial, temporal, or categorical correlations do allow for strong synergy between searchers that can be exploited via coordinated motion. These considerations divide collective search problems into different general classes and are crucial for designing effective algorithms for particular applications.

INFORMATION THEORY APPROACH TO STOCHASTIC SEARCH

Effective and robust search methods for the location of stochastic sources must balance the competing strategies of exploration and exploitation [3]. On the one hand, searchers must exploit measured cues to guide their optimal next move. On the other hand, because this information is statistical, more measurements typically need to be made, guided by different search scenarios. Information theory approaches to search achieve this balance by utilizing movement strategies that increase the expected information gain, which in turn is a functional of the many possible source locations. In this section we define the necessary formalism and use it to set up the general structure of the stochastic search problem.

Synergy and Redundancy

First we define the concepts of information, synergy and redundancy explicitly. Consider the stochastic variables $X_i$, $i = 1 \ldots n$. Each variable $X_i$ can take on specific states, denoted by the corresponding lowercase letter, that is, $X$ can take on a set of states $\{x\}$. For a single variable $X$ the Shannon entropy (henceforth "entropy") is $S(X) = -\sum_x P(x) \log_2 P(x)$, where $P(x)$ is the probability that the variable $X$ takes on the value $x$ [11]. The entropy is a measure of uncertainty about the state of $X$, therefore entropy can only decrease or remain unchanged as more variables are measured.
The conditional entropy of a variable $X_1$ given a second variable $X_2$ is $S(X_1|X_2) = -\sum_{x_1,x_2} P(x_1,x_2) \log_2\left(P(x_1,x_2)/P(x_2)\right) \le S(X_1)$. The mutual information between two variables, which plays an important role in search strategy, is defined as the change in entropy when a variable is measured, $I(X_1,X_2) = S(X_1) - S(X_1|X_2) \ge 0$. These definitions can be directly extended to multiple variables. For 3 variables, we make the following definition [12]: $R(X_1,X_2,X_3) \equiv I(X_1,X_2) - I(\{X_1,X_2\}|X_3)$. This quantity measures the degree of "overlap" in the information contained in variables $X_1$ and $X_2$ with respect to $X_3$. If $R(X_1,X_2,X_3) > 0$, there is overlap and $X_1$ and $X_2$ are said to be redundant with respect to $X_3$. If $R(X_1,X_2,X_3) < 0$, more information is available when these variables are considered together than when considered separately. In this case $X_1$ and $X_2$ are said to be synergetic with respect to $X_3$. If $R(X_1,X_2,X_3) = 0$, $X_1$ and $X_2$ are independent [9, 10].

Two-dimensional spatial search

We now formulate the two-dimensional stochastic search problem. We consider, for simplicity, the case of two searchers seeking to find a stochastic source located in a finite two-dimensional plane. This is a generalization of the single searcher formalism presented in Ref. [2]. At any time step, the searchers have positions $\{r_i\}$, $i = 1, 2$, and observe some number of particles $\{h_i\}$ from the source. The searchers do not get information about the trajectories or speed of the particles; they only get information if a particle was observed or not. Therefore simple geometrical methods such as triangulation are not possible. Let the variable $R_0$ correspond to all the possible locations of the source $r_0$.
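The quantities defined in the Synergy and Redundancy subsection can be illustrated with a small numerical sketch. The code below is not from the paper: the function names and the two toy distributions (an XOR relation and a copied bit) are assumptions chosen for illustration, and the mutual information is computed through the equivalent identity $I(X_1,X_2) = S(X_1) + S(X_2) - S(X_1,X_2)$.

```python
import numpy as np

def entropy(p):
    """Shannon entropy in bits of a probability array of any shape."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]                      # 0 * log 0 is taken as 0
    return float(-(p * np.log2(p)).sum())

def mutual_information(p12):
    """I(X1, X2) = S(X1) + S(X2) - S(X1, X2) for a joint array [x1, x2]."""
    return entropy(p12.sum(axis=1)) + entropy(p12.sum(axis=0)) - entropy(p12)

def conditional_mutual_information(p123):
    """I(X1, X2 | X3) for a joint array indexed [x1, x2, x3]."""
    s13 = entropy(p123.sum(axis=1))       # S(X1, X3)
    s23 = entropy(p123.sum(axis=0))       # S(X2, X3)
    s3 = entropy(p123.sum(axis=(0, 1)))   # S(X3)
    return s13 + s23 - s3 - entropy(p123)

def redundancy(p123):
    """R(X1, X2, X3) = I(X1, X2) - I(X1, X2 | X3)."""
    return mutual_information(p123.sum(axis=2)) - conditional_mutual_information(p123)

# Toy case 1 (assumed): X1, X2 are fair independent bits and X3 = X1 XOR X2.
xor = np.zeros((2, 2, 2))
for a in (0, 1):
    for b in (0, 1):
        xor[a, b, a ^ b] = 0.25

# Toy case 2 (assumed): all three variables are copies of one fair bit.
copy = np.zeros((2, 2, 2))
copy[0, 0, 0] = copy[1, 1, 1] = 0.5

print("R for XOR :", redundancy(xor))    # negative: synergetic
print("R for copy:", redundancy(copy))   # positive: redundant
```

In the XOR case neither bit alone says anything about the other, but together with $X_3$ they are fully determined, which is the sign convention for synergy used in the text ($R < 0$).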
The searchers compute and share a probability distribution $P^{(t)}(r_0)$ for the source at each time index $t$. Initially the probability for the source is assumed to be uniform. After each measurement $\{h_i, r_i\}$, the searchers update their estimated probability distribution of source positions via Bayesian inference. First the conditional probability $P^{(t+1)}(r_0|\{h_i,r_i\}) \equiv P^{(t)}(r_0)\,P(\{h_i,r_i\}|r_0)/A$ is calculated, where $A$ is a normalization over all possible source locations as required by Bayesian inference. This is then assimilated via Bayesian update so that $P^{(t+1)}(r_0) \equiv P^{(t+1)}(r_0|\{h_i,r_i\})$.

If the searchers do not find the source at their present locations they choose the next local move using an infotaxis step to maximize the expected information gain. To describe the infotaxis step we first need some definitions. The entropy of the distribution $P^{(t)}(r_0)$ at time $t$ is defined as $S^{(t)}(R_0) \equiv -\sum_{r_0} P^{(t)}(r_0) \log_2 P^{(t)}(r_0)$. In terms of a specific measurement $\{h_i,r_i\}$ the entropy is (before the Bayesian update) $S^{(t)}_{\{h_i,r_i\}}(R_0) \equiv -\sum_{r_0} P^{(t)}(r_0|\{h_i,r_i\}) \log_2 P^{(t)}(r_0|\{h_i,r_i\})$. We define the difference between the entropy at time $t$ and the entropy at time $t+1$ after a measurement $\{h_i,r_i\}$ to be $\Delta S^{(t+1)}_{\{h_i,r_i\}} \equiv S^{(t+1)}_{\{h_i,r_i\}}(R_0) - S^{(t)}(R_0)$. Initially the entropy is at its maximum for a uniform prior: $S^{(0)}(R_0) = \log_2 N_s$, where $N_s$ is the number of possible locations for the source in a discrete space. For each possible joint move $\{r_i\}$, the change in expected entropy $\Delta S$ is computed and the move with the minimum (most negative) $\Delta S$ is executed.
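The Bayesian update and the entropy change for one measurement can be sketched on a small grid. This is a minimal illustration under stated assumptions, not the authors' implementation: the grid size, the searcher positions, the constant `b`, and in particular the likelihood (independent binary detections with a hit probability that decays as a Gaussian of distance) are all hypothetical choices made only so the example runs.

```python
import numpy as np

def entropy_bits(p):
    """Shannon entropy in bits; zero-probability cells contribute nothing."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]
    return float(-(p * np.log2(p)).sum())

def bayes_update(prior, likelihood):
    """Posterior over source cells: prior * likelihood / A, A = sum over r0."""
    unnormalized = prior * likelihood
    return unnormalized / unnormalized.sum()

def detection_probability(searcher_xy, grid_x, grid_y, b=0.5):
    """Hypothetical hit probability b * exp(-|r_i - r0|^2) for every cell r0."""
    d2 = (grid_x - searcher_xy[0]) ** 2 + (grid_y - searcher_xy[1]) ** 2
    return b * np.exp(-d2)

def likelihood(hits, positions, grid_x, grid_y):
    """Assumed P({h_i, r_i} | r0): independent binary detections given r0."""
    like = np.ones_like(grid_x)
    for h, xy in zip(hits, positions):
        pi = detection_probability(xy, grid_x, grid_y)
        like = like * (pi if h == 1 else 1.0 - pi)
    return like

n_side = 21                                   # N_s = n_side**2 source cells
axis = np.linspace(-0.5, 0.5, n_side)
grid_x, grid_y = np.meshgrid(axis, axis, indexing="ij")

prior = np.full((n_side, n_side), 1.0 / n_side**2)   # uniform prior
positions = [(-0.2, 0.1), (0.3, -0.3)]               # two searchers (assumed)
hits = (1, 0)                                        # searcher 1 saw a particle

posterior = bayes_update(prior, likelihood(hits, positions, grid_x, grid_y))
s_before = entropy_bits(prior)        # equals log2(N_s) for the uniform prior
s_after = entropy_bits(posterior)
delta_s = s_after - s_before          # entropy change for this measurement
print(s_before, s_after, delta_s)
```

An infotaxis step would repeat this calculation for every candidate joint move and every possible measurement, then pick the move with the most negative expected change.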
The expected entropy is computed by considering the reduction in entropy for all of the possible joint moves

$$\Delta S = -\sum_i P^{(t)}(R_0 = r_i)\, S^{(t)}(R_0) + \left[1 - \sum_i P^{(t)}(R_0 = r_i)\right] \left[\sum_{h_1,h_2} \Delta S^{(t+1)}_{\{h_i,r_i\}} \times \sum_{r_0} P^{(t)}(r_0)\, P^{(t+1)}(\{h_i,r_i\}|r_0)\right]. \tag{1}$$

The first term in Eq. (1) corresponds to one of the searchers finding the source in the next time step (the final entropy will be $S = 0$ so $\Delta S = -S$). The second term considers the reduction in entropy for all possible measurements at the proposed location, weighted by the probability of each of those measurements. The probability of the searchers obtaining the measurement $\{h_i\}$ at the location $\{r_i\}$ is given by the trace of the probability $P^{(t+1)}(\{h_i,r_i\}|r_0)$ over all possible source locations.

CORRELATED STOCHASTIC SOURCE AND SYNERGY OF SEARCHERS

The expected entropy reduction $\Delta S$ is calculated for joint moves of the searchers, that is, all possible combinations of individual moves. Compared with multiple independent searchers this calculation incurs some extra computational cost. Thus, when designing a search algorithm, it is important to know whether an advantage (synergy) can be gained by considering joint moves instead of individual moves. Since the search is based on optimizing the maximum information gain we need to explore if joint moves are synergetic or redundant. In this section we will show how correlations in the source affect the synergy and redundancy of the search.

In the following we will assume there are no radial correlations between particles emitted from the source and that the probability of detecting particles decays with distance to the source. For each particle emitted from the source, the searcher $i$ has an associated actual probability $\pi_i(r_0)$ of catching the particle.
The probability $\pi_i(r_0)$ is defined in terms of a possible source location $r_0$ and the location $r_i$ of searcher $i$: $\pi_i(r_0) = B \exp(-|\vec{r}_i - \vec{r}_0|^2)$, where $\{r_i\}$ is the set of all the searcher positions and $B$ is a normalization constant. Note that this is just the radial component of the probability; if there are angular correlations these are treated separately. We may now write $R$, as a function of the variables $R_0$, $H_1$, and $H_2$, in terms of the conditional probabilities:

$$R(H_1,H_2,R_0) = \sum_{h_1,h_2,r_0} P(r_0,h_1,h_2) \log_2 \left[\frac{P(h_1|r_0)\,P(h_2|r_0)}{P(h_1,h_2|r_0)}\,\frac{P(h_2|h_1)}{P(h_2)}\right]. \tag{2}$$

It is sufficient for $R \neq 0$ that the argument of the logarithm differs from 1. This can be achieved even if measurements are conditionally independent (redundancy), mutually independent (synergy), or when neither of these conditions apply.

Uncorrelated signals: Poisson source

First, consider a source which emits particles according to a Poisson process with known mean $\lambda_0$ so emitted particles are completely uncorrelated spatially and temporally. If searcher 1 is able to get a particle that has already been detected by searcher 2, it is clear that the searchers are completely independent and there is no chance of synergy. It may appear at first that implementing a simple exclusion where two searchers cannot get the same particle would be enough to foster cooperation between searchers. We will instead show that it is the Poisson nature of the source that makes synergy impossible, even under mutual exclusion of the measurements. The probability of the measurement $\{h_i\}$ is given by

$$P(\{h_i,r_i\}|r_0) = \sum_{h_s = \sum_i h_i}^{\infty} P_0(h_s, \lambda_0)\, M(\{\pi_i(r_0)\}, \{h_i\}, h_s). \tag{3}$$

The sum is over all possible values of $h_s$, weighted by the Poisson probability mass function with the known mean $\lambda_0$.
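As a numerical sanity check on Eq. (3), the sketch below evaluates a truncated version of the sum and compares it with a product of independent Poisson probabilities with means $\lambda_0 \pi_i$. It assumes that $M$ is a multinomial probability mass function with one extra "missed" category of probability $1 - \sum_i \pi_i$, so that each emitted particle reaches at most one searcher. The function names, the truncation `h_max`, and the example values are illustrative assumptions, not taken from the paper.

```python
from math import exp, factorial, isclose, prod

def poisson_pmf(k, mean):
    """Poisson probability of observing k events when the mean is `mean`."""
    return mean**k * exp(-mean) / factorial(k)

def multinomial_pmf(counts, probs):
    """Multinomial probability of `counts` given category probabilities."""
    coefficient = factorial(sum(counts))
    for c in counts:
        coefficient //= factorial(c)      # exact integer division at each step
    return coefficient * prod(p**c for p, c in zip(probs, counts))

def measurement_probability(hits, pis, lam, h_max=80):
    """Truncated sum over the emitted count h_s, in the spirit of Eq. (3).

    Assumption: particles not caught by any searcher fall into a 'missed'
    category with probability 1 - sum(pis), which enforces exclusion.
    """
    caught = sum(hits)
    miss = 1.0 - sum(pis)
    total = 0.0
    for h_s in range(caught, h_max + 1):
        counts = list(hits) + [h_s - caught]
        probs = list(pis) + [miss]
        total += poisson_pmf(h_s, lam) * multinomial_pmf(counts, probs)
    return total

def product_of_poissons(hits, pis, lam):
    """Independent Poisson counts with effective means lam * pi_i."""
    return prod(poisson_pmf(h, lam * p) for h, p in zip(hits, pis))

hits = (2, 1)          # particles seen by searcher 1 and searcher 2 (assumed)
pis = (0.3, 0.2)       # catch probabilities for one source location (assumed)
lam = 3.0              # Poisson mean of the source (assumed)

lhs = measurement_probability(hits, pis, lam)
rhs = product_of_poissons(hits, pis, lam)
print(lhs, rhs, isclose(lhs, rhs, rel_tol=1e-10))
```

The agreement of the two numbers reflects the standard thinning property of Poisson processes: splitting a Poisson stream at random into categories yields independent Poisson counts.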
$M$ is the probability mass function of the multinomial distribution for that measurement; it handles the combinatorial degeneracy and the exclusion. It is not difficult to show by summing over $h_s$ that $P(\{h_i,r_i\}|r_0)$ can be written as a product of Poisson distributions with effective means $\lambda_0 \pi_i$,

$$P(\{h_i,r_i\}|r_0) = \frac{\lambda_0^{\sum_i h_i}\, e^{-\lambda_0 \sum_i \pi_i}\, \prod_i \pi_i^{h_i}}{\prod_i (h_i!)} = \prod_i P_0(h_i, \lambda_0 \pi_i). \tag{4}$$

At this point we consider whether a search like this can be synergetic for the 2 searcher case. Eq. (4) shows that the two measurements are conditionally independent and therefore $P(h_1,h_2|r_0) = P(h_1|r_0)\,P(h_2|r_0)$. It follows from Eq. (2) that $R(H_1,H_2,R_0) = I(H_1,H_2) \ge 0$. Therefore the searchers are either redundant (if the measurements interfere with each other) or independent with respect to the source. Synergy is impossible so that searchers gain no advantage by considering joint moves. The only advantage of coordination comes possibly from avoiding positions that lead to a decrease in performance of the collective due to competition for the same signal.

FIG. 1: Synergy for the two searcher problem with angular correlations. (a) $R(H_1,H_2,R_0) = I(H_1,H_2) - I(H_1,H_2|R_0)$ as a function of the position of searcher 2 ($r_2$) for a fixed location of searcher 1 ($r_1$, shown as a black star). The most probable source location is in the center (black dot). The white to blue scale indicates $R = 0$ to $R = -2 \times 10^{-5}$ and we note that $R \le 0$ everywhere. The darker color indicates stronger synergy values when searcher 2 is near the source. The synergy is less when searcher 2 is away from or on the opposite side ($R \approx 0$) of the source. (b) The probability distribution of source locations $P(R_0)$, peaked at the center: $P(\vec{r}_0) = A \exp(-|\vec{r}_0|^2/0.02)$, where $A = 1/\sum_{\vec{r}_0} P(\vec{r}_0)$ is a normalization factor. White to blue indicates $P = 0$ to $P = 0.02$. (c) $I(H_1,H_2)$; (d) $I(H_1,H_2|R_0)$. In (c) and (d) white to blue indicates $I = 0$ to $I = 6 \times 10^{-5}$. Contour lines have been added to guide the eye. In all frames the data is plotted in a two-dimensional spatial domain of $x, y = [-0.5, 0.5]$ and all vectors are measured from the origin $x = 0$, $y = 0$. The parameter $\sigma^2 = 1.1$ in Eq. (5).

Correlated signals: angular biases

We now consider a source that emits particles that are spatially correlated. We assume for simplicity that at each time step the source emits 2 particles. The first particle is emitted at a random angle $\theta_{h_1}$ chosen uniformly from $[0, 2\pi)$. The second particle is emitted at an angle $\theta_{h_2}$ with probability

$$P(\theta_{h_2}|\theta_{h_1}) = D \exp\left[-\left(|\theta_{h_1} - \theta_{h_2}| - \pi\right)^2/\sigma^2\right] \equiv f, \tag{5}$$

where $D$ is a normalization factor. The searchers are assumed to know the variance $\sigma^2$ for simplicity; this is a reasonable assumption if the searchers have any information about the nature of the target (just as for the Poisson source they had statistical knowledge of the parameter $\lambda_0$). The calculation of the conditional probability $P(\{h_i,r_i\}|r_0)$ requires some care. Specifically, this quantity is the probability of the measurement $\{h_i\}$, assuming a certain source position. Since there are 2 particles emitted at each time step, there are 4 possible cases, each with a different probability, as shown in Table I. Here $\theta_{h_1}$ and $\theta_{h_2}$ are calculated from $r_1$ and $r_2$, respectively: $\theta_{h_i} \equiv \arctan\left[(r_{0,y} - r_{i,y})/(r_{0,x} - r_{i,x})\right]$. Note that the $\pi_i$ are functions of $r_0$.
The coefficient $D$ is chosen such that $\frac{1}{N}\sum_{h_2}\sum_{r_2} P(\{h_1,h_2,r_1,r_2\}|r_0) = P(\{h_1,r_1\}|r_0)$, corresponding to the normalization condition $\frac{1}{N}\sum_{r_2} f = 1$.

| $\{h_1, h_2\}$ | $\{1, 1\}$ | $\{1, 0\}$ | $\{0, 1\}$ | $\{0, 0\}$ |
|---|---|---|---|---|
| $P(\{h_1, r_1\} \mid r_0)$ | $\pi_1$ | $\pi_1$ | $1 - \pi_1$ | $1 - \pi_1$ |
| $P(\{h_2, r_2\} \mid r_0)$ | $\pi_2$ | $1 - \pi_2$ | $\pi_2$ | $1 - \pi_2$ |
| $P(\{h_1, h_2, r_1, r_2\} \mid r_0)$ | $\pi_1 \pi_2 f^2$ | $\pi_1 f (1 - \pi_2 f)$ | $\pi_2 f (1 - \pi_1 f)$ | $(1 - \pi_1 f)(1 - \pi_2 f)$ |

TABLE I: Probability calculation for all possible states in the correlated source search. Here $\pi_i(r_0) = B \exp(-|\vec{r}_i - \vec{r}_0|^2)$ is written as $\pi_i$ to save space.

Figure 1 shows the value of $R(H_1,H_2,R_0)$ and the values of the mutual informations $I(H_1,H_2)$ and $I(H_1,H_2|R_0)$ for each possible position $r_2$ of searcher 2. We assume a nonuniform, peaked probability distribution for the source [Figure 1(b)] and that the position of searcher 1 is fixed. In this setup we see that $R \le 0$ for every possible position of searcher 2, indicating that only synergy is possible. This is a consequence of the angular spatial correlation between the particles emitted by the source. The synergy is highest near the source location, where the source probability is strongly peaked, and falls off rapidly away from the source location. Furthermore there is little to no synergy near searcher 1 since in that region it is very unlikely that both searchers would simultaneously observe a particle. The area of greatest synergy corresponds to the most probable source locations for both searchers to simultaneously observe a particle. $P(r_0)$ is very flat at the boundaries; thus $R_0$ contributes little to $I(H_1,H_2|R_0)$ in the lower left corner and $R$ is small.

CONCLUSION

In the real world, communication between agents, as well as centralized or decentralized real-time computation, can be difficult or expensive.
Therefore it is important to consider the classes of search problems for which coordination between searchers can achieve quantitative advantages over independent agents. In this work we studied search algorithms for autonomous agents looking for the spatial location of a stochastic source. We defined the search problem for multiple agents in terms of infotaxis [see Eq. (1)]. We also showed why synergy gives rise to an advantage in this type of search. We considered two types of sources. We first demonstrated that a source emitting uncorrelated particles will afford no opportunity for synergy (see the section "Uncorrelated signals: Poisson source"). In a search for a Poisson source, multiple coordinated searchers (ones that consider sets of joint moves rather than each considering an independent move) cannot hope to do better than multiple independent searchers. Next we showed that, for a source emitting particles with (angular) correlations (see the section "Correlated signals: angular biases"), only synergy or independence is possible (see Fig. 1). The ability of the searchers to leverage synergy depends strongly on their ability to estimate with some accuracy the probability distribution of source locations. These general considerations are crucial for the exploitation of social computation in terms of the design of optimal collective algorithms in particular applications. The next step to making this approach applicable to a broader class of problems, including those not limited to spatial searches, is to generalize the results to more than 2 searchers and to explore how synergy may be best leveraged to give increases in search speed and efficiency.

This paper is released under LA-UR 09-00432.

∗ Electronic address: vadasg@gmail.com
† Electronic address: hagberg@lanl.gov
‡ Electronic address: lmbett@lanl.gov

[1] F. Bourgault, T. Furukawa, and H. F. Durrant-Whyte. Coordinated decentralized search for a lost target in a Bayesian world. Intelligent Robots and Systems, 2003. (IROS 2003). Proceedings. 2003 IEEE/RSJ International Conference on, 1:48, Oct. 2003.
[2] M. Vergassola, E. Villermaux, and B. I. Shraiman. "Infotaxis" as a strategy for searching without gradients. Nature, 445:406, 2007.
[3] R. S. Sutton and A. G. Barto. Reinforcement learning: an introduction. MIT Press, Cambridge MA, 1998.
[4] Iain D. Couzin, Jens Krause, Nigel R. Franks, and Simon A. Levin. Effective leadership and decision-making in animal groups on the move. Nature, 433:513, 2005.
[5] E. Bonabeau and G. Theraulaz. Swarm smarts. Scientific American, pages 72–79, Mar. 2000.
[6] H. S. Seung, M. Opper, and H. Sompolinsky. Query by committee. In COLT '92: Proceedings of the fifth annual workshop on Computational learning theory, page 287, 1992.
[7] Y. Freund, E. Shamir, and N. Tishby. Selective sampling using the query by committee algorithm. In Machine Learning, page 133, 1997.
[8] S. Fine, R. Gilad-Bachrach, and E. Shamir. Query by committee, linear separation and random walks. Theor. Comput. Sci., 284(1):25, 2002.
[9] Luis M. A. Bettencourt, Greg J. Stephens, Michael I. Ham, and Guenter W. Gross. Functional structure of cortical neuronal networks grown in vitro. Phys. Rev. E, 75:021915, 2007.
[10] L. M. A. Bettencourt, V. Gintautas, and M. I. Ham. Identification of functional information subgraphs in complex networks. Phys. Rev. Lett., 100:238701, 2008.
[11] T. M. Cover and J. A. Thomas. Elements of Information Theory. Wiley, New York, 1991.
[12] E. Schneidman, W. Bialek, and M. J. Berry II. Synergy, redundancy, and independence in population codes. J. Neurosci., 23:11539, 2003.
