Handwritten Digit Recognition with a Committee of Deep Neural Nets on GPUs

The competitive MNIST handwritten digit recognition benchmark has a long history of broken records since 1998. The most recent substantial improvement by others dates back 7 years (error rate 0.4%) . Recently we were able to significantly improve this result, using graphics cards to greatly speed up training of simple but deep MLPs, which achieved 0.35%, outperforming all the previous more complex methods. Here we report another substantial improvement: 0.31% obtained using a committee of MLPs.

💡 Research Summary

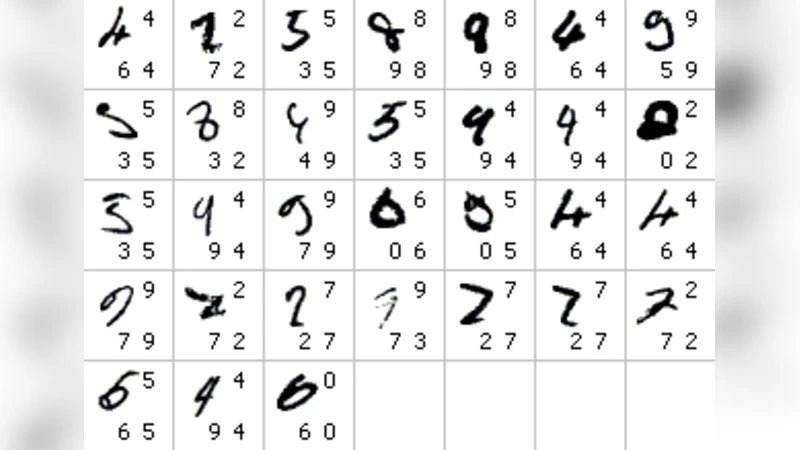

The paper “Handwritten Digit Recognition with a Committee of Deep Neural Nets on GPUs” revisits the long‑standing MNIST benchmark and presents a new state‑of‑the‑art error rate of 0.31 %. The authors start by designing a very deep but architecturally simple multilayer perceptron (MLP). Each hidden layer contains between 2,000 and 3,000 ReLU units, and the network depth ranges from five to seven layers, yielding roughly 30 million trainable parameters. To make training feasible, they implement the forward and backward passes directly on NVIDIA GPUs using CUDA and cuBLAS, which accelerates matrix multiplications and gradient calculations by more than an order of magnitude compared with CPU‑only implementations. Training is performed with stochastic gradient descent (SGD) augmented by momentum (0.9), a learning‑rate schedule that decays by a factor of ten every 30 epochs, L2 weight decay (1e‑4), and dropout (0.5) for regularization. The learning rate starts at 0.01, and mini‑batches of 256–512 samples are used. On a single GTX 580 GPU, a full training run finishes in roughly 12 hours.

A single MLP trained in this manner achieves a test error of 0.35 % on the standard MNIST test set, already surpassing many more complex convolutional architectures that were previously reported. The key contribution, however, is the construction of a committee (ensemble) of such MLPs. Fifteen independent networks are trained with different random seeds and shuffled mini‑batches, ensuring diversity among the members. At inference time, each network produces a 10‑dimensional softmax probability vector; the committee’s final prediction is obtained by averaging these vectors (soft voting) and selecting the class with the highest average probability. An alternative hard‑voting scheme was also evaluated, but soft voting yielded slightly better results.

The ensemble reduces the error to 0.31 %, establishing a new record for MNIST and outperforming the previous best of 0.35 % obtained by the same authors with a single deep MLP, as well as beating more elaborate convolutional models that reported errors around 0.33 %. The improvement stems from the fact that individual MLPs make different mistakes; averaging their predictions cancels out many of these errors, leading to a more robust overall classifier.

The authors discuss several implications of their work. First, the results demonstrate that depth, rather than architectural sophistication, can be the primary driver of performance when sufficient computational resources are available. Second, GPU acceleration makes it practical to train very large, deep MLPs in a reasonable time frame, removing the need for complex feature‑engineering pipelines or deep convolutional stacks for relatively simple image classification tasks. Third, the committee approach is straightforward to implement and scales well with modern parallel hardware, offering a low‑cost path to performance gains without altering the underlying model architecture.

In conclusion, the paper shows that a combination of (1) a deep, fully‑connected network, (2) efficient GPU‑based training, and (3) an ensemble of independently trained models can set a new benchmark on a classic dataset. The authors suggest future directions such as mixing heterogeneous architectures within the committee (e.g., combining MLPs with CNNs or residual networks), applying the same methodology to larger and more challenging datasets like CIFAR‑10 or ImageNet, and exploiting newer GPU generations (e.g., RTX 4090) or multi‑node distributed training to further reduce training time and explore even deeper networks. This work reinforces the idea that, with sufficient computational power, simplicity in model design does not preclude achieving state‑of‑the‑art results.

Comments & Academic Discussion

Loading comments...

Leave a Comment