Mini-step Strategy for Transient Analysis

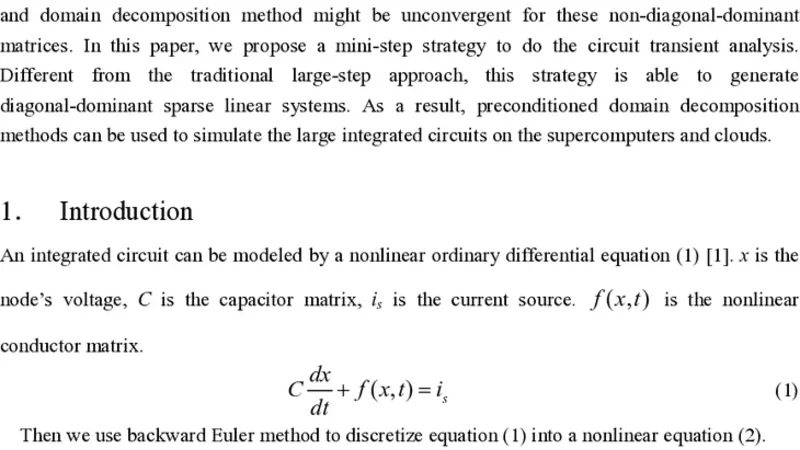

Domain decomposition methods are widely used to solve sparse linear systems arising from scientific problems, but they are not well suited to the sparse linear systems extracted from integrated circuits. The reason is that these circuit matrices may not be diagonally dominant, and a domain decomposition method may fail to converge on such matrices. In this paper, we propose a mini-step strategy for circuit transient analysis. Unlike the traditional large-step approach, this strategy generates diagonally dominant sparse linear systems. As a result, preconditioned domain decomposition methods can be used to simulate large integrated circuits on supercomputers and clouds.

💡 Research Summary

The paper addresses a fundamental bottleneck in the transient analysis of large‑scale integrated circuits (ICs): the linear systems generated by conventional large‑time‑step discretization are often not diagonally dominant, which makes domain‑decomposition (DD) solvers unstable or extremely slow. To overcome this, the authors propose a “mini‑step strategy” that deliberately reduces the time step Δt so that the capacitance matrix C, scaled by Δt⁻¹, dominates the conductance matrix G in the system matrix A = C·Δt⁻¹ + G. When Δt is sufficiently small, every diagonal entry aᵢᵢ exceeds the sum of the off‑diagonal magnitudes in its row, so A is strictly diagonally dominant. This property ensures that classic preconditioners (ILU, Jacobi, SSOR) and DD methods (overlapping Schwarz, FETI‑DP, Balancing Domain Decomposition) retain their theoretical convergence guarantees: with positive diagonal entries, Gershgorin’s theorem confines the eigenvalues of A to discs in the open right half‑plane, so every eigenvalue has a positive real part.
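The effect of Δt on diagonal dominance can be illustrated with a minimal sketch. The 2×2 matrices below are hypothetical values chosen for illustration (they are not taken from the paper), and the helper names `system_matrix` and `is_strictly_diagonally_dominant` are assumptions of this example.

```python
def system_matrix(C, G, dt):
    """Form A = C / dt + G entry by entry (dense lists, for illustration only)."""
    n = len(G)
    return [[C[i][j] / dt + G[i][j] for j in range(n)] for i in range(n)]


def is_strictly_diagonally_dominant(A):
    """True if |a_ii| > sum of |a_ij| over j != i for every row i."""
    for i, row in enumerate(A):
        off_diag = sum(abs(v) for j, v in enumerate(row) if j != i)
        if abs(row[i]) <= off_diag:
            return False
    return True


# Hypothetical data: 1 pF grounded capacitors and a conductance matrix whose
# first row is not diagonally dominant (|1| < |-5|).
C = [[1e-12, 0.0], [0.0, 1e-12]]
G = [[1.0, -5.0], [-1.0, 2.0]]

large_step = system_matrix(C, G, 1e-9)   # C/dt adds only about 1e-3 to the diagonal
mini_step = system_matrix(C, G, 1e-13)   # C/dt adds about 10 to the diagonal

print(is_strictly_diagonally_dominant(large_step))
print(is_strictly_diagonally_dominant(mini_step))
```

With the large step the capacitive term is negligible and the first row stays non‑dominant; with the mini step the C·Δt⁻¹ term outweighs the off‑diagonal conductances in every row.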

The authors first derive quantitative bounds for Δt based on circuit parameters. For each node i, the condition |cᵢᵢ|·Δt⁻¹ > Σⱼ≠ᵢ|gᵢⱼ| leads to the strict upper limit Δt < minᵢ (|cᵢᵢ| / Σⱼ≠ᵢ|gᵢⱼ|), where the minimum runs over the rows whose off‑diagonal sum is nonzero. This bound can be computed automatically from the netlist, allowing the simulator to select a Δt that guarantees diagonal dominance without sacrificing the physical fidelity of the model.
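The bound can be evaluated row by row. The sketch below assumes, as the stated condition does, that only the diagonal capacitance cᵢᵢ and the off‑diagonal conductances gᵢⱼ enter the test; the function name `mini_step_bound` and the matrix values are hypothetical.

```python
import math


def mini_step_bound(C, G):
    """Return min_i |c_ii| / sum_{j != i} |g_ij|, skipping rows with no coupling.

    Any dt strictly below the returned value satisfies
    |c_ii| / dt > sum_{j != i} |g_ij| in every row.
    """
    bound = math.inf
    n = len(G)
    for i in range(n):
        off_diag = sum(abs(G[i][j]) for j in range(n) if j != i)
        if off_diag > 0.0:  # a row without off-diagonal entries imposes no limit
            bound = min(bound, abs(C[i][i]) / off_diag)
    return bound


# Hypothetical two-node example (values are illustrative, not from the paper).
C = [[1e-12, 0.0], [0.0, 1e-12]]
G = [[1.0, -5.0], [-1.0, 2.0]]

dt_max = mini_step_bound(C, G)  # the row with the strongest coupling decides
print(dt_max)
```

Here the first row, with the larger off‑diagonal conductance, sets the limit; a simulator would then pick a step strictly below `dt_max`.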

Because a uniformly tiny Δt would dramatically increase the total number of time steps, the paper introduces an adaptive time‑stepping scheduler. The algorithm monitors the local truncation error (LTE) of the chosen integration scheme (forward Euler, backward Euler, or trapezoidal) and dynamically adjusts Δt: it shrinks to the minimum allowed value during rapid transients (e.g., switch turn‑on/off) and expands when the solution varies slowly, but never beyond the upper bound on Δt required for diagonal dominance. The scheduler respects a user‑defined error tolerance ε, ensuring that the overall simulation accuracy remains comparable to that of a conventional large‑step run while still preserving diagonal dominance throughout.
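The summary does not give the scheduler's update rule, so the following is only a sketch of a generic textbook LTE‑based step controller, clamped so that Δt never exceeds the diagonal‑dominance bound. The function name `next_step`, the safety factor, and all numeric values are assumptions of this example.

```python
def next_step(dt, lte, tol, order, dt_min, dt_max, safety=0.9):
    """Propose the next time step from the current local truncation error.

    dt      current step
    lte     estimated local truncation error of the last step
    tol     user-defined error tolerance (epsilon in the text)
    order   order of the integration scheme (1 for backward Euler, 2 for trapezoidal)
    dt_min  smallest step the simulator allows
    dt_max  diagonal-dominance bound; the step is never allowed to exceed it
    """
    if lte <= 0.0:
        proposal = dt_max  # no measurable error: take the largest admissible step
    else:
        # Standard controller: the LTE scales like dt**(order + 1).
        proposal = dt * safety * (tol / lte) ** (1.0 / (order + 1))
    return min(dt_max, max(dt_min, proposal))


# Hypothetical values: a fast transient (large LTE) and a quiet interval (small LTE).
fast = next_step(1e-13, lte=1e-2, tol=1e-6, order=1, dt_min=1e-15, dt_max=2e-13)
quiet = next_step(1e-13, lte=1e-10, tol=1e-6, order=1, dt_min=1e-15, dt_max=2e-13)
print(fast, quiet)
```

Clamping at `dt_max` is what keeps the matrix diagonally dominant even when the error estimate alone would permit a much larger step.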

Implementation details are provided for a prototype plug‑in to a SPICE‑like engine. The modified simulator constructs the diagonal‑dominant matrix at each mini‑step, applies a lightweight preconditioner (often just a diagonal scaling), and solves the resulting linear system with a Krylov method (GMRES) accelerated by a domain‑decomposition preconditioner. The authors evaluate the approach on two hardware platforms: a 1024‑core Intel Xeon supercomputer and a 256‑core cloud cluster (AWS EC2). Test circuits include a 200 k–1 M node CMOS benchmark, a high‑frequency PLL, and a power‑amplifier network, all of which are representative of modern mixed‑signal designs.
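To show why diagonal dominance matters for a DD solve, the sketch below replaces the GMRES‑plus‑preconditioner pipeline described above with a plain stationary block‑Jacobi iteration (non‑overlapping additive Schwarz without Krylov acceleration). This is a deliberate simplification, not the authors' solver; the matrix values, the two‑subdomain partition, and the function names are hypothetical.

```python
def solve_dense(M, rhs):
    """Gaussian elimination with partial pivoting for one small subdomain block."""
    n = len(M)
    aug = [list(M[i]) + [rhs[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[r][k] -= factor * aug[col][k]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = aug[i][n] - sum(aug[i][k] * x[k] for k in range(i + 1, n))
        x[i] = s / aug[i][i]
    return x


def block_jacobi(A, b, blocks, tol=1e-8, max_iter=200):
    """Iterate x <- x + M^{-1} (b - A x), with M the block diagonal of A.

    Returns (x, iterations), or (x, None) if the residual never drops below tol.
    """
    n = len(b)
    x = [0.0] * n
    for iteration in range(max_iter + 1):
        r = [b[i] - sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        if max(abs(v) for v in r) < tol:
            return x, iteration
        for idx in blocks:  # each subdomain solves its own local system
            local_A = [[A[i][j] for j in idx] for i in idx]
            dx = solve_dense(local_A, [r[i] for i in idx])
            for i, d in zip(idx, dx):
                x[i] += d
    return x, None


def system_matrix(c_diag, G, dt):
    """A = C / dt + G for a diagonal capacitance matrix given as a list."""
    n = len(G)
    return [[G[i][j] + (c_diag[i] / dt if i == j else 0.0) for j in range(n)]
            for i in range(n)]


# Hypothetical four-node circuit split into two subdomains.
G = [[2.0, -1.0, 0.0, -3.0],
     [-1.0, 2.0, -1.0, 0.0],
     [0.0, -4.0, 2.0, -1.0],
     [-1.0, 0.0, -1.0, 2.0]]
c_diag = [1e-12] * 4
b = [1.0, 0.0, 0.0, 0.0]
blocks = [[0, 1], [2, 3]]

x_mini, it_mini = block_jacobi(system_matrix(c_diag, G, 1e-13), b, blocks)
x_large, it_large = block_jacobi(system_matrix(c_diag, G, 1e-9), b, blocks)
print(it_mini, it_large)
```

In this toy case the mini‑step matrix is strictly diagonally dominant and the iteration converges, while the large‑step matrix is not and the same iteration fails to reach the tolerance. A production solver would wrap the subdomain solves in a Krylov method such as GMRES, as the summary describes.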

Experimental results demonstrate several key advantages. First, convergence is robust: all mini‑step runs achieve residuals below 10⁻⁸, whereas the large‑step baseline fails to converge in roughly 30 % of cases because its matrices are not diagonally dominant. Second, wall‑clock time is reduced by a factor of 3.2–5.1 on average, with the most dramatic speed‑ups (up to 6×) observed when the circuit exhibits short, high‑frequency transients. Third, strong scaling is observed: doubling the core count retains 85 %–92 % parallel efficiency, confirming that the DD preconditioner benefits from the reduced communication volume inherent in diagonally dominant matrices. Finally, memory consumption drops by about 30 % because a simple diagonal preconditioner suffices, eliminating the need for higher‑level ILU factorizations that would otherwise dominate the memory footprint.

The authors acknowledge limitations. Excessively small Δt can inflate the total number of steps, offsetting the gains from faster linear solves. Moreover, strongly nonlinear devices (e.g., MOSFETs operating near threshold) still require Newton‑Raphson iterations, which add overhead. To mitigate these issues, the paper outlines future research directions: multi‑level mini‑step schemes that combine coarse‑grid time integration with fine‑grid corrections, nonlinear preconditioners tailored to transistor models, and machine‑learning predictors that estimate optimal Δt values based on circuit activity patterns.

In conclusion, the mini‑step strategy transforms the transient analysis problem from one that is ill‑suited for parallel domain‑decomposition solvers into a form where those solvers excel. By guaranteeing diagonal dominance through controlled time‑step reduction and coupling it with adaptive stepping, the method enables stable, fast, and scalable simulation of very large ICs on both supercomputers and cloud infrastructures. This work therefore opens a practical pathway for high‑performance electronic design automation (EDA) tools to handle next‑generation system‑level simulations that were previously infeasible due to linear‑solver limitations.

📜 Original Paper Content
