Minimizing the sum of many rational functions

We consider the problem of globally minimizing the sum of many rational functions over a given compact semialgebraic set. The number of terms can be large (10 to 100), the degree of each term should be small (up to 10), and the number of variables ca…

Authors: Florian Bugarin (LAAS, CROMeP), Didier Henrion (LAAS

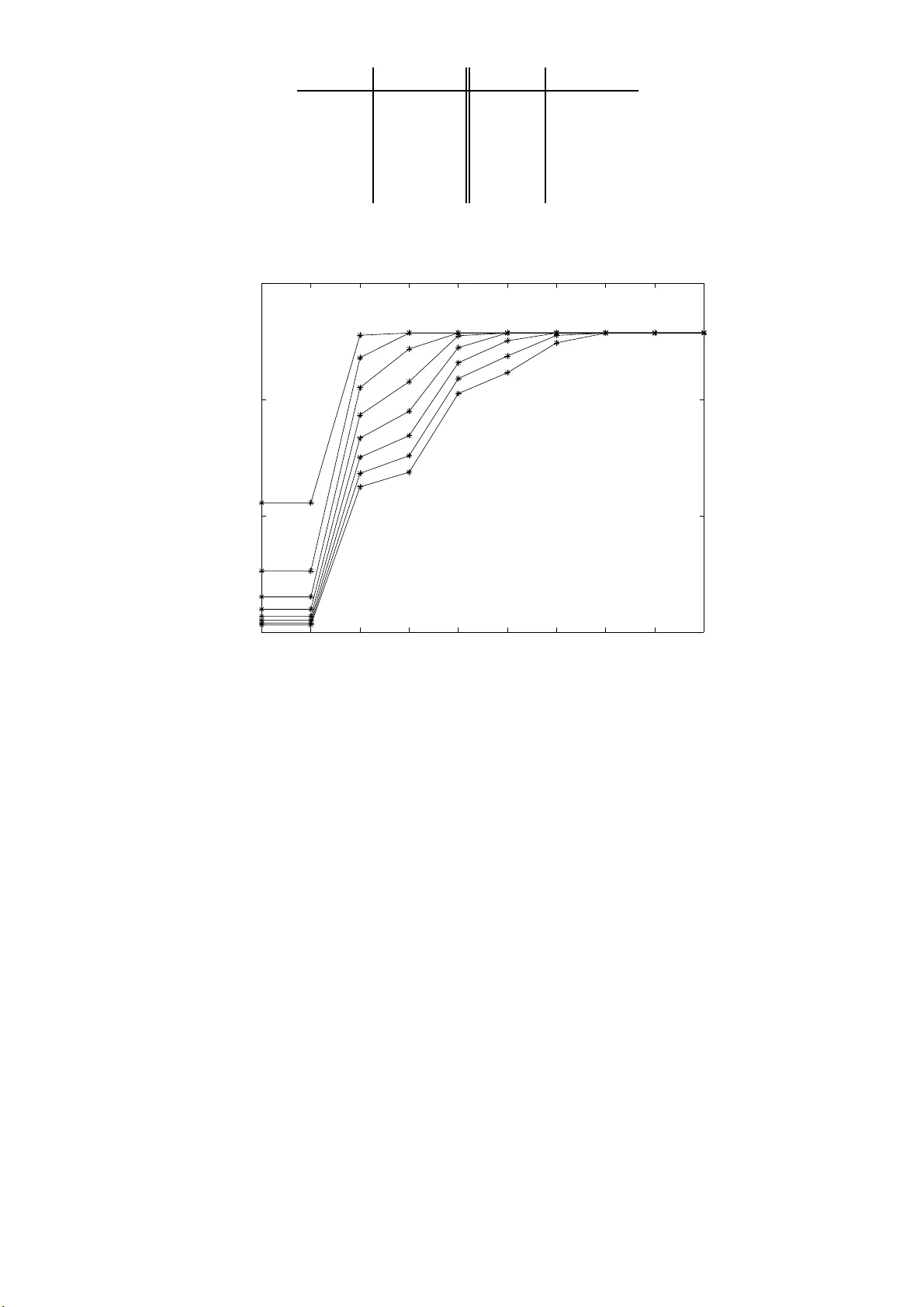

Minimizing the sum of man y rational functions ∗ Floria n Bugari n, 1 , 2 Didier Henrion , 2 , 3 Jean-Bernar d Lasserre 2 , 4 No v em b er 5, 2018 Abstract W e consider the problem of g lobally minimizing the sum of many rational f unc- tions o v er a giv en compact semialgebraic set. The n um b er of terms can b e large (10 to 100), the d egree of eac h term should b e small (up to 10), and th e num b er of v ariables can b e large (1 0 to 100 ) pro vid ed some ki nd of sparsit y is p resen t. W e describ e a form ulation of the r ational optimization pr oblem as a generalized mo- men t p roblem and its hierarc h y of conv ex semidefi n ite r elaxatio ns. Under some conditions we pro v e that the s equence of optimal v alues conv erges to the globally optimal v alue. W e sho w ho w public-domain soft w are can b e used to mo d el and solv e such pr oblems. Keyw ords: rational optimization; globa l optimization; semidefinite relax ations; sparsit y . AMS MSC 201 0: 46 N10, 65K05, 90C22, 90C26. 1 In t r o duct i on Consider the o ptimization problem f ∗ := inf x ∈ K N X i =1 f i ( x ) (1) o v er the basic se mi-algebraic set K := { x ∈ R n : g j ( x ) ≥ 0 , j = 1 , . . . , m } , (2) for give n p olynomials g j ∈ R [ x ], j = 1 , . . . , m , and where each term f i : R n → R is a rational function x 7→ f i ( x ) := p i ( x ) q i ( x ) , 1 Univ ersit´ e de T o ulouse; Mines Albi; Institut Cl ´ ement Ader (ICA); Campus Jarlard, F-81013 A lbi, F ra nce 2 CNRS; LAAS; 7 av en ue du colo nel Ro che, F-3107 7 T oulouse, F rance; Universit ´ e de T oulouse; UPS, INSA, INP , ISAE; LAAS; F-3107 7 T oulouse, F r ance 3 F aculty of Electric al Engineering, Czech T echnical Univ ersity in Prague, T echnic k ´ a 4, CZ-1 6607 Prague , Czech Republic 4 Institut de Math´ ematiques de T o ulo use (IMT), Universit ´ e de T oulous e , F rance ∗ The first autho r acknowledges supp ort by the F rench National Research Agency (ANR) throug h COSINUS prog ram (pro ject ID4CS ANR-09-COSI-0 05). The second author ac knowledges s uppor t b y Research Progr am MSM68407 70038 of the Czech Ministry of Education and Pr o ject 103 /10/0 628 of the Grant Agency of the Czech Republic. 1 with p i , q i ∈ R [ x ] and q i > 0 on K , for eac h i = 1 , . . . , N . Problem (1) is a fr ac tion a l pr o gr amming problem o f a ra t her general form. Nev ertheless, w e a ssume that t he degree of each f i and g j is relative ly small ( up to 10), but the n um b er of terms N can b e quite large (10 to 100). F o r dense data the n um b er of v ariables n should also b e small (up to 1 0). Ho w ev er, this n um ber can b e also quite lar g e (10 to 1 00) pro vided that the problem data feature some kind of sparsity (to b e sp ecified later). Ev en though pro blem (1) is of self-inte rest, our initial motiv ation came from some applications in computer vision, where suc h problems are t ypical. These applications will b e describ ed elsewhere . In suc h a situation, fractio na l programming problem (1) is quite c hallenging. Indeed, w e mak e no a ssumption o n the p olynomials p i , q i whereas ev en with a relativ ely small n um b er of fractions and under conv exit y (resp. conca vit y) assumptions on p i (resp. q i ), problem (1) is hard to solve (esp ecially if o ne w a n ts to compute t he global minim um); see for example t he surv ey [10 ] and references therein. W e are in terested in solving problem (1 ) globally , in the sense that we do not con ten t ourselv es with a lo cal optim um satisfying first order o ptima lity conditions, as typic ally obtained with standard lo cal optimization algorithms suc h as Newton’s metho d o r its v arian ts. If problem (1) is to o difficult to solve globally (b ecause of ill- conditio ning and/or to o larg e a n um b er of v ariables or terms in t he ob jectiv e function), we w ould lik e to hav e at least a v alid low er b ound on the glo bal minim um, since upp er b ounds can b e obtained with lo cal optimization algorithms. One p ossible approach is to reduce all fractions p i /q i to same denominator a nd o bt a in a single rational fraction to minimize. Then one ma y try t o apply the hierarch y of semidef- inite programming (SDP) relaxations defined in [6], see also [9, Section 5.8]. But suc h a strategy is not appropriate b ecause the degree of the common denominator is p oten- tially large and ev en if n is small, one may not ev en implem en t the first relaxation of the hierarc h y , due to the presen t limitations of SDP solv ers. Moreo v er, in general this strategy also destro ys p oten tial sparsit y patterns presen t in the original formulation (1), and so precludes f rom using an appropr ia te version (for the ratio nal fraction case) of the sparse semidefinite relaxations introduced in [11] whose c on v ergence w as prov ed in [7] under some conditions o n t he sparsit y pattern, see also [9, Sections 4.6 and 5.3.4]. Another p ossibilit y is to intro duce additional v ariables r i (that we ma y call liftings) with asso ciated constrain ts p i ( x ) q i ( x ) ≤ r i , i = 1 , . . . , N , and solv e the equiv alen t problem: f ∗ := inf ( x , r ) ∈ b K N X i =1 r i (3) whic h is now a p olynomia l optimization problem in the new v ariables ( x , r ) ∈ R n × R N , and where the new feasible set b K = K × { ( x , r ) ∈ R n + N : r i q i ( x ) − p i ( x ) ≥ 0 } is mo deling t he epigraphs o f the rational terms. The sparsit y pattern is preserv ed and if K is compact one ma y in g eneral obtain upp er a nd low er b ounds r i , r i on the r i so as to mak e b K compact b y adding the quadratic (redundant) cons train ts ( r i − r i )( r i − r i ) ≥ 0, i = 1 , . . . , N , and apply t he sparse semidefinite relaxations. How ev er, in doing so one in tro duces N additional v a riables, and this may ha v e an impact o n the ov erall p erfor mance, esp ecially if N is large. In the sequel this approac h is referred to as t he epigr aph appr o ach . 2 The goal of the presen t pap er is to circum v en t all ab o v e difficulties in the following t w o situations: either n is r elat ively small, or n is p otentially large but some sparsit y is presen t, i.e., each f i and eac h g j in (1) is concerned with only a small subset of v a riables. In the approac h that w e prop ose, w e do not need the epigraph liftings. The idea is to form ulate (1) as a n equ iv alen t infinite-dimensional linear pro blem whic h a particular instance o f the generalized momen t problem (GMP) as defined in [8], with N unkno wn measures (where eac h measure is asso ciated with a fraction p i /q i ). In turn this problem can b e easily mo deled and solv ed with our public-domain softw are GloptiP oly 3 [5], a significan t up date of GloptiP oly 2 [4]. In the sequel this approa ch is referred to as the GMP ap pr o ach . The outline of the pap er is as follo ws. In Section 2 w e intro duce the SDP relaxations first in the case that n is small and the data ar e dense p olynomials. Then in Section 3 w e extend the SD P relaxations to the case that n is large but sparsit y is presen t . In Section 4 we sho w ho w the GMP form ulation can b e exploited t o mo del the SDP relaxatio ns of problem (1) easily with GloptiPoly 3. W e also provide a collection of n umerical exp eriments sho wing the relev ance of our GMP a pproac h, especially in comparison with the epigraph approac h. 2 Dense SDP relaxations In this section we assume that n , the n um b er o f v a r ia bles in problem (1) , is small, say up to 10. 2.1 GMP form ulation Consider the infinite dimensional linear problem ˆ f := inf µ i ∈M ( K ) N X i =1 Z K p i dµ i s.t. Z K q 1 dµ 1 = 1 Z K x α q i dµ i = Z K x α q 1 dµ 1 , ∀ α ∈ N n , i = 2 , . . . , N , (4) where M ( K ) is the space of finite Borel measures supp orted on K . Theorem 2.1 L et K ⊂ R n in (2 ) b e c omp a ct, and assume that q i > 0 on K , i = 1 , . . . , N . Then ˆ f = f ∗ . Pro of: W e first pro v e that f ∗ ≥ ˆ f . As f = P i p i /q i is con tinuous on K , there exists a global minimizer x ∗ ∈ K with f ( x ∗ ) = f ∗ . Define µ i := q i ( x ∗ ) − 1 δ x ∗ , i = 1 , . . . , N , where δ x ∗ is the D irac measure at x ∗ . The n obviously , the measures ( µ i ), i = 1 , . . . , N , are feasible for (4) with asso ciated v alue N X i =1 Z K p i dµ i = N X i =1 p i ( x ∗ ) /q i ( x ∗ ) = f ( x ∗ ) = f ∗ . Con v ersely , let ( µ i ) b e a feasible solutio n of (4). F or every i = 1 , . . . , N , let dν i b e the measure q i dµ i , i.e. ν i ( B ) := Z K ∩ B q i ( x ) dµ i ( x ) 3 for all sets B in the Borel σ -algebra of R n , and so the supp o r t of ν i is K . As measures on compact sets are momen t determinate, the momen ts constraints of (4) imply that ν i = ν 1 , for ev ery i = 2 , . . . , N , and from R K q 1 dµ 1 = 1 w e also deduce that ν 1 is a proba bilit y measure on K . But then N X i =1 Z K p i dµ i = N X i =1 Z K p i q i q i dµ i = N X i =1 Z K p i q i dν 1 = Z K N X i =1 p i q i ! dν 1 = Z K f d ν 1 ≥ Z K f ∗ dν 1 = f ∗ , where w e hav e used that f ≥ f ∗ on K a nd ν 1 is a probabilit y measure o n K . W e next make the follo wing assumption meaning that set K admits an algebraic certificate of compactness. Assumption 2.1 The se t K ⊂ R n in (2) is c omp act and the quadr atic p olynomial x 7→ M − k x k 2 c an b e written as M − k x k 2 = σ 0 + m X j = 1 σ j g j , for some p olynomials σ j ∈ R [ x ] , al l sums of squar es of p olynomials. 2.2 A hierarc h y of dense SDP rel axations Let y i = ( y iα ) b e a real sequenc e indexed in the canonical basis ( x α ) of R [ x ], i = 1 , . . . , N , and for ev ery k ∈ N , let N n k := { α ∈ N n : P j α j ≤ k } . Define the moment matrix M k ( y i ) of order k , asso ciated with y , whose en tr ies indexed b y m ulti-indices β (ro ws) and γ (columns) r ead [ M k ( y i )] β ,γ := y i ( β + γ ) , ∀ β , γ ∈ N n k , and so are linear in y i . Similarly , give n a p olynomial g ( x ) = P α g α x α , define the lo c alising matrix M k ( g y i ) of order k , asso ciated with y and g , whose en tries read [ M k ( g y i )] β ,γ := X α g α y i ( α + β + γ ) , ∀ β , γ ∈ N n k . In part icular, matrix M 0 ( g y i ) is iden tical to L y i ( g ) where for ev ery i , L y i : R [ x ] → R is the linear functional defined b y: g 7→ L y i ( g ) := X α ∈ N n g α y iα , ∀ g ∈ R [ x ] . Let u i := ⌈ (deg q i ) / 2 ⌉ , i = 1 , . . . , N , r j := ⌈ (deg g j ) / 2 ⌉ , j = 1 , . . . , m , and with no loss of generality assume that u 1 ≤ u 2 ≤ . . . ≤ u N . Consider the hierarc hy of semidefinite programming ( SDP) relaxations: f ∗ k = inf y i N X i =1 L y i ( p i ) s.t. M k ( y i ) 0 , i = 1 , . . . , N M k − r j ( g j y i ) 0 , i = 1 , . . . , N , j = 1 , . . . , m L y 1 ( q 1 ) = 1 L y i ( x α q i ) = L y 1 ( x α q 1 ) , ∀ α ∈ N n 2( k − u i ) , i = 2 , . . . , N . (5) 4 Theorem 2.2 L et Assumption 2.1 hold and c onsider the hier ar chy of SDP r elaxations (5). Then it fol lows that (a) f ∗ k ↑ f ∗ as k → ∞ . (b) Mor e over, if ( y k i ) is an o p timal solution of (5), and if rank M k ( y k i ) = rank M k − u i ( y k i ) =: R, i = 1 , . . . , N then f ∗ k = f ∗ and one may extr ac t R glob al minimizers. Pro of: The pro of o f (a) is classical. One first prov e that if ( y k i ) is a nearly optimal solution of ( 5 ), i.e. f ∗ k ≤ N X i =1 L y k i ( p i ) ≤ f ∗ k + 1 k , then there exists a subsequence ( k ℓ ) and a sequence y i , i = 1 , . . . , N , such that lim ℓ →∞ y k ℓ iα = y iα , ∀ α ∈ N n , i = 1 , . . . , N . F rom this p oint wise conv ergence it easily f o llo ws tha t for ev ery i = 1 , . . . , N and j = 1 , . . . , m , M k ( y i ) 0 , M k ( g j y i ) 0 , k = 0 , 1 , . . . By Putinar’s theorem [9, Theorem 2.14] this implies that the sequence y i has a repre- sen ting measure supp orted on K , i.e., there exists a finite Borel measure µ i on K suc h that L y i ( f ) = Z K f d µ i , ∀ f ∈ R [ x ] . Moreo v er, still b y p oint wise conv ergence, L y i ( q i x α ) = Z K x α q i ( x ) dµ i = L y 1 ( q 1 x α ) = Z K x α q 1 ( x ) dµ 1 , ∀ α ∈ N n . (6) Therefore, let dν i := q i ( x ) dµ i whic h is a proba bility measure supp orted on K . As K is compact, b y (6 ), ν i = ν 1 for ev ery i = 1 , . . . , N . Finally , again by p oin t wise con v ergence: f ∗ ≥ lim ℓ →∞ f ∗ k ℓ = lim ℓ →∞ N X i =1 L y k ℓ i ( p i ) = N X i =1 Z K p i dµ i = N X i =1 Z K p i q i q i dµ i = N X i =1 Z K p i q i dν 1 = Z K N X i =1 p i q i ! dν 1 ≥ f ∗ whic h prov es (a) b ecause f ∗ k is monoto ne non-decreasing. In a ddition, ν 1 is an optimal solution of ( 4 ) with optimal v alue f ∗ = ˆ f . Statemen t (b) follow s f rom the flat extension theorem of Curto and Fialk o w [9 , Theorem 3.7] and eac h y i has an atomic represen ting measure supp orted on R p oints of K . 5 3 Sparse SD P r e laxations In this section w e assume that n , the n umber of v ariables in problem (1), is large, sa y from 1 0 to 100, and moreo v er that some sparsit y pattern is presen t in the p olynomial data. 3.1 GMP form ulation Let I 0 := { 1 , . . . , n } = ∪ N i =1 I i with p ossible ov erlaps, and let R [ x k : k ∈ I i ] denote the ring of p olynomials in t he v ariables x k , k ∈ I i . Denote b y n i the cardinality of I i . One will assum e that K ⊂ R n in (2) is c ompact, and one kno ws some M > 0 suc h that x ∈ K ⇒ M − k x k 2 ≥ 0 . F or ev ery i ≤ N , intro duce the quadratic p olynomial x 7→ g m + i ( x ) = M − P k ∈ I i x 2 k . The index set { 1 , . . . , m + N } has a partitio n ∪ N i =1 J i with J i 6 = ∅ for ev ery i = 1 , . . . , N . In t he sequel we assume that fo r ev ery i = 1 , . . . , N , p i , q i ∈ R [ x k : k ∈ I i ] and for eve ry j ∈ J i , g j ∈ R [ x k : k ∈ I i ]. Next, for ev ery i = 1 , . . . , N , let K i := { z ∈ R n i : g k ( z ) ≥ 0 , k ∈ J i } so that K in (2) has the equiv alen t c haracterization K = { x ∈ R n : ( x k , k ∈ I i ) ∈ K i , i = 1 , . . . , N } . Similarly , for ev ery i, j ∈ { 1 , . . . , N } suc h that i 6 = j and I i ∩ I j 6 = ∅ , K ij = K j i := { ( x k , k ∈ I i ∩ I j ) : ( x k , k ∈ I i ) ∈ K i ; ( x k , k ∈ I j ) ∈ K j } . Let M ( K ) b e the space of finite Borel measures on K , and for ev ery i = 1 , . . . , N , let π i : M ( K ) → M ( K i ) denote the pro jection o n K i , that is, for ev ery µ ∈ M ( K ): π i µ ( B ) := µ ( { x : x ∈ K ; ( x k , k ∈ I i ) ∈ B } ) , ∀ B ∈ B ( K i ) where B ( K i ) is the usual Bor el σ - algebra asso ciated with K i . F or ev ery i, j ∈ { 1 , . . . , N } suc h that i 6 = j and I i ∩ I j 6 = ∅ , the pro jection π ij : M ( K i ) → M ( K ij ) is also defined in an ob vious similar manner. F or ev ery i = 1 , . . . , N − 1 define the set: U i := { j ∈ { i + 1 , . . . , N } : I i ∩ I j 6 = ∅ } , and consider the infinite dimensional problem ˆ f := inf µ i ∈M ( K i ) N X i =1 Z K i p i dµ i s.t. Z K i q i dµ i = 1 , i = 1 , . . . , N π ij ( q i dµ i ) = π j i ( q j dµ j ) , ∀ j ∈ U i , i = 1 , . . . , N − 1 . (7) Definition 3.1 Sp arsity p attern ( I i ) N i =1 satisfies the running interse ction pr op erty if for every i = 2 , . . . , N : I i \ i − 1 [ k =1 I k ! ⊆ I j , for some j ≤ i − 1 . 6 Theorem 3.1 L et K ⊂ R n in (2) b e c omp act. I f the sp a rs i ty p attern ( I i ) N i =1 satisfies the running interse ction pr op erty then ˆ f = f ∗ . Pro of: That ˆ f ≤ f ∗ is straightforw ard. As K is compact and q i > 0 on K for ev ery i = 1 , . . . , N , f ∗ = P N i =1 f i ( x ∗ ) f o r some x ∈ K . So let µ b e the D irac measure δ x ∗ at x ∗ and let ν i b e the pro jection π i µ o f µ on K i . That is ν i = δ ( x ∗ k ,k ∈ I i ) , the Dirac measure at the p oint ( x ∗ k , k ∈ I i ) of K i . Nex t, for ev ery i = 1 , . . . , N , define the measure dµ i := q i ( x ∗ ) − 1 dν i . Ob viously , ( µ i ) is a f easible solution of (7) because µ i ∈ M ( K i ) and R q i dµ i = 1, for ev ery i = 1 , . . . , N , and one also has: ( x ∗ k , k ∈ I i ∩ I j ) = π ij µ i = π j i µ j , ∀ j 6 = i suc h that I j ∩ I i 6 = ∅ . Finally , its v alue satisfies N X i =1 Z K i p i dµ i = N X i =1 p i ( x ∗ ) /q i ( x ∗ ) = f ∗ , and so ˆ f ≤ f ∗ . W e next prov e the conv erse inequality ˆ f ≥ f ∗ . Let ( µ i ) b e an arbitrary feasible solution of (7), a nd for eve ry i = 1 , . . . , N , denote b y ν i the probability measure on K i with density q i with resp ect to µ i , that is, ν i ( B ) := Z K i ∩ B q i ( x ) dµ i ( x ) , ∀ B ∈ B ( K i ) . By definition of the linear progra m (7), π ij ν i = π j i ν j for ev ery couple j 6 = i suc h that I j ∩ I i 6 = ∅ . Therefore, b y [9 , Lemma B.13] there exists a probability measure ν on K suc h that π i ν = ν i for ev ery i = 1 , . . . , N . But t hen N X i =1 Z K i p i dµ i = N X i =1 Z K i p i q i dν i = N X i =1 Z K i p i q i dν = Z K N X i =1 p i q i ! dν ≥ f ∗ and so ˆ f ≥ f ∗ . 3.2 A hierarc h y of sparse SDP rel axations Let y = ( y α ) be a real sequence indexed in the canonical basis ( x α ) of R [ x ]. D efine the linear functional L y : R [ x ] → R , b y: f = X α ∈ N n f α x α ! 7→ X α ∈ N n f α y α , ∀ f ∈ R [ x ] . F or ev ery i = 1 , . . . , N , let N ( i ) := { α ∈ N n : α k = 0 if k 6∈ I i } ; N ( i ) k := { α ∈ N ( i ) : X i α i ≤ k } . 7 An obvious similar definition of N ( ij ) (= N ( j i ) ) and N ( ij ) k (= N ( j i ) k ) applies when considering I j ∩ I i 6 = ∅ . Let y = ( y α ) b e a giv en sequ ence indexed in the canonical basis of R [ x ]. F or ev ery i = 1 , . . . , N , the sparse momen t matr ix M k ( y , I i ) asso ciated with y , has its r ows and columns indexed in the cano nical basis ( x α ) of R [ x k : k ∈ I i ], and with en tries: M k ( y , I i ) α,β = L y ( x α + β ) = y α + β , ∀ α , β ∈ N ( i ) k . Similarly , for a giv en p olynomial h ∈ R [ x k : k ∈ I i ], the sparse lo calizing matrix M k ( h y , I i ) asso ciated with y and h , has its ro ws and columns indexed in the canoni- cal basis ( x α ) of R [ x k : k ∈ I i ], and with en tries: M k ( h y , I i ) α,β = L y ( h x α + β ) = X γ ∈ N ( i ) h γ y α + β + γ , ∀ α, β ∈ N ( i ) k . With K ⊂ R n defined in (2), let r j := ⌈ (deg g j ) / 2 ⌉ , for ev ery j = 1 , . . . , m + N . Conside r the hierarc h y o f semidefinite relaxations: f ∗ k = inf y N X i =1 L y ( p i ) s.t. M k ( y , I i ) 0 , i = 1 , . . . , N M k − r j ( g j y , I i ) 0 , ∀ j ∈ J i , i = 1 , . . . , N L y ( q i ) = 1 , i = 1 , . . . , N L y ( x α q i ) = L y ( x α q j ) = 0 , ∀ α ∈ N ( ij ) , ∀ j ∈ U i , i = 1 , . . . , N − 1 with | α | + max[deg q i , deg q j ] ≤ 2 k . (8) Theorem 3.2 L et K ⊂ R n in ( 2) b e c om p act. L et the sp arsity p attern ( I i ) N i =1 satisfy the runnin g interse ction pr op e rty, a nd c on s i d er the hie r ar chy of semidefini te r elaxations define d in (8 ). Then: (a) f ∗ k ↑ f ∗ as k → ∞ . (b) If an optimal solution y ∗ of (8) sa tisfi e s rank M k ( y ∗ , I i ) = rank M k − v i ( y ∗ , I i ) =: R i , ∀ i = 1 , . . . , N , (wher e v i = max j ∈ J i [ r j ] ), and rank M k ( y ∗ , I i ∩ I j ) = 1 , ∀ j ∈ U i , = 1 , . . . , N − 1 , then f ∗ k = f ∗ and one may extr act fini tely man y glob al m i n imizers. Pro of: The pro o f is similar to that of Theorem 2.2 a nd also to tha t of [9, Theorem 4.7]. One first prov e that if ( y k ) is a nearly optimal solution o f (5), i.e. f ∗ k ≤ N X i =1 L y k ( p i ) ≤ f ∗ k + 1 k , then there exists a subsequence ( k ℓ ) and a sequence y , suc h t ha t lim ℓ →∞ y k ℓ α = y α , ∀ α ∈ N ( i ) , i = 1 , . . . , N . 8 F rom this p oin twise conv ergence it easily fo llo ws that for ev ery i = 1 , . . . , N and j ∈ J i , M k ( y , I i ) 0 , M k ( g j y , I i ) 0 , j ∈ J i ; i = 1 , . . . , N . No w o bserv e that eac h set K i ⊂ R n i satisfies Assumption 2.1. Therefore, b y Putinar’s theorem [9, Theorem 2.14] the sequence y i = ( y α ), α ∈ N ( i ) (a subsequence of y ), has a represen ting measure µ i supp orted on K i . F or ev ery ( i, j ) with j ∈ U i , denote b y y ij the sequence ( y α ), α ∈ N ( ij ) . Again, b y p oin t wise conv ergence, L y ( q i ) = 1, i = 1 , . . . , N , and L y ( q i x α ) = Z K i x α q j ( x ) dµ j = Z K j x α q j ( x ) dµ j , ∀ α ∈ N ( ij ) , ∀ j ∈ U i . (9) Therefore, for ev ery i = 1 , . . . , N , dν i := q i ( x ) dµ i is a finite Bor el probabilit y measure supp orted on K i . As measures on compact sets are momen t determinate, (9) yields: π ij ν i = π j i ν j , ∀ ( i, j ) , j ∈ U i . Therefore, b y [9, Lemma B.13] there exists a probability measure ν on K suc h that π i ν = ν i for ev ery i = 1 , . . . , N . But then f ∗ ≥ lim ℓ →∞ f ∗ k ℓ = lim ℓ →∞ N X i =1 L y k ℓ i ( p i ) = N X i =1 Z K p i dµ i = N X i =1 Z K p i q i q i dµ i = N X i =1 Z K p i q i dν i = Z K N X i =1 p i q i ! dν ≥ f ∗ . As the conv erging subsequenc e w as arbitrary , and ( f ∗ k ) is monoto ne non decreasing, we finally get f ∗ k ↑ f ∗ . In addition, ν is an optimal solution of (7) with optimal v alue f ∗ = ˆ f . The pro of of (b) is as in [7] and uses the flat extension theorem o f Curto and F ialk o w [9, Theorem 3 .7] from whic h, the sequence y ∗ i = ( y ∗ α ), α ∈ N ( i ) , has an atomic represen t ing measure supp orted on R i p oin ts of K i , for ev ery i = 1 , . . . , N . 4 GloptiP oly and examples In this section w e sho w that the generalized momen t problem (GMP) form ulation of rational optimization problem (1) has a straigh t f orw ard Mat la b implemen tation when using our softw are G lo ptiP o ly 3 [5]. Rather than explaining the approach in full g eneralit y with aw kw ard notations, w e describ e three simple examples. 4.1 Wilkinson-lik e rational function Consider the elemen tary univ a riate ratio na l optimization problem f ∗ = sup x ∈ R f ( x ) , f ( x ) = N X i =1 p i ( x ) q i ( x ) = N X i =1 1 x 2 + i 9 with N an integer. The only real critical p oin t is x = 0, at whic h the ob jectiv e function tak es its maximum f ∗ = f (0 ) = N X i =1 1 i . Reducing to the same denominator f ( x ) = P i Q j 6 = i ( x 2 + j ) Q i ( x 2 + i ) = p ( x 2 ) q ( x 2 ) yields the we ll-kno wn Wilkinson p olynomial q whose squared ro ot mo duli are the in tegers from 1 to N . This p olynomial w as describ ed in the mid 1960s b y James H. Wilkinson to illustrate the difficult y of finding n umerically the ro ots of p olynomials. If we c ho ose e.g. N = 20, reduction t o the same denominator is hop eless since the constan t co efficien t in monic p olynomial q is 20! = 24 32902008 1 76640000 . The GMP formulation (4) of this problem reads (up to replacing inf with sup in t he ob jectiv e function): sup µ i ∈M ( R ) P N i =1 R R p i dµ i s . t . R R q 1 dµ 1 = 1 R R x α q i dµ i = R R x α q 1 dµ 1 , ∀ α ∈ N n , i = 1 , . . . , N . Our Matlab script to mo del and solve this problem is as follows: N = 20; mpol(’x’,N); % create variables q = cell(N,1); % problem data mu = cell(N,1); % measures for i = 1:N, q{i} = i+x(i)^2; mu{i} = meas(x(i)); end % model GMP k = 0; % relaxation order f = mass(mu{1}) ; % objective function e = [mom(q{1}) == 1]; % moment contraints for i = 2:N f = f + mass(mu{i}); e = [e; mom(mmon(x(1),k)* q{1}) == mom(mmon(x (i),k)*q{i })]; end % model SDP relaxation of GMP P = msdp(max(f) ,e); % solve SDP relaxation [stat,obj] = msol(P) Instructions mpol , meas , mass , mom , mmon , msdp , max and msol are G lo ptiP o ly 3 commands, see the user’s guide [5] for more info rmation. F or readers who are not familiar with this pac k a ge, v ariable f is the ob jectiv e function to b e maximiz ed. Since p i = 1 for all i = 1 , . . . , N , it is the sum of masses o f measures µ i . V ector e stor es the linear momen t constrain ts and the instruction mmon(x,k) generates all mono mials of v a r iable x up to degree k . Finally , instruction msdp generates the SDP relaxation of the G MP , and msol solv es the SDP problem with the default conic solve r (SeDuMi 1.3 in our case). A t the first SDP relaxation (i.e. for k=0 ) w e obtain a rank-one moment matrix corre- sp onding to a Dir a c a t x ∗ = 0: >> [stat,obj] = msol(P) 10 Global optimality certified numerically stat = 1 obj = 3.5977 whic h is consisten t with Maple’s > f := sum(1/(x^2+i) , i=1..20); > evalf(subs(x = 0, f)); 3.5977 Note that for this example Assumption 2.1 is viola t ed, since w e optimize ov er the non- b ounded set K = R . In spite of this, we could solv e t he problem glo ba lly . 4.2 Relev ance of the comp actness assumption With this elemen tar y example we w ould lik e to emphasize the pra ctical relev ance of As- sumption 2.1 on the existence o f an algebraic certificate of compactness of set K . Consider the univ ariate problem f ∗ = inf x ∈ K f ( x ) , f ( x ) = 1 + x + x 2 1 + x 2 + 1 + x 2 1 + 2 x 2 . (10) First let K = R . The numerator of the gradient of f ( x ) has tw o real ro ots, one of whic h b eing the global minim um lo cated at x ∗ = − 1 . 4215 for whic h f ∗ = 1 . 1286 . The follo wing GloptiP oly script models and solv es the SDP relaxations of orders k = 0 , . . . , 9 of the GMP formulation of this pro blem: mpol x1 x2 f1 = 1+x1+x1^2; g1 = 1+x1^2; f2 = 1+x2^2; g2 = 1+2*x2^2; mu1 = meas(x1); mu2 = meas(x2); bounds = []; for k = 0:9 P = msdp(min(mo m(f1)+mom(f 2)), .. . mom(mmon(x1 ,k)*g1) == mom(mmo n(x2,k)*g2) , mom(g1) == 1); [stat, obj] = msol(P); bounds = [bounds; obj]; end bounds In vec tor bounds w e retriev e t he following monotically increasing sequence of lo w er b ounds f ∗ k (up to 5 digits) obtained by solving the SDP relaxations (5): A t SDP relaxation k = 9, GloptiP oly certifies global opt imality and extracts the global minimizer. T able 1 sho ws that the conv ergence of the hierarc h y of SDP r elaxatio ns is ra t her slo w for this very simple example. This is due to the fact that Assumption 2 .1 is violated, since we o ptimize o v er the non-b o unded set K = R . On Figur e 1 we rep ort the sequence s o f lo w er b ounds obtained by solving the SDP relax- ations of problem (10) o n compact sets K = [ − R, R ] for R = 2 , 3 , . . . , 9. 11 order k b ound f ∗ k order k bo und f ∗ k 0 1.0000 5 1.0793 1 1.0000 6 1.1264 2 1.0170 7 1.1283 3 1.0220 8 1.1286 4 1.0633 9 1.1286 T able 1 : Low er b ounds for SD P r elaxatio ns of problem (10). 1 2 3 4 5 6 7 8 9 10 1 1.05 1.1 1.15 SDP relaxation order Lower bound Figure 1: Low er b ounds for SDP relaxatio ns of problem (1 0) on b ounded sets K = [ − R, R ] for R = 2 (top curve) to R = 9 (b ottom curve). 4.3 Exploiting sparsit y with GloptiP oly Ev en though v ersion 3 of G loptiP oly is designed to exploit problem sparsity , there is no illustration of this feature in t he softw are user’s guide [5]. In this section w e pro vide suc h a simple example. No t e also that GloptiPoly is not able to detect sparsity in a g iv en problem, con trary to Spar sePOP whic h uses a heuristic to find c hordal extensions of graphs [12]. Ho w ev er, SparsePOP is not designed to handle directly ra tional optimization problems. Consider the elemen tary example of [7, Section 3.2]: inf x ∈ R 4 x 1 x 2 + x 1 x 3 + x 1 x 4 s . t . x 2 1 + x 2 2 ≤ 1 x 2 1 + x 2 3 ≤ 2 x 2 1 + x 2 4 ≤ 3 for whic h the v ariable index subs ets I 1 = { 1 , 2 } , I 2 = { 1 , 3 } , I 3 = { 1 , 4 } s atisfy the running in tersec tion prop erty of Definition 3 .1 . Note that this problem is a particular case of (1) with a p olynomial ob jectiv e f unction. Without exploiting sparsit y , the GloptiPoly script to solve t his problem is as follows: 12 mpol x1 x2 x3 x4 Pdense = msdp(min(x1*x2+x 1*x3+x1*x4), ... x1^2+x2^2<= 1,x1^2+x3^ 2<=2,x1^2+x4^2<=3,2); [stat,obj] = msol(Pdense); GloptiP oly certifies global o ptima lity with a momen t matrix o f size 15, and 3 lo calizing matrices of size 5. And here is the script exploiting sparsit y , splitting the v ariables in to sev eral measures µ i consisten tly with subsets I i : mpol x1 3 mpol x2 x3 x4 mu(1) = meas([x1(1) x2]); % first measure on x1 and x2 mu(2) = meas([x1(2) x3]); % second measure on x1 and x3 mu(3) = meas([x1(3) x4]); % third measure on x1 and x4 f = mom(x1(1)*x 2)+mom(x1(2 )*x3)+mom(x1(3)*x4); % obj ective function k = 3; % SDP relaxation order m1 = mom(mmon(x 1(1),k)); % moments of first measure m2 = mom(mmon(x 1(2),k)); % moments of second measure m3 = mom(mmon(x 1(3),k)); % moments of third measure K = [x1(1)^2+x2 ^2<=1, x1(2)^2+x3^2<=2, x1(3)^2+x4^2<=3]; % supports Psparse = msdp(min(f),m1= =m2,m3==m2,K,mass(mu)==1); [stat,obj] = msol(Psparse); GloptiP oly certifies global o ptimalit y with 3 momen t matrices o f size 6, and 3 lo calizing matrices of size 3. 4.4 Comparison with the epigraph approac h In most of the examples w e ha v e pro cessed, the epigra ph appro ac h described in the In- tro duction (consis ting of introducing one lifting v ariable for eac h rational term in the ob jectiv e function) was less efficien t than the GMP approa ch. Typic ally , the order of the SDP relaxation (and hence its size) required to certify global optimality is t ypically larger with the epigraph approach. When ev aluating the epigraph approac h, w e also observ ed that it is n umerically preferable to replace the inequalit y constraints r i q i ( x ) − p i ( x ) ≥ 0 with equalit y constrain ts r i q i ( x ) − p i ( x ) = 0 in the definition of semi-algebraic set ˆ K in (3). F or the example o f Section 4.1 the epigraph a ppro ac h with inequalities certifies global optimalit y at order k = 5, whereas the epigraph approac h with equalities requires k = 1. As a t ypical illustration of the iss ues faced with the epigraph approa c h consider the example with eighth-degree terms inf x ∈ R 2 f ( x ) = 10 X i =1 ( x 1 + x 2 )( x 2 1 + x 2 1 x 2 2 + x 4 2 + i 2 ) − ( ix 2 2 + 1)( x 4 1 + x 2 2 + 2 i ) ( x 4 1 + x 2 2 + 2 i )( x 2 1 + x 2 1 x 2 2 + x 4 2 + i 2 ) (11) whic h is co oked up to hav e sev eral lo cal optima and sufficien tly high degree to prev ent reduction to the same denominator. After a suitable scaling to mak e critical p oints fit within the b o x [ − 1 , 1 ] 2 , as required by the momen t SDP relaxations form ulated in the p o w er basis [4, Section 6.5], the G MP approac h yields a certificate of global optima lity with x ∗ 1 = − 0 . 60450, x ∗ 2 = − 2 . 2045, f ∗ = − 6 . 2844 at order k = 6 in a few seconds on 13 a standard PC. In contrast, the epigraph approac h do es not provide a certificate for an order as high as k = 10, requiring more than one min ute of CPU time. 4.5 Shek el’s fo xholes Consider the mo dified Shek el fo xholes rationa l function minimization problem [2 ] min x ∈ R n N X i =1 1 P n j = 1 ( x j − a ij ) 2 + c i (12) whose data a ij , c i , i = 1 , . . . , N , j = 1 , . . . , n can b e found in [1, T able 16]. This function is designed to ha v e many lo cal minima, and w e kno w the global minimum in the case n = 5, see [1, T able 17]. After a s uitable scaling to mak e critical p oin ts fit within the b o x [0 , 1] 5 , and after addition of a Euclidean ball constrain t cen tered in t he b o x, the GMP appro a c h yields a certificate of glo ba l optimalit y at o rder k = 3 in less than o ne minute on a standard PC. The extracted minimizer is x ∗ 1 = 8 . 0254 , x ∗ 2 = 9 . 1483 , x ∗ 3 = 5 . 1138, x ∗ 4 = 7 . 6213, x ∗ 5 = 4 . 5638, which matc hes with the kno wn global minimizer to four significant digits. This p oin t can b e refined if giv en an initial g uess for a lo cal optimization metho d. If w e use a standard quasi-Newton BF GS algorithm, w e obtain after a few it era t io ns a p oint matc hing the kno wn g lo bal minimizer to eight significan t digits. In the case n = 10, for whic h the global minim um is giv en in [1, T able 1 7], the GMP approac h yields a certificate of global optimalit y at o rder k = 2 in ab out 750 seconds of C PU time . Here to o, w e observ e that the extracted minimizer x ∗ 1 = 8 . 0249, x ∗ 2 = 9 . 1518, x ∗ 3 = 5 . 1140, x ∗ 4 = 7 . 6209, x ∗ 5 = 4 . 5640, x ∗ 6 = 4 . 7110, x ∗ 7 = 2 . 996, x ∗ 8 = 6 . 1259, x ∗ 9 = 0 . 73424 , x ∗ 1 0 = 4 . 982 0 is a go o d a pproximation to the minimizer, with four correct significan t digits. If necessary , this p oin t can b e used as an initial guess for refining with a lo cal solv er. Note that it is not p ossible to exploit problem sparsity in this case, since all the v aria bles app ear in eac h term in sum (12). 4.6 Rosen bro c k’s function Consider the rational optimization problem f ∗ = max x ∈ R n n − 1 X i =1 1 100( x i +1 − x 2 i ) 2 + ( x i − 1) 2 + 1 (13) whic h has the same critical p o in ts a s the we ll-kno wn Rosenb ro c k problem min x ∈ R n n − 1 X i =1 (100( x i +1 − x 2 i ) 2 + ( x i − 1) 2 ) whose geometry is troublesome fo r lo cal optimization solvers . It can b een easily sho wn that the global maximum f ∗ = 1 of problem (13) is ac hiev ed at x ∗ i = 1, i = 1 , . . . , n . Our exp eriments with lo cal optimization algorithms rev eal that standard quasi-Newton solvers or functions of the Optimization to olb ox fo r Matlab, called rep eatedly with random initial guesses, typic ally yield lo cal maxima quite far from the global maxim um. 14 With our GMP approach, after exploiting sparsity and adding b ound constrain ts x 2 i ≤ 16, i = 1 , . . . , n , we could solv e problem (13 ) with a certificate of global opt imality for n up to 1000. T ypical CPU times ra nge from 10 seconds for n = 100 to 50 0 seconds for n = 1000. 5 Conclus ion The problem of minimizing the sum of many low-degree (typically no n- con v ex) ratio nal fractions on a (t ypically non-con v ex) semi-algebraic s et arises in sev eral imp ortan t ap- plications, a nd notably in computer vision (triangulation, estimation of the fundamen tal matrix in epip ola r geometry) and in systems con tro l ( H 2 optimal control with a fixed- order con troller of a linear system sub ject to para metric uncertain t y). These engineering problems motiv ated our w ork, but the application of our tec hniques to computer vision and systems con trol will b e describ ed elsewhere. These fra ctio na l programming problems b eing non conv ex, lo cal optimization approaches yield only upp er b ounds on the optim um. In this pap er w e w ere in terested in computing the g lobal minim um (a nd p ossibly global minimizers) or a t least, computing v alid lo w er b ounds on the global minim um, for f r a c- tional progra ms in v o lving a sum with man y t erms. W e ha v e used a semidefinite pr o gram- ming (SDP) relaxation approa c h by f o rm ulating the ra tional opt imizatio n problem as an instance of the generalized momen t problem (GMP). In additio n, pro blem structure can b e sometimes exploited in the case where the n um b er of v ariables is large but sparsit y is presen t. Numerical exp erimen ts with our public-domain softw are GloptiP oly inte rfaced with off-the-shelf semidefinite programming solv ers indicate that the approach can solv e problems that can b e challenging for state-of-the-a r t global optimization algorithms. This is consisten t with the exp erimen ts made in [3 ] where the (dense) SDP relaxation approac h w a s first applied to ( p olynomial) optimization problems of computer vision. F or larger and/o r ill-conditioned problems, it can happ en that GloptiP oly extracts fr o m the momen t ma t r ix a minimizer whic h is not v ery accurate. It can also happ en that Glop- tiP oly is not able to extract a minimizer, in whic h case first-order moments appro ximate the minimizer (pro vided it is unique, whic h is generically true for ratio nal optimization). The appro ximate minimizer can b e then input to any lo cal o ptimizatio n algorithm a s an initial guess. A comparison of our approac h with other tec hniques of glo bal optimization (rep or t ed e.g. on Hans Mittelmann’s or Arnold Neumaier’s w ebpages) is out of the scop e of this pap er. W e b eliev e how ev er that suc h a comparison would b e fair only if no expert tuning is re- quired fo r alternat ive algorithms. Indeed, when using GloptiPoly the o nly assumption we mak e is that we kno w a ball con taining the global optimizer. Besides this, o ur results are fully repro ducible (Matlab files repro ducing o ur examples are av ailable up on request) and our SDP relaxatio ns are solved with g eneral-purp ose semidefinite programming solv ers. Ac kn owledgmen ts W e are grateful to Mic hel D evy , Jean-Jo s´ e Orteu, T om´ a ˇ s P a jdla, Thierry Sen tenac and Rekha Thomas f o r insigh t f ul discussions on applications of real a lg ebraic geometry a nd SDP in computer vision, and to Josh T ay lor for his feedbac k on the example of section 4.3. 15 References [1] M. M. Ali, C. Khompatrap o rn, Z. B. Zabinsky . A n umerical ev aluation of sev eral sto c hastic algorithms on selected contin uous global optimization test problems. J. Glob al O p tim. , 31(4):635 - 672, 200 5. [2] H. Bersini, M. Dorigo, S. La ngerman, G. Seron t, L. Ga m bardella. Results of the first in ternational con test on evolutionary optimisation. IEEE Intl. Conf. Evolutionary Computation , Nagoy a, Japan, 199 6. [3] F. Kahl, D. Henrion. Glo bally optimal estimates for geometric reconstruction prob- lems. IEEE Intl. Conf. Computer Vision , Beijing , China, 2 0 05. [4] D. Henrion, J. B. Lasserre. GloptiPoly: global o ptimization ov er p olynomials with Matlab and SeDuMi. ACM T r ans. Math. Sof twa r e , 29 :165–194, 2003 . [5] D. Henrion, J. B. Lasserre, J. L¨ ofb erg. G loptiP oly 3: momen ts, optimization and semidefinite progr a mming. Optim. Metho d s and Softwar e , 2 4:761–779 , 200 9 . [6] D. Jib etean, E. de Klerk. Global optimization of ratio nal functions: a semidefinite programming a pproac h. Math. Pr o gr amming , 106:93- 1 09, 20 06. [7] J. B. Lasserre. Con vergen t SDP r elaxations in p olynomial optimization with sparsit y . SIAM J. Optim. , 1 7:822-843 , 2006. [8] J. B. Lasserre. A semidefinite prog r a mming a pproac h to the generalized problem of momen t s. Math. Pr o gr amming , 112:65-9 2, 2008. [9] J. B. Lasserre. Moments, p ositive p olynomials and their applic a tions , Imp erial College Press, London, 2009. [10] S. Schaible, J. Shi. F ra ctional prog ramming: the sum-of-ratios case. Optim. Metho ds Softwar e , 18( 2 ):219–229, 2003. [11] S. W aki, S. Kim, M. Ko jima, M. Muramatsu. Sums of squares a nd semidefinite pro- gramming relaxations for p olynomial optimization problems with structured sparsit y . SIAM J. Optim. , 1 7:218-242 , 2006. [12] H. W aki, S. Kim, M. Ko jima , M. Muramatsu, H. Sugimoto. Spar sePOP: a sparse semidefinite programming relaxation of p olynomial optimization problems. ACM T r a ns. Math. Softwar e , 35:1 -13, 2008. 16

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment