Artificial Immune Privileged Sites as an Enhancement to Immuno-Computing Paradigm

The immune system is a highly parallel, distributed intelligent system with learning, memory, and associative capabilities. The Artificial Immune System (AIS) is an evolutionary paradigm inspired by the biological aspects of the mammalian immune system, whose course of action can inspire new algorithms. The human immune system has motivated scientists and engineers to find powerful information-processing algorithms that have solved complex engineering problems. This work explores a different perspective of the immune system, namely the Immune Privileged Site (IPS), which makes an exception for certain parts of the body by not triggering an immune response to some of the foreign agents found there. While a complete system is secured by an immune system, at certain times it may need to allow activities that would be harmful to other systems but are useful to it, and to learn this over a period of time through the immune privilege model, as Immune Privileged Sites do in the natural immune system.

💡 Research Summary

The paper introduces a novel perspective on artificial immune systems (AIS) by borrowing the concept of Immune Privileged Sites (IPS) from mammalian biology. In natural immunity, certain sites such as the eye, brain, testis, and the pregnant uterus are able to tolerate the presence of foreign antigens without initiating an inflammatory response. This tolerance is mediated by specialized mechanisms involving regulatory T‑cells, TGF‑β, complement inhibitors, and other immunosuppressive factors, ultimately leading to a state of immune “privilege” where the site is effectively ignored by the immune system after an initial exposure period.

Motivated by this biological exception‑making behavior, the authors propose an “Artificial Immune Privilege Algorithm” (AIPA). The algorithm works as follows: (1) continuously scan the environment for external agents (data, processes, objects, etc.); (2) when a new agent is detected, perform the normal defensive action and record the outcome; (3) compare the recorded outcome against a pre‑defined set of rules that constitute a Trust Quotient (Tq); (4) if the outcome satisfies the rules, store the agent’s identifier and characteristics in a “trusted entity” database; (5) on subsequent encounters, the system checks the database first and, if the agent is trusted, bypasses the defensive routine. In effect, the system learns to treat certain agents as “privileged” after a short learning phase, mirroring the biological transition from attack to tolerance.
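The five steps above can be sketched in a few lines of Python. This is only an illustrative reading of the summary, not the authors' implementation: the class name `PrivilegeMonitor`, the `Outcome` dictionary, and the idea of expressing the Trust Quotient as a list of boolean rule functions are all assumptions, since the paper leaves these details abstract.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical types: the paper does not specify a concrete data model.
Outcome = Dict[str, float]
Rule = Callable[[Outcome], bool]


@dataclass
class PrivilegeMonitor:
    defend: Callable[[str], Outcome]   # step 2: the normal defensive action
    trust_rules: List[Rule]            # step 3: rules standing in for the Trust Quotient
    trusted: Dict[str, Outcome] = field(default_factory=dict)  # step 4: trusted-entity store

    def handle(self, agent_id: str) -> str:
        # Step 5: check the trusted store first and bypass the defence on a hit.
        if agent_id in self.trusted:
            return "bypassed"
        # Step 2: run the defensive routine and record its outcome.
        outcome = self.defend(agent_id)
        # Steps 3-4: privilege the agent only if rules exist and all are satisfied.
        if self.trust_rules and all(rule(outcome) for rule in self.trust_rules):
            self.trusted[agent_id] = outcome
            return "defended, now trusted"
        return "defended"


# Toy usage: an agent whose defensive encounter records no damage becomes trusted.
monitor = PrivilegeMonitor(
    defend=lambda agent: {"damage": 0.0 if agent == "maintenance-bot" else 1.0},
    trust_rules=[lambda outcome: outcome["damage"] == 0.0],
)
first = monitor.handle("maintenance-bot")
second = monitor.handle("maintenance-bot")
```

In this sketch a single clean encounter is enough to grant privilege; a real deployment would presumably require several consistent outcomes before trusting an agent.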

Two illustrative applications are described.

-

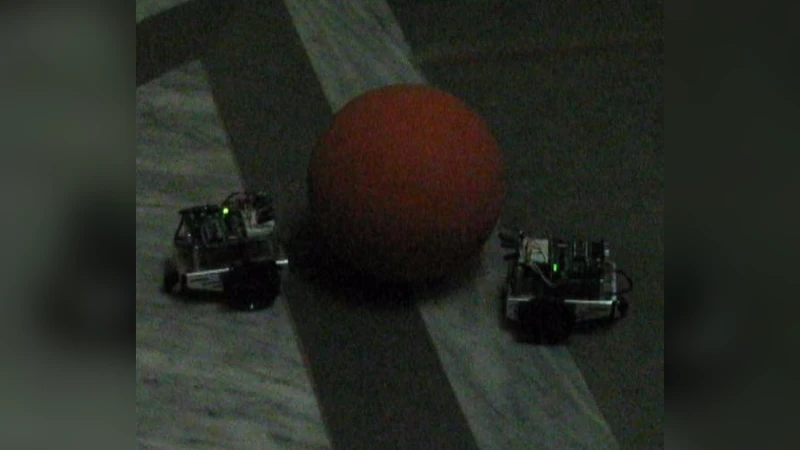

Robotics – A surveillance robot is tasked with following a red ball while a maintenance robot periodically moves the ball. Initially, the surveillance robot treats any moving object as a potential threat and reacts accordingly. After repeated interactions with the maintenance robot, the surveillance robot records the maintenance robot’s signature in its trusted database. Consequently, when the ball is moved again, the robot no longer executes avoidance maneuvers, allowing smoother operation. A secondary “fail‑safe” mechanism is introduced: two robots monitor each other so that if one loses sight of the ball, the other can continue tracking, echoing the redundancy found in natural immune networks.

- Antivirus / Anti‑malware – Conventional antivirus solutions rely on signature matching and heuristic analysis to quarantine malicious software. The authors suggest augmenting this with a privilege mode: when a program is flagged as malicious, the user is prompted with a CAPTCHA. If the user supplies the correct response, the program is removed from the threat database and added to a trusted list. Future scans will then treat the program as benign, preventing repeated false positives. This approach aims to reduce unnecessary alerts and allow intentional, user‑approved software to coexist with security mechanisms.
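The privilege mode described for the antivirus case might look roughly like the following. It is a minimal sketch under stated assumptions: `PrivilegeModeScanner` and its `confirm` callback are hypothetical names, programs are reduced to plain signature strings, and the CAPTCHA prompt is replaced by a callback that returns `True` when the user answers correctly.

```python
from typing import Callable, Iterable, Set


class PrivilegeModeScanner:
    def __init__(self, threat_signatures: Iterable[str], confirm: Callable[[str], bool]):
        self.threats: Set[str] = set(threat_signatures)  # signatures treated as malicious
        self.trusted: Set[str] = set()                   # user-approved signatures
        self.confirm = confirm                           # stand-in for the CAPTCHA prompt

    def scan(self, signature: str) -> str:
        # Trusted programs skip the threat check entirely.
        if signature in self.trusted:
            return "allowed"
        if signature not in self.threats:
            return "clean"
        # Flagged program: ask the user before deciding.
        if self.confirm(signature):
            # Move the signature from the threat database to the trusted list.
            self.threats.discard(signature)
            self.trusted.add(signature)
            return "allowed"
        return "quarantined"


# Toy usage: the user approves only "admin-tool".
prompts = []

def ask_user(signature: str) -> bool:
    prompts.append(signature)
    return signature == "admin-tool"

scanner = PrivilegeModeScanner({"admin-tool", "trojan"}, ask_user)
results = [scanner.scan("admin-tool"), scanner.scan("admin-tool"), scanner.scan("trojan")]
```

Note that the second scan of `admin-tool` never reaches the prompt, which is exactly the property that reduces repeated false positives and also what an attacker would want to exploit.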

The paper emphasizes several strengths: (a) it introduces a biologically inspired “exception” mechanism that shifts AIS from pure detection‑elimination to a learn‑and‑tolerate paradigm; (b) the rule‑based Trust Quotient and simple database architecture make the concept easily extensible to various domains; (c) the generalized framework is demonstrated in both embodied robotics and software security, suggesting broad applicability.

However, the work suffers from notable shortcomings. The authors provide only high‑level pseudo‑code and conceptual diagrams; there is no empirical evaluation, benchmark comparison, or quantitative analysis of detection accuracy, false‑positive rates, or learning speed. In the robotics scenario, the time required for an agent to become trusted, the robustness of the trust database against adversarial manipulation, and the impact on overall system safety are not measured. In the antivirus case, relying on user‑entered CAPTCHA introduces usability concerns and may be vulnerable to social engineering; the paper does not discuss how to prevent malicious actors from deliberately triggering the privilege mode to gain persistent access. Moreover, the definition of the Trust Quotient is left abstract; practical deployment would require domain‑specific rule engineering, yet the paper offers no methodology for deriving these rules.
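To make the missing quantitative analysis concrete, the sketch below computes two of the metrics named above from labelled scan decisions. The function name `detection_metrics` and the `(is_malicious, was_flagged)` record layout are assumptions for illustration; the paper reports no such data, and the records in the usage example are made up purely to exercise the function.

```python
from typing import Dict, Iterable, Optional, Tuple


def detection_metrics(records: Iterable[Tuple[bool, bool]]) -> Dict[str, Optional[float]]:
    """Each record is (is_malicious, was_flagged) for one scanned agent."""
    tp = fp = tn = fn = 0
    for is_malicious, was_flagged in records:
        if is_malicious and was_flagged:
            tp += 1          # threat correctly flagged
        elif is_malicious:
            fn += 1          # threat missed, e.g. wrongly privileged
        elif was_flagged:
            fp += 1          # benign agent flagged
        else:
            tn += 1          # benign agent left alone
    return {
        # None marks a rate that is undefined because its denominator is zero.
        "detection_rate": tp / (tp + fn) if (tp + fn) else None,
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else None,
    }


# Synthetic records, not results from the paper.
sample = [(True, True), (True, False), (False, True),
          (False, False), (False, False), (False, False)]
metrics = detection_metrics(sample)
```

Comparing these rates with and without the privilege mechanism enabled would show whether the learned tolerance lowers false positives without letting real threats through.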

Finally, the security implications of granting “privilege” to previously malicious entities are under‑explored. In biological systems, an IPS can be exploited by pathogens that masquerade as tolerated antigens, leading to chronic infection. Analogously, a software system that learns to ignore certain threats could be subverted if an attacker manages to satisfy the trust rules during the learning phase. The paper acknowledges the novelty of the concept but stops short of proposing safeguards such as periodic re‑evaluation of trusted entities, anomaly detection overlays, or multi‑factor verification.
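One of the safeguards mentioned, periodic re-evaluation of trusted entities, could be approximated by letting trust expire. The following is a hedged sketch rather than anything the paper proposes: `ExpiringTrustStore`, its `ttl_seconds` parameter, and the injectable `clock` are hypothetical, and a fixed time-to-live is only one of several possible re-evaluation policies.

```python
import time
from typing import Callable, Dict


class ExpiringTrustStore:
    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock                        # injectable so the store can be tested
        self._granted_at: Dict[str, float] = {}   # agent id -> time trust was granted

    def grant(self, agent_id: str) -> None:
        # Record (or refresh) the moment the agent passed the trust rules.
        self._granted_at[agent_id] = self.clock()

    def is_trusted(self, agent_id: str) -> bool:
        granted = self._granted_at.get(agent_id)
        if granted is None:
            return False
        if self.clock() - granted >= self.ttl:
            # Trust has expired: drop the entry so the agent is re-evaluated.
            del self._granted_at[agent_id]
            return False
        return True


# Toy usage with a fake clock so expiry can be observed without waiting.
now = [0.0]
store = ExpiringTrustStore(ttl_seconds=10.0, clock=lambda: now[0])
store.grant("maintenance-bot")
trusted_early = store.is_trusted("maintenance-bot")
now[0] = 10.0
trusted_late = store.is_trusted("maintenance-bot")
```

An expired agent then has to pass the full defensive routine and trust rules again, which limits how long an attacker can benefit from having satisfied the rules once during the learning phase.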

In conclusion, the manuscript presents an intriguing and original idea—translating immune privilege into artificial immune computing—and sketches a plausible algorithmic framework. While the conceptual contribution is valuable, the lack of experimental validation, detailed rule specification, and security analysis limits its immediate impact. Future work should focus on implementing the algorithm on real robotic platforms and security suites, measuring performance metrics, and designing robust mechanisms to prevent abuse of the privilege mode. Only then can the proposed “Artificial Immune Privileged Site” become a practical tool in the AIS toolbox.
