Potential of 4d-VAR for exigent forecasting of severe weather

Severe storms, tropical cyclones, and associated tornadoes, floods, lightning, and microbursts threaten life and property. Reliable, precise, and accurate alerts of these phenomena can trigger defensive actions and preparations. However, these crucial weather phenomena are difficult to forecast. The objective of this paper is to demonstrate the potential of 4d-VAR (four-dimensional variational data assimilation) for exigent forecasting (XF) of severe storm precursors and thereby to characterize the probability of a worst-case scenario. 4d-VAR is designed to adjust the initial conditions (IC) of a numerical weather prediction model so that they remain consistent with the uncertainty of the prior estimate of the IC while minimizing the misfit to available observations. XF takes the same approach, but instead of fitting observations, it maximizes or minimizes a measure of damage or loss, or an equivalent proxy. Accomplishing this will require developing a specialized cost function for 4d-VAR. When 4d-VAR solves the XF problem, a by-product will be the value of the background cost function, which provides a measure of the likelihood that the forecast exigent conditions will occur. 4d-VAR has previously been applied to a special case of XF in hurricane modification research. A summary of a case study of Hurricane Andrew (1992) is presented as a prototype of XF. The study of XF is expected to advance the forecasting of high-impact weather events, refine methods for communicating warnings and the potential impacts of exigent weather events to a threatened population, extend to commercially viable products such as freeze forecasts for the citrus industry, and serve as a useful pedagogical tool.

💡 Research Summary

The paper introduces “exigent forecasting” (XF) as a novel application of four‑dimensional variational data assimilation (4d‑VAR) to explore worst‑case scenarios of high‑impact weather events such as severe thunderstorms, tornadoes, and tropical cyclones. Traditional 4d‑VAR seeks the initial atmospheric state that minimizes a cost function J = J_b + J_o, where J_b measures the misfit to a background (prior) state, weighted by the inverse of the background error covariance matrix, and J_o measures the misfit to observations over the assimilation window, weighted by the inverse of the observation error covariance matrix. The method iteratively adjusts the initial conditions (IC) to reduce J, using the gradient of J with respect to the IC, which is computed by integrating the model's adjoint backward in time.
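To make the adjoint step concrete, here is a minimal sketch of strong-constraint 4d‑VAR on a toy problem. It assumes a linear "model" x_{k+1} = M x_k standing in for the forecast model, the conventional factor of 1/2 in J_b and J_o, and one observation vector after every model step. The function names and the fixed-step gradient descent are illustrative choices, not the paper's MM5 implementation: the forward sweep accumulates J_o, and the backward sweep with the transposed model M.T (the adjoint) returns dJ/dx0.

```python
import numpy as np


def fourdvar_cost_and_grad(x0, xb, B_inv, M, H, R_inv, obs):
    """Return (J_b, J_o, dJ/dx0) for a toy strong-constraint 4d-VAR.

    Assumptions: the model is the linear map x_{k+1} = M @ x_k, and
    obs[k] is the observation vector valid after k+1 model steps.
    """
    dxb = x0 - xb
    Jb = 0.5 * dxb @ B_inv @ dxb
    # Forward sweep: integrate the model and store the weighted misfits.
    x = x0.copy()
    forcings = []
    Jo = 0.0
    for y in obs:
        x = M @ x
        d = H @ x - y
        Jo += 0.5 * d @ R_inv @ d
        forcings.append(H.T @ (R_inv @ d))
    # Backward (adjoint) sweep: carry the misfit gradients back to t=0
    # with the transposed model M.T.
    lam = np.zeros_like(x0)
    for f in reversed(forcings):
        lam = M.T @ (lam + f)
    return Jb, Jo, B_inv @ dxb + lam


def minimize_4dvar(xb, B_inv, M, H, R_inv, obs, step=0.1, iters=500):
    """Fixed-step gradient descent from the background state.

    Kept deliberately simple; real systems typically use quasi-Newton
    or conjugate-gradient minimizers.
    """
    x0 = xb.astype(float)
    for _ in range(iters):
        _, _, g = fourdvar_cost_and_grad(x0, xb, B_inv, M, H, R_inv, obs)
        x0 -= step * g
    return x0
```

Comparing the adjoint gradient with centered finite differences of J_b + J_o (a "gradient test") is a cheap way to catch adjoint coding errors before running the minimization.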

In XF, an additional term J_d is introduced to represent a user‑defined “damage” or “benefit” metric, weighted by a factor w_d, yielding J = J_b + J_o + w_d J_d. By choosing J_d appropriately (e.g., a wind‑damage function for hurricanes or the Significant Tornado Parameter (STP) for tornado precursors) together with the sign of w_d, the optimization can be directed either to minimize expected loss (best case) or to maximize a risk metric (worst case); because J itself is always minimized, a worst-case search requires a negative w_d or, equivalently, a sign-reversed J_d. The weight w_d is varied to generate a family of solutions; each solution’s background cost J_b provides a statistical measure of how likely the corresponding IC perturbation is, given the assumed error statistics. Under Gaussian assumptions, and with the conventional factor of 1/2 in J_b, 2J_b follows a χ² distribution with as many degrees of freedom as the control vector has elements, and the prior probability density is proportional to exp(−J_b), so a smaller J_b indicates a more probable scenario.
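The XF variant can be sketched on a similar toy linear model. Assume, hypothetically, a damage proxy that is linear in the final state, J_d = c @ x_N, evaluated at the last observation step. Then J = J_b + J_o + w_d J_d remains a quadratic in the IC plus a linear term, and its minimizer solves a single linear system, so sweeping w_d is cheap. The helper `chi2_sf` converts each solution's J_b into the probability that a Gaussian background error would be at least that large. It relies on the fact that, with the factor of 1/2 in J_b, 2J_b follows χ² with n degrees of freedom. Both function names are hypothetical.

```python
import math

import numpy as np


def chi2_sf(x, k):
    """P(chi^2_k >= x), i.e. the regularized upper incomplete gamma Q(k/2, x/2),
    built with the recurrence Q(s+1, z) = Q(s, z) + z**s * exp(-z) / Gamma(s+1)."""
    if x <= 0.0:
        return 1.0
    z = 0.5 * x
    # Start from Q(1, z) = exp(-z) (even k) or Q(1/2, z) = erfc(sqrt(z)) (odd k).
    s, q = (1.0, math.exp(-z)) if k % 2 == 0 else (0.5, math.erfc(math.sqrt(z)))
    while s < 0.5 * k:
        q += math.exp(s * math.log(z) - z - math.lgamma(s + 1.0))
        s += 1.0
    return q


def xf_solve(w_d, xb, B_inv, M, H, R_inv, obs, c):
    """Minimize J = J_b + J_o + w_d * J_d for a linear toy model.

    Hypothetical damage proxy: J_d = c @ x_N with N = len(obs).
    w_d < 0 pushes J_d up (worst case); w_d > 0 pushes it down (best case).
    Returns (x0, J_b, J_d).
    """
    A = np.array(B_inv, dtype=float)          # Hessian of J (copy of B^-1)
    rhs = (B_inv @ xb).astype(float)
    Mk = np.eye(xb.size)
    for y in obs:
        Mk = M @ Mk                            # Mk = M^k
        HMk = H @ Mk
        A += HMk.T @ R_inv @ HMk
        rhs += HMk.T @ R_inv @ y
    rhs -= w_d * (Mk.T @ c)                    # gradient of w_d * c @ (M^N x0)
    x0 = np.linalg.solve(A, rhs)
    dx = x0 - xb
    Jb = 0.5 * dx @ B_inv @ dx
    return x0, float(Jb), float(c @ (Mk @ x0))
```

Sweeping w_d through 0, −0.5, −1, −2, and so on, and tabulating J_d against `chi2_sf(2 * Jb, n)`, traces out the family of solutions: each step raises the damage proxy at the price of a less probable initial state. J_b is not guaranteed to be monotone in w_d when the observations themselves pull the analysis away from the background.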

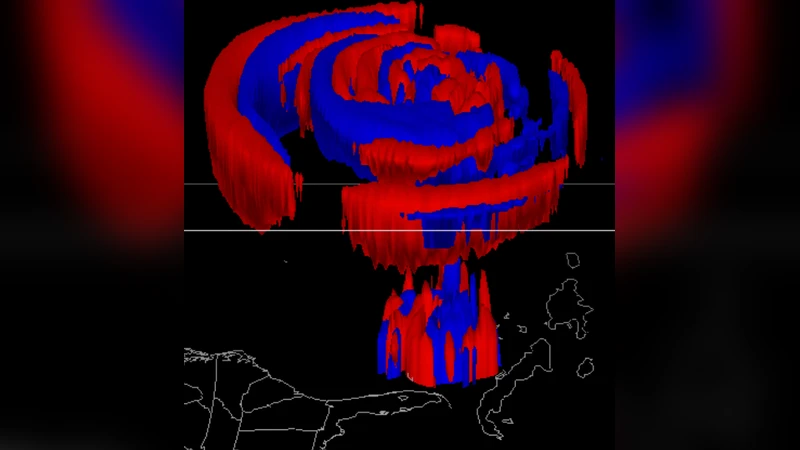

The authors illustrate the concept with two main applications. First, they apply XF to hurricane control, using the MM5 4d‑VAR system to steer Hurricane Iniki away from Kauai and to reduce damaging winds in Hurricane Andrew. In these experiments, J_d quantifies property loss as a function of wind speed, affected area, and land‑use values. The optimal perturbations, often quasi‑axisymmetric temperature anomalies, are found to convert kinetic energy into thermal potential energy, temporarily weakening the storm. Experiments that restrict perturbations to temperature alone succeed less reliably than those using the full control vector, while perturbations limited to pressure or vertical velocity give mixed results, highlighting the importance of a multi‑variable control vector.
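The hurricane experiments need a damage cost J_d built from wind speed, area, and land-use value. The sketch below assumes a hypothetical form, not the one used in the paper: each grid cell contributes value × area × f(wind), where f grows cubically above a threshold speed, saturates at 1, and equals 0.5 at a "half-damage" speed. The parameter names and default speeds (25 and 60 m/s) are illustrative only. The returned gradient with respect to wind is the quantity that would seed the backward adjoint integration at the verification time.

```python
import numpy as np


def damage_and_grad(wind, area, value, w_thresh=25.0, w_half=60.0):
    """Hypothetical property-loss proxy and its gradient w.r.t. wind.

    wind: per-cell wind speed (m/s); area: per-cell area; value: per-cell
    property value per unit area. Damage fraction f = v**3 / (1 + v**3) with
    v = max(wind - w_thresh, 0) / (w_half - w_thresh), so f(w_half) = 0.5.
    """
    scale = w_half - w_thresh
    v = np.maximum(wind - w_thresh, 0.0) / scale
    frac = v**3 / (1.0 + v**3)
    loss = float(np.sum(value * area * frac))
    # d f / d wind = 3 v^2 / (1 + v^3)^2 / scale, and zero below the threshold.
    dfrac_dwind = 3.0 * v**2 / (1.0 + v**3) ** 2 / scale
    return loss, value * area * dfrac_dwind
```

The saturating shape keeps the gradient bounded at very high winds, so the optimizer is not rewarded without limit for intensifying the strongest cells.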

Second, the paper outlines how XF could be used for tornado‑precursor forecasting. Because tornadoes are too small to be resolved directly by the forecast model, the authors propose using STP, a composite index of instability, shear, and other mesoscale parameters, as a proxy for tornado risk. By maximizing STP through the XF cost function, the method identifies IC perturbations that would raise STP values at specific locations and times. Examining how J_b varies with w_d (and hence with the STP attained) at each grid point yields a “risk map” indicating where a worst‑case tornado scenario is both plausible (low J_b) and severe (high STP). This approach complements ensemble forecasting, which captures overall forecast uncertainty but may not highlight the most hazardous tail of the distribution.
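For the tornado application, the sketch below first computes one commonly cited fixed-layer form of STP: (sbCAPE/1500 J/kg) × ((2000 − sbLCL)/1000 m) × (0–1 km SRH/150 m²/s²) × (0–6 km bulk wind difference/20 m/s). In this form the LCL term is 1 below 1000 m and 0 above 2000 m, and the shear term is 0 below 12.5 m/s and capped at 1.5 above 30 m/s. This variant is an assumption, since the summary does not say which STP formulation the paper uses. The sketch then builds a linearized stand-in for the risk map. If g_k = dSTP_k/dx0 is available (e.g., from an adjoint) and J_b = 1/2 δx^T B^-1 δx, a Lagrange-multiplier argument shows that the smallest J_b lifting STP_k by a deficit Δ_k is Δ_k² / (2 g_k^T B g_k). This closed form is a tangent-linear approximation, not the paper's full nonlinear XF solution, and the function names are hypothetical.

```python
import numpy as np


def stp_fixed_layer(sbcape, sblcl, srh1, bwd6):
    """Fixed-layer Significant Tornado Parameter (assumed formulation).

    sbcape: surface-based CAPE (J/kg); sblcl: surface-based LCL height (m);
    srh1: 0-1 km storm-relative helicity (m^2/s^2); bwd6: 0-6 km bulk wind
    difference (m/s). Scalars or same-shape arrays.
    """
    cape_term = np.asarray(sbcape, dtype=float) / 1500.0
    # LCL term: 1 below 1000 m, 0 above 2000 m, linear in between.
    lcl_term = np.clip((2000.0 - np.asarray(sblcl, dtype=float)) / 1000.0, 0.0, 1.0)
    srh_term = np.asarray(srh1, dtype=float) / 150.0
    # Shear term: 0 below 12.5 m/s, capped at 1.5 (i.e. 30 m/s) above.
    bwd6 = np.asarray(bwd6, dtype=float)
    shear_term = np.where(bwd6 < 12.5, 0.0, np.minimum(bwd6, 30.0) / 20.0)
    return cape_term * lcl_term * srh_term * shear_term


def linearized_min_jb(stp, stp_grad, B, threshold=1.0):
    """Per grid point k, the smallest J_b = 0.5 dx^T B^-1 dx for which the
    linearized change stp_grad[k] @ dx lifts STP_k to `threshold`.

    stp: (K,) current values; stp_grad: (K, n) rows dSTP_k/dx0; B: (n, n).
    Returns 0 where STP already meets the threshold, inf where unreachable.
    """
    deficit = np.maximum(threshold - np.asarray(stp, dtype=float), 0.0)
    gBg = np.einsum("ki,ij,kj->k", stp_grad, B, stp_grad)  # g_k^T B g_k
    with np.errstate(divide="ignore", invalid="ignore"):
        jb = deficit**2 / (2.0 * gBg)
    return np.where(deficit == 0.0, 0.0, jb)
```

Mapping these values over the grid highlights points where a significant-tornado environment (a threshold of 1 here, an illustrative default) is reachable with a small, and therefore plausible, IC perturbation.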

The methodology section discusses practical challenges. Accurate background error covariances for storm‑scale forecasts remain an open problem; the authors suggest using climatological covariances or hybrid ensemble/4d‑VAR covariances. They also note that XF must operate within the tight time constraints of operational forecasting, and they recommend restricting the cost function in space and time and tuning w_d carefully to avoid unrealistic solutions. Subjective expert evaluation (pattern matching, physical plausibility) is advocated as a final filter.
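Two of these recommendations, restricting the cost function in space and time and tuning w_d, can be sketched as follows. `restricted_damage` sums a per-cell damage proxy only inside a target region and time window, so its gradient, and therefore the adjoint forcing, vanishes everywhere else. `tune_weight` brackets and bisects for the largest |w_d| whose XF solution keeps J_b under a plausibility bound. Both functions, and the idea of capping J_b at a bound (for instance, half of a high percentile of χ² with n degrees of freedom), are illustrative assumptions rather than procedures described in the paper.

```python
import numpy as np


def restricted_damage(fields, region_mask, t_window, damage_fn):
    """Damage proxy summed only over a target region and time window.

    fields: (T, ny, nx) forecast variable (e.g. wind speed); region_mask:
    (ny, nx) boolean; t_window: (t_start, t_end) step indices, end-exclusive;
    damage_fn(field) -> (per-cell damage, per-cell derivative).
    The gradient is zero outside the window, so the adjoint is forced only there.
    """
    t_start, t_end = t_window
    cost = 0.0
    grad = np.zeros(fields.shape, dtype=float)
    for t in range(t_start, t_end):
        val, dval = damage_fn(fields[t])
        cost += float(np.sum(val[region_mask]))
        grad[t][region_mask] = dval[region_mask]
    return cost, grad


def tune_weight(jb_of_w, jb_max, w_start=1.0, tol=1e-6):
    """Largest weight magnitude w with jb_of_w(w) <= jb_max.

    jb_of_w(w) is assumed to return J_b of the XF solution for |w_d| = w and
    to grow monotonically with w; the caller applies the sign of w_d.
    """
    w_lo, w_hi = 0.0, w_start
    # Expand the bracket until the plausibility bound is exceeded.
    for _ in range(60):
        if jb_of_w(w_hi) > jb_max:
            break
        w_lo, w_hi = w_hi, 2.0 * w_hi
    else:
        raise ValueError("jb_max is never exceeded; the bound is not binding")
    # Bisect: w_lo always satisfies the bound, w_hi never does.
    while w_hi - w_lo > tol:
        mid = 0.5 * (w_lo + w_hi)
        if jb_of_w(mid) <= jb_max:
            w_lo = mid
        else:
            w_hi = mid
    return w_lo
```

In practice each call to `jb_of_w` would wrap one full XF minimization, so every bisection step costs a 4d‑VAR run; a coarse starting bracket and a loose tolerance keep this affordable within operational time limits.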

Finally, the paper outlines future directions: extending XF to commercial products (e.g., citrus freeze forecasts), incorporating parametric uncertainties (e.g., surface roughness) into the adjoint, and using XF as a pedagogical tool for training forecasters. The authors argue that XF provides a systematic, probabilistically grounded way to ask “what is the worst plausible outcome?” and to quantify its likelihood, thereby improving decision‑making for high‑impact weather events.
