A General Framework for Development of the Cortex-like Visual Object Recognition System: Waves of Spikes, Predictive Coding and Universal Dictionary of Features

This study focuses on the development of a cortex-like visual object recognition system. We propose a general framework consisting of three hierarchical levels (modules) that functionally correspond to the V1, V4 and IT areas. Both bottom-up and top-down connections between the hierarchical levels V4 and IT are employed. The higher the degree of matching between the input and the preferred stimulus, the shorter the response time of the neuron. Information about a single stimulus is therefore distributed in time and transmitted by waves of spikes. The reciprocal connections and waves of spikes implement predictive coding: an initial hypothesis is generated from the information delivered by the first wave of spikes and is tested against the information carried by the subsequent waves. Development is treated as the extraction and accumulation of features in V4 and objects in IT. Once stored, a feature can be discarded if it is rarely activated, which updates the feature repository; consequently, the objects in IT are also updated. This illustrates the growth and dynamic change of the topological structures of V4, IT and the connections between these areas.

💡 Research Summary

The paper presents a biologically inspired hierarchical visual object recognition framework that mirrors the ventral visual stream (VVS) of the primate brain, specifically the V1, V4, and inferior temporal (IT) areas. The authors construct three processing levels, each corresponding to one of these cortical regions, and interconnect them with both bottom‑up (feed‑forward) and top‑down (feedback) pathways.

V1 Modeling

At the lowest level, V1 is simulated using four Gabor filter banks oriented at 0°, 45°, 90°, and 135°. Each filter bank convolves the input image, producing four orientation maps. An all‑to‑all lateral inhibitory network selects, for each pixel, the orientation with the strongest response, yielding an Integrated Orientation Map (IOM). This map serves as the raw edge‑based representation that feeds forward to V4.
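The V1 stage described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: the kernel size, `sigma`, `wavelength` and `gamma` values are assumptions, a per-pixel argmax stands in for the all-to-all lateral inhibitory network, and the `-1` label for pixels without any response is a hypothetical convention. In this sketch the 0° filter varies along the horizontal axis, so it responds most strongly to vertical bars.

```python
import math

ORIENTATIONS_DEG = (0, 45, 90, 135)  # the four filter banks named in the summary


def gabor_kernel(theta_deg, half=2, sigma=1.5, wavelength=4.0, gamma=0.5):
    """Build a (2*half+1)^2 Gabor kernel as {(dx, dy): weight}.

    All parameter values are illustrative assumptions.
    """
    t = math.radians(theta_deg)
    kernel = {}
    for dy in range(-half, half + 1):
        for dx in range(-half, half + 1):
            xr = dx * math.cos(t) + dy * math.sin(t)    # axis of the cosine carrier
            yr = -dx * math.sin(t) + dy * math.cos(t)   # axis along the bar
            envelope = math.exp(-(xr ** 2 + (gamma * yr) ** 2) / (2 * sigma ** 2))
            kernel[(dx, dy)] = envelope * math.cos(2 * math.pi * xr / wavelength)
    return kernel


def filter_response(image, kernel, row, col):
    """Absolute filter response at one pixel; pixels outside the image count as 0."""
    h, w = len(image), len(image[0])
    total = 0.0
    for (dx, dy), weight in kernel.items():
        r, c = row + dy, col + dx
        if 0 <= r < h and 0 <= c < w:
            total += weight * image[r][c]
    return abs(total)


def integrated_orientation_map(image, eps=1e-9):
    """Per pixel, keep the index of the strongest orientation (argmax stand-in
    for lateral inhibition); -1 marks pixels where no filter responds."""
    kernels = [gabor_kernel(t) for t in ORIENTATIONS_DEG]
    iom = []
    for row in range(len(image)):
        out_row = []
        for col in range(len(image[0])):
            responses = [filter_response(image, k, row, col) for k in kernels]
            best = max(range(len(responses)), key=responses.__getitem__)
            out_row.append(best if responses[best] > eps else -1)
        iom.append(out_row)
    return iom
```

Calling `integrated_orientation_map` on a small image containing a single vertical bar labels the bar's centre pixel with index 0, while a horizontal bar yields index 2 (the 90° filter).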

V4 Processing

V4 is divided into three functional neuron types:

- V4‑INT neurons that simply pass the IOM forward.

- V4‑gSOM neurons (growing Self‑Organizing Maps) that receive 3×3 patches of the IOM. The gSOM continuously expands: a new neuron is created whenever an incoming patch is farther than 0.1·dist_V4 from all existing prototypes, where dist_V4 is the average Euclidean distance among stored prototypes. Each prototype thus becomes a visual feature (a “center” for later matching).

- V4‑RBF neurons that implement Gaussian radial basis functions. For a given input patch X and a prototype P_i, the response is exp(−β_V4‖X−P_i‖²). The tuning parameter β_V4 is set to the inverse of twice the variance, with variance chosen as one‑tenth of dist_V4.

The V4‑RBF layer densely tiles the IOM, producing two derived maps: a Feature Map (the index of the best‑matching prototype at each location) and a Response Map (the corresponding RBF activation values).
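The growth rule and the RBF response described for V4 can be sketched as follows. The 0.1 ratio, the definition of dist_V4 and the formula for β_V4 are taken from the summary; everything else is an assumption made for illustration, in particular the bootstrapping behaviour (the first patch is always stored, and with a single prototype any non-identical patch is stored) and the function names `grow` and `best_match`.

```python
import math
from itertools import combinations


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def mean_pairwise_distance(prototypes):
    """dist_V4: average Euclidean distance among stored prototypes (0 if fewer than 2)."""
    pairs = list(combinations(prototypes, 2))
    if not pairs:
        return 0.0
    return sum(euclidean(a, b) for a, b in pairs) / len(pairs)


def grow(prototypes, patch, ratio=0.1):
    """Store `patch` as a new prototype if it is farther than ratio * dist_V4
    from every existing prototype. Returns True when a neuron was added."""
    threshold = ratio * mean_pairwise_distance(prototypes)
    if all(euclidean(patch, p) > threshold for p in prototypes):
        prototypes.append(tuple(patch))
        return True
    return False


def beta_from_dist(dist, ratio=0.1):
    """beta = 1 / (2 * variance), with variance = one tenth of dist."""
    return 1.0 / (2.0 * ratio * dist)


def rbf_response(patch, prototype, beta):
    return math.exp(-beta * euclidean(patch, prototype) ** 2)


def best_match(patch, prototypes):
    """Return (prototype index, RBF response): one Feature Map entry and the
    matching Response Map entry. Needs at least two distinct prototypes,
    otherwise dist_V4 is 0 and beta is undefined."""
    beta = beta_from_dist(mean_pairwise_distance(prototypes))
    responses = [rbf_response(patch, p, beta) for p in prototypes]
    idx = max(range(len(responses)), key=responses.__getitem__)
    return idx, responses[idx]
```

Applying `best_match` to every 3×3 patch position of the IOM would fill the Feature Map with the returned indices and the Response Map with the returned activations.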

Waves of Spikes

A key novelty is the temporal “wave of spikes” encoding. Neurons with higher normalized activation fire earlier: those with response = 1 generate the first spike wave; neurons with response ≥ 0.9 fire in the second wave, and so on. Consequently, the static visual information is unfolded into a sequence of spike waves that travel upward to IT. The first wave contains only perfectly matched features; subsequent waves add progressively weaker matches, effectively delivering a coarse‑to‑fine representation over time.
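One way to picture the latency code is to bin the Response Map into waves. The sketch below assumes a fixed step of 0.1 between waves, so that wave 1 holds responses equal to 1, wave 2 those in [0.9, 1), wave 3 those in [0.8, 0.9), and so on; the summary only states the first two thresholds, so the regular step, the cap of ten waves and the function names are assumptions.

```python
def wave_index(response, step=0.1, n_waves=10, eps=1e-9):
    """Return the 1-based wave in which a neuron with this normalized response
    fires, or None if the response is too weak to fire within n_waves."""
    for k in range(n_waves):
        # Wave k+1 collects every response that reaches the threshold 1 - k*step
        # and did not already qualify for an earlier wave.
        if response >= 1.0 - k * step - eps:
            return k + 1
    return None


def spike_waves(response_map, feature_map, step=0.1, n_waves=10):
    """Group (row, col, feature) triples by the wave in which they are sent to IT."""
    waves = {}
    for r, row in enumerate(response_map):
        for c, response in enumerate(row):
            k = wave_index(response, step, n_waves)
            if k is not None:
                waves.setdefault(k, []).append((r, c, feature_map[r][c]))
    return waves
```

Reading the resulting dictionary in increasing key order reproduces the temporal unfolding: perfectly matched features first, progressively weaker matches afterwards.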

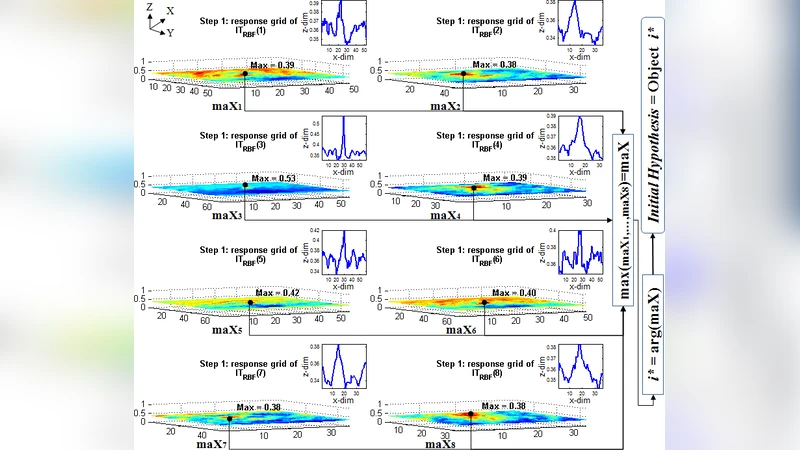

IT Modeling

The IT level also employs a growing SOM (IT‑gSOM) to store whole objects. Each stored object becomes the centre O_bj of a set of IT‑RBF neurons. The IT‑RBF response is exp(−β_IT‖X−O_bj‖²), where X is the current Feature Map and β_IT is derived analogously to β_V4, using the average distance among feature numbers (dist_IT). A threshold α = 0.67 (on a normalized scale) determines whether an IT‑RBF unit’s response is sufficient for recognition; responses below α indicate a novel object.
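The recognise-or-store decision at the IT level can be illustrated with a short sketch. The RBF formula and the threshold α = 0.67 come from the summary; the flattened-list representation of the Feature Map, the function name `classify_or_store` and the choice to pass β_IT in as a ready-made number (rather than deriving it from dist_IT) are assumptions made to keep the example small.

```python
import math

ALPHA = 0.67  # recognition threshold quoted in the summary


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def it_rbf(x, obj, beta):
    """IT-RBF response exp(-beta * ||X - O||^2) for a flattened Feature Map x."""
    return math.exp(-beta * squared_distance(x, obj))


def classify_or_store(x, objects, beta, alpha=ALPHA):
    """Return (object index, response, is_new).

    If the best IT-RBF response reaches alpha, x is recognised as that object.
    Otherwise x is treated as novel and appended to `objects` (the IT-gSOM grows).
    """
    if objects:
        responses = [it_rbf(x, o, beta) for o in objects]
        best = max(range(len(objects)), key=responses.__getitem__)
        if responses[best] >= alpha:
            return best, responses[best], False
    objects.append(list(x))
    return len(objects) - 1, 1.0, True
```

With an empty repository the first Feature Map is always stored; a later map that differs in a single feature number is recognised as the same object, while a very different map is stored as a second object.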

Predictive Coding Interaction

Predictive coding is realized through the reciprocal V4‑IT connections. When the first spike wave reaches IT, the IT‑RBF unit with the highest activation proposes a hypothesis object. As later spike waves arrive, the hypothesis is re‑evaluated: if the cumulative evidence raises the activation above α, the hypothesis is confirmed; otherwise, the system may reject it and create a new IT‑gSOM entry. Feedback from IT‑RBF units modulates V4‑RBF activity (amplification), thereby influencing subsequent spike waves and refining the bottom‑up signal.
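The hypothesise-then-test loop can be sketched as below. The summary does not say how an IT unit scores a partially delivered Feature Map, so this example assumes a simple stand-in: the squared distance is the number of stored positions whose feature is still missing or differs, which makes the response rise as later waves fill in the gaps. The feedback amplification of V4-RBF activity is not modelled, and the names `it_response` and `recognise` are hypothetical.

```python
import math

ALPHA = 0.67  # recognition threshold quoted in the summary


def it_response(partial, stored, beta):
    """Assumed scoring rule: exp(-beta * mismatches), where a mismatch is a
    stored position whose feature is undelivered or different in `partial`."""
    mismatches = sum(1 for pos, feat in stored.items() if partial.get(pos) != feat)
    return math.exp(-beta * mismatches)


def recognise(waves, objects, beta, alpha=ALPHA):
    """waves: list of {position: feature} dicts, earliest wave first.
    objects: {name: {position: feature}} stored in IT.
    Returns (hypothesis, confirmed, number of waves used)."""
    partial = {}
    hypothesis = None
    for n, wave in enumerate(waves, start=1):
        partial.update(wave)  # evidence accumulates across waves
        if hypothesis is None:
            # The first wave selects the best-matching stored object as hypothesis.
            hypothesis = max(
                objects, key=lambda name: it_response(partial, objects[name], beta)
            )
        if it_response(partial, objects[hypothesis], beta) >= alpha:
            return hypothesis, True, n
    # Never reached alpha: the caller may reject the hypothesis and store a new object.
    return hypothesis, False, len(waves)
```

In this sketch a hypothesis formed on the first wave is confirmed as soon as enough of the stored object's features have arrived, which is the "fast initial guess, then refinement" behaviour the summary describes.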

Developmental Dynamics

Both V4 and IT exhibit continual growth and pruning. New visual features that appear frequently are added to the V4‑gSOM; rarely activated features are removed, leading to an evolving feature repository. Likewise, IT‑gSOM expands when novel objects are encountered and contracts when objects become obsolete. This dynamic restructuring reflects the authors’ notion of “development” as the emergence of new neurons and inter‑level connections driven by visual experience.
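Pruning and its knock-on effect on IT can be pictured with a small sketch. The summary gives no concrete pruning rule, so the activation counter, the `min_activations` cut-off and both function names are assumptions; the only point illustrated is that removing a V4 feature also changes the objects that referenced it.

```python
def prune_features(activation_counts, min_activations):
    """Split feature ids into (kept, removed) by how often each was activated."""
    kept = [f for f, n in activation_counts.items() if n >= min_activations]
    removed = [f for f, n in activation_counts.items() if n < min_activations]
    return kept, removed


def update_objects(objects, removed):
    """Drop pruned features from every stored object ({name: {position: feature}})."""
    removed = set(removed)
    return {
        name: {pos: f for pos, f in feats.items() if f not in removed}
        for name, feats in objects.items()
    }
```

For example, if feature 7 was activated only once and the cut-off is 2, it is removed from the repository and from every object that contained it.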

Experimental Validation

The authors test the architecture on ten grayscale stimuli (hand and cup silhouettes, 100 × 100 px). They demonstrate:

- Extraction of 3×3 orientation‑based prototypes in V4.

- Generation of successive spike waves that progressively convey more of the stimulus.

- Successful hypothesis formation and confirmation via predictive coding after a few spike waves.

- Adaptive growth of the feature and object repositories over multiple presentations.

Significance

The framework bridges two major trends in computational neuroscience and AI: (1) incorporation of realistic cortical timing (spike latency) and bidirectional processing, and (2) continual learning through self‑organizing maps. By coupling wave‑based spike encoding with predictive coding, the model reproduces the brain’s “fast initial guess → iterative refinement” strategy, achieving rapid yet accurate object recognition without relying on deep feed‑forward cascades alone. This work thus offers a concrete, testable architecture for building more brain‑like visual systems that can learn and reorganize autonomously over time.
