Opinions within Media, Power and Gossip

Despite the increasing diffusion of Internet technology, TV remains the principal medium of communication. People’s perceptions, knowledge, beliefs and opinions about matters of fact are (in)formed through the information reported by the mass media. However, a single source of information (and consensus) is a potential cause of anomalies in the structure and evolution of a society. Hence, since the information available (and the way it is reported) is fundamental to our perceptions and opinions, defining the conditions under which good information can be disseminated is a pressing challenge. In this paper, starting from a report on the Italian political campaign of 2008, we derive a socio-cognitive computational model of opinion dynamics in which agents are informed by different sources of information. We then present a what-if analysis, performed through simulations over the model’s parameter space. In particular, the implemented scenario includes three main streams of information acquisition, which differ in both the content and the perceived reliability of the messages spread. Agents update their internal opinions either by accessing one of the information sources, namely media and experts, or by exchanging information with one another. They are also endowed with cognitive mechanisms to accept, reject or partially consider the acquired information.

💡 Research Summary

The paper investigates how different communication channels—traditional broadcast media, expert sources, and peer‑to‑peer (P2P) gossip—shape public opinion in a society where television still dominates despite the spread of the Internet. Using data from the 2008 Italian political campaign, the authors construct a socio‑cognitive agent‑based model that extends the classic bounded‑confidence opinion dynamics framework in two important ways. First, each agent holds two continuous opinions (representing welfare and security concerns) rather than a single scalar value, reflecting the multi‑issue nature of real political debates. Second, agents receive external information from two distinct sources: a “media” source that repeatedly broadcasts a fixed message (that security is more important than welfare) to a configurable fraction of the population, and an “expert” source that provides highly reliable information and is accessed through the social network.

Agents are embedded in a scale‑free network generated by preferential attachment, mirroring real‑world social structures with a few highly connected hubs. Interaction follows the bounded‑confidence rule: if the absolute difference between two agents’ opinions is below a tolerance threshold t, the receiving agent moves a fraction m toward the other’s opinion. Experts are modeled as especially persuasive: their messages are accepted regardless of the tolerance threshold.
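
The network construction and the update rule described above can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the function names, the `links_per_node` parameter, the fully connected seed core, and the choice to apply the tolerance test separately to each of the two opinion dimensions are all assumptions made here.

```python
import random


def preferential_attachment(n, links_per_node, rng):
    """Grow an undirected graph in which each new node links to
    `links_per_node` existing nodes, chosen with probability
    proportional to their current degree (assumed construction)."""
    neighbors = {i: set() for i in range(n)}
    pool = []  # every node appears once per incident edge (degree-weighted)

    # Seed: a fully connected core of links_per_node + 1 nodes.
    core = links_per_node + 1
    for i in range(core):
        for j in range(i + 1, core):
            neighbors[i].add(j)
            neighbors[j].add(i)
            pool += [i, j]

    # Growth: attach each remaining node to distinct degree-weighted targets.
    for new in range(core, n):
        chosen = set()
        while len(chosen) < links_per_node:
            chosen.add(rng.choice(pool))
        for old in chosen:
            neighbors[new].add(old)
            neighbors[old].add(new)
            pool += [new, old]
    return neighbors


def update_opinion(receiver, sender, t, m, sender_is_expert=False):
    """Bounded-confidence step for a (welfare, security) opinion pair.

    The receiver moves a fraction m toward the sender on every dimension
    where the absolute difference is below the tolerance t. Messages from
    experts bypass the tolerance test. Per-dimension testing is an
    assumption of this sketch.
    """
    updated = []
    for r, s in zip(receiver, sender):
        if sender_is_expert or abs(s - r) < t:
            updated.append(r + m * (s - r))
        else:
            updated.append(r)
    return tuple(updated)


if __name__ == "__main__":
    rng = random.Random(42)
    graph = preferential_attachment(n=200, links_per_node=2, rng=rng)
    print("largest degree:", max(len(v) for v in graph.values()))
    print(update_opinion((0.2, 0.9), (0.4, 0.1), t=0.3, m=0.5))
    print(update_opinion((0.2, 0.9), (0.4, 0.1), t=0.3, m=0.5, sender_is_expert=True))
```

In the first call only the first dimension is close enough to be updated; in the second, the expert flag forces an update on both dimensions.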

The simulation explores a four‑dimensional parameter space: (1) media reach (10 %–70 % of agents receive the broadcast each round), (2) expert prevalence (0 %–30 % of agents have direct expert access), (3) the “white‑zone” proportion (agents not reached by either media or experts, 0 %–40 %), and (4) tolerance levels (t = 0.1, 0.3, 0.5 representing conservative, moderate, and open agents).
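
One way to enumerate this parameter space is shown below. The ranges and the three tolerance values come from the description above; the 10-percentage-point step and the dictionary keys are assumptions of this sketch, since the sampling resolution is not stated.

```python
from itertools import product

# Ranges taken from the text; the 10-point step is an assumption.
MEDIA_REACH_PCT = range(10, 71, 10)  # 10 % to 70 % of agents per round
EXPERT_PCT = range(0, 31, 10)        # 0 % to 30 % with direct expert access
WHITE_ZONE_PCT = range(0, 41, 10)    # 0 % to 40 % reached by neither source
TOLERANCE = (0.1, 0.3, 0.5)          # conservative, moderate, open


def parameter_grid():
    """Return one configuration dict per point of the four-dimensional grid."""
    return [
        {
            "media_reach": media / 100,
            "expert_share": expert / 100,
            "white_zone": white / 100,
            "tolerance": t,
        }
        for media, expert, white, t in product(
            MEDIA_REACH_PCT, EXPERT_PCT, WHITE_ZONE_PCT, TOLERANCE
        )
    ]


if __name__ == "__main__":
    grid = parameter_grid()
    print(len(grid), "configurations")
    print(grid[0])
```

Each dictionary could then be passed to a single simulation run, so that results can be grouped by any one of the four dimensions.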

Key findings include:

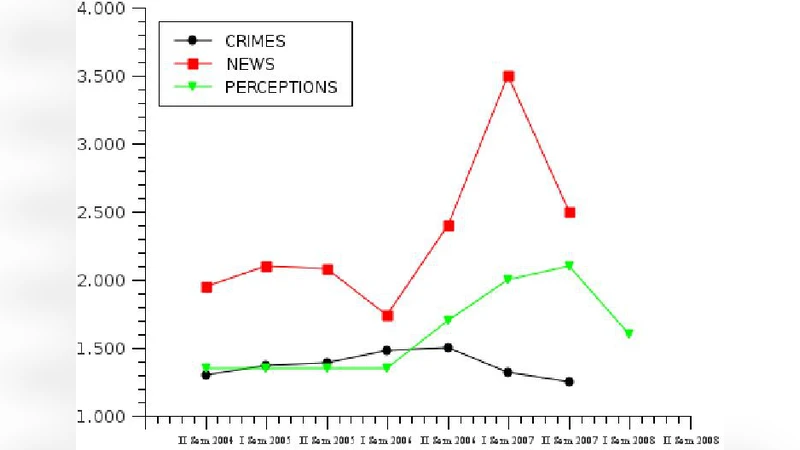

- When media reach is below roughly 30 %, P2P gossip dominates opinion formation, and the population maintains a balanced distribution of welfare and security preferences. Above this threshold, the broadcast message quickly drives the entire system toward a security‑biased consensus, illustrating the powerful agenda‑setting effect of mass media.

- Introducing experts at a modest level (≈10 % of agents) markedly improves the overall quality of information, especially when agents are less tolerant (low t). Experts act as correctors of “information lemons”, counteracting the bias introduced by the media and fostering a more equitable welfare‑security balance.

- A sizable white‑zone (≥20 % of agents) weakens both media and expert influence, leading to higher opinion variance and stronger polarization. This result underscores the risk of information asymmetry: when a substantial portion of the population is disconnected from reliable sources, societal cohesion deteriorates.

- Higher tolerance (large t) amplifies the impact of both media and experts, accelerating convergence but reducing opinion diversity. Conversely, low tolerance preserves multiple opinion clusters; in this regime, experts can sustain minority viewpoints that would otherwise be suppressed.

The authors argue that a mixed communication ecosystem—where expert nodes are embedded within a P2P network alongside a regulated broadcast media—can mitigate the spread of biased or “lemon” information. Policy implications include strengthening expert networks (e.g., through fact‑checking hubs), reducing the white‑zone via digital inclusion initiatives, and promoting media literacy to calibrate citizens’ tolerance thresholds.

Limitations are acknowledged: the two opinion dimensions are treated as independent, whereas real issues are often interdependent; expert credibility is static rather than dynamically evolving; and the empirical grounding is limited to a single Italian election, which may constrain generalizability. Future work is proposed to model inter‑issue dependencies, introduce time‑varying trust, and test the framework across diverse cultural and political contexts.
