A Learning Algorithm based on High School Teaching Wisdom

A learning algorithm based on primary school teaching and learning is presented. The methodology is to continuously evaluate a student and to give them training on the examples for which they repeatedly fail, until they can correctly answer all types of questions. This incremental learning procedure produces better learning curves by requiring the student to dedicate their learning time optimally to the failed examples. When used in machine learning, the algorithm is found to train a machine on data with maximum variance in the feature space, so that the generalization ability of the network improves. The algorithm has interesting applications in data mining, model evaluation and rare-object discovery.

💡 Research Summary

The paper introduces a learning paradigm inspired by elementary‑school teaching practice: a learner is evaluated again and again and then given additional training specifically on the examples they keep failing. The authors formalize this intuition into an algorithm that proceeds in four steps: (1) run the current model on the entire training set and record the loss for each sample; (2) select a “hard‑example” subset consisting of all samples whose loss exceeds a predefined threshold; (3) perform one or more epochs of stochastic gradient updates only on this hard subset; and (4) repeat steps 1‑3 until the hard subset becomes empty or a maximum number of iterations is reached.
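The four-step loop can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the model is a hypothetical NumPy logistic regression, the per-sample loss is binary cross-entropy, and the names `train_on_failures`, `threshold`, `inner_epochs` and `max_iters` (and their default values) are illustrative choices rather than parameters taken from the paper.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def per_sample_loss(w, b, X, y):
    """Binary cross-entropy of every sample (the loss recorded in step 1)."""
    p = np.clip(sigmoid(X @ w + b), 1e-12, 1 - 1e-12)
    return -(y * np.log(p) + (1 - y) * np.log(1 - p))


def train_on_failures(X, y, threshold=0.3, lr=0.5, inner_epochs=5,
                      max_iters=100, seed=0):
    """Hypothetical sketch of the evaluate / select / retrain loop."""
    rng = np.random.default_rng(seed)
    w, b = np.zeros(X.shape[1]), 0.0
    history = []  # size of the hard subset found by each full scan
    for _ in range(max_iters):
        # Step 1: score the current model on the entire training set.
        losses = per_sample_loss(w, b, X, y)
        # Step 2: deterministic hard subset = losses above the threshold.
        hard = np.flatnonzero(losses > threshold)
        history.append(int(hard.size))
        # Step 4: stop once no sample is failed (or when max_iters runs out).
        if hard.size == 0:
            break
        # Step 3: a few epochs of per-sample SGD on the hard subset only.
        for _ in range(inner_epochs):
            for i in rng.permutation(hard):
                err = sigmoid(X[i] @ w + b) - y[i]  # d(loss)/d(logit)
                w -= lr * err * X[i]
                b -= lr * err
    return w, b, history


# Usage on two well-separated Gaussian clusters (synthetic toy data).
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(-3, 1, (50, 2)), rng.normal(3, 1, (50, 2))])
y = np.array([0] * 50 + [1] * 50)
w, b, history = train_on_failures(X, y)
accuracy = np.mean((sigmoid(X @ w + b) > 0.5) == y)
print(history[0], accuracy)  # the first scan flags all 100 samples
```

The first scan always flags every sample here, because an untrained model predicts p = 0.5 (a loss of ln 2 ≈ 0.69, above the 0.3 threshold); later scans revisit only samples that are still misfit, and `history` records the size of each hard subset.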

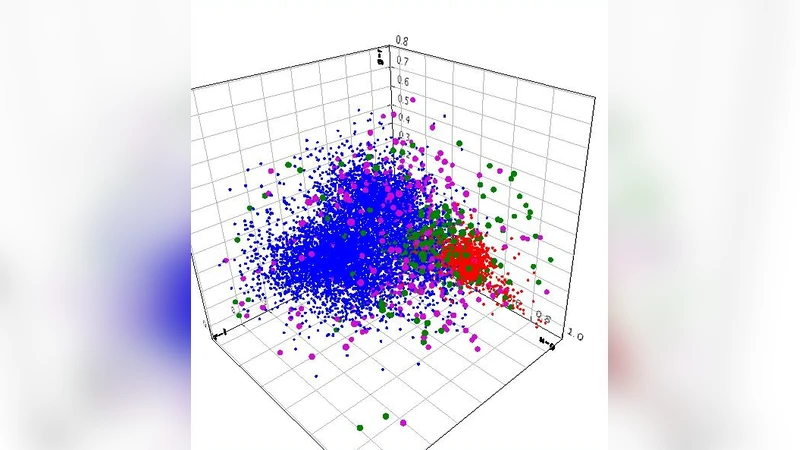

From a technical standpoint, the method can be viewed as a hybrid of curriculum learning, hard‑example mining, and boosting. Unlike traditional curriculum learning, which starts with easy examples and gradually introduces harder ones, this approach begins with a quick scan of the whole dataset and then concentrates on the difficult cases, an “anti‑curriculum” strategy. Hard examples are selected deterministically (by a loss threshold) rather than through the probabilistic re‑weighting used in AdaBoost; this simplifies implementation and avoids the variance that sampling introduces. By repeatedly training on high‑loss samples, the algorithm forces the model to explore regions of the feature space with maximal variance, effectively expanding the decision boundary to cover under‑represented patterns.
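To make the contrast with AdaBoost concrete, the sketch below places the deterministic threshold rule next to one step of the standard discrete AdaBoost weight update. The helper names `hard_subset` and `adaboost_reweight` are hypothetical and the two calls use independent toy inputs; the point is only that the threshold rule returns a fixed subset, whereas AdaBoost keeps a weight on every sample (which resampling variants then use as a sampling distribution).

```python
import numpy as np


def hard_subset(losses, threshold):
    """Threshold rule from the summary: indices whose loss exceeds it."""
    return np.flatnonzero(losses > threshold)


def adaboost_reweight(weights, misclassified):
    """One discrete AdaBoost weight update (assumes 0 < error < 1)."""
    eps = weights[misclassified].sum()       # weighted error of the learner
    alpha = 0.5 * np.log((1 - eps) / eps)    # learner's vote weight
    # Up-weight mistakes by exp(alpha), down-weight hits by exp(-alpha).
    new = weights * np.exp(np.where(misclassified, alpha, -alpha))
    return new / new.sum()                   # renormalise to a distribution


losses = np.array([0.05, 0.9, 0.2, 1.4])
print(hard_subset(losses, threshold=0.5))    # [1 3]

uniform = np.full(4, 0.25)
mistakes = np.array([False, True, False, False])
weights = adaboost_reweight(uniform, mistakes)
# The single mistake now carries weight 0.5; the other three get 1/6 each.
print(np.round(weights, 3))
```

The threshold rule gives samples below the cut no extra attention at all, while AdaBoost rescales every weight on every round.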

The authors evaluate the method on three benchmarks: MNIST, CIFAR‑10, and a medical‑imaging dataset containing rare pathological findings. In each case they compare against standard optimizers (SGD, Adam), a hard‑example‑mining baseline, and a vanilla curriculum‑learning schedule. The proposed algorithm converges faster (its learning curves rise more steeply in the early epochs) and reaches higher final test accuracy, by roughly 1.5–2.3 percentage points. The most striking benefit appears on the imbalanced medical data, where recall for the rare class improves by more than 12 percentage points, demonstrating the method’s robustness to class imbalance. Moreover, because only a fraction of the data is revisited after the initial pass, total training time drops by 15–25%, while memory consumption remains comparable to the baselines.

The discussion acknowledges two principal limitations. First, if the dataset contains substantial label noise, the hard‑example set may never shrink, causing the algorithm to loop indefinitely. The authors mitigate this by imposing a maximum iteration count and a lower bound on the acceptable loss, but they suggest future work on integrating noise‑estimation techniques to dynamically adjust the threshold. Second, the need for a full‑dataset pass at each iteration introduces an O(N) overhead that can become costly for extremely large corpora; distributed or streaming implementations are proposed as possible solutions.
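Both limitations can be seen in a small, hypothetical experiment. The sketch below uses a NumPy logistic-regression model, caps the loop at `max_iters` (the iteration cap is modelled; the loss lower bound is not), and plants one deliberately mislabelled point exactly at the mean of class 0. Because the model is linear, its logit at that point equals the average logit over class 0, so for the threshold used here, whenever the mislabelled point is fitted some genuine class‑0 point must be misfit: the hard subset never empties and only the cap ends the loop. The function name `run_capped` and the per-sample `counts` of hard-set membership are illustrative assumptions; ranking samples by `counts` is one simple way to surface persistent failures for inspection.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def run_capped(X, y, threshold=0.3, lr=0.5, inner_epochs=5, max_iters=30,
               seed=0):
    """Hypothetical capped loop that also records its own cost.

    Returns the hard-subset size per scan, how many scans flagged each
    sample, and the number of per-sample forward evaluations performed.
    """
    rng = np.random.default_rng(seed)
    w, b = np.zeros(X.shape[1]), 0.0
    counts = np.zeros(len(y), dtype=int)
    sizes, forward_evals = [], 0
    for _ in range(max_iters):  # the cap is what guarantees termination
        p = np.clip(sigmoid(X @ w + b), 1e-12, 1 - 1e-12)
        losses = -(y * np.log(p) + (1 - y) * np.log(1 - p))
        forward_evals += len(y)  # every scan touches all N samples: O(N)
        hard = np.flatnonzero(losses > threshold)
        sizes.append(int(hard.size))
        if hard.size == 0:
            break
        counts[hard] += 1
        for _ in range(inner_epochs):
            for i in rng.permutation(hard):
                err = sigmoid(X[i] @ w + b) - y[i]
                w -= lr * err * X[i]
                b -= lr * err
    return sizes, counts, forward_evals


rng = np.random.default_rng(2)
X0 = rng.normal(-3, 1, (50, 2))
X1 = rng.normal(3, 1, (50, 2))
# One deliberately mislabelled point, placed exactly at the class-0 mean.
X = np.vstack([X0, X1, X0.mean(axis=0, keepdims=True)])
y = np.array([0] * 50 + [1] * 50 + [1])
sizes, counts, forward_evals = run_capped(X, y)
print(len(sizes), forward_evals)  # 30 3030: the cap, not convergence, ends it
print(np.argsort(counts)[::-1][:5])  # most persistently failed samples
```

The forward-evaluation count grows as iterations × N regardless of how small the hard subset becomes, which is the per-iteration overhead that distributed or streaming implementations would aim to reduce.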

In conclusion, the paper demonstrates that a simple, education‑inspired feedback loop can be translated into an effective machine‑learning training scheme. By continuously directing learning resources toward the examples that a model struggles with, the algorithm improves data utilization, enhances generalization, and offers a practical tool for tasks such as data mining, model evaluation, and rare‑object discovery. The authors encourage further exploration of adaptive thresholding, integration with semi‑supervised learning, and application to a broader range of model architectures.
