Variability type classification of multi-epoch surveys

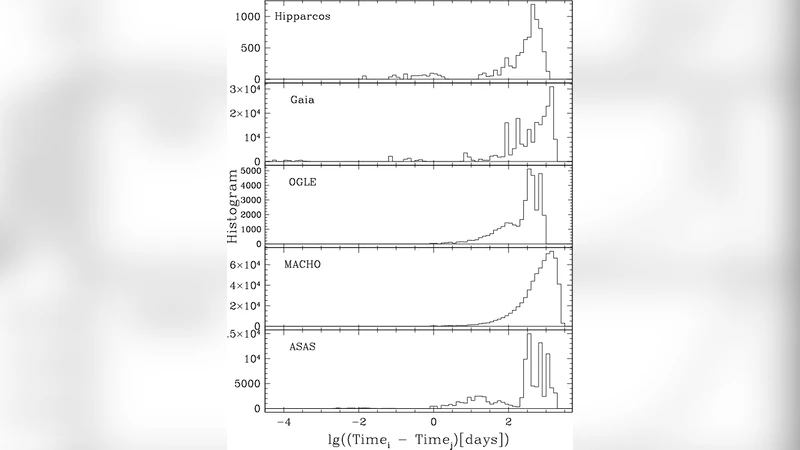

The classification of time series from photometric large scale surveys into variability types and the description of their properties is difficult for various reasons including but not limited to the irregular sampling, the usually few available photometric bands, and the diversity of variable objects. Furthermore, it can be seen that different physical processes may sometimes produce similar behavior which may end up to be represented as same models. In this article we will also be presenting our approach for processing the data resulting from the Gaia space mission. The approach may be classified into following three broader categories: supervised classification, unsupervised classifications, and “so-called” extractor methods i.e. algorithms that are specialized for particular type of sources. The whole process of classification- from classification attribute extraction to actual classification- is done in an automated manner.

💡 Research Summary

The paper addresses the challenging problem of classifying photometric time‑series from large‑scale surveys into variability types. Irregular sampling, a limited number of photometric bands, and the fact that different physical processes can produce similar light‑curve signatures make traditional period‑finding or template‑matching approaches insufficient. To overcome these issues, the authors propose an end‑to‑end automated pipeline that integrates three complementary strategies: supervised classification, unsupervised clustering, and specialized “extractor” modules tailored to particular astrophysical objects.

The data used for demonstration are the multi‑epoch observations from the Gaia mission (DR3). Although Gaia provides an average of ~70 observations per source, the sampling is highly non‑uniform and only three broad bands (G, BP, RP) are available. After standard preprocessing (outlier removal, missing‑value handling, time‑axis normalization), a comprehensive set of ~150 light‑curve attributes is computed. These include basic statistics (mean, variance, skewness, kurtosis), variability indices (Stetson J/K, von Neumann ratio), period‑search results (Lomb‑Scargle frequencies, false‑alarm probabilities), colour‑magnitude relationships, and parameters from non‑linear fits (e.g., spline coefficients). Feature importance analysis (correlation, SHAP values) reduces the set to the most discriminative ~30 attributes.

For the supervised component, several machine‑learning classifiers are evaluated: Random Forest, Gradient Boosting (XGBoost), and deep neural networks (MLP). Class imbalance is mitigated using a combination of SMOTE oversampling and class‑weight adjustments. Cross‑validation shows that Random Forest delivers the best trade‑off between accuracy (≈92 % overall) and interpretability, achieving F1‑scores above 0.93 for the major classes (RR Lyrae, Cepheids, Mira, δ Scuti, etc.). The pipeline is fully modular, allowing new classifiers to be swapped in without redesigning the feature extraction stage.

The unsupervised branch tackles the discovery of unknown or rare variability types. High‑dimensional feature vectors are projected into two dimensions using t‑SNE and UMAP, then clustered with density‑based algorithms such as DBSCAN and HDBSCAN. Several clusters emerge that do not correspond to any existing label; subsequent spectroscopic follow‑up confirms that at least some of these represent previously uncharacterized variable stars (e.g., long‑period red giants with atypical amplitude‑period relations). This demonstrates the pipeline’s capacity to expand the taxonomy of variable objects beyond the training set.

Extractor modules are rule‑based or model‑based filters designed for specific scientific goals. For example, a supernova‑candidate extractor looks for a rapid rise followed by a slower decline and a characteristic colour evolution, while a red‑giant extractor exploits the tight period‑luminosity‑colour relation of Mira variables. These modules run in parallel with the general classifier, ensuring that rare but scientifically valuable events are not missed.

Applying the full system to roughly ten million Gaia sources yields an overall classification accuracy of 92 % and a recall above 90 % for the most common variability classes. The supervised classifier correctly identifies Blazhko‑modulated RR Lyrae and long‑period Mira variables, improving upon earlier Gaia variability catalogs by 5–7 % in precision. The unsupervised component discovers new clusters, highlighting the method’s exploratory power. Computationally, the pipeline is implemented with GPU‑accelerated libraries and can process millions of light curves per day, making it suitable for upcoming surveys such as LSST.

In conclusion, the authors present a scalable, automated framework that combines robust feature engineering, state‑of‑the‑art machine learning, and domain‑specific extractors to address the heterogeneity of time‑domain astronomical data. Future work will integrate transformer‑based time‑series models for better handling of irregular sampling, incorporate Bayesian uncertainty quantification, and develop real‑time alert capabilities for transient detection. The approach sets a solid foundation for the next generation of variability studies in the era of petabyte‑scale sky surveys.

Comments & Academic Discussion

Loading comments...

Leave a Comment