Practical approach to solvability: Geophysical application using complex decomposition into simple part (solvable) and complex part (interpretable) for seismic imaging

The classical approach to the solvability of a mathematical problem is to define a method consisting of certain rules of operation or algorithms. Using that method, one can then show that a given problem is solvable, unsolvable, or undecidable; the classification depends on the particular method. With numerical solutions implemented on a computer, it may be more practical to define the solvability of a mathematical problem as a complex decomposition problem. The decomposition breaks the data into a simple part and a complex part. The simple part is the part solvable by the method prescribed in the problem definition. The complex part is what remains after the simple part is removed, and can be viewed as the “residual” of the data or operator. It should be interpreted, not discarded as useless. We give several examples to illustrate this more practical definition of solvability. The complex part is not noise: it has its own merit in terms of topological or geological interpretation. We de-emphasize absolute solvability and emphasize practical solvability, in which the simple and complex parts both play important roles.

💡 Research Summary

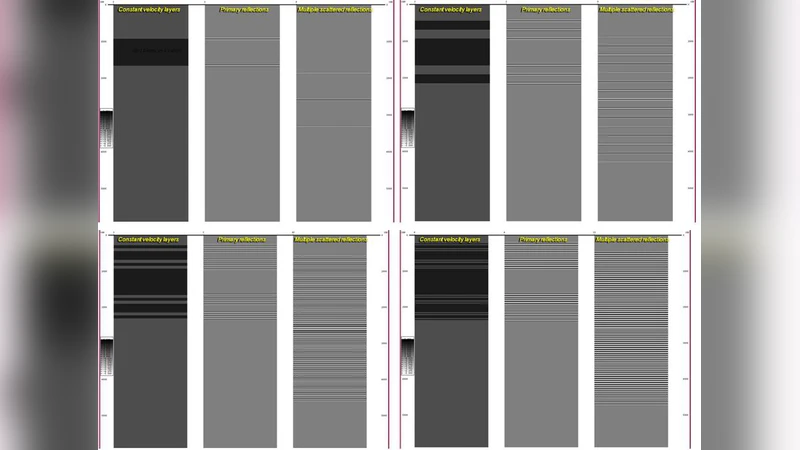

The paper challenges the conventional notion of solvability in mathematical and geophysical problems, which traditionally relies on a predefined algorithmic framework to classify problems as solvable, unsolvable, or undecidable. In seismic imaging, however, data are high‑dimensional, non‑linear, and contaminated by noise and modeling errors, making a binary classification inadequate for practical applications. To address this, the authors propose a “complex decomposition” paradigm that splits observed data D into two components: a simple part (S) that can be handled directly by an existing inversion or processing method, and a complex part (C) that remains after extracting S. Crucially, C is not dismissed as mere noise; instead, it is treated as a residual that carries valuable geological and topological information.
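One way to make the split concrete is sketched below. It rests on an assumption that is not in the source: the "existing method" is taken to be an unregularized linear least-squares inversion, with a hypothetical forward operator $G$ and model vector $m$.

```latex
\begin{aligned}
\hat{m} &= \arg\min_{m} \, \lVert D - G m \rVert_2^2, \\
S &= G \hat{m}, \\
C &= D - S, \qquad\text{so that}\qquad D = S + C .
\end{aligned}
```

Under this assumption the normal equations give $G^{\top} C = 0$: the complex part is orthogonal to everything the chosen method can represent. That is one reason it can carry structure the method cannot see, rather than only noise. For other methods (regularized or non-linear), the same definitions of $S$ and $C$ apply but this orthogonality need not hold.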

The workflow consists of three main steps. First, a conventional linear inversion (or any chosen method) is applied to obtain S. Second, the residual C = D − S is computed. Third, C is subjected to advanced analyses such as wavelet transforms, non‑linear optimization, and topological data analysis (TDA). By constructing persistence diagrams from C, the authors reveal hidden topological features: high‑persistence structures that correspond to subtle geological interfaces, fault zones, or non‑linear wave phenomena.
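The first two steps, and one very reduced form of the third, can be sketched in Python. This is a minimal illustration under assumptions that are not in the source: the "chosen method" is plain linear least squares with a hypothetical forward operator `G`, the data are a single 1‑D trace, and the topological analysis is limited to 0‑dimensional sublevel‑set persistence computed with a union‑find. The names `decompose` and `persistence_0d` are illustrative, and the toy data are synthetic rather than taken from the paper.

```python
import numpy as np


def decompose(G, d):
    """Split data d into a simple part S and a complex part C = d - S.

    Assumption: the "chosen method" is unregularized linear least squares
    with a hypothetical forward operator G (n_data x n_model).
    """
    m, *_ = np.linalg.lstsq(G, d, rcond=None)  # step 1: invert for the model
    S = G @ m                                  # simple part: what the method explains
    C = d - S                                  # step 2: residual (complex part)
    return m, S, C


def persistence_0d(signal):
    """0-dimensional sublevel-set persistence of a 1-D signal.

    Samples are added in order of increasing value. A local minimum starts a
    component (birth); when two components meet, the one with the higher
    minimum dies at the current value (elder rule). The global minimum never
    dies and is reported with death = inf. Zero-persistence pairs are skipped.
    """
    values = np.asarray(signal, dtype=float)
    n = values.size
    parent = [-1] * n  # -1 marks samples that have not been added yet

    def find(i):
        # Union-find root lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    pairs = []
    for i in np.argsort(values, kind="stable"):
        i = int(i)
        parent[i] = i
        for j in (i - 1, i + 1):
            if 0 <= j < n and parent[j] != -1:
                ri, rj = find(i), find(j)
                if ri == rj:
                    continue
                # Each root is the minimum of its component; keep the lower one.
                old, young = (ri, rj) if values[ri] <= values[rj] else (rj, ri)
                if values[young] < values[i]:
                    pairs.append((float(values[young]), float(values[i])))
                parent[young] = old
    if n:
        pairs.append((float(values.min()), float("inf")))
    return pairs


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    G = rng.normal(size=(200, 3))  # toy operator, not from the paper
    d = G @ np.array([1.0, -2.0, 0.5]) + np.sin(np.linspace(0, 6 * np.pi, 200))
    m, S, C = decompose(G, d)
    # Step 3 (reduced): rank features of the residual by persistence.
    top = sorted(persistence_0d(C), key=lambda p: p[1] - p[0], reverse=True)[:5]
    print(top)
```

For example, `persistence_0d([0, 2, 1, 3, 0.5])` returns `[(1.0, 2.0), (0.5, 3.0), (0.0, inf)]`. Pairs with a large `death - birth` gap are the high‑persistence features; short‑lived pairs are the ones usually attributed to small fluctuations. Images or volumes would need a dedicated TDA library rather than this 1‑D routine.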

Three case studies illustrate the approach. In a complex continental setting with interleaved stratigraphic layers, standard inversion yields a blurred image, whereas the complex residual uncovers distinct high‑persistence features that match field‑observed faults. In a marine environment where high‑frequency noise contaminates low‑frequency signals, the simple part alone fails to resolve primary reflections; analysis of C isolates the noise pattern and restores the underlying reflectivity. In a scenario dominated by non‑linear multiples and mode conversions, the simple inversion cannot capture these effects, but the residual, when processed with non‑linear optimization, reveals the hidden wave modes and leads to a more accurate velocity model.

These results support the authors’ central thesis: the complex part is an interpretable information source rather than useless clutter. By redefining solvability as “practical solvability,” the paper argues that both S and C should be used: S for computational efficiency and stability, C for preserving and interpreting the data’s intrinsic complexity. This perspective departs from the traditional goal of minimizing residuals; instead, it treats residual interpretation as a scientific objective in its own right.

The theoretical contribution lies in formalizing the decomposition D = S + C and demonstrating that existing algorithms can be retained while augmenting them with residual analysis. Practically, the approach offers a pathway to integrate high‑performance computing and machine‑learning pipelines: deep‑learning models could automatically classify topological signatures in C, feeding them back into geological modeling workflows. Such integration promises simultaneous improvements in imaging resolution and interpretive accuracy, with implications for exploration, monitoring, and hazard assessment.

In conclusion, the paper provides a compelling argument for a dual‑part framework in seismic imaging, where the “simple” component ensures tractable computation and the “complex” component enriches geological insight. By treating the residual as a valuable, interpretable entity, the authors open new avenues for research and operational practice in geophysical imaging.
